Most raw data is noisy, unstructured, and unusable without significant processing. Businesses that want to turn data into insights need a strategy, and batch data processing is one of the most reliable ways to do it.

Batch data processing is the method of collecting data over time and processing it in bulk at scheduled intervals.

It’s efficient, easy to manage, and ideal for high-volume tasks that don’t require instant results. While real-time systems offer speed, batch processing wins in simplicity, scalability, and control, especially for teams building data workflows in-house.

This guide breaks down how batch processing works, how it compares to real-time alternatives, when to use it, and what tools can help you build a robust batch data pipeline at scale.

What Is Data Processing?

Let’s start by setting a baseline: what exactly is data processing?

Data processing is the act of converting raw data into usable information through a series of structured steps.

While the concept sounds simple, its execution is anything but, especially when dealing with massive, unstructured data from dozens of sources. Every business domain has different data needs, which influence how that raw information must be collected, cleaned, and used.

Common Factors That Shape a Data Processing Workflow:

- Data source and format: Where the data comes from and how it’s structured

- Business goals: What insights or outputs the data needs to drive

- Domain-specific requirements: Finance, marketing, operations, etc.

4 Key Steps of a Data Processing Pipeline

1. Data Ingestion

Also called data capture, this is where raw data enters the pipeline—from apps, sensors, APIs, or cloud systems.

2. Data Cleaning & Transformation

The raw data is validated, standardized, and sometimes enriched. This may involve formatting, schema mapping, or joining datasets to prepare for use.

3. Operationalization

Once ready, the data powers real-time dashboards, triggers alerts, or enables personalized user experiences.

4. Storage

Finally, processed data is stored for historical analysis, compliance, or future workflows.

Whether you’re building reports, syncing systems, or driving AI models, data processing turns “just data” into real decisions.
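To make the four steps concrete, here is a minimal sketch of a pipeline in plain Python. The sample events, the function names, and the in-memory `storage` list are all assumptions made for illustration; a real pipeline would read from actual sources and write to a warehouse or database.

```python
import json
from datetime import datetime, timezone

# Hypothetical raw input: in practice this would come from an app, sensor, or API.
RAW_EVENTS = [
    '{"user": " Alice ", "amount": "19.99"}',
    '{"user": "bob", "amount": "5"}',
    'not valid json',
]


def ingest(lines):
    """Step 1: capture raw records, skipping lines that cannot be parsed."""
    records = []
    for line in lines:
        try:
            records.append(json.loads(line))
        except json.JSONDecodeError:
            continue  # a real pipeline would log or quarantine this record
    return records


def clean(records):
    """Step 2: validate records and standardize field formats."""
    return [
        {"user": r["user"].strip().lower(), "amount": float(r["amount"])}
        for r in records
        if "user" in r and "amount" in r
    ]


def operationalize(records):
    """Step 3: derive a summary that a dashboard or alert could consume."""
    return {
        "total_amount": round(sum(r["amount"] for r in records), 2),
        "record_count": len(records),
    }


def store(records, summary, storage):
    """Step 4: persist processed data (an in-memory list stands in for storage)."""
    storage.append({
        "stored_at": datetime.now(timezone.utc).isoformat(),
        "records": records,
        "summary": summary,
    })


storage = []
cleaned = clean(ingest(RAW_EVENTS))
summary = operationalize(cleaned)
store(cleaned, summary, storage)
print(summary)
```

Each step is a separate function, so a step can be swapped out (for example, a different storage target) without touching the others.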

Batch Data Processing: A Closer Look

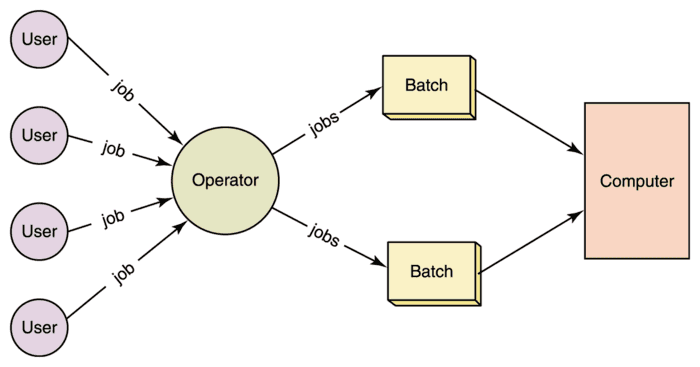

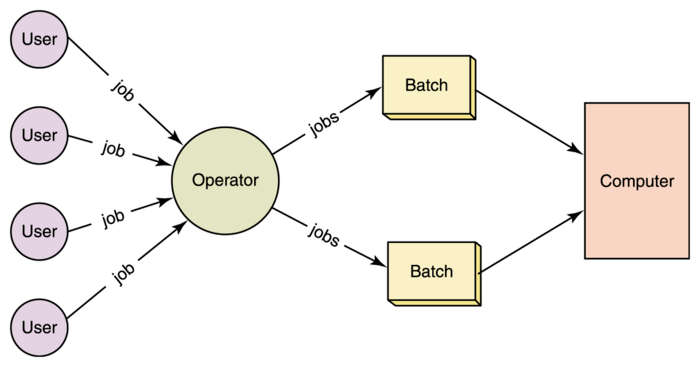

Batch data processing is the technique of collecting data over a fixed time interval, known as a batch window, and processing it all at once.

Rather than processing data continuously, the system remains idle while new data accumulates in the source. Once the batch interval ends, the pipeline executes in full: capturing new data, transforming it, operationalizing it, and storing it—all in one go.

This approach is commonly used in systems where real-time insight isn't required and where performance, simplicity, or cost efficiency take priority.

How It Works:

- Batch interval begins: The system waits while new data accumulates.

- Data collection: The source is queried to pull all new data since the last batch.

- Processing kicks in: All collected data is cleaned, transformed, and validated.

- Output is delivered: Processed data is sent to its destination—warehouse, dashboard, archive, etc.

- System resets: The next batch window begins.

This cycle repeats at fixed intervals—hourly, nightly, weekly—depending on the use case.
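The cycle above can be sketched with a simple "watermark" that remembers where the previous batch stopped. This is only an illustration under stated assumptions: `SOURCE`, `run_batch`, and the `created_at` field are hypothetical names, and the source is an in-memory list rather than a real database.

```python
from datetime import datetime

# Hypothetical source table: each row records when it was written.
SOURCE = [
    {"id": 1, "created_at": datetime(2024, 1, 1, 9, 0), "value": 10},
    {"id": 2, "created_at": datetime(2024, 1, 1, 13, 0), "value": 20},
    {"id": 3, "created_at": datetime(2024, 1, 2, 8, 0), "value": 30},
]


def run_batch(source, destination, watermark, now):
    """Run one batch cycle and return the new watermark.

    Pulls rows written after `watermark` and up to `now`, transforms them,
    and appends the result to `destination`.
    """
    # Data collection: everything new since the last batch.
    new_rows = [r for r in source if watermark < r["created_at"] <= now]
    # Processing: a stand-in transformation.
    processed = [{"id": r["id"], "value_doubled": r["value"] * 2} for r in new_rows]
    # Output delivery.
    destination.extend(processed)
    # System reset: the next window starts where this one ended.
    return now


destination = []
watermark = datetime(2024, 1, 1, 0, 0)

# First nightly run picks up rows 1 and 2.
watermark = run_batch(SOURCE, destination, watermark, now=datetime(2024, 1, 2, 0, 0))
first_run_ids = [row["id"] for row in destination]

# Second nightly run picks up only row 3.
watermark = run_batch(SOURCE, destination, watermark, now=datetime(2024, 1, 3, 0, 0))
all_ids = [row["id"] for row in destination]
```

Because each run only reads rows newer than the stored watermark, rerunning the schedule does not reprocess data that an earlier batch already delivered.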

Batch processing has powered data workflows for decades, especially in reporting, ETL jobs, and large-scale transactional systems.

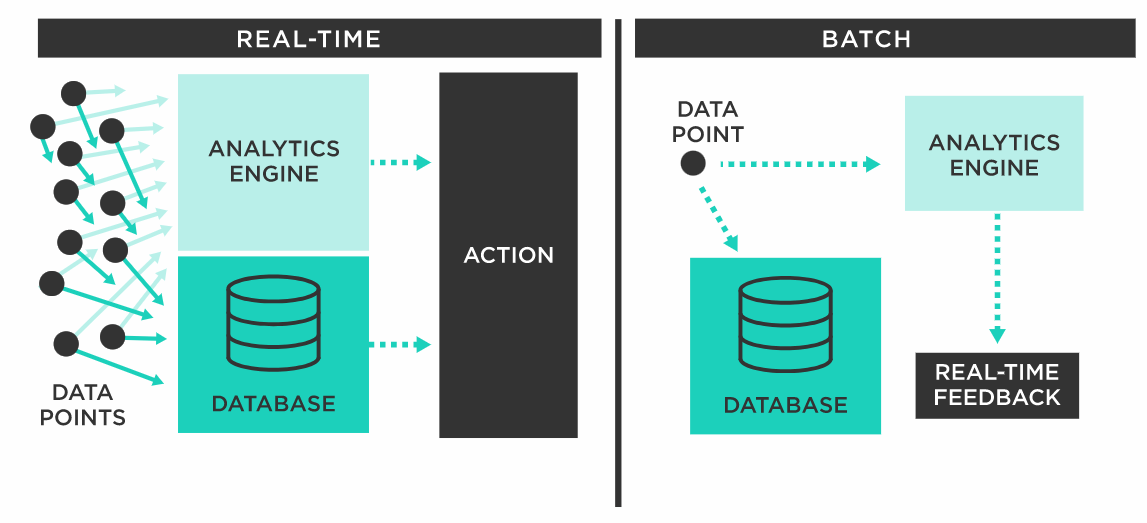

Real-time vs Batch Data Processing

Real-time data processing (also known as stream processing) analyzes and acts on data immediately as it’s generated, without waiting for accumulation or batch windows.

While batch processing handles data in scheduled groups, stream processing is always on, monitoring for new data and triggering actions the moment it arrives.

Here’s how the two methods compare:

| Aspect | Batch Processing | Real-Time Processing |
|---|---|---|
| Age | Legacy approach, widely used since early computing | Modern technique, enabled by cloud and distributed systems |
| Mechanism | Queries data in chunks at set intervals | Continuously processes data as it arrives (event-driven) |
| Engineering Complexity | Simpler to implement and maintain | More complex to build, monitor, and scale |
| Latency | Higher latency (seconds to hours or more) | Very low latency (milliseconds to seconds) |
| Managed Tools Available? | Yes | Yes |
| Cost Efficiency | Affordable at small scale, costly when querying large source systems | More cost-effective at scale, avoids repetitive source queries |
| Best Use Cases | Reporting, ETL, periodic backups, end-of-day summaries | Fraud detection, personalization, IoT monitoring, real-time dashboards |
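The difference in mechanism is easy to see in a toy example. In this sketch, the `EVENTS` list and both function and class names are made up for illustration; the point is only that batch code produces one answer after the window closes, while event-driven code updates its answer on every event.

```python
EVENTS = [4, 8, 15, 16, 23, 42]  # hypothetical order amounts arriving over time


def batch_total(events):
    """Batch: wait until the window closes, then process everything at once."""
    return sum(events)


class StreamingTotal:
    """Stream: update the result as each event arrives."""

    def __init__(self):
        self.total = 0
        self.history = []

    def on_event(self, amount):
        self.total += amount
        self.history.append(self.total)  # a result is available after every event


stream = StreamingTotal()
for amount in EVENTS:
    stream.on_event(amount)

print(batch_total(EVENTS))  # one result, available only after the window closes
print(stream.history)       # an updated result after every event
```

Both approaches end at the same total. What differs is when the answer becomes available and how much machinery has to stay running to produce it.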

Learn more: Batch Processing vs Stream Processing

When to Use Each:

- Choose batch processing when you don’t need instant insights and want a simpler, reliable setup for large datasets.

- Use real-time processing when latency is critical, like in customer experiences, fraud prevention, or operational alerts.

Many modern businesses use both. A hybrid approach allows you to process what matters immediately, and defer what can wait.

Let’s look at the advantages and disadvantages of batch processing vs stream processing in more detail.

5 Advantages Of Batch Data Processing Over Stream Processing

Here are the main reasons batch data processing is often the preferred choice, especially for businesses that build their data processing frameworks in-house:

Efficiency

Batch data processing makes good use of otherwise idle resources. Batch jobs can be scheduled to run during off-peak hours, when the system isn’t as busy. Real-time processing systems, on the other hand, have to run continuously, and making them efficient takes more work.
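As a rough sketch of off-peak scheduling, the helper below decides when a job may start. The function name and the 01:00 to 05:00 window are assumptions chosen for illustration; in practice this logic usually lives in a scheduler such as cron or an orchestration tool.

```python
from datetime import datetime, time, timedelta


def next_off_peak_run(now, window_start=time(1, 0), window_end=time(5, 0)):
    """Return the earliest time a batch job may start.

    Assumes naive datetimes and a window that does not cross midnight.
    If `now` falls inside the off-peak window, the job can start right away;
    otherwise it waits for the next window start.
    """
    if window_start <= now.time() < window_end:
        return now
    start_today = datetime.combine(now.date(), window_start)
    if now < start_today:
        return start_today
    return start_today + timedelta(days=1)


# An afternoon request is deferred to 01:00 the next day.
print(next_off_peak_run(datetime(2024, 3, 10, 14, 30)))
```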

Cost-Effectiveness

Batch processing is often economical because it doesn’t need high-end hardware or software. Jobs can run on less powerful servers and don’t require the always-on, low-latency infrastructure that real-time data processing depends on, which saves on costs.

Accuracy

Because batch jobs often run only once a day or once a week, data scientists have ample time to examine the data thoroughly and correct any errors between runs. Real-time data processing leaves far less time for review, because data is acted on as soon as it arrives.

Scalability

Batch data processing is easily scalable in response to data volume fluctuations. The jobs can be executed on multiple servers and the system can be scaled up or down as needed. Real-time data processing, which is often (but not always!) limited to a single server, can only scale up to a certain limit.
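Here is a small sketch of the idea of spreading one batch across several workers. Threads from Python's standard library stand in for separate servers, and the function names, chunk size, and worker count are illustrative assumptions rather than recommendations.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(records, chunk_size):
    """Split one large batch into smaller, independent chunks."""
    return [records[i:i + chunk_size] for i in range(0, len(records), chunk_size)]


def process_chunk(chunk):
    """Stand-in for real work: sum of squares of the chunk."""
    return sum(x * x for x in chunk)


def run_parallel_batch(records, chunk_size, max_workers):
    """Process chunks on a pool of workers and combine the partial results."""
    chunks = split_into_chunks(records, chunk_size)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partial_results = list(pool.map(process_chunk, chunks))
    return sum(partial_results)


records = list(range(1, 101))
total = run_parallel_batch(records, chunk_size=25, max_workers=4)
print(total)
```

Scaling up or down then becomes a matter of changing `max_workers` (or, in a real deployment, the number of machines), because the chunks do not depend on one another.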

Reliability

Batch processing is reliable because it tolerates system failures well. When a job is distributed across multiple servers, it can still complete even if one server fails. In contrast, real-time data processing deployed on a single server is vulnerable to complete job failure if that server goes down.
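The failover behavior described above can be simulated in a few lines. Everything here is hypothetical: `make_worker` creates fake workers that either succeed or raise `WorkerDown`, and `run_with_failover` simply hands a failed chunk to the next worker in the list.

```python
class WorkerDown(Exception):
    """Raised by a simulated worker that has failed."""


def make_worker(name, healthy=True):
    """Create a fake worker that doubles each value, or fails if unhealthy."""
    def worker(chunk):
        if not healthy:
            raise WorkerDown(name)
        return [x * 2 for x in chunk]
    return worker


def run_with_failover(chunks, workers):
    """Process every chunk, retrying on the next worker when one is down."""
    results = []
    for i, chunk in enumerate(chunks):
        # Start with the worker assigned to this chunk, then try the others.
        for attempt in range(len(workers)):
            worker = workers[(i + attempt) % len(workers)]
            try:
                results.append(worker(chunk))
                break
            except WorkerDown:
                continue
        else:
            # Reached only if no worker succeeded for this chunk.
            raise RuntimeError(f"all workers failed for chunk {i}")
    return results


workers = [make_worker("server-a", healthy=False), make_worker("server-b")]
results = run_with_failover([[1, 2], [3]], workers)
print(results)
```

The job finishes even though `server-a` is down, because its chunk is retried on `server-b`. With only one worker, the same failure would stop the whole job.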

3 Potential Drawbacks Of Batch Processing

While batch processing provides numerous advantages, it comes with certain challenges that may influence an organization’s decision to use it. Here are some key disadvantages:

Training & Deployment Issues

Implementing in-house batch processing systems requires significant training and planning. Managers and system designers should understand what batch size to use, what triggers a batch, and how to schedule processing.
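To illustrate two of those design decisions, batch size and batch triggers, here is a minimal sketch of a buffer that closes a batch when it is either full or old enough. The `BatchBuffer` class and its thresholds are assumptions for illustration, and time is passed in as plain seconds to keep the example deterministic.

```python
class BatchBuffer:
    """Collect items and emit a batch when a size or age trigger fires."""

    def __init__(self, max_size, max_age_seconds):
        self.max_size = max_size
        self.max_age_seconds = max_age_seconds
        self.items = []
        self.opened_at = None

    def add(self, item, now):
        """Add an item; return the completed batch if a trigger fired, else None.

        Note: the age trigger is only checked when an item arrives. A real
        system would also need a timer to flush a quiet buffer.
        """
        if not self.items:
            self.opened_at = now  # the batch window opens with its first item
        self.items.append(item)
        size_trigger = len(self.items) >= self.max_size
        age_trigger = now - self.opened_at >= self.max_age_seconds
        if size_trigger or age_trigger:
            batch, self.items = self.items, []
            return batch
        return None


buffer = BatchBuffer(max_size=3, max_age_seconds=60)
emitted = [buffer.add(item, now) for item, now in
           [("a", 0), ("b", 10), ("c", 20), ("d", 30), ("e", 95)]]
print(emitted)
```

The first batch closes on the size trigger (three items), the second on the age trigger (more than 60 seconds after "d" opened the window).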

Continuous Monitoring

Batch processing can reduce the precision of your processing metrics, because results only become visible after each batch completes. As a result, you may spot issues and exceptions late, which makes your work less efficient. To avoid this, you need to manage your system actively and make sure every batch run reports what it ingested and what it delivered, and when.
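One lightweight way to keep an eye on batch jobs is to have every run return basic metrics alongside its output. The sketch below is an assumption about what such a wrapper could look like; the metric names and the `run_monitored_batch` function are hypothetical.

```python
import time


def run_monitored_batch(records, transform):
    """Apply `transform` to each record and collect simple run metrics."""
    metrics = {"rows_in": len(records), "rows_out": 0, "rows_failed": 0, "errors": []}
    started = time.perf_counter()
    output = []
    for index, record in enumerate(records):
        try:
            output.append(transform(record))
            metrics["rows_out"] += 1
        except (ValueError, TypeError) as exc:
            # Keep going, but remember which record failed and why.
            metrics["rows_failed"] += 1
            metrics["errors"].append((index, str(exc)))
    metrics["duration_seconds"] = time.perf_counter() - started
    return output, metrics


output, metrics = run_monitored_batch(["1", "2", "oops", None], int)
print(output)
print(metrics["rows_in"], metrics["rows_out"], metrics["rows_failed"])
```

Publishing these numbers after every run makes a sudden rise in `rows_failed` or `duration_seconds` visible right away, instead of surfacing days later in a report.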

Increased Employee Downtime

Depending on data size, job type, and other factors, processed data may not be available when your employees need it to do their jobs effectively. This can increase employee downtime and limit output.

Between real-time processing and batch processing, what you eventually choose depends on what you need to do with your data and the available resources. Let’s talk about this next.

When To Use Batch Processing

Batch processing shines under certain circumstances, but there’s no absolute rule dictating its use. It is best suited for tasks that don’t demand real-time processing. When deciding whether batch processing is suitable for an organization, ask yourself the following questions:

- Does any job in the system have to wait for other jobs to finish? How is the completion of one job and the start of the next monitored?

- Does the organization manually check for new files? Is there an automated script that checks for files frequently enough to be efficient?

- Does the existing system retry jobs at the server level? Does this slow down operations or require task reprioritization? Could the server be utilized more effectively?

- Does the organization have a large volume of manual tasks? What mechanisms ensure their accuracy? Is there a system guaranteeing they are processed in the right order?

If your answer to one or more of these questions is ‘yes’, batch data processing is likely a good fit for you.
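The first and last questions are about job dependencies and ordering. As a sketch of how a batch system can guarantee that order, the example below uses `graphlib.TopologicalSorter` from Python's standard library (Python 3.9+). The job names and the `run_in_dependency_order` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical jobs, each mapped to the set of jobs it must wait for.
JOB_DEPENDENCIES = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_orders_customers": {"extract_orders", "extract_customers"},
    "build_report": {"join_orders_customers"},
}


def run_in_dependency_order(dependencies, run_job):
    """Run every job only after all the jobs it depends on have finished."""
    order = list(TopologicalSorter(dependencies).static_order())
    for job in order:
        run_job(job)
    return order


completed = []
order = run_in_dependency_order(JOB_DEPENDENCIES, completed.append)
print(order)
```

The two extract jobs may come out in either order, but the join always runs after both of them, and the report always runs last.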

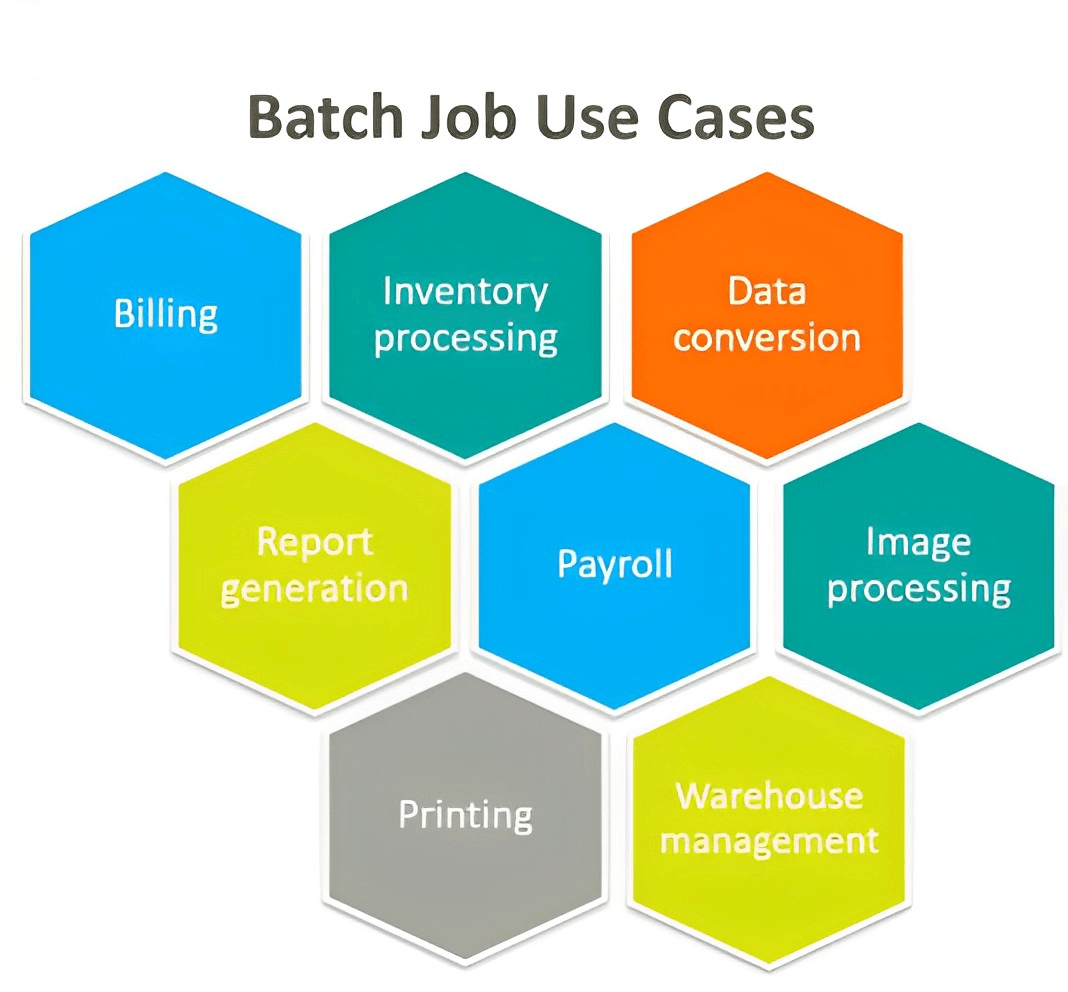

Diverse Applications Of Batch Processing Across Different Fields

Studies reveal that while 90% of business executives view data analytics as key to their organization's digital transformation, they only utilize 12% of their data. Batch processing can bridge this gap.

Let’s see some ways batch data processing can be used by real businesses:

General Use Cases

- Report Generation: Batch processing collects, sorts, and analyzes data to generate detailed reports that offer financial, operational, or performance-based insights for critical decision-making.

- Backup and Recovery: Using batch processing, you can implement automated backup schedules and manage backup files automatically. It also assists in data restoration, ensuring data integrity and accessibility.

- Integration and Interoperability: By automating data exchange and synchronization between various systems and departments, batch processing can help break data silos and promote better communication and integration.

Applications In Sales

- Lead Management: Batch processing can enrich and help prioritize leads, allowing sales personnel to target high-value prospects effectively.

- Sales Reporting: It can be set up to automatically process data related to sales for any period to provide insightful and timely sales reports.

- Order Processing: Batch processing can also accelerate the management of the order fulfillment process, inventory tracking, and customer information management.

- Campaign Management: It can enhance sales campaign management by analyzing large batches of leads or prospects, enabling sales reps to focus on specific segments.

- Customer Intelligence: You can even use batch data processing to uncover customer behavior, buying patterns, and trends. With this information, you can adapt your business to better respond to consumer needs.
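As a simple illustration of the sales reporting use case, the sketch below rolls a batch of sales records up into monthly totals. The `SALES` records and the `monthly_sales_report` function are made-up examples, not output from a real system.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sales records collected since the last report.
SALES = [
    {"day": date(2024, 1, 5), "rep": "ana", "amount": 1200},
    {"day": date(2024, 1, 20), "rep": "ben", "amount": 800},
    {"day": date(2024, 2, 3), "rep": "ana", "amount": 500},
]


def monthly_sales_report(sales):
    """Total sales per calendar month, sorted by month."""
    totals = defaultdict(int)
    for sale in sales:
        month = sale["day"].strftime("%Y-%m")
        totals[month] += sale["amount"]
    return dict(sorted(totals.items()))


report = monthly_sales_report(SALES)
print(report)
```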

Applications In Marketing

- Social Media Marketing: It can simplify the scheduling and publishing of posts on social media platforms, ensuring consistency and timely engagement.

- Email Marketing: You can use batch processing to quickly and efficiently deliver large volumes of emails to a list of subscribers to improve your business outreach and engagement.

- Marketing Analytics: All the emails you send to prospects and the ads you run on search engines and social media generate a huge amount of data. Batch processing can be used to process all the data generated during your marketing campaigns to provide valuable insights into customer behavior, engagement, and campaign ROI.

Applications In Finance

- Risk Management: It assists the finance sector in identifying and mitigating risks through data analysis.

- Fraud Detection: By analyzing large volumes of transactional data, batch processing detects patterns and anomalies indicative of fraudulent activities.

- End-of-Day Processing: Financial services companies can use the batch processing method to perform automatic and accurate end-of-day processing which includes reconciling transactions and generating reports.
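End-of-day reconciliation is a good example of work that fits a batch naturally. The sketch below compares two hypothetical sets of transactions, an internal ledger and a bank statement, and lists what matches and what needs attention. The transaction IDs, amounts, and the `reconcile` function are assumptions for illustration.

```python
from decimal import Decimal

# Hypothetical end-of-day data: transaction ID mapped to amount.
LEDGER = {"tx-1": Decimal("100.00"), "tx-2": Decimal("250.50"), "tx-3": Decimal("75.25")}
BANK = {"tx-1": Decimal("100.00"), "tx-2": Decimal("250.00"), "tx-4": Decimal("12.00")}


def reconcile(ledger, bank):
    """Compare two transaction sets and report matches and discrepancies."""
    shared = set(ledger) & set(bank)
    return {
        "matched": sorted(tx for tx in shared if ledger[tx] == bank[tx]),
        "amount_mismatch": sorted(tx for tx in shared if ledger[tx] != bank[tx]),
        "missing_in_bank": sorted(set(ledger) - set(bank)),
        "missing_in_ledger": sorted(set(bank) - set(ledger)),
    }


result = reconcile(LEDGER, BANK)
print(result)
```

Amounts are stored as `Decimal` rather than `float` so that comparisons between monetary values are exact.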

Estuary: One Platform for Batch and Real-Time Data Processing

If you're building modern data pipelines, choosing between batch and real-time shouldn’t mean choosing between platforms. That’s why we built Estuary to support both.

Whether you're moving large datasets once per day or streaming events in milliseconds, Estuary gives you one unified system to ingest, transform, and deliver your data reliably, at scale, and in real time.

Why Use Estuary for Batch Processing?

- Scalable ingestion: Handle high volumes of data from file systems, APIs, and databases

- Schema inference: Automatically structure unstructured data for downstream use

- Cloud-native reliability: Built on durable infrastructure with fault tolerance by default

- Transformations made easy: Use TypeScript or streaming SQL to prepare data for BI tools

- 300+ batch connectors: Extend to virtually any source using built-in Airbyte integrations

What Makes Estuary a Real-Time Powerhouse?

- Change Data Capture (CDC) from sources like Postgres, MySQL, and MongoDB

- Sub-second latency from source to destination for low-lag operations

- Materialize anywhere: Push data directly to Snowflake, BigQuery, Salesforce, and more

- Stream processing + joins: Create real-time business logic using SQL or derivations

- No need for Kafka, Flink, or Spark—Estuary handles the complexity for you

Whether you’re batch-loading millions of records or streaming critical events in real time, Estuary helps you build production-grade pipelines in minutes, not months.

Conclusion

Batch data processing remains a smart, scalable choice for organizations that deal with large volumes of data but don’t need immediate insights. It’s reliable, cost-efficient, and simpler to manage, especially when building or maintaining internal systems.

Real-time processing, while more complex, enables instant responses and personalized experiences. But it’s not always necessary.

Ultimately, the best solution often isn’t batch vs. stream—it’s knowing when to use each.

With a unified platform like Estuary, you don’t have to compromise. You can process high-volume data in batches, stream real-time events from mission-critical systems, and manage everything from one interface.

Sign up for free to explore Estuary, or reach out to see how it fits into your architecture.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.