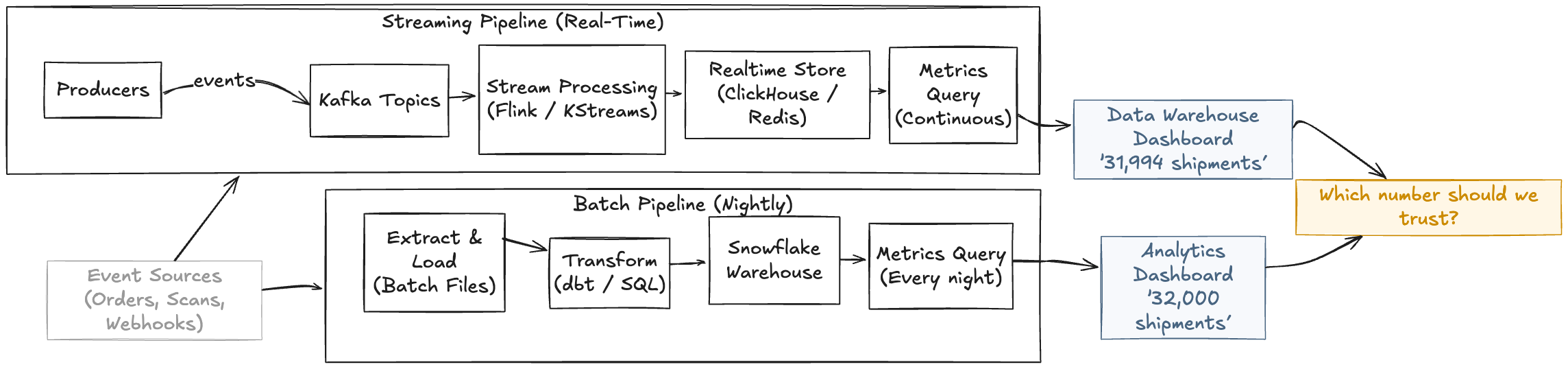

Combining batch and streaming pipelines often gets data engineers into trouble. Each pipeline has its own logic, its own schema choices, and its own timing rules. When the dashboards stop showing the same number, the data engineering team ends up in a tough spot: you have to explain to non-technical stakeholders how two dashboards can both be technically accurate yet show different values.

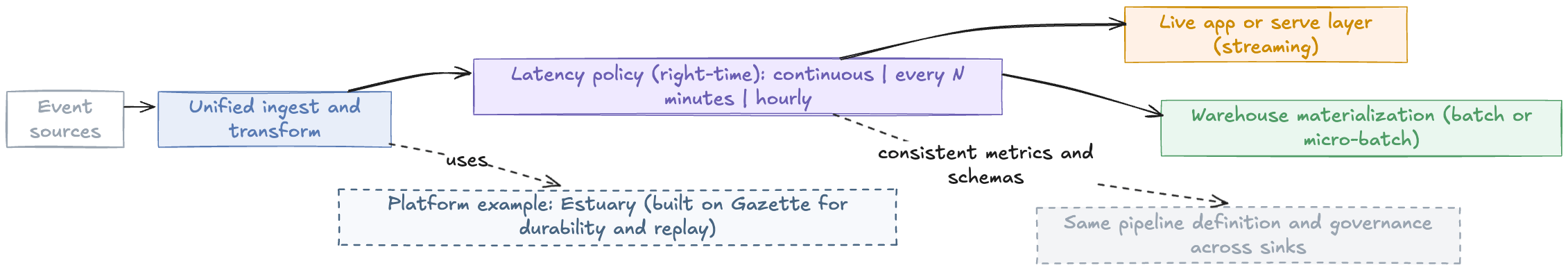

This post explains why these inconsistencies happen and how Estuary, the right-time data platform, eliminates the latency gap with a simple configuration change.

Key Takeaways

Separate batch and streaming pipelines are a major cause of inconsistent dashboards and confusion for stakeholders.

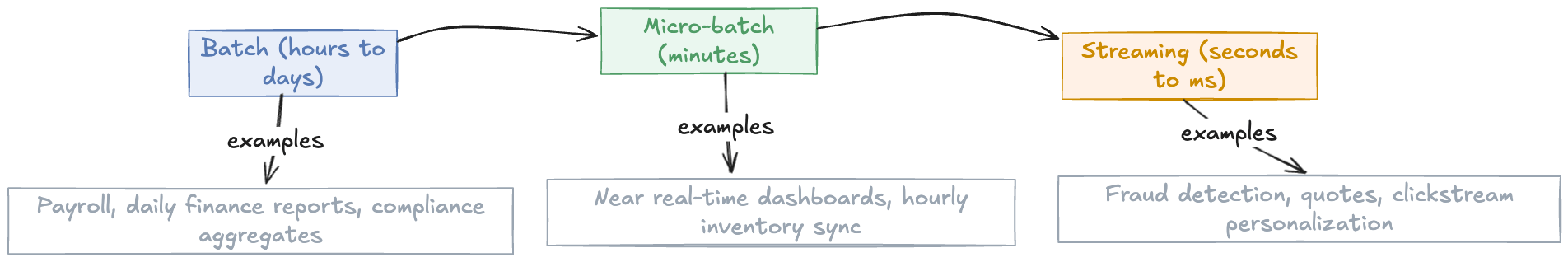

The real issue isn't just "real-time vs. batch" but choosing the right latency across a spectrum of batch, micro-batch, and streaming.

Estuary, the right-time data platform, lets you define your logic once and control data freshness with configuration instead of rewriting pipelines.

Sync Schedule allows you to switch between continuous sync and cost-optimized batches while keeping data consistent.

Standard and delta updates let you decide whether you want a clean current state, a full event history, or both, all from the same pipeline.

Why Batch and Streaming Pipelines Show Different Numbers

A couple of months ago, we noticed our dashboards weren't lined up. Users expected them to be in sync, and they were understandably confused. How come one dashboard was showing 32,000 shipments, whereas the data warehouse claimed there were 31,994?

It turned out one dashboard was showing the results of a batch job in Snowflake that was updated every night, whereas the other streamed events through Kafka in real time. Both were technically correct; they were just operating on different timelines.
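To make the mismatch concrete, here is a minimal sketch of how a nightly cutoff alone can produce the two numbers. The timestamps, the cutoff, and the split of events before and after it are invented for illustration; only the two totals come from the story above.

```python
from datetime import datetime

# Hypothetical shipment events as (shipment_id, event_time).
# Assumption: the last nightly batch ran at midnight on Jan 2.
cutoff = datetime(2024, 1, 2, 0, 0)

# 31,994 shipments landed before the cutoff, 6 more arrived the next morning.
events = [(i, datetime(2024, 1, 1, 12, 0)) for i in range(31_994)]
events += [(31_994 + i, datetime(2024, 1, 2, 9, 0)) for i in range(6)]

# The batch dashboard only knows about events loaded by the last nightly run.
batch_count = sum(1 for _, ts in events if ts < cutoff)

# The streaming dashboard sees every event as soon as it arrives.
streaming_count = len(events)

print(batch_count, streaming_count)
```

Neither count is wrong. They answer the same question as of two different points in time.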

Anyone who works with data has run into this before, and I've experienced it many times myself. It happens because batch and streaming pipelines are built as two separate architectures with two separate governance models, and over time they drift further apart.

All the business problems we encounter fall somewhere on a spectrum from batch through micro-batch to streaming. When we say batch, we are basically saying "it can wait." When we say streaming, we mean "this can’t wait at all."

For instance, a payroll document can easily hold off until the next day. An anomaly detection system, on the other hand, can’t. If the fraud alarm goes off, it needs to be taken care of right away.

Over the last decade, I’ve seen companies build completely separate solutions around these two scenarios. On one side, you’ve got Spark, Airflow and Snowflake, and on the other, you have Kafka and event streams. The problem is that, while they are solving different latency needs, they are essentially manipulating the same data.

Right-Time in Practice: Freshness Controlled in the UI

Latency is often treated as an architectural decision. It passes through multiple design phases before it reaches implementation, and by then the requirements it was based on are often outdated. That is fairly standard in software engineering, but data moves quickly, and systems have to keep up with changing requests and needs.

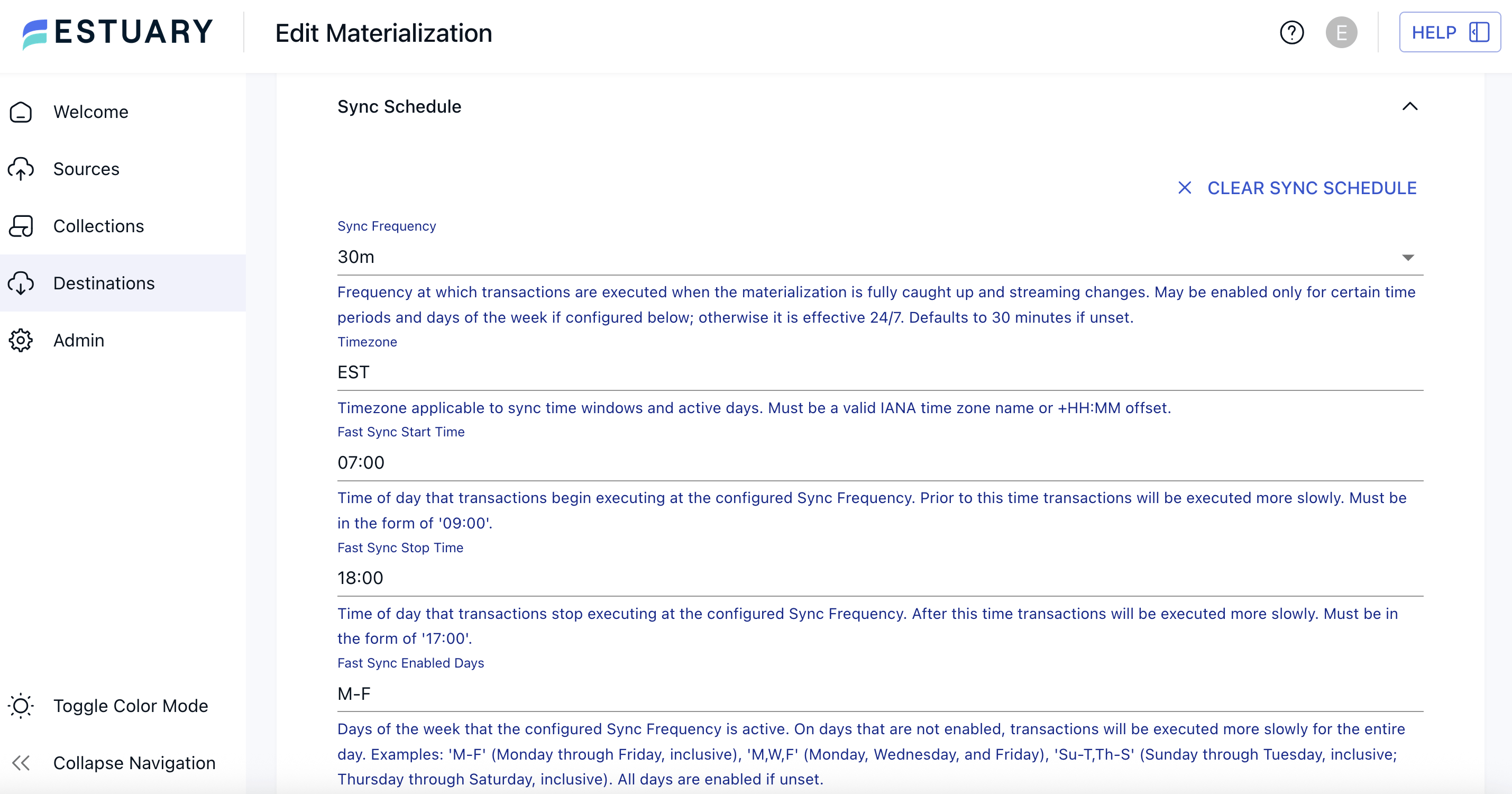

Estuary, the right-time data platform, makes adjusting an architectural decision as easy as switching a setting, thanks to its Sync Schedule.

No matter what data sync frequency you opted for in the beginning, this feature makes sure you can change it on the fly. In practice, it’s as simple as a frequency field, with additional options if you want to set certain timeframes for faster or slower syncs.

There is a Sync Schedule dropdown in a materialization’s Endpoint Config where you choose how often data should land in your warehouse, whether in batches of hours or down to 0s for real-time syncs.
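As a rough mental model, a schedule like this boils down to a default frequency plus an optional time window with a different frequency. The sketch below is purely illustrative: the `SyncSchedule` class and all of its field names are hypothetical and are not Estuary's actual configuration schema, so check the product documentation for the real fields.

```python
from dataclasses import dataclass
from datetime import time, timedelta
from typing import Optional


@dataclass
class SyncSchedule:
    """Hypothetical model of a sync schedule (not Estuary's real schema)."""
    sync_frequency: timedelta                      # default interval between syncs
    fast_sync_start: Optional[time] = None         # start of the faster window
    fast_sync_stop: Optional[time] = None          # end of the faster window
    fast_sync_frequency: timedelta = timedelta(0)  # 0s stands for continuous sync

    def interval_at(self, now: time) -> timedelta:
        """Return the sync interval that applies at the given time of day."""
        if self.fast_sync_start is not None and self.fast_sync_stop is not None:
            if self.fast_sync_start <= now < self.fast_sync_stop:
                return self.fast_sync_frequency
        return self.sync_frequency


# Example: continuous during business hours, 4-hour batches otherwise.
schedule = SyncSchedule(
    sync_frequency=timedelta(hours=4),
    fast_sync_start=time(9, 0),
    fast_sync_stop=time(17, 0),
)
print(schedule.interval_at(time(10, 30)))  # inside the fast window
print(schedule.interval_at(time(22, 0)))   # outside the fast window
```

The point of the model is that freshness becomes data, not code: changing the cadence means changing a value, while the pipeline logic stays untouched.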

Freshness Comes at a Price: Cost Savings + Operational Workflow

But why would we even want to slow down our pipeline?

Think about how many abstraction layers we use today with all these modern cloud solutions. We talk about latency all the time, mostly in general terms, but for warehouses, latency literally comes with a price tag.

For example, if you continuously materialize data into Snowflake, it will have to run a merge every time a new record arrives. So, if your table receives one new event per second, that’s 86,400 merge operations per day.

Let’s do the math. Say a merge costs about 0.00003 credits.

Continuous mode (merge every second):

86,400 × 0.00003 = 2.592 credits/day

Now, let’s switch the same table to a 4-hour batch. This reduces merges to just 6 runs per day:

6 × 0.00003 = 0.00018 credits/day

That’s effectively a 99.99 percent reduction.

To make the difference even clearer, here are the two modes side by side:

| Mode | Merge operations per day | Cost per merge (credits) | Total credits per day |
|---|---|---|---|
| Continuous (1 per second) | 86,400 | 0.00003 | 2.592 |
| 4-hour batch | 6 | 0.00003 | 0.00018 |
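If you want to try other cadences, the same arithmetic fits in a few lines. This is a minimal sketch that reuses the illustrative per-merge cost from above; the function names are made up for this example, and real warehouse billing depends on much more than merge count.

```python
SECONDS_PER_DAY = 24 * 60 * 60
COST_PER_MERGE = 0.00003  # credits; the illustrative figure used in this post


def merges_per_day(interval_seconds: int) -> int:
    """Number of merges per day when one merge runs every interval."""
    return SECONDS_PER_DAY // interval_seconds


def credits_per_day(interval_seconds: int) -> float:
    """Daily credits under the simplified flat cost-per-merge assumption."""
    return merges_per_day(interval_seconds) * COST_PER_MERGE


continuous = credits_per_day(1)           # one merge per second
batch = credits_per_day(4 * 60 * 60)      # one merge every 4 hours
reduction = 1 - batch / continuous

print(f"{continuous:.3f} credits/day vs {batch:.5f} credits/day")
print(f"reduction: {reduction:.2%}")
```

Swapping in `credits_per_day(60 * 60)` gives the hourly case, which is a quick way to see where a table sits on the cost curve before changing its schedule.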

In real life, workloads aren't usually this extreme. Still, teams often see 50%–80% savings just by slowing down low-priority tables. And that's the whole point.

When it makes sense to slow down freshness:

- The table is not powering user-facing features or live decisions.

- Stakeholders are comfortable with hourly or daily updates.

- The data primarily feeds reports, BI tools, or planning workflows.

- The cost of continuous merges outweighs the value of ultra-fresh data.

To reiterate, you’re not building anything. You’re just changing a setting, without having to revisit why the pipeline was designed the way it was. And that’s what “define once” actually means: making operational changes without rewriting your pipeline.

Standard vs. Delta Updates: How Your Destination Should Apply Changes

If the Sync Schedule decides when data lands in the destination, the update mode controls how it lands. Freshness is only one part of the equation. The other half is whether the destination keeps a clean, final state of each record or if it stores every single event as it happened. Enter standard updates and delta updates.

This is what really unifies batch and streaming in a single pipeline. Adjusting the cadence answers the when question. Standard and delta updates address the how. Do we keep just the latest state, or do we store every event?

Standard Updates

Standard updates act like a MERGE with deduplication. The system maintains a current view of a table, consolidating multiple events for the same key into a single row. This is what you need when accuracy is critical for your business, for example when your warehouse numbers need to match what you show your stakeholders.

Delta Updates

Delta updates, on the other hand, are append-only. Every event is written as a new row, with no merging or collapsing of history. This is ideal for raw event logs, high-volume streams, or any situation where you care about every state change, not just the latest one.
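The difference between the two modes can be sketched with plain Python data structures. This is a simplified illustration of the semantics described above, not how any destination connector is implemented; the event shape and the function names are assumptions made for the example.

```python
# Hypothetical order events, in arrival order.
events = [
    {"order_id": 1, "status": "created"},
    {"order_id": 2, "status": "created"},
    {"order_id": 1, "status": "shipped"},
    {"order_id": 1, "status": "delivered"},
]


def apply_standard(events, key):
    """Upsert by key: later events overwrite earlier ones (merge-like)."""
    table = {}
    for event in events:
        table[event[key]] = event
    return list(table.values())


def apply_delta(events):
    """Append-only: every event becomes its own row, history preserved."""
    return list(events)


standard_rows = apply_standard(events, "order_id")
delta_rows = apply_delta(events)

print(len(standard_rows), "rows with standard updates")  # one row per order
print(len(delta_rows), "rows with delta updates")        # one row per event
```

With standard updates, order 1 ends up as a single row in its latest state. With delta updates, all three of its state changes remain queryable.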

When to Use Each Mode

Use standard updates for:

- Financial reporting dashboards for the finance department with accurate, reconciled numbers that match across different systems

- Inventory management systems where only current stock levels are important

- Customer profile tables containing the latest address, email, or subscription status per user

- Order status tracking with the current state of each order (shipped, delivered, etc.)

Use delta updates for:

- Fraud detection and audit logs where you need a complete trail of every action for compliance reasons

- User behavior analytics where every pageview or interaction needs to be mapped for creating user journeys

- IoT sensor data when you need the full time-series for analysis

- ML training datasets where models need to be trained on historical event sequences, not just current snapshots

From Pipelines to Agents

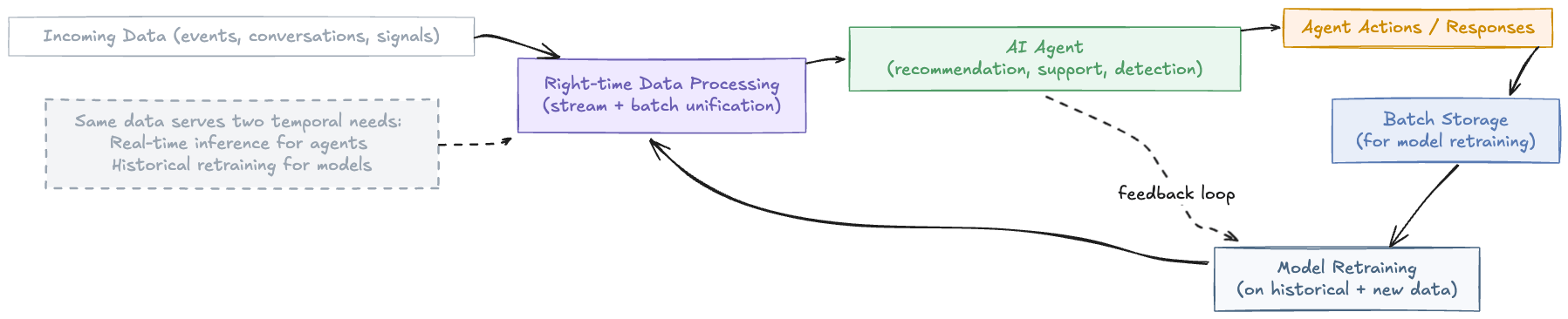

Consistent, right-time data becomes even more relevant as data starts powering agents instead of dashboards. AI systems like recommendation agents, customer-support assistants, or anomaly detectors don’t just consume data. They respond to it.

Naturally, these agents need consistency across timelines. The model trained overnight and the agent that is acting live on user input must see the same reality. Right-time data makes this possible by bridging the gap between analytical and operational layers and feeding both without maintaining separate codebases.

For example, think of a customer support agent that learns from conversations as they happen. Estuary streams these new messages into a live system so the agent can adapt instantly, while simultaneously storing them in batch form for future model retraining. Same data, two temporal needs, all in one flow.

Where We’re Headed

In the future of data engineering, it won’t make a difference whether data is real-time or batch. You’ll define your logic once, decide how fresh the data needs to be, and the system will handle the rest by keeping everything consistent and reliable.

That’s what “right-time” is essentially about. It provides flexibility without fragmentation, giving teams a single, dependable data pipeline that adapts to changing business needs. Instead of worrying about how to move data, you can focus on what you can do with it: build dashboards, retrain models, or power AI agents that react instantly.

For years, we chased “real-time” as the ultimate goal. But maybe the goal was never speed for its own sake. Maybe the real goal is getting it right: unified, consistent, and available exactly when it is needed.

About the author

Alessandro is a data scientist with a background in software engineering and statistics, working at the intersection of data and business to solve complex problems. He is passionate about building practical solutions, from models and code to new tools. He also speaks at conferences, teaches, and advocates for better data practices.