Over the last few years, operational tools have multiplied: product analytics, CRMs, CDPs, ticketing systems, marketing automation, support platforms, you name it. As these tools got smarter, the data warehouse became a kind of central brain, but it wasn’t an operational actor: it held the answers without being able to act on them. This created pressure on data teams to close the activation gap, and Reverse ETL emerged as a natural response.

In practical terms, Reverse ETL (also known as data activation) is the practice of pushing modeled warehouse data into operational tools like CRMs, marketing platforms, and support systems so that teams can act directly where they work.

In this article, “Snowflake in reverse” refers to using Snowflake as the source of truth and syncing changes outward at the right cadence, instead of building yet another dashboard.

Key Takeaways

Reverse ETL exists because dashboards alone don’t drive action unless the data lands directly inside the tools teams already use (Salesforce, HubSpot, and Slack, for example).

The activation gap is a last-mile problem. Even correct warehouse data can still be ignored.

“Right-time” beats “real-time.” Choose the cadence that matches your business workflow (every few minutes, hourly, or daily).

With Snowflake CDC, you can react to table changes without polling the whole table repeatedly.

A Reverse ETL flow has four parts: source, transform, destination, and cadence.

The Illusion of Completeness

Some years ago, while transitioning into a more senior role, I saw a side of my work that I hadn’t seen before. Up until that point, I was mostly busy with more technical tasks and didn’t focus much on the business side of things. But once I got closer to stakeholders and became more involved in business decisions, I saw that even when “everything was working,” something was still missing. Sometimes, the data I provided wasn’t as helpful as I had thought, even though it was complete and ready to be used and turned into a beautiful chart.

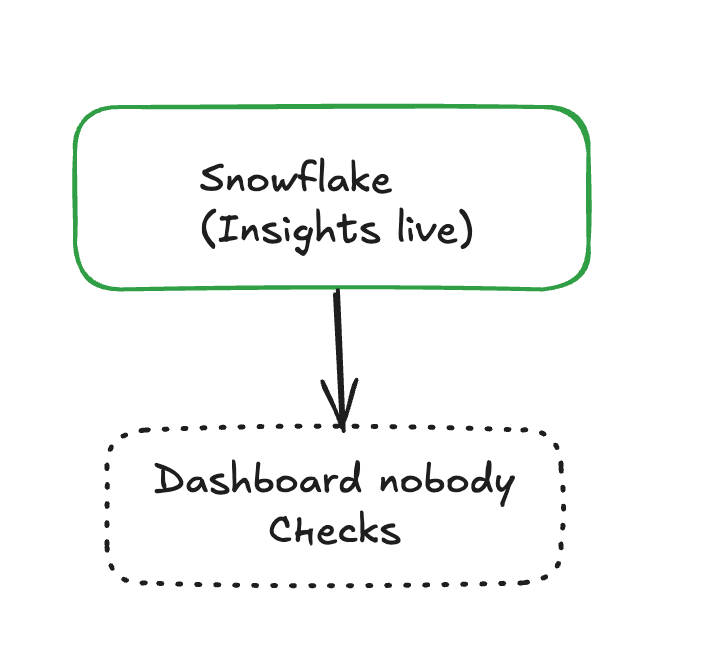

Imagine you have delivered the perfect churn model, packed with useful features and a great dashboard, but you’re still receiving emails from the marketing team with custom requests because no one remembered to check the dashboard before launching a campaign.

And that’s what the “illusion” from the heading refers to. The warehouse is there, full of information, but if your stakeholders are not really consuming it, it might as well be invisible.

Why Reverse ETL Exists

My most important lesson was that dashboards and near-perfect models don’t drive action unless they are connected to the right business tool. And that’s where Reverse ETL comes in. It closes the last mile, making sure the data reaches the platforms where decisions are made.

I remember working on a very successful project that involved the implementation of an A/B testing framework to help the marketing team evaluate voucher campaigns. I built exactly what my stakeholders needed, but the decisions were still made in Salesforce, not in my Tableau dashboard.

The only place where someone could take an action without switching between multiple tools was Salesforce, and my data was not there.

Right-Time: The Mindset Shift Beyond Real-Time

Besides location, the other factor that greatly impacts data in this last mile of the journey is timing.

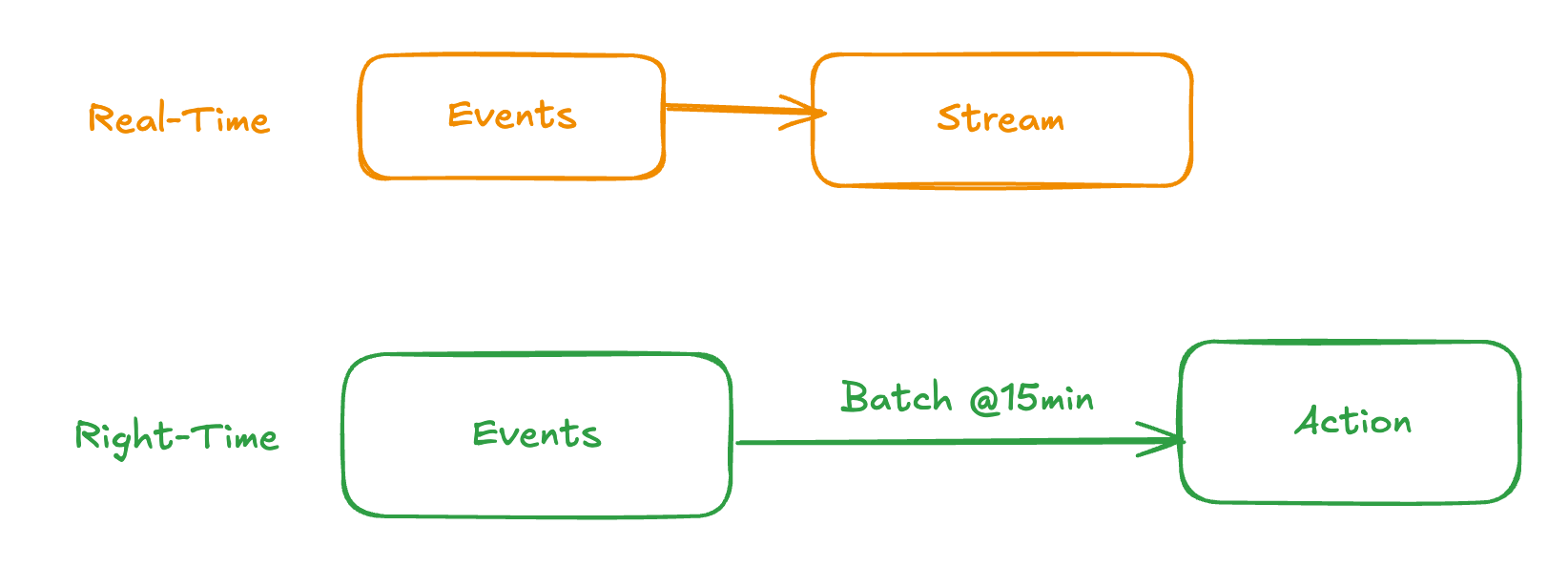

I’ve seen many buzzwords in my career, and “real-time” is definitely one of them. What I’ve come to understand, though, is that “right-time” is far more important and specific. To put it simply, we need data at the right time, not all the time, especially in an era when huge amounts of information move from one warehouse to another, with significant costs involved (including maintenance and analytics).

Reverse ETL doesn’t always mean real-time sync. Many high-value use cases work better with scheduled updates (every 10–20 minutes, hourly, or daily). The goal is operational usefulness, not maximum frequency, and a scheduled sync reduces both noise and cost.

When it comes to this subject, my favorite example is the “add to cart” problem. Does your marketing team need a sub-second event stream every time a customer adds products to a cart and leaves? Of course not. Instead, they need a sync every 10 or 20 minutes to trigger some sort of abandoned-cart pipeline that sends an email to the customer.
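As a rough sketch of what such a scheduled sync might select, assume a hypothetical cart record with a `last_activity` timestamp and a `checked_out` flag (neither comes from a real schema). The filter itself can then be very small:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Cart:
    # Hypothetical fields, chosen for illustration only.
    customer_email: str
    last_activity: datetime
    checked_out: bool


def abandoned_carts(carts, now, idle_for=timedelta(minutes=20)):
    """Return carts that were never checked out and have been idle for at least `idle_for`."""
    return [
        cart
        for cart in carts
        if not cart.checked_out and now - cart.last_activity >= idle_for
    ]


now = datetime(2024, 1, 1, 12, 0)
carts = [
    Cart("a@example.com", now - timedelta(minutes=45), False),  # idle and not purchased
    Cart("b@example.com", now - timedelta(minutes=5), False),   # probably still shopping
    Cart("c@example.com", now - timedelta(minutes=60), True),   # already purchased
]

for cart in abandoned_carts(carts, now):
    print(cart.customer_email)
```

Running this every 10 or 20 minutes is enough for the email campaign; nothing in the logic benefits from sub-second delivery.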

Making the Simple Reverse ETL Architecture Tangible

People want more than Grafana dashboards. They want something alive.

Dashboards are useful, but they’re also often neglected, opened only when someone remembers they exist. Another part of the issue here is that no one enjoys constantly jumping between multiple tools.

What teams actually pay attention to is Slack, and this becomes even more important when you put a new machine learning model in production.

Every time I promote a model (say, a demand forecasting system), I want to understand how it behaves online, not just in my offline tests. The main problem is that no matter how many experiments or backtests I run, reality always finds a way to surprise me. There should be a mechanism that signals small drifts and changes, not necessarily errors. That way, you can react before the situation escalates. These signals almost always live in Snowflake, quietly stored in a table that updates as the model produces its predictions.

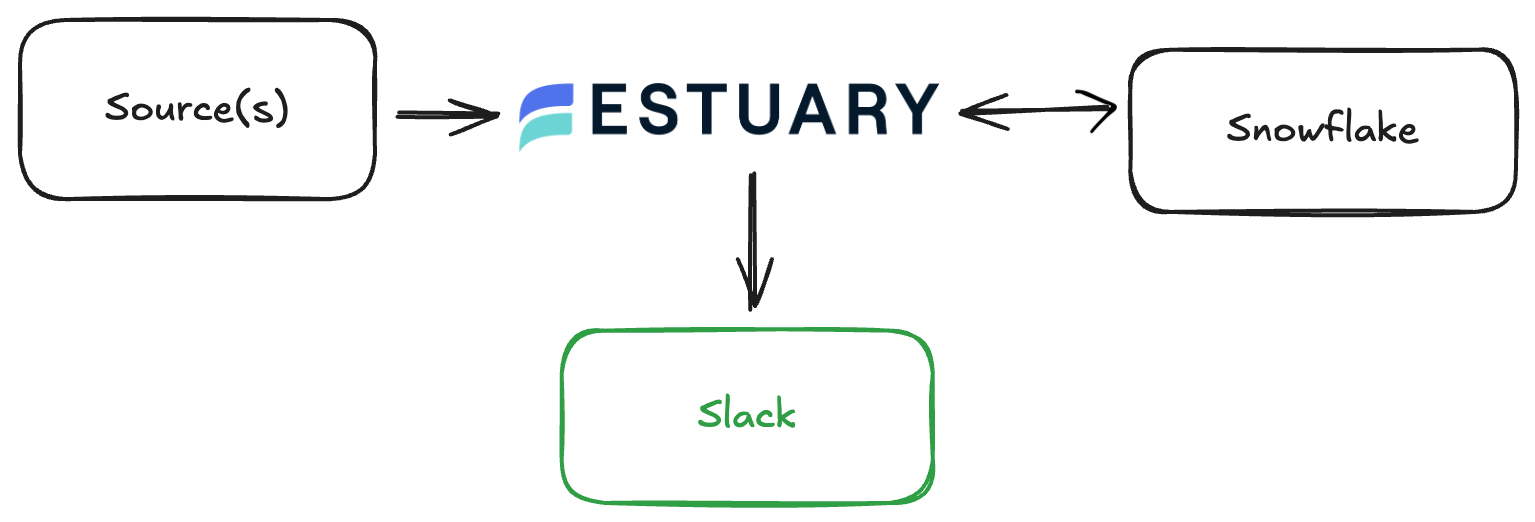

With Snowflake’s change data capture, these quiet tables become dynamic feeds. Instead of polling the entire table over and over again, Estuary’s Snowflake capture connector listens for changes: Snowflake emits them, and Estuary ingests every new prediction, recalculated metric, or tiny shift as a continuous stream of small, precise events you can actually use.

Imagine the following situation: new predictions are saved in Snowflake, and you need a Slack alert when something unexpected happens (when prices spike, for instance). On paper, you have three tedious options:

- A raw script that compares “before vs. after”

- A cron job that reads from Snowflake every N minutes

- A pipeline that you have to maintain

All of them can lead to extra code, silent failures, unclear ownership, and manual retries.
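To make the first option concrete, here is roughly what a hand-rolled “before vs. after” comparison looks like. The snapshot shape (a dict keyed by a hypothetical product ID) is an assumption for illustration. A real script would also need scheduling, somewhere to store the previous snapshot, and retries, which is where the problems listed above start.

```python
def diff_snapshots(before, after):
    """Compare two {key: value} snapshots of a table.

    Returns three dicts: inserted rows, updated rows as (old, new) pairs,
    and deleted rows.
    """
    inserted = {key: after[key] for key in after.keys() - before.keys()}
    deleted = {key: before[key] for key in before.keys() - after.keys()}
    updated = {
        key: (before[key], after[key])
        for key in before.keys() & after.keys()
        if before[key] != after[key]
    }
    return inserted, updated, deleted


# Hypothetical snapshots: product ID -> predicted price.
before = {"A": 10, "B": 20, "C": 30}
after = {"A": 10, "B": 25, "D": 40}

inserted, updated, deleted = diff_snapshots(before, after)
print("inserted:", inserted)
print("updated:", updated)
print("deleted:", deleted)
```

Note that both snapshots have to be read in full on every run, even when only one row changed.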

Or, you can use Estuary, a right-time data platform, which allows you to listen for Snowflake changes using CDC and send only what matters to Slack.

If you want to experiment with this flow yourself, you can start with a free Estuary account.

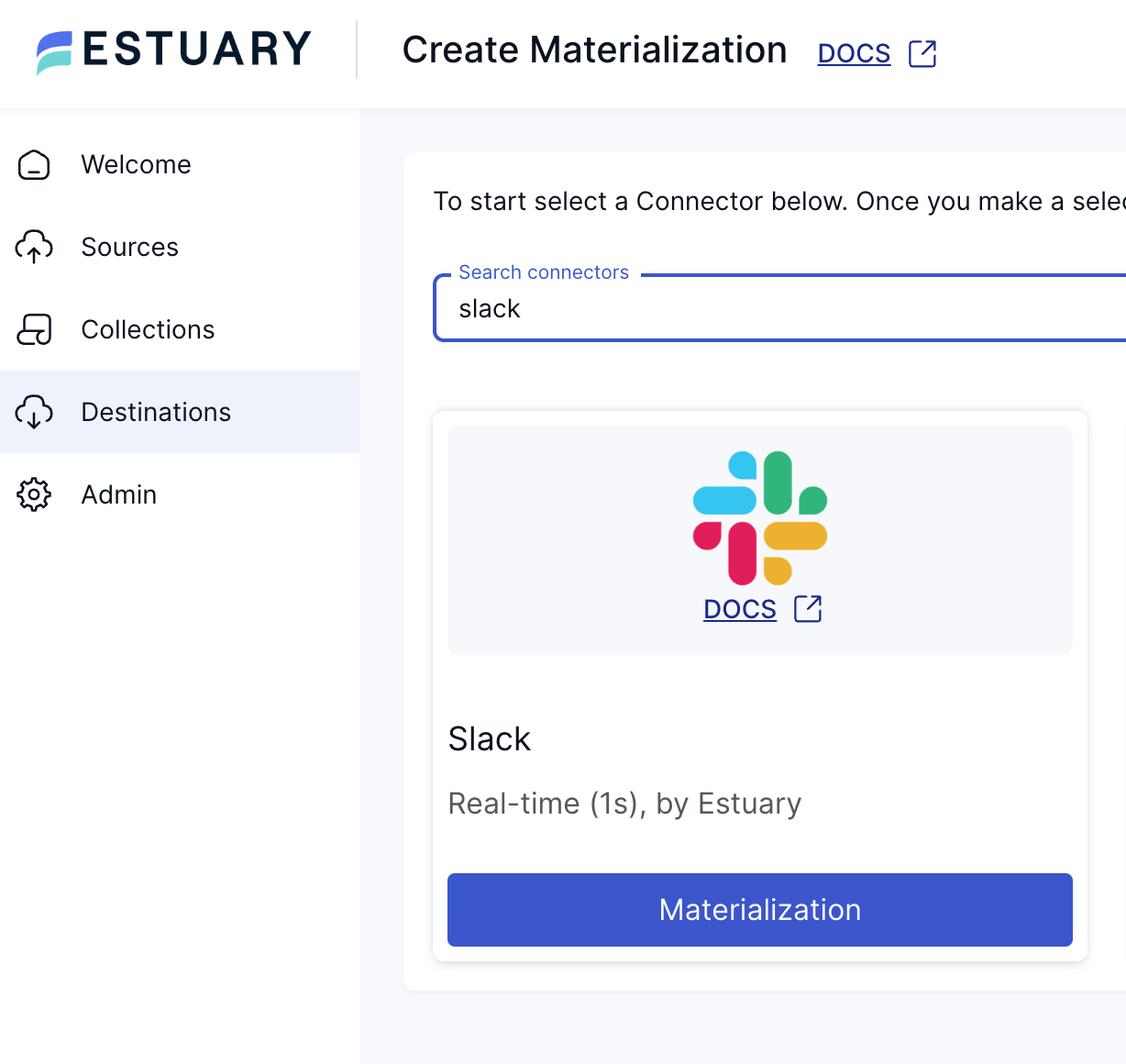

Setting up the above pipeline with Estuary is simple. First, create a new Snowflake capture by selecting it from the Sources tab.

Once that’s done, materialize the resulting data collections into a Slack channel (or another destination) with one of the existing connectors, specifying the desired channel name and message appearance.

Now, whenever there is a spike, a sudden drop, or a strange pattern, you’ll see it immediately rather than letting that useful information sit in Snowflake. And the great news is, you don’t need to remember which dashboard you need to check for specific metrics. The data comes to you directly, at the right time and where your team actually works. Full analytical dashboards are left for those situations when you need the deeper context.
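The “send only what matters” part boils down to a filter over change events. Here is a minimal sketch, assuming each event is a dict with hypothetical `product_id`, `previous_price`, and `predicted_price` fields and an arbitrary 20% threshold. This is not Estuary’s API, only an illustration of the logic. The `{"text": ...}` shape is the basic JSON body that Slack incoming webhooks accept.

```python
def is_spike(event, threshold=0.20):
    """True when the prediction moved more than `threshold` (up or down) relative to the previous value."""
    previous = event["previous_price"]
    if previous == 0:
        return False  # no usable baseline, so no relative change to compute
    change = abs(event["predicted_price"] - previous) / previous
    return change > threshold


def to_slack_message(event):
    """Build a Slack message body; only call this for events that passed is_spike."""
    previous = event["previous_price"]
    predicted = event["predicted_price"]
    change_pct = (predicted - previous) / previous * 100
    text = (
        f"Price alert for {event['product_id']}: "
        f"predicted price moved {change_pct:+.1f}% ({previous} -> {predicted})"
    )
    return {"text": text}


# Hypothetical change events, as they might arrive from the predictions table.
events = [
    {"product_id": "SKU-1", "previous_price": 100, "predicted_price": 130},
    {"product_id": "SKU-2", "previous_price": 100, "predicted_price": 104},
    {"product_id": "SKU-3", "previous_price": 50, "predicted_price": 35},
]

alerts = [to_slack_message(event) for event in events if is_spike(event)]
for alert in alerts:
    print(alert["text"])
```

Only SKU-1 and SKU-3 produce a message here; the 4% move on SKU-2 stays in the warehouse for anyone who wants the full picture later.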

However, Slack isn’t only useful for immediate alerts. The same pipeline can send weekly summaries or reports, reminders about open alerts, and similar updates. Perhaps every Monday, you receive a summary of your model’s metrics. This actually illustrates the “right-time” approach even better: some signals arrive immediately and others once per week.

Conclusion: Four Building Blocks of Reverse ETL

When you design a Reverse ETL or right-time flow, you don’t need to think like an engineer. It all comes down to four simple building blocks:

- Source: The table or view in Snowflake that already captures your business logic.

- Transform: Shaping or filtering the data to make it compatible with the destination tool, often as simple as selecting the right columns.

- Destination: The operational tool (Salesforce, HubSpot, Zendesk, or Slack) where the data becomes useful.

- Cadence: When the data should move (every event, every 15 minutes, hourly, or daily, depending on the business workflow).
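One way to picture these four blocks is as a small declarative spec. The class, field, and table names below are hypothetical and do not correspond to Estuary’s configuration format; they only show how few decisions a flow really requires.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Callable, Optional


@dataclass(frozen=True)
class ReverseEtlFlow:
    source: str                         # table or view in Snowflake
    transform: Callable[[dict], dict]   # shapes one row for the destination
    destination: str                    # operational tool that receives the data
    cadence: Optional[timedelta]        # None means "on every change event"


def select_columns(*columns):
    """Build a transform that keeps only the listed columns of a row."""
    def transform(row):
        return {column: row[column] for column in columns}
    return transform


# Hypothetical flow: hourly churn scores pushed to a CRM.
flow = ReverseEtlFlow(
    source="ANALYTICS.CHURN_SCORES",
    transform=select_columns("customer_id", "churn_score"),
    destination="salesforce",
    cadence=timedelta(hours=1),
)

row = {"customer_id": 42, "churn_score": 0.87, "internal_debug": "x"}
print(flow.transform(row))
```

The transform here is exactly the “selecting the right columns” case: the internal column never leaves the warehouse.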

Once you break it down this way, you realize you don’t need to build DIY pipelines, write scripts, or maintain custom jobs. All you have to do is define these four parts and let a platform like Estuary handle the rest.

The real focus should be on ensuring reliability, not on reinventing connectors (those are already covered). The true design challenge lies in translating business needs, like turning “sales says they want real-time” into “they only need data updated every hour.” As for the rest, you’ve got all the tools you need to make the implementation easy.

About the author

Alessandro is a data scientist with a background in software engineering and statistics, working at the intersection of data and business to solve complex problems. He is passionate about building practical solutions, from models and code to new tools. He also speaks at conferences, teaches, and advocates for better data practices.