Introduction: MySQL to CSV: Every Export Method Explained

If you need to export MySQL data to CSV, the right method depends on one thing: whether you need to do this once or repeatedly.

For a one-time export of a small table, a single SQL command gets the job done in seconds. For large tables, scheduled exports, or data that needs to reach a warehouse or downstream system reliably, a different approach is needed entirely. Using the wrong method for your situation is the most common reason MySQL to CSV exports fail, time out, or quietly produce corrupted output.

This guide covers every method available, starting with the fastest command line approaches, moving through GUI options, and ending with when manual exports stop being the right tool and what to use instead.

Here is the fastest answer if you just need the command:

plaintext language-sqlSELECT * FROM your_table

INTO OUTFILE '/tmp/your_export.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n';Run this in your MySQL client with appropriate file permissions. If you hit a secure_file_priv error, jump to the troubleshooting section below where that fix is covered in full.

For everything else, read on.

What this guide covers:

- SELECT INTO OUTFILE: the fastest native export method

- mysqldump to CSV: when and how to use it correctly

- MySQL Workbench export: step by step

- phpMyAdmin export: step by step

- Troubleshooting the five most common export failures

- When manual CSV exports stop scaling, and what to do instead

What is a CSV file?

A CSV (Comma-Separated Value) file is a plain text file used to store data. The values in CSV files are separated using commas. Since separating data with commas is a simple process, the CSV files are lightweight. As a result, it is a popular choice for exporting and importing data among data professionals.

Although the data in CSV files are separated in comma-delimited format, you can quickly convert them into a columnar format. This allows you to quickly read and analyze data with different programming languages.

What is MySQL?

MySQL is an open-source Relational Database Management System. It is widely used to store structured data to perform quick analysis. With MySQL, you can store data across multiple tables and apply the relationship among data points for better accessibility.

MySQL works with Structured Query Language (SQL), making it accessible to a wider range of users. However, you can also talk to the MySQL database with different programming languages like Python, Java, and more.

Method 1: Export MySQL to CSV Using SELECT INTO OUTFILE

SELECT INTO OUTFILE is MySQL's native export command. It is the fastest method available for exporting data directly from the database server to a file, and it requires no additional tools or software beyond your MySQL client.

Basic Syntax

plaintext language-sqlSELECT column1, column2, column3

INTO OUTFILE '/tmp/export.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n'

FROM your_table;

Each clause does a specific job:

INTO OUTFILEspecifies the file path where MySQL will write the output. This path is on the server, not your local machine.FIELDS TERMINATED BY ','sets the delimiter between columns. A comma is standard for CSV but you can use a tab character (\\t) for TSV output.ENCLOSED BY '"'wraps each field value in double quotes. This is important for fields that may contain commas, line breaks, or special characters that would otherwise corrupt the CSV structure.LINES TERMINATED BY '\\n'sets the line ending. Use\\r\\nif the file needs to be opened in Excel on Windows.

How to Include Column Headers

SELECT INTO OUTFILE does not include column headers by default. This trips up a lot of developers. The cleanest solution is to use a UNION to prepend a header row:

plaintext language-sqlSELECT 'column1', 'column2', 'column3'

UNION ALL

SELECT column1, column2, column3

FROM your_table

INTO OUTFILE '/tmp/export_with_headers.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n';

Replace the string literals in the first SELECT with the exact column names you want to appear in the header row.

Exporting a Filtered Dataset

You are not limited to full table exports. Any valid SELECT query works with INTO OUTFILE, including WHERE clauses, JOINs, and ORDER BY:

plaintext language-sqlSELECT order_id, customer_id, total_amount, created_at

INTO OUTFILE '/tmp/orders_2024.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n'

FROM orders

WHERE created_at >= '2024-01-01'

AND created_at < '2025-01-01'

ORDER BY created_at ASC;

This is useful when you need to export a specific date range, filter by status, or join multiple tables into a single export file.

The secure_file_priv Error and How to Fix It

This is the most common failure point for SELECT INTO OUTFILE. If you run the command and see this error:

plaintextERROR 1290 (HY000): The MySQL server is running with the

--secure-file-priv option so it cannot execute this statement

It means MySQL has restricted file writes to a specific directory, or has disabled file writes entirely. Here is how to diagnose and fix it.

First, check your current setting:

plaintext language-sqlSHOW VARIABLES LIKE 'secure_file_priv';

The output will show one of three things:

- A specific directory path, for example

/var/lib/mysql-files/. This means MySQL will only write files to that directory. Change your OUTFILE path to write inside that directory. - An empty string. This means there is no restriction and you can write to any directory the MySQL process has permission to access.

- NULL. This means file exports are completely disabled and you cannot use SELECT INTO OUTFILE at all until you change the server configuration.

If the value is NULL or you need to change the allowed directory, you need to edit your MySQL configuration file. On Linux this is typically /etc/mysql/mysql.conf.d/mysqld.cnf. Add or modify this line under the [mysqld] section:

plaintextsecure_file_priv = /tmp

Then restart MySQL:

plaintext language-bashsudo systemctl restart mysql

Note that on managed cloud databases like Amazon RDS or Google Cloud SQL, you do not have access to the MySQL configuration file and SELECT INTO OUTFILE is disabled entirely. For those environments, mysqldump or a pipeline tool is the only option.

Permissions Required

Your MySQL user needs the FILE privilege to use SELECT INTO OUTFILE. You can check this with:

plaintext language-sqlSHOW GRANTS FOR 'your_username'@'localhost';

If FILE is not listed, a database administrator can grant it with:

plaintext language-sqlGRANT FILE ON *.* TO 'your_username'@'localhost';

FLUSH PRIVILEGES;

Limitations of SELECT INTO OUTFILE

SELECT INTO OUTFILE is fast and built into MySQL, but it has real limitations worth knowing before you rely on it:

It writes the file to the server filesystem, not your local machine. If you are connecting to a remote MySQL server, you need a separate step to copy the file to your local machine using SCP or SFTP.

It will fail if the output file already exists. MySQL will not overwrite an existing file. You need to delete or rename the previous export before running the command again, which makes automation more complex.

It requires FILE privilege, which many managed database environments and production databases do not grant to application users for security reasons.

For large tables, the entire query runs before any output is written, which can cause timeout issues on tables with tens of millions of rows.

Method 2: Export MySQL to CSV Using mysqldump

mysqldump is a command line utility that ships with MySQL. Most developers know it as a tool for creating full database backups in SQL format, but it can also export data as tab-separated values, which with the right flags produces output that any CSV-compatible tool can read.

It is important to understand upfront that mysqldump does not natively produce comma-separated output. What it produces is tab-separated output with optional quoting. For most downstream tools including Excel, Google Sheets, Python pandas, and data warehouses, this is perfectly acceptable and often preferable to comma-separated format because tab-separated values handle embedded commas in field values without additional escaping. If you specifically need comma separation, a post-processing step is required, which is covered at the end of this section.

Basic mysqldump Tab-Separated Export

plaintext language-bashmysqldump \\

--host=localhost \\

--user=your_username \\

--password \\

--no-create-info \\

--tab=/tmp/export_directory/ \\

your_database your_table

Breaking down each flag:

-no-create-infosuppresses the CREATE TABLE statement that mysqldump normally includes. Without this flag, the output file contains SQL DDL mixed with your data, which is not what you want for a CSV export.-tab=/tmp/export_directory/tells mysqldump to write tab-separated data files to the specified directory. This flag requires the directory to already exist and the MySQL server process to have write permission to it. mysqldump will create two files per table: a.sqlfile containing the table schema and a.txtfile containing the tab-separated data.- The directory path in

-tabfaces the samesecure_file_privrestriction as SELECT INTO OUTFILE. The path must be within the directory allowed by your MySQL server configuration.

After running this command, your export will be at /tmp/export_directory/your_table.txt.

Exporting Multiple Tables at Once

To export all tables in a database:

plaintext language-bashmysqldump \\

--host=localhost \\

--user=your_username \\

--password \\

--no-create-info \\

--tab=/tmp/export_directory/ \\

your_database

This produces one .txt file per table in the specified directory. For databases with many tables this is significantly faster than running SELECT INTO OUTFILE once per table.

Exporting a Specific Query Result with mysqldump

mysqldump exports full tables by default. If you need to export a filtered dataset, use the --where flag:

plaintext language-bashmysqldump \\

--host=localhost \\

--user=your_username \\

--password \\

--no-create-info \\

--tab=/tmp/export_directory/ \\

--where="created_at >= '2024-01-01' AND status = 'completed'" \\

your_database your_table

The --where clause accepts any valid MySQL WHERE condition. Note that this applies the same filter to every table you export in a single command, so it is most useful when exporting a single table with a specific filter.

For complex multi-table queries with JOINs, SELECT INTO OUTFILE is a better fit than mysqldump because mysqldump operates at the table level rather than the query level.

Exporting Without Access to the Server Filesystem

One significant advantage mysqldump has over SELECT INTO OUTFILE is that it writes output to your local machine rather than the server filesystem when you pipe the output correctly. This makes it usable on remote servers and managed cloud databases where you do not have server filesystem access.

To write the output directly to a local file:

plaintext language-bashmysqldump \\

--host=your_remote_host \\

--user=your_username \\

--password \\

--no-create-info \\

--no-tablespaces \\

--skip-extended-insert \\

--complete-insert \\

your_database your_table \\

| grep -v "^--" \\

| grep -v "^/\\*" \\

| grep -v "^$" \\

> /local/path/export.sql

However, this approach still produces SQL INSERT statements rather than tab-separated data when used without the --tab flag, because --tab requires server filesystem access. For true CSV output from a remote server without filesystem access, SELECT INTO OUTFILE with a subsequent SCP transfer, or a dedicated export tool, is a more reliable approach.

Converting mysqldump Tab-Separated Output to True CSV

If your downstream tool specifically requires comma-separated format rather than tab-separated, you can convert the output with a simple sed command on Linux or macOS:

plaintext language-bashsed 's/\\t/,/g' /tmp/export_directory/your_table.txt > /tmp/export.csv

This replaces every tab character with a comma. This works cleanly when your data does not contain embedded commas. If your data contains commas inside field values, you need a more robust conversion that handles quoting properly. Python is the most reliable way to do this:

pythonimport csv

with open('/tmp/export_directory/your_table.txt', 'r') as tsv_file:

with open('/tmp/export.csv', 'w', newline='') as csv_file:

writer = csv.writer(csv_file, quoting=csv.QUOTE_ALL)

for line in tsv_file:

row = line.rstrip('\\n').split('\\t')

writer.writerow(row)

This script reads the tab-separated file line by line, splits on tabs, and writes each row to a properly quoted CSV file. The csv.QUOTE_ALL option wraps every field in double quotes, which handles embedded commas, line breaks, and special characters correctly.

Including Column Headers with mysqldump

Like SELECT INTO OUTFILE, mysqldump tab-separated output does not include column headers by default. The cleanest solution is to prepend the headers using MySQL's information schema:

plaintext language-bashmysql \\

--host=localhost \\

--user=your_username \\

--password \\

--batch \\

--execute="

SELECT GROUP_CONCAT(COLUMN_NAME ORDER BY ORDINAL_POSITION SEPARATOR '\\t')

FROM INFORMATION_SCHEMA.COLUMNS

WHERE TABLE_SCHEMA = 'your_database'

AND TABLE_NAME = 'your_table';

" \\

| tail -1 > /tmp/export_with_headers.txt

cat /tmp/export_directory/your_table.txt >> /tmp/export_with_headers.txt

This queries the information schema for the column names in their original order, writes them as the first line of the output file, then appends the data rows.

mysqldump vs SELECT INTO OUTFILE: When to Use Each

| SELECT INTO OUTFILE | mysqldump | |

|---|---|---|

| Output format | CSV with configurable delimiter | Tab-separated by default |

| Writes to | Server filesystem only | Server filesystem (with --tab) or local machine (piped) |

| Supports complex queries | Yes, any SELECT query | No, table-level only (--where for simple filters) |

| Includes headers | No, requires UNION workaround | No, requires separate step |

| Works on RDS / Cloud SQL | No | Yes (without --tab flag) |

| Speed on large tables | Fast | Fast |

| Requires FILE privilege | Yes | No |

| Best for | Single filtered query exports | Full table or full database exports |

The practical rule is straightforward. Use SELECT INTO OUTFILE when you need to export the result of a specific query with JOINs or complex filtering, and you have server filesystem access. Use mysqldump when you need to export full tables, export multiple tables at once, or when you are working with a remote server where you do not have FILE privilege.

Method 3: Export MySQL to CSV Using MySQL Workbench

MySQL Workbench is the official GUI tool for MySQL, developed and maintained by Oracle. It provides a visual interface for exporting data to CSV without writing any SQL or command line instructions, making it the most accessible option for users who are not comfortable with the command line.

There are two ways to export to CSV in MySQL Workbench depending on whether you need to export a full table or the results of a specific query.

Method 3a: Export a Full Table to CSV

This method uses the Table Data Export Wizard, which handles large tables reliably and gives you control over column selection, file format, and encoding.

Step 1: Open MySQL Workbench and connect to your database server.

Step 2: In the Navigator panel on the left, expand your database and right-click the table you want to export.

Step 3: Select "Table Data Export Wizard" from the context menu.

Image Credit: dev.mysql.com

Step 4: The wizard opens. On the first screen, select which columns to include in the export. By default all columns are selected. Deselect any columns you do not need.

Image Credit: dev.mysql.com

Step 5: Click Next. On the second screen, set the following options:

- File path: Choose where to save the CSV file on your local machine.

- Format: Select CSV.

- Field separator: The default is a comma. Change to a semicolon if your data will be opened in Excel in a locale that uses commas as decimal separators, such as most European countries.

- Line separator: Select CRLF for Windows compatibility or LF for Linux and macOS.

- Enclose strings: Keep this checked. It wraps string fields in double quotes, which prevents fields containing commas from corrupting the CSV structure.

Image Credit: dev.mysql.com

Step 6: Click Next, then Execute. Workbench runs the export and shows a progress log. For large tables this may take several minutes.

Step 7: Click Finish when the export completes. Your CSV file is saved to the path you specified.

A key advantage of the Table Data Export Wizard over SELECT INTO OUTFILE is that it writes directly to your local machine rather than the server filesystem, making it usable with remote servers and managed cloud databases like Amazon RDS and Google Cloud SQL where server filesystem access is not available.

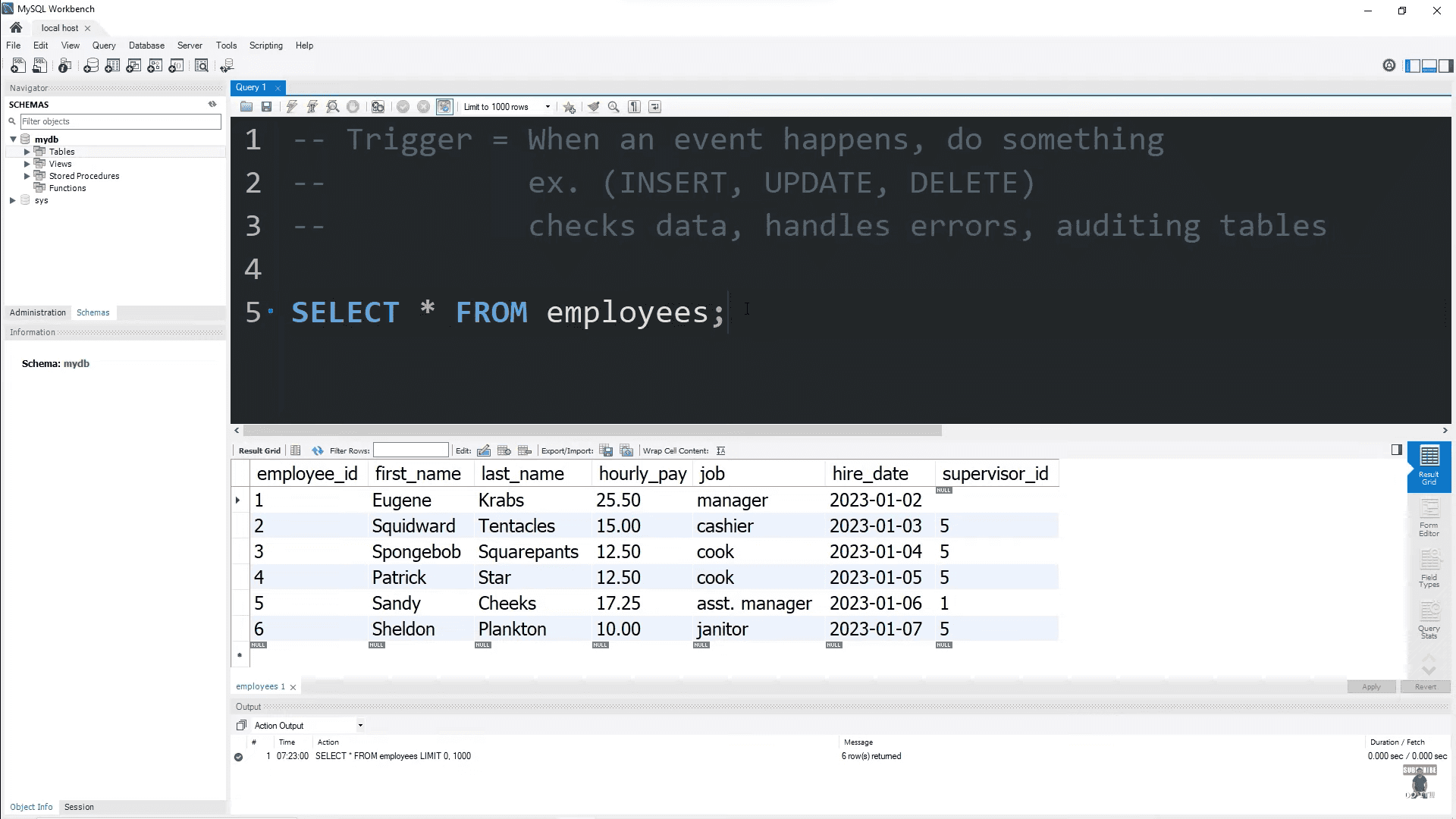

Method 3b: Export Query Results to CSV

This method exports the results of a specific SQL query rather than a full table. It is faster to set up than the wizard but has fewer configuration options.

Step 1: Open a new SQL tab in MySQL Workbench and write your query:

plaintext language-sqlSELECT order_id, customer_id, total_amount, created_at

FROM orders

WHERE created_at >= '2024-01-01'

ORDER BY created_at ASC;

Step 2: Run the query by pressing Ctrl+Enter (Windows/Linux) or Cmd+Enter (macOS). The results appear in the result grid at the bottom of the screen.

Step 3: In the result grid toolbar, click the "Export recordset to an external file" button. It is the icon that looks like a grid with an arrow pointing to a document, located in the top right of the result grid panel.

Step 4: A save dialog opens. Choose your file location, set the file type to CSV, and click Save.

Step 5: Workbench exports the visible result set to your chosen file.

One important limitation of this method: it only exports the rows currently loaded in the result grid. MySQL Workbench limits result sets to 1,000 rows by default. If your query returns more rows than the current limit, you will only export the first batch.

To check or change this limit, go to Edit, then Preferences, then SQL Editor, and look for the "Limit Rows" setting. Increasing this value or unchecking the limit entirely allows you to export larger result sets. However, for tables with millions of rows, loading the entire result set into the Workbench result grid is slow and memory-intensive. For large exports, SELECT INTO OUTFILE or mysqldump is a better choice.

Limitations of MySQL Workbench for CSV Export

MySQL Workbench is a solid option for occasional manual exports but has practical limitations that make it unsuitable for production or automated workflows:

It requires a GUI desktop environment, which means it cannot be used in headless server environments or automated scripts.

The result grid row limit means you need to manually adjust settings before exporting large datasets, and forgetting to do so silently produces incomplete exports.

There is no scheduling capability. Every export requires manual intervention, which means it does not scale for teams that need fresh data exports daily or more frequently.

For anything beyond occasional one-off exports, the command line methods or an automated pipeline are more reliable and repeatable.

Method 4: Export MySQL to CSV Using phpMyAdmin

phpMyAdmin is a web-based MySQL administration tool that is commonly available on shared hosting environments, WAMP, XAMPP, and LAMP stacks. If you are managing a MySQL database through a web hosting control panel like cPanel, phpMyAdmin is likely the tool you have access to.

Exporting a Full Table to CSV

Step 1: Log into phpMyAdmin and select your database from the left panel.

Step 2: Click the table you want to export from the list of tables on the left.

Step 3: Click the "Export" tab in the top navigation bar.

Image Credit: inmotionhosting.com

Step 4: phpMyAdmin presents two export modes. Quick mode exports the full table with default settings. Custom mode gives you control over format options. Select Custom.

Image Credit: inmotionhosting.com

Step 5: Under Format, select CSV from the dropdown. Do not select "CSV for MS Excel" unless you specifically need the file for Excel, as that format uses different line endings and quoting that can cause issues with other tools.

Step 6: Scroll down to the Format-specific options section. The default settings work for most use cases but review these:

- Columns separated with: Default is a comma. Change only if your downstream tool requires a different delimiter.

- Columns enclosed with: Default is double quotes. Keep this as is.

- Lines terminated with: Select AUTO to let phpMyAdmin detect the appropriate line ending, or set explicitly to LF for Linux/macOS compatibility.

- NULL replaced by: By default NULL values are exported as the string NULL. If your downstream tool interprets empty strings differently from NULL, change this to an empty value.

Step 7: Under the Output section, select "Save output to a file" to download the export directly to your local machine.

Step 8: Click Go. phpMyAdmin runs the export and your browser downloads the CSV file.

Exporting Query Results to CSV in phpMyAdmin

Step 1: Click your database in the left panel, then click the SQL tab in the top navigation.

Step 2: Write your query in the SQL input box:

plaintext language-sqlSELECT order_id, customer_id, total_amount, created_at

FROM orders

WHERE status = 'completed'

ORDER BY created_at DESC;

Step 3: Run the query by clicking Go. The results display in a table below.

Step 4: Scroll to the bottom of the results table and click the Export link that appears below the result set.

Step 5: Follow the same format selection steps as the full table export above.

One important limitation: phpMyAdmin has a default export size limit configured by the PHP memory_limit and max_execution_time settings on the server. For large tables, exports may time out or fail silently. If you are exporting more than a few hundred thousand rows through phpMyAdmin, you will likely hit this limit. In that case, use SELECT INTO OUTFILE or mysqldump instead.

Limitations of phpMyAdmin for CSV Export

phpMyAdmin is convenient for small, infrequent exports in hosting environments where command line access is not available. It is not suitable for production data workflows for these reasons:

Export size is constrained by PHP memory and timeout settings that are often outside your control in shared hosting environments.

Like MySQL Workbench, it requires manual intervention for every export with no scheduling or automation capability.

In environments where phpMyAdmin is publicly accessible, using it for sensitive data exports introduces security considerations around session management and access control.

For anything beyond small one-off exports, the command line methods are faster, more reliable, and more secure.

Troubleshooting MySQL to CSV Export Failures

This section covers the five most common failure points when exporting MySQL data to CSV. These are the errors that developers actually encounter in production and that most MySQL to CSV guides do not address.

Problem 1: secure_file_priv Blocking the Export

This is the most frequently encountered error with SELECT INTO OUTFILE and mysqldump with the --tab flag.

Error message:

plaintextERROR 1290 (HY000): The MySQL server is running with the

--secure-file-priv option so it cannot execute this statement

Diagnosis:

plaintext language-sqlSHOW VARIABLES LIKE 'secure_file_priv';

Fix based on the output:

If the value is a directory path, write your output file inside that directory:

plaintext language-sqlSELECT * FROM your_table

INTO OUTFILE '/var/lib/mysql-files/export.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n';

If the value is NULL, file exports are disabled at the server level. Edit /etc/mysql/mysql.conf.d/mysqld.cnf and add:

plaintextsecure_file_priv = /tmp

Then restart MySQL:

plaintext language-bashsudo systemctl restart mysql

If you are on a managed cloud database like Amazon RDS, Google Cloud SQL, or Azure Database for MySQL, secure_file_priv is set to NULL and cannot be changed. You cannot use SELECT INTO OUTFILE on these platforms. Use mysqldump without the --tab flag, MySQL Workbench, phpMyAdmin, or an automated pipeline tool instead.

Problem 2: Output File Already Exists

SELECT INTO OUTFILE will not overwrite an existing file. If the output path already exists, the command fails immediately.

Error message:

plaintextERROR 1086 (HY000): File '/tmp/export.csv' already exists

Fix:

Delete the existing file before running the export:

plaintext language-bashrm /tmp/export.csv

For automated or scheduled exports where the same filename is used repeatedly, add a timestamp to the filename to ensure uniqueness:

plaintext language-sqlSET @filename = CONCAT('/tmp/export_', DATE_FORMAT(NOW(), '%Y%m%d_%H%i%s'), '.csv');

SET @query = CONCAT(

"SELECT * INTO OUTFILE '", @filename,

"' FIELDS TERMINATED BY ',' ENCLOSED BY '\\"' LINES TERMINATED BY '\\n' FROM your_table"

);

PREPARE stmt FROM @query;

EXECUTE stmt;

DEALLOCATE PREPARE stmt;

Alternatively, use a shell script that removes the previous file before running the MySQL export command:

plaintext language-bash#!/bin/bash

EXPORT_FILE="/tmp/export.csv"

rm -f "$EXPORT_FILE"

mysql -u your_username -p your_database -e \\

"SELECT * INTO OUTFILE '$EXPORT_FILE'

FIELDS TERMINATED BY ','

ENCLOSED BY '\\"'

LINES TERMINATED BY '\\n'

FROM your_table;"

Problem 3: Special Characters Corrupting the CSV Output

If your data contains commas, double quotes, newlines, or non-ASCII characters, your CSV output can become malformed in ways that are not immediately obvious until the file is opened in another tool.

Commas inside field values break CSV structure if fields are not properly enclosed. Always use ENCLOSED BY '"' in your SELECT INTO OUTFILE command. Never omit this clause if your data contains text fields.

Double quotes inside field values need to be escaped. MySQL handles this automatically when you use ENCLOSED BY '"', escaping internal double quotes by doubling them, which is the standard CSV escaping convention. Verify this is working correctly by checking a few rows that contain double quotes after export.

Newlines inside field values are the most destructive issue. A single newline character inside a text field causes the CSV reader to interpret that field as the end of the row, splitting one record into two incomplete rows. MySQL does not automatically escape embedded newlines in CSV export. The cleanest fix is to strip or replace newlines at the query level:

plaintext language-sqlSELECT

order_id,

REPLACE(REPLACE(notes, '\\n', ' '), '\\r', ' ') AS notes,

created_at

INTO OUTFILE '/tmp/export.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n'

FROM orders;

Character encoding issues appear when your database uses UTF-8 but the export file is read by a tool expecting a different encoding, or vice versa. Force UTF-8 encoding explicitly in your export:

plaintext language-sqlSET NAMES utf8mb4;

SELECT * INTO OUTFILE '/tmp/export.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n'

FROM your_table;

For Excel specifically, UTF-8 encoded CSV files need a BOM (Byte Order Mark) at the start of the file to be displayed correctly. MySQL does not add this automatically. If your CSV will be opened in Excel and contains non-ASCII characters, add the BOM using a post-processing step:

plaintext language-bashprintf '\\xEF\\xBB\\xBF' | cat - /tmp/export.csv > /tmp/export_excel.csv

Problem 4: Export Timing Out on Large Tables

For tables with tens of millions of rows, SELECT INTO OUTFILE and mysqldump can hit timeout limits before the export completes.

For SELECT INTO OUTFILE, the entire query must execute before any output is written. On very large tables, this means MySQL holds the result set in memory or in temporary storage for the full duration. If the query takes longer than the net_write_timeout or wait_timeout server variable allows, the connection is dropped.

Check your current timeout settings:

plaintext language-sqlSHOW VARIABLES LIKE 'net_write_timeout';

SHOW VARIABLES LIKE 'wait_timeout';

Increase them for the duration of your export session:

plaintext language-sqlSET SESSION net_write_timeout = 3600;

SET SESSION wait_timeout = 3600;

For very large tables, a chunked export approach is more reliable than a single query. Export the table in batches using the primary key as a cursor:

plaintext language-bash#!/bin/bash

BATCH_SIZE=500000

OFFSET=0

BATCH_NUM=1

while true; do

ROW_COUNT=$(mysql -u your_username -pyour_password your_database -se \\

"SELECT COUNT(*) FROM your_table LIMIT $BATCH_SIZE OFFSET $OFFSET;")

if [ "$ROW_COUNT" -eq "0" ]; then

break

fi

mysql -u your_username -pyour_password your_database -e \\

"SELECT * INTO OUTFILE '/tmp/export_batch_${BATCH_NUM}.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '\\"'

LINES TERMINATED BY '\\n'

FROM your_table

LIMIT $BATCH_SIZE OFFSET $OFFSET;"

OFFSET=$((OFFSET + BATCH_SIZE))

BATCH_NUM=$((BATCH_NUM + 1))

done

cat /tmp/export_batch_*.csv > /tmp/export_final.csv

rm /tmp/export_batch_*.csv

This exports the table in batches of 500,000 rows, writing each batch to a separate file, then concatenates them into a single final CSV.

Note that using LIMIT with OFFSET on very large tables has its own performance cost because MySQL must scan and discard all rows before the offset on each query. A more efficient approach for very large tables uses a range condition on an indexed primary key:

plaintext language-sqlSELECT * INTO OUTFILE '/tmp/export_batch_1.csv'

FIELDS TERMINATED BY ','

ENCLOSED BY '"'

LINES TERMINATED BY '\\n'

FROM your_table

WHERE id BETWEEN 1 AND 500000;

Increment the range for each subsequent batch.

Problem 5: Missing FILE Privilege

If your MySQL user does not have the FILE privilege, SELECT INTO OUTFILE fails with a permissions error.

Error message:

plaintextERROR 1045 (28000): Access denied for user 'your_user'@'localhost'

(using password: YES)

Or in some MySQL versions:

plaintextERROR 1 (HY000): Can't create/write to file '/tmp/export.csv'

(OS errno 13 - Permission denied)

Diagnosis:

plaintext language-sqlSHOW GRANTS FOR 'your_user'@'localhost';

Look for GRANT FILE ON *.* in the output. If it is absent, the FILE privilege has not been granted.

Fix:

A database administrator with SUPER privilege can grant it:

plaintext language-sqlGRANT FILE ON *.* TO 'your_user'@'localhost';

FLUSH PRIVILEGES;

Note that FILE is a global privilege in MySQL, meaning it cannot be restricted to a specific database. Granting it gives the user the ability to read and write files anywhere on the server filesystem that the MySQL process has access to. In production environments, many database administrators intentionally withhold FILE privilege for this reason.

If FILE privilege cannot be granted in your environment, use mysqldump without the --tab flag, MySQL Workbench, phpMyAdmin, or an automated pipeline tool that does not require server filesystem access.

When Manual CSV Exports Stop Scaling

Every method covered so far in this guide is a manual process. You run a command, a wizard, or a query, and you get a file. For a one-time data extraction or an occasional report, that is perfectly sufficient.

But most teams that start with manual CSV exports eventually hit a point where the approach stops working. Recognizing that point early saves significant engineering time and prevents the kind of silent data quality failures that only surface when someone questions a dashboard number in a business review.

Here are the specific situations where manual CSV exports become the wrong tool.

You Are Running the Same Export Repeatedly

If someone on your team is exporting the same MySQL table to CSV on a daily, weekly, or hourly basis and uploading it to a warehouse, a BI tool, or another system, that workflow has already outgrown manual exports. Every manual step in a recurring data workflow is a point of failure: the person forgets to run it, runs it at the wrong time, exports the wrong date range, or uploads to the wrong destination.

Recurring data movement between systems is exactly what automated pipelines exist to solve. A pipeline runs on a defined schedule or continuously without human intervention, delivers data to the destination reliably, and alerts you when something goes wrong rather than silently producing stale or missing data.

Your Tables Are Growing Beyond What Manual Exports Handle Well

SELECT INTO OUTFILE and mysqldump work well for tables up to tens of millions of rows, with the chunking workarounds described in the troubleshooting section above. Beyond that scale, manual export methods become increasingly fragile. Exports take longer, timeouts become more frequent, and the files themselves become large enough that importing them into a destination system is a separate engineering problem.

At this scale, the fundamental issue is that a full table scan on every export is wasteful. You are re-exporting data that has not changed since the last run. What you actually need is incremental data movement, where only the rows that have been inserted, updated, or deleted since the last sync are captured and delivered to the destination.

This is what Change Data Capture does. Instead of querying the table, CDC reads the MySQL binary log directly, capturing every change as it happens with minimal load on the source database and delivering only the changed rows to the destination.

You Need Data in the Destination Within Minutes, Not Hours

A CSV export workflow has inherent latency. Even if you automate the export and import steps with scripts, you are looking at a minimum of several minutes between a change occurring in MySQL and that change being reflected in the destination, and realistically much longer if exports run on hourly or daily schedules.

For use cases where data freshness matters, this latency has real business consequences. A fraud detection system working on hour-old transaction data misses fraud happening right now. An inventory dashboard showing stock levels from this morning's export creates overselling risk for a busy ecommerce operation. A customer-facing feature powered by daily batch data feels slow and disconnected from reality.

Real-time pipelines eliminate this latency by propagating changes from MySQL to the destination within seconds or milliseconds of them occurring at the source.

You Need to Deliver MySQL Data to Multiple Destinations

A CSV export produces a file. Getting that file into multiple destinations, for example Snowflake for analytics, Elasticsearch for search, and a Postgres replica for a reporting application, requires separate import steps for each destination. Each step is another manual process, another potential point of failure, and another piece of infrastructure to maintain.

A pipeline with multiple materializations solves this cleanly. Data is captured once from MySQL and delivered to every destination independently, with each destination staying in sync continuously without additional engineering work per destination.

Automating MySQL Data Movement with Estuary

Estuary is a managed data pipeline platform that handles real-time data movement from MySQL to destinations including Snowflake, BigQuery, Redshift, PostgreSQL, Apache Iceberg, Databricks, Elasticsearch, and others.

Under the hood, Estuary uses Change data capture (CDC) to read MySQL's binary log directly, capturing every insert, update, and delete as it happens without running queries against your MySQL tables. This means:

Your production database experiences no additional query load from the pipeline running.

Data arrives at the destination within milliseconds of changes occurring at the source rather than on a batch schedule.

Deletes and updates are propagated correctly to the destination, not just inserts. This is a critical difference from CSV-based workflows where deleted rows in MySQL silently remain in your destination data until a full refresh overwrites them.

Schema changes in MySQL are detected automatically. When you add a column to a MySQL table, Estuary detects the change and updates the destination schema accordingly without manual intervention.

Setting up a MySQL capture in Estuary requires enabling binary logging on your MySQL server if it is not already enabled, which is a one-time configuration change, and then connecting Estuary to your MySQL instance through the web dashboard. No infrastructure to provision, no Kafka cluster to manage, and no custom code to write or maintain.

To check whether binary logging is already enabled on your MySQL server:

plaintext language-sqlSHOW VARIABLES LIKE 'log_bin';

If the value is OFF, enable it by adding the following to your MySQL configuration file under the [mysqld] section:

plaintextlog_bin = mysql-bin

binlog_format = ROW

binlog_row_image = FULL

server_id = 1

Then restart MySQL:

plaintext language-bashsudo systemctl restart mysql

These settings enable row-level binary logging, which is the format Estuary's CDC connector requires to capture individual row changes accurately.

From there, Estuary handles the rest: capturing changes from the binary log, storing them durably in your own cloud storage bucket as an intermediate layer, validating documents against a schema, and materializing the data to your chosen destination continuously.

For teams that have outgrown manual CSV exports but do not want to build and operate their own streaming infrastructure, this is the path that eliminates the operational overhead while delivering real-time data movement at any scale.

You can connect your first MySQL source and start a pipeline for free at dashboard.estuary.dev.

Conclusion

Exporting MySQL data to CSV comes down to choosing the right method for your situation.

For a one-time export with server filesystem access, SELECT INTO OUTFILE is the fastest option. For full table or multi-table exports, mysqldump with the --tab flag is more flexible. For remote servers and managed cloud databases like RDS or Cloud SQL where filesystem access is not available, MySQL Workbench and phpMyAdmin handle exports reliably for moderate data volumes.

When you hit the limits of manual exports, whether that is table size, frequency, destination requirements, or data freshness, the right move is incremental CDC-based pipelines rather than trying to scale manual CSV workflows further. Full table scans on large databases are expensive, slow, and fragile. CDC reads only what changed, delivers it within milliseconds, and handles updates and deletes correctly, which no CSV-based workflow does natively.

Here is a quick reference for choosing the right method:

| Situation | Recommended Method |

|---|---|

| One-time export, server filesystem access | SELECT INTO OUTFILE |

| Full table export, multiple tables | mysqldump with --tab |

| Remote server or managed cloud database | MySQL Workbench or phpMyAdmin |

| Large table, millions of rows | Chunked SELECT INTO OUTFILE or mysqldump |

| Recurring export to a warehouse or BI tool | Automated pipeline with CDC |

| Real-time data freshness required | Estuary CDC pipeline |

| Multiple destinations from one MySQL source | Estuary with multiple materializations |

Start Moving MySQL Data Without the Manual Work

If you have outgrown manual CSV exports and need MySQL data delivered to Snowflake, BigQuery, Redshift, PostgreSQL, Apache Iceberg, Databricks, or Elasticsearch reliably and in real time, Estuary connects to your MySQL instance using CDC, captures every change as it happens, and keeps your destinations in sync continuously.

No Kafka cluster to manage. No custom scripts to maintain. No full table scans on your production database.

You can connect your first MySQL source and start a pipeline for free at dashboard.estuary.dev.

FAQs

Can I export MySQL to CSV on Amazon RDS or Google Cloud SQL?

How do I automate MySQL to CSV exports on a schedule?

Why is my CSV file corrupted when I open it in Excel?

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.