Moving data from HubSpot to Redshift allows teams to centralize CRM data for deeper reporting, advanced analytics, and scalable storage. While HubSpot powers marketing, sales, and customer service workflows, Amazon Redshift provides the performance and scale needed for complex BI queries and long-term data warehousing.

HubSpot does not provide a built-in, first-party destination for Amazon Redshift. As a result, organizations typically either build a custom pipeline using the HubSpot API and S3 staging, or use a data integration platform to automate synchronization. The best method depends on your latency requirements, engineering capacity, and operational complexity tolerance.

In this guide, we compare two reliable ways to sync HubSpot to Redshift: a manual API-based approach and a right-time data pipeline that supports both real-time and batch data movement.

What is HubSpot?

HubSpot is a cloud-based customer relationship management (CRM) platform that offers software products for customer service, inbound marketing, and sales. HubSpot CRM provides a centralized database where you can manage your contacts, tasks, and companies. Useful features include lead management and tracking, document tracking, ticketing, sales automation, data sync, and a landing page builder, among others.

What is Redshift?

Amazon Redshift is a fully managed cloud data warehouse service provided by Amazon Web Services (AWS). It is designed to run fast, scalable analytics queries across large volumes of structured and semi-structured data using standard SQL.

Redshift uses a columnar storage format and massively parallel processing (MPP) architecture, allowing it to execute complex queries efficiently across distributed compute resources.

Redshift can be deployed in two main ways:

- Provisioned clusters, where you manage compute nodes (commonly RA3 nodes with managed storage).

- Redshift Serverless, which automatically scales compute capacity based on workload without requiring cluster management.

In Redshift, data is typically loaded from Amazon S3 using the COPY command or through integration tools that stage data in S3 before loading it into warehouse tables.

Organizations that move HubSpot data into Redshift use it to centralize CRM data for reporting, forecasting, and advanced analytics across sales and marketing teams.

Why Move Data From HubSpot to Redshift?

While HubSpot provides built-in reporting for sales and marketing activities, it is not designed to function as a full-scale analytics warehouse. As data volumes grow, teams often need more flexibility to combine CRM data with data from product systems, billing platforms, advertising tools, and internal databases.

Moving data from HubSpot to Redshift allows organizations to:

- Run complex SQL queries across large CRM datasets

- Join HubSpot data with other operational or product data

- Store historical data for long-term trend analysis

- Power business intelligence tools like Tableau, Looker, or QuickSight

Redshift is optimized for large-scale analytical workloads. Its columnar storage and distributed query engine enable fast performance even when analyzing millions of records from contacts, deals, campaigns, and tickets.

For teams that need near real-time insights, syncing HubSpot to Redshift frequently or continuously keeps analytics dashboards and reports aligned with current sales and marketing activity.

Methods to Move Data From HubSpot to Redshift

There are two primary approaches to building a HubSpot to Redshift integration: a custom API-based pipeline or a managed data integration platform. The right choice depends on whether you need simple batch exports or a dependable, continuously updating data pipeline.

You can move data from HubSpot to Redshift using one of the following methods:

- Method #1: HubSpot API + Amazon S3 + Redshift COPY

A custom-built pipeline that extracts data using the HubSpot API, stages files in Amazon S3, and loads them into Redshift using the COPY command.

- Method #2: Using a Managed Platform Like Estuary

A configured pipeline that handles extraction, incremental updates, S3 staging, and Redshift materialization automatically.

Below, we explore both methods in detail so you can determine which HubSpot to Redshift strategy fits your technical and operational requirements.

Method #1: Use the HubSpot API + Amazon S3 + Redshift COPY

A common way to move HubSpot data to Redshift is to extract records from HubSpot using the HubSpot REST APIs, stage the results as files in Amazon S3, and then load those files into Redshift tables using the COPY command.

This approach can work well for batch or scheduled loads, especially if your HubSpot objects are not changing constantly and you can tolerate some latency.

Step 1: Extract data from HubSpot using the HubSpot API

HubSpot exposes REST APIs that return JSON responses for common CRM objects like contacts, companies, deals, and tickets. In production pipelines, extraction is usually implemented as a script or service (rather than ad hoc requests in a tool like Postman) that:

- Authenticates with an OAuth access token (or a private app access token, if your organization uses private apps)

- Pulls records from one or more object endpoints

- Handles pagination and object associations (when needed)
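As a rough illustration of the extraction loop, the Python sketch below (standard library only) pages through one object type. It assumes HubSpot's CRM v3 list endpoint, `GET https://api.hubapi.com/crm/v3/objects/{objectType}`, which returns a `results` array plus a `paging.next.after` cursor while more pages remain. `make_fetcher` and `iter_records` are hypothetical names, and rate limiting and associations are left out.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Iterator, Optional

# Base URL of HubSpot's CRM v3 object endpoints (assumed for this sketch).
HUBSPOT_OBJECTS_URL = "https://api.hubapi.com/crm/v3/objects"


def make_fetcher(token: str, object_type: str) -> Callable[[dict], dict]:
    """Return a function that GETs one page of `object_type` (e.g. "contacts")."""
    def fetch(params: dict) -> dict:
        url = f"{HUBSPOT_OBJECTS_URL}/{object_type}?{urllib.parse.urlencode(params)}"
        # OAuth and private app access tokens are both sent as a Bearer token.
        request = urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})
        with urllib.request.urlopen(request) as response:
            return json.load(response)
    return fetch


def iter_records(fetch: Callable[[dict], dict], properties: list[str],
                 limit: int = 100) -> Iterator[dict]:
    """Yield every record, following the paging cursor until no next page is returned."""
    after: Optional[str] = None
    while True:
        params = {"limit": limit, "properties": ",".join(properties)}
        if after is not None:
            params["after"] = after  # cursor returned by the previous page
        page = fetch(params)
        yield from page.get("results", [])
        after = page.get("paging", {}).get("next", {}).get("after")
        if after is None:
            return  # no `paging.next` means this was the last page
```

For example, `iter_records(make_fetcher(token, "contacts"), ["email", "firstname"])` yields every contact. Passing the fetch function in, rather than calling the network directly, keeps the paging logic testable offline.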

Step 2: Normalize and write files to Amazon S3

Because the Redshift COPY command bulk-loads data from files, the extracted HubSpot data is typically converted to newline-delimited JSON (JSONL), CSV, or Parquet before being staged in S3.

In practice, you’ll usually also need to:

- Flatten nested HubSpot properties into columns (or store as SUPER if using semi-structured patterns)

- Standardize timestamps and numeric fields

- Handle nullability and missing properties (HubSpot schemas vary across portals)
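A minimal sketch of this normalization step, assuming CRM v3 records shaped like `{"id", "properties", "createdAt", "updatedAt", "archived"}`. It lifts the nested `properties` object to top-level lowercase keys (Redshift column names are lowercase by default), writes every expected column so properties a portal lacks become NULL, and gzips the JSONL output. The function names and column list are illustrative, and the upload to S3 (for example with boto3's `put_object`) is omitted.

```python
import gzip
import json
from typing import Iterable


def flatten(record: dict) -> dict:
    """Lift HubSpot's nested `properties` object to top-level, lowercase keys."""
    row = {
        "id": record["id"],
        "createdat": record.get("createdAt"),
        "updatedat": record.get("updatedAt"),
        "archived": record.get("archived", False),
    }
    for name, value in (record.get("properties") or {}).items():
        row[name.lower()] = value
    return row


def to_jsonl_gz(records: Iterable[dict], columns: list[str]) -> bytes:
    """Serialize records as gzip-compressed JSON Lines with a fixed set of columns."""
    lines = []
    for record in records:
        flat = flatten(record)
        # Every expected column is written; missing properties become JSON null.
        lines.append(json.dumps({column: flat.get(column) for column in columns}))
    return gzip.compress("".join(line + "\n" for line in lines).encode("utf-8"))
```

Because these files are gzip-compressed, the COPY command needs the `GZIP` option when loading them.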

Step 3: Load data into Redshift using COPY

Once files are in S3, use the Redshift COPY command to load them into target tables. COPY is built for parallel loading and is the standard approach for ingesting S3-staged data into Redshift at scale.

Most teams run COPY as part of a scheduled job (hourly/daily) or triggered workflow.

Example: Loading HubSpot Data into Redshift Using COPY

Below is a simplified example of using the Redshift COPY command to load staged HubSpot data from Amazon S3 into a target table:

```sql
COPY hubspot_contacts
FROM 's3://your-bucket/hubspot/contacts/2026-02-01/'
IAM_ROLE 'arn:aws:iam::123456789012:role/RedshiftCopyRole'
FORMAT AS JSON 'auto'
TIMEFORMAT 'auto'
REGION 'us-east-1';
```

In this example:

- Data files are stored in an S3 prefix organized by date.

- The IAM role grants Redshift permission to read from the S3 bucket.

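- JSON 'auto' maps top-level JSON keys to target columns with matching names. The match is case-sensitive and Redshift column names are lowercase by default, so use JSON 'auto ignorecase' if your keys are camelCase (such as createdAt).

- TIMEFORMAT 'auto' lets COPY recognize most common timestamp formats without requiring an explicit format string.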

For production use, you should review AWS guidance on compression, file sizing, and parallel loading to optimize COPY performance and reduce load times.

Refer to the Amazon Redshift documentation for detailed COPY command options and performance best practices.
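One part of that guidance is to split each load into several compressed files of roughly equal size (AWS's loading best practices suggest a file count that is a multiple of the cluster's slice count) so COPY can read them in parallel. The hypothetical helper below distributes JSON Lines round-robin into `n_files` gzip payloads under one date prefix. Because COPY treats the `FROM` path as a key prefix, a single COPY statement with the `GZIP` option loads every part file under it.

```python
import gzip


def split_for_copy(lines: list[str], n_files: int, prefix: str) -> dict[str, bytes]:
    """Distribute JSON Lines round-robin into `n_files` gzip files under one S3 key prefix."""
    if n_files < 1:
        raise ValueError("n_files must be at least 1")
    buckets: list[list[str]] = [[] for _ in range(n_files)]
    for i, line in enumerate(lines):
        buckets[i % n_files].append(line)  # round-robin keeps file sizes similar
    files = {}
    for i, bucket in enumerate(buckets):
        if bucket:  # skip empty parts when there are fewer lines than files
            key = f"{prefix.rstrip('/')}/part-{i:04d}.jsonl.gz"
            files[key] = gzip.compress("".join(line + "\n" for line in bucket).encode("utf-8"))
    return files
```

Each key/value pair would then be uploaded to S3 as one object; the slice count itself depends on your cluster or Serverless configuration.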

Step 4: Implement incremental sync (required for anything beyond one-time loads)

This is the part most “API + COPY” guides skip. If you need ongoing HubSpot to Redshift syncing, you must build incremental logic such as:

- Querying recently updated records by a last-modified timestamp (the property or field name varies by object and endpoint)

- Maintaining state (last successful sync time per object)

- Upserting changes in Redshift (load into a staging table, then MERGE or delete/insert into the final table)

- Handling deletes (deleted or archived records typically don't appear in "updated since" queries, so they need separate handling, and the approach differs across objects)

Without incremental logic, you’ll either re-load everything each time (costly) or miss changes.
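The upsert step can be sketched in two parts. First, collapse the staged batch to one row per HubSpot ID, keeping the latest `updatedat`, because a MERGE expects at most one source row per target row and retries can stage the same record twice. Second, merge the staging table into the final table. Table and column names are hypothetical, the generated SQL interpolates identifiers directly (trusted names only), and deletes would still need a separate step.

```python
from datetime import datetime


def _parse_ts(value: str) -> datetime:
    # HubSpot timestamps are ISO 8601 with a trailing "Z" (UTC).
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def latest_per_id(rows: list[dict]) -> list[dict]:
    """Keep only the most recently updated row for each HubSpot ID."""
    latest: dict[str, dict] = {}
    for row in rows:
        current = latest.get(row["id"])
        if current is None or _parse_ts(row["updatedat"]) >= _parse_ts(current["updatedat"]):
            latest[row["id"]] = row
    return list(latest.values())


def merge_sql(target: str, staging: str, columns: list[str], key: str = "id") -> str:
    """Build a Redshift MERGE that upserts the staging table into the target table."""
    updates = ", ".join(f"{c} = {staging}.{c}" for c in columns if c != key)
    inserts = ", ".join(f"{staging}.{c}" for c in columns)
    return (
        f"MERGE INTO {target} USING {staging} "
        f"ON {target}.{key} = {staging}.{key} "
        f"WHEN MATCHED THEN UPDATE SET {updates} "
        f"WHEN NOT MATCHED THEN INSERT ({', '.join(columns)}) VALUES ({inserts});"
    )
```

Running the dedupe before loading the staging table (or an equivalent `ROW_NUMBER()` query inside Redshift) is what makes re-running a failed batch safe.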

Example: Incremental Sync Using an Updated Timestamp Cursor

To avoid reloading all records on every sync, most teams implement a cursor-based incremental strategy.

A simplified approach looks like this:

- Store the last successful sync timestamp for each HubSpot object (for example, `last_synced_at`).

- Query the HubSpot API for records updated after that timestamp.

- Write only those updated records to S3.

- Load into a staging table in Redshift.

- Merge updated rows into the final table.

- Update `last_synced_at` once the load completes successfully.
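A minimal sketch of the cursor bookkeeping in these steps, assuming state lives in a local JSON file (a database table or S3 object would work the same way). The cursor advances only after the whole sync succeeds, it records the time the run started so changes made mid-run are picked up next time, and each query starts a few minutes before the stored value to absorb clock skew; the re-read records are harmless if the final merge matches on the HubSpot record ID. The file layout, overlap window, and function names are illustrative.

```python
import json
import os
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional

OVERLAP = timedelta(minutes=5)  # re-read a small window to absorb clock skew


def read_cursor(path: str, object_type: str) -> Optional[datetime]:
    """Return the last successful sync time for an object, or None on the first run."""
    if not os.path.exists(path):
        return None
    with open(path) as f:
        value = json.load(f).get(object_type)
    return datetime.fromisoformat(value) if value else None


def run_incremental(path: str, object_type: str,
                    sync_since: Callable[[Optional[datetime]], None],
                    now: Optional[datetime] = None) -> datetime:
    """Sync changes since the stored cursor, advancing it only if the sync succeeds."""
    started = now or datetime.now(timezone.utc)  # captured before extraction begins
    last = read_cursor(path, object_type)
    # None means a full backfill; sync_since extracts, stages, COPYs, merges, and raises on failure.
    sync_since(last - OVERLAP if last else None)
    state = {}
    if os.path.exists(path):
        with open(path) as f:
            state = json.load(f)
    state[object_type] = started.isoformat()
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)  # atomic swap so a crash never leaves a half-written cursor
    return started
```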

For example:

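- Last successful sync: `2026-02-01T00:00:00Z`

- The API query filters for records updated after that time.

- Only changed contacts or deals are extracted.

- A merge from the Redshift staging table upserts rows by matching on the HubSpot record ID (Redshift does not enforce primary key constraints, so the match must be explicit in the merge).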
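For the "filter records updated after that time" step, HubSpot's CRM search endpoint (`POST /crm/v3/objects/{objectType}/search`) accepts filter groups in the request body. The sketch below builds such a body under stated assumptions: contacts expose `lastmodifieddate`, other standard objects expose `hs_lastmodifieddate`, the filter value is epoch milliseconds, and ascending sorting keeps paging in modification order. Verify the property names against your portal; the search endpoint also caps how many results a single query can page through, so large backfills usually go through the list endpoint instead.

```python
from datetime import datetime
from typing import Optional


def modified_property(object_type: str) -> str:
    # Assumption: contacts use `lastmodifieddate`; other standard objects use `hs_lastmodifieddate`.
    return "lastmodifieddate" if object_type == "contacts" else "hs_lastmodifieddate"


def search_body(object_type: str, since: datetime, properties: list[str],
                after: Optional[str] = None, limit: int = 100) -> dict:
    """Build a CRM search request body for records modified at or after `since`."""
    if since.tzinfo is None:
        raise ValueError("since must be timezone-aware (naive values are read as local time)")
    prop = modified_property(object_type)
    body = {
        "filterGroups": [{"filters": [{
            "propertyName": prop,
            "operator": "GTE",  # inclusive; duplicates are absorbed by the keyed merge
            "value": str(int(since.timestamp() * 1000)),  # epoch milliseconds
        }]}],
        "sorts": [{"propertyName": prop, "direction": "ASCENDING"}],
        "properties": properties,
        "limit": limit,
    }
    if after is not None:
        body["after"] = after  # paging cursor from the previous response
    return body
```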

This approach reduces load costs and API usage, but requires careful handling of:

- API pagination

- Clock skew and time precision

- Failed load retries

- Deleted records

- Idempotent merges to prevent duplicates

Without reliable cursor management and merge logic, HubSpot to Redshift pipelines can easily miss updates or duplicate records.

Limitations of API + COPY for HubSpot to Redshift

This method is flexible, but it becomes operationally heavy as requirements grow:

- API rate limits: large portals hit limits quickly, slowing extraction and forcing backoff/retry logic.

- Near real-time is hard: to get low-latency sync, you’ll likely need webhook/event-driven design, which adds complexity.

- Schema drift: HubSpot properties change often; you must update your mappings and Redshift table definitions safely.

- Data type issues: JSON fields don’t always map cleanly to Redshift column types; incorrect coercions can break loads or corrupt values.

- Reliability and recovery: you must build monitoring, retries, deduplication, and idempotent loading to avoid missing or duplicating records.

If your goal is a dependable HubSpot to Redshift pipeline with minimal engineering overhead, this is usually where teams start looking at managed integration approaches.
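If you do keep the DIY route, the backoff and retry logic that API rate limits force on you can be sketched as a wrapper that retries HTTP 429 (too many requests) and transient 5xx responses with exponential backoff plus jitter, and honors a numeric `Retry-After` header when one is present. The retry counts and delays are illustrative, and whether HubSpot sends `Retry-After` on every 429 is an assumption, so the code falls back to computed delays.

```python
import random
import time
import urllib.error
from typing import Callable, TypeVar

T = TypeVar("T")
RETRYABLE = {429, 500, 502, 503, 504}


def with_backoff(send: Callable[[], T], max_attempts: int = 5, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `send`, retrying rate-limited or transient HTTP errors with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return send()
        except urllib.error.HTTPError as err:
            if err.code not in RETRYABLE or attempt == max_attempts - 1:
                raise  # non-retryable status, or out of attempts
            retry_after = err.headers.get("Retry-After") if err.headers else None
            if retry_after and retry_after.isdigit():
                delay = float(retry_after)  # server-specified wait in seconds
            else:
                # 1s, 2s, 4s, ... plus up to 1s of jitter so workers don't retry in lockstep
                delay = base_delay * (2 ** attempt) + random.uniform(0, 1)
            sleep(delay)
    raise RuntimeError("unreachable: the loop always returns or raises")
```

Wrapping each individual page request, rather than a whole multi-page extraction, means a retry repeats only the call that failed.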

Method #2: Use Estuary to Sync HubSpot to Redshift (Right-Time Data Pipelines)

If you want to move data from HubSpot to Redshift without building and maintaining API scripts, schedulers, retry logic, and upsert workflows, you can use Estuary, the Right-Time Data Platform. “Right-time” means you can choose when data moves: sub-second, near real-time, or batch, depending on your use case.

With Estuary, you configure a HubSpot capture and a Redshift materialization. Estuary handles ongoing sync, staging, and loading so your Redshift tables stay continuously up to date.

Prerequisites

Before you start, you’ll need:

- A Redshift cluster accessible either directly or via an SSH tunnel

- A database user with permission to create tables in the target schema

- An S3 bucket used as a temporary staging area for Redshift loads

- AWS credentials (access key + secret) with read/write access to the staging bucket

Note: The Redshift connector creates tables automatically based on your specification. Pre-created tables are not supported.

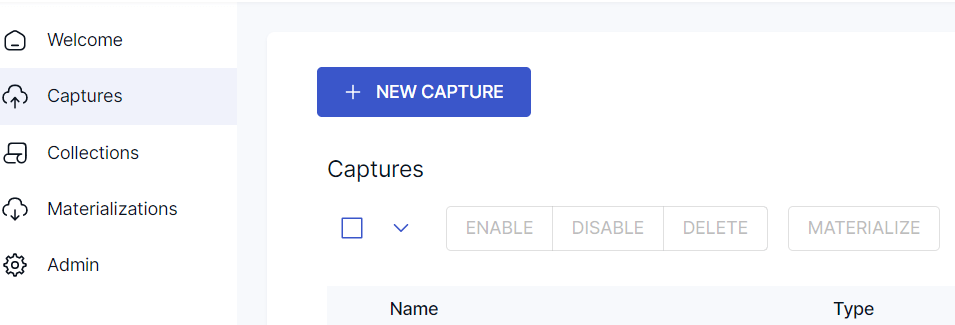

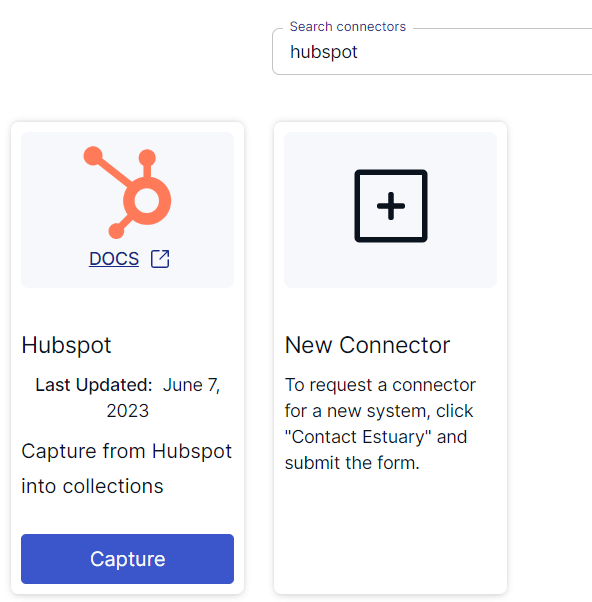

Step 1: Create a HubSpot Capture (Source)

In the Estuary web app:

- Go to Captures and select +New Capture.

- Search for HubSpot and open the connector.

- Authenticate with OAuth2 using your HubSpot account.

- Estuary will discover supported HubSpot resources (for example: contacts, companies, deals, tickets, engagements, campaigns, marketing emails, custom objects, and more).

- Select the resources you want and Save and Publish.

Important connector detail: calculated properties

HubSpot CRM objects can include calculated properties whose values change without updating the record’s updatedAt timestamp. Since incremental sync typically relies on updatedAt, those calculated-property changes may not be captured automatically. Estuary supports a scheduled refresh for calculated properties using a cron expression at the binding level, which fetches current calculated-property values and merges them into the associated collections.

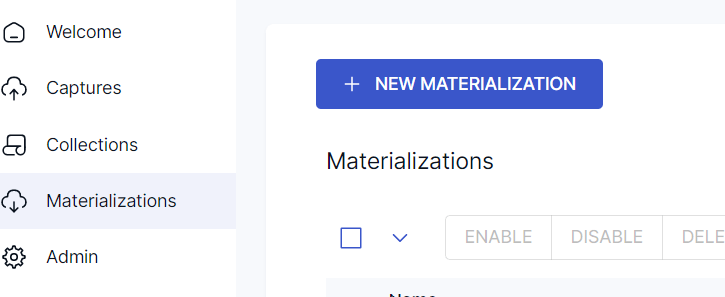

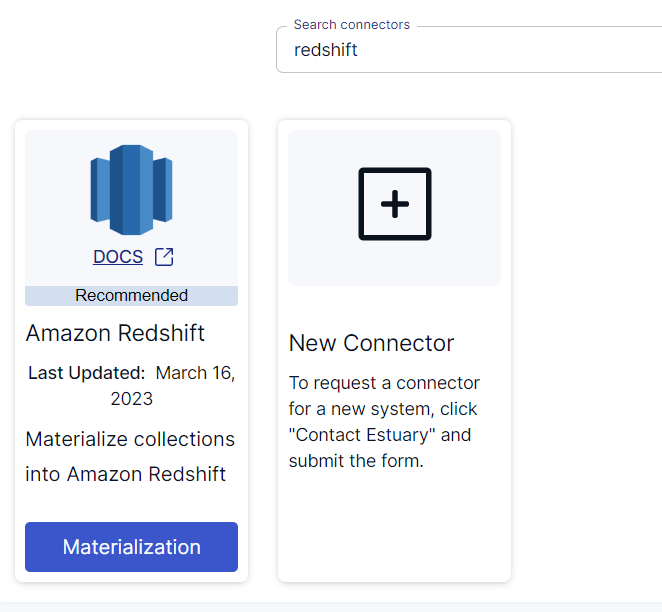

Step 2: Create an Amazon Redshift Materialization (Destination)

Next, configure Redshift as the destination:

- Go to Materializations and select +New Materialization.

- Choose the Amazon Redshift connector.

- Enter Redshift connection details (address, user, password, database, schema).

- Provide your AWS details for S3 staging (bucket name, region, access key, secret key).

- Bind the HubSpot collections you created to destination table names.

- Save and Publish.

Estuary will continuously materialize your HubSpot collections into Redshift tables using S3 as a staging layer for efficient loading.

Notes and Best Practices for Redshift Sync

- Delta updates vs standard updates: Redshift materializations support both. Standard updates (the default) merge each change into the existing row for its key. Delta updates write changes without merging them into existing rows, which reduces load work but means a table can hold several rows per HubSpot record, so make sure downstream queries expect that behavior.

- Performance guidance: For best performance, keep one active Redshift materialization per schema, and add multiple HubSpot resources as bindings under that one materialization.

- Record size limit: The maximum size of a single input document is 4 MB. If you have very large HubSpot objects, exclude heavy fields or use a derivation to reduce document size.

For more detailed instructions, see the Estuary documentation on:

- HubSpot source connector

- Redshift materialization connector

- Detailed steps to create a Data Flow like this one

Comparing DIY vs Managed HubSpot to Redshift Integration

Choosing how to sync HubSpot to Redshift depends on your team’s engineering capacity, latency requirements, and tolerance for operational complexity.

Below is a practical comparison between building your own API + COPY pipeline and using a right-time data platform.

Architecture Comparison

| Capability | HubSpot API + S3 + COPY | Estuary |
|---|---|---|
| Initial setup | Custom scripts and AWS configuration | UI-based configuration |
| Incremental sync | Must be built manually | Built-in |
| Real-time or near real-time | Requires webhooks + orchestration | Supported |
| API rate limit handling | Manual backoff and retry logic | Managed |
| Schema drift handling | Manual table updates required | Managed through collection schemas |
| Upserts / merge logic | Must implement staging + merge pattern | Managed |
| Exactly-once guarantees | Complex to implement | Built-in transactional handling |
| Monitoring & recovery | Custom logging + alerting required | Managed |

When the API + COPY Approach Makes Sense

Building your own HubSpot to Redshift pipeline may be reasonable if:

- You only need periodic batch loads (for example, nightly sync)

- Your HubSpot schema is stable and changes infrequently

- You already maintain internal data engineering infrastructure

- You want full control over every transformation step

However, as requirements evolve toward near real-time analytics or multi-system joins, the operational overhead increases significantly.

When a Managed HubSpot to Redshift Pipeline Is Better

A managed integration approach is typically better when:

- Sales dashboards must reflect updates quickly

- Marketing performance data needs continuous syncing

- You want dependable data pipelines without maintaining orchestration code

- Your HubSpot portal frequently adds or changes properties

- You want predictable data movement without building incremental logic

Instead of stitching together API calls, schedulers, retries, S3 staging, and Redshift merge logic, the pipeline is configured once and runs continuously.

The Operational Reality

Most teams start with scripts to move HubSpot data to Redshift. Over time, they encounter:

- API rate limits slowing extraction

- Duplicate records due to retry logic

- Missed updates because timestamp logic failed

- Schema changes breaking Redshift loads

- Increased maintenance burden as data volume grows

For long-term scalability, the difference between a working pipeline and a dependable pipeline becomes significant.

Conclusion

Syncing HubSpot to Redshift enables organizations to centralize CRM data for scalable analytics, advanced reporting, and cross-system visibility. While HubSpot is optimized for managing customer relationships and marketing workflows, Redshift provides the performance and flexibility required for complex SQL analysis across large datasets.

There are two primary approaches to building a HubSpot to Redshift integration. A custom pipeline using the HubSpot API, Amazon S3, and the Redshift COPY command offers flexibility and control, but requires ongoing management of incremental logic, schema updates, retries, and monitoring. As data volume and reporting demands grow, this operational overhead can increase significantly.

Alternatively, a right-time data platform allows teams to choose when data moves—sub-second, near real-time, or batch—without building and maintaining orchestration code. The right approach depends on your latency requirements, internal engineering resources, and long-term scalability goals.

By selecting the appropriate method, organizations can ensure their HubSpot data remains accessible, reliable, and analytics-ready inside Amazon Redshift.

FAQs

How do I load HubSpot data into Redshift?

What HubSpot objects can be synced to Redshift?

What are the main challenges when syncing HubSpot to Redshift?

About the author

The author has over 15 years of experience in data engineering and is a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Their extensive writing offers insights to help companies scale efficiently and effectively in an evolving data landscape.