PostgreSQL to Iceberg - Streaming Lakehouse Foundations

Stream Real-Time Data from Postgres to Iceberg with Change Data Capture and Estuary Flow

In this step-by-step tutorial, we demonstrate how to stream real-time data from a PostgreSQL database into Iceberg tables using change data capture (CDC) with Estuary Flow. Learn how to capture, ingest, and materialize data with Estuary Flow. The demo uses a sales database to show how changes in a PostgreSQL table are tracked and replicated into an Iceberg table stored in AWS S3.
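To make the idea of tracking and replicating changes concrete, here is a minimal sketch of how a stream of change events can be applied to a target table. It is a simplified model and not Estuary Flow's implementation: the `ChangeEvent` shape, the `apply_changes` helper, and the sample sales rows are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass
class ChangeEvent:
    """Hypothetical, simplified CDC event; real connectors emit richer records."""
    op: str                               # "insert", "update", or "delete"
    key: int                              # primary key of the affected row
    row: Optional[Dict[str, Any]] = None  # new row image; None for deletes


def apply_changes(table: Dict[int, Dict[str, Any]],
                  events: Iterable[ChangeEvent]) -> Dict[int, Dict[str, Any]]:
    """Apply change events in order so the target converges on the source state."""
    for event in events:
        if event.op in ("insert", "update"):
            # Upsert: the latest row image for a key replaces any earlier one.
            table[event.key] = dict(event.row or {})
        elif event.op == "delete":
            # Deleting a key that is already absent is treated as a no-op.
            table.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
    return table


# Illustrative sales rows (made up for this sketch).
events = [
    ChangeEvent("insert", 1, {"product": "widget", "quantity": 2}),
    ChangeEvent("insert", 2, {"product": "gadget", "quantity": 1}),
    ChangeEvent("update", 1, {"product": "widget", "quantity": 5}),
    ChangeEvent("delete", 2),
]
replica = apply_changes({}, events)
print(replica)
```

Because events are applied in order and keyed by primary key, replaying the same stream from the start always produces the same final table, which is why a backfill followed by continuous replication can share one code path in a model like this.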

Check out Estuary Flow's Iceberg integration: https://estuary.dev/destination/s3-iceberg/

Join Estuary Flow's community Slack: https://estuary-dev.slack.com/join/shared_invite/zt-86nal6yr-VPbv~YfZE9Q~6Zl~gmZdFQ#/shared-invite/email

00:00 - Introduction: Streaming Data from Postgres to Iceberg

00:18 - Postgres Sales Database Overview

01:08 - Starting Change Data Capture (CDC) with Estuary Flow

02:09 - Materializing Data into Apache Iceberg

04:17 - Backfilling Data into Iceberg

05:21 - Querying Iceberg Tables with Python

06:10 - Conclusion: Demo Recap
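For the "Querying Iceberg Tables with Python" chapter, one possible approach is the PyIceberg library. The sketch below is an assumption, not necessarily the code shown in the video: the `load_sales_rows` helper is a hypothetical name, and the catalog name and table identifier you pass in depend on your own PyIceberg configuration.

```python
def load_sales_rows(catalog_name: str, table_identifier: str, limit: int = 10):
    """Read up to `limit` rows of an Iceberg table into a pandas DataFrame.

    Assumes PyIceberg (with pandas and pyarrow) is installed and that
    `catalog_name` is defined in your PyIceberg configuration, for example
    in ~/.pyiceberg.yaml or through PYICEBERG_CATALOG__... environment variables.
    """
    # Imported lazily so this module loads even when PyIceberg is not installed.
    from pyiceberg.catalog import load_catalog

    catalog = load_catalog(catalog_name)          # connection details come from config
    table = catalog.load_table(table_identifier)  # identifier form: "namespace.table"
    return table.scan(limit=limit).to_pandas()


# Hypothetical usage; replace both names with your own catalog and table:
# df = load_sales_rows("my_catalog", "my_namespace.sales")
# print(df.head())
```

Keeping the catalog settings in configuration rather than in code means the same function works whether the Iceberg tables are registered in AWS Glue or in another catalog.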

More videos

Streaming Data Lakehouse Tutorial: MongoDB to Apache Iceberg

Learn how to load MongoDB data into Apache Iceberg tables using Estuary Flow. In this step-by-step demo, we show you how to: 1. Set up a MongoDB source and configure secure connections. 2. Create real-time pipelines to load data into Amazon S3. 3. Leverage the AWS S3 Iceberg Connector with AWS Glue for table cataloging. Estuary Flow simplifies real-time data integration with powerful features like advanced security connections, automated materialization, and streamlined pipeline management. Whether you're handling transactional data or syncing complex data streams, Estuary Flow has you covered. 👉 Try Estuary Flow: https://dashboard.estuary.dev/register 👉 Read the Documentation: https://docs.estuary.dev/ #MongoDBtoIceberg 0:00 - Introduction: Overview of the demo and Estuary Flow. 0:07 - Step 1: Setting Up MongoDB Source: Configuring MongoDB as the data source. 0:44 - Step 2: Reviewing Collections: Selecting collections to sync. 1:03 - Step 3: Setting Up S3 Destination: Configuring the AWS S3 Iceberg connector. 1:37 - Step 4: Testing and Publishing Pipeline: Testing the connection and publishing the pipeline. 2:07 - Final Verification: Verifying MongoDB data in S3 as Iceberg tables.

Estuary | The Right Time Data Platform

Welcome to Estuary, the Right Time Data Platform built for modern data teams. With Estuary, you can move and transform data between hundreds of systems at sub-second latency or in batch, depending on your business needs. • Capture data from source systems using pre-built, no-code connectors. • Automatically infer schemas and manage both real-time and historical events in collections. • Materialize your data to any destination with ease and flexibility. • Choose your deployment model: fully SaaS, Bring Your Own Cloud, or private deployment with enterprise-level security. Start streaming an ocean of data and get going today: 🌊 https://dashboard.estuary.dev/register/?utm_source=youtube&utm_medium=social&utm_campaign=overview_video Learn more: 🌐 On our site: https://www.estuary.dev/?utm_source=youtube&utm_medium=social&utm_campaign=flow_overview 📚 In our docs: https://docs.estuary.dev/?utm_source=youtube&utm_medium=social&utm_campaign=flow_overview Connect with us: 💬 On Slack: https://go.estuary.dev/slack 🧑💻 In GitHub: https://github.com/estuary ℹ️ On LinkedIn: https://www.linkedin.com/company/estuary-tech/ #righttimedata #datapipelines #streamingdata #realtimeanalytics #CDC #dataengineering #Estuary

Real-time Data Products with Estuary - Ad Performance

In this detailed demo, Dani shows you how to build a real-time data product using Estuary. Learn how to capture and process data from a PostgreSQL database using Change Data Capture (CDC) and stream it into Snowflake for real-time ad performance calculations. This tutorial covers how to join and transform data using Estuary’s derivations and materialize the final output into Snowflake. Follow along as we work with ad clicks and impressions to perform transformations in real-time! 00:00 - Introduction: Real-Time Data Product with Postgres and Snowflake 01:30 - Setting Up Postgres CDC with Estuary Flow 04:45 - Defining Derivations for Real-Time Transformations 09:45 - Materializing Data into Snowflake 12:03 - Real-Time Ad Performance Calculation in Snowflake 12:46 - Conclusion: Wrap-up and Further Resources

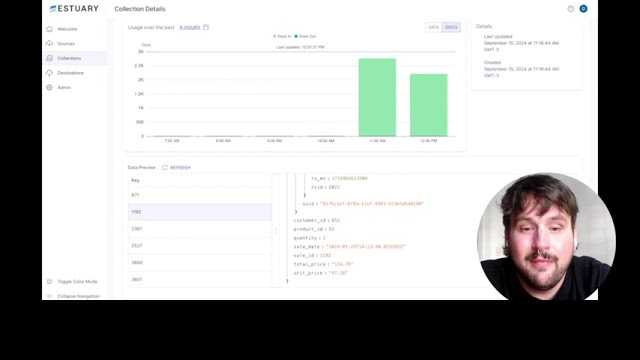

MongoDB to Snowflake in real-time (no Debezium)

In this video, Jeff from Estuary walks you through how to move data from MongoDB to Snowflake using Estuary Flow, a real-time ETL platform. Learn the key benefits of using Estuary, including low-latency Change Data Capture (CDC) and automatic unpacking of nested documents. You'll also see a step-by-step guide to setting up a MongoDB Atlas database and creating a real-time data pipeline with Estuary Flow. Key features covered: - Real-time data replication from MongoDB to Snowflake - Low-latency data movement and automatic flattening of nested documents - Backfilling data and setting up materializations to Snowflake in just a few clicks #MongodbtoSnowflake #changedatacapture If you have any questions, feel free to join our community Slack. Start building real-time data pipelines with Estuary today! Sign up for a free account: https://dashboard.estuary.dev/register Join our Slack community: https://estuary-dev.slack.com/join/shared_invite/zt-86nal6yr-VPbv~YfZE9Q~6Zl~gmZdFQ#/shared-invite/email Blog: https://estuary.dev/mongodb-to-snowflake/ 0:00 – Introduction: Moving Data to Snowflake with Estuary 0:12 – Key Benefits of Using Estuary: Real-Time Data Integration 1:18 – Automatic Flattening of Nested Data 2:08 – Testing Connection to the MongoDB Source 2:25 – Saving and Publishing the Real-Time Pipeline 2:54 – Sending Data to Snowflake and Other Destinations 3:12 – Real-Time Backfill and Data Materialization to Snowflake

What’s Next for Data Warehouses? Lessons from Our Benchmark and Emerging Trends

Dani and Ben discuss key findings on performance ceilings, cost traps, and failure modes, and explore the major trends reshaping data warehouse architecture, including: - Separation of Compute & Storage – How Snowflake Gen2, Databricks serverless, and open table formats like Iceberg are changing the game. - Lakehouse Reality Check – What’s working for teams adopting Iceberg, schema evolution patterns, and lake-native pipelines. - Flexibility Over Centralization – Moving beyond “one warehouse to rule them all.”

Create a Webhook-to-Snowflake Data Pipeline

Create a complete data pipeline in 3 minutes that captures Square (or any other platform's) webhooks and materializes to Snowflake. With Estuary Flow, you can create endpoints to receive webhook data without setting up and maintaining your own server. Try it out for free at → https://dashboard.estuary.dev/register Ready for more? - See our site: https://estuary.dev/ - Learn more about webhooks: https://estuary.dev/blog/webhook-setup/ - Read our webhook capture docs: https://docs.estuary.dev/reference/Connectors/capture-connectors/http-ingest/ - Or our Snowflake materialization docs: https://docs.estuary.dev/reference/Connectors/materialization-connectors/Snowflake/ 0:00 Intro 0:19 Set up webhook capture 1:17 Configure webhook in Square 2:06 Create Snowflake materialization 2:48 Outro

Unify Your Data in Microsoft Fabric with Estuary

Want to get your data into Microsoft Fabric—fast and without writing code? Discover what unified data can do with a Microsoft Fabric integration. We’ll cover what makes this relatively new data platform unique and how you can enhance its capabilities further using Estuary. A step-by-step demo walks through Fabric warehouse connector setup in Estuary so you can get your data flowing. Interested in more? - Register for a free Estuary account: https://dashboard.estuary.dev/register - Learn more about Microsoft Fabric: https://estuary.dev/blog/what-is-microsoft-fabric/ - Find source connectors to go with your Fabric destination: https://docs.estuary.dev/reference/Connectors/capture-connectors/ - Join us on Slack: https://go.estuary.dev/slack Media resources used in this video are from Pexels and the YouTube Studio Audio Library. 0:00 Introduction 0:26 Microsoft Fabric 1:33 Covering gaps with Estuary 2:33 Beginning connector creation 3:23 Creating a warehouse 3:59 Configuring a service principal 5:59 Creating a storage account 6:55 Wrapping up the connector 7:33 Outro

How to Stream Data to MotherDuck with Estuary (Step-by-Step)

Learn how to load your data into MotherDuck—cloud-based DuckDB—with Estuary. We’ll cover a little about what makes DuckDB unique before diving into a step-by-step demo. Whether you're building a real-time pipeline from NetSuite, Snowflake, BigQuery, PostgreSQL, or MongoDB to MotherDuck, Estuary lets you do it in minutes — no code required. Find more: - Register at Estuary: https://dashboard.estuary.dev/register - Sign up with MotherDuck: https://app.motherduck.com/?auth_flow=signup - Read Estuary’s docs on MotherDuck: https://docs.estuary.dev/reference/Connectors/materialization-connectors/motherduck/ - Follow along with MotherDuck’s tutorial on working with Estuary: https://motherduck.com/blog/streaming-data-to-motherduck/ Media resources used in this video are from Pexels and the YouTube Studio Audio Library. 0:00 Intro 1:01 DuckDB's features 1:45 MotherDuck 2:16 Connector demo with Estuary 2:57 Setting up AWS resources 3:46 Setting up MotherDuck 4:23 Publishing the connector 4:53 Outro

Stream Data to Apache Iceberg with Estuary

Learn about the Apache Iceberg table format, why it’s essential for organizing your data lake, and how to load data into Iceberg using Estuary. We’ll cover a brief intro to Iceberg before demoing the connector setup with Estuary, Amazon S3, and AWS Glue for real-time and batch data integration. With Estuary, you can stream structured or unstructured data directly into Iceberg tables — whether your source is PostgreSQL, Kafka, Snowflake, MongoDB, or many others — making it easy to build a scalable, query-ready data lakehouse architecture. Find more at Estuary’s: - Website: https://estuary.dev/ - Docs: https://docs.estuary.dev/ - Introduction to Iceberg: https://estuary.dev/apache-iceberg-tutorial-guide/ - Iceberg connector documentation: https://docs.estuary.dev/reference/Connectors/materialization-connectors/amazon-s3-iceberg/ #ApacheIceberg #datalakehouse Media resources used in this video are from Pexels and the YouTube Studio Audio Library. 0:00 Intro 1:00 What is Iceberg? 2:30 Beginning connector setup in Estuary 3:17 AWS resources 5:00 Additional config and catalogs 6:05 Wrapping up connector creation 6:36 Review and outro

Change Data Capture for PostgreSQL with Estuary

In this quick tutorial, Dani demonstrates how to effortlessly set up a PostgreSQL Change Data Capture (CDC) pipeline using Estuary in less than a minute. Watch as he works with a live sales table, showing you just how simple it is to connect your Postgres database and start replicating data in real time. You'll also see how Estuary handles schema capturing and backfills your existing data, making real-time data integration both fast and efficient. #PostgresCDC #changedatacapture Try Estuary today for real-time data pipelines and seamless integration with your databases! - Sign up for a free account: https://dashboard.estuary.dev/register - Join our Slack community: https://estuary-dev.slack.com/join/shared_invite/zt-86nal6yr-VPbv~YfZE9Q~6Zl~gmZdFQ#/shared-invite/email - Make sure to check out our Postgres CDC guide: https://estuary.dev/the-complete-change-data-capture-guide-for-postgresql/ Key things covered: 0:00 – Introduction: Setting up PostgreSQL CDC Pipeline 0:09 – Sales Table Example and Real-Time Updates 0:21 – Creating a Postgres Capture in Estuary 0:33 – Verifying the Sales Table and Schema 0:44 – Backfilling Data and Real-Time Replication

Real-time CDC with MongoDB and Estuary in 3 minutes

Build a Real-Time CDC Pipeline from MongoDB using Estuary: This tutorial demonstrates how to create a real-time change data capture (CDC) pipeline from MongoDB using Estuary. It covers setting up MongoDB Atlas, configuring Estuary, and monitoring data replication in real-time. #MongodbCDC #Changedatacapture Start building for free at: https://dashboard.estuary.dev/register Blog Post MongoDB CDC: https://estuary.dev/mongodb-change-data-capture/ 0:00 – Introduction: Real-Time CDC Pipeline from MongoDB using Estuary 0:07 – Provisioning MongoDB Atlas 0:56 – Creating a Real-Time CDC Pipeline in Estuary 1:17 – Discovering Database Objects for Replication 1:47 – Saving and Publishing the CDC Pipeline 2:36 – Inserting a New Record in MongoDB 3:01 – Verifying Record Update in Estuary

Estuary Overview

Discover the power of Estuary, a platform built to make creating real-time data pipelines easy. In this overview, we’ll show you how Estuary helps you move data from source to destination in real time, with no coding required. 🌐 Check out our website to learn more about Estuary: https://www.estuary.dev/ ➡️ Start building your pipelines for free now: https://dashboard.estuary.dev/register If you’re curious to learn more, check out our docs or jump into our community Slack to ask questions! 📚 Explore our docs for detailed guides and tutorials: https://docs.estuary.dev/ 💬 Join our Slack community to connect with developers and ask questions: https://estuary-dev.slack.com/ #Estuary #RealtimeETL #DataStreaming #DataOps #dataengineering

Seamless Data Integration, Unlimited Potential

Discover the simplest way to connect and move your data. Get hands-on for free, or schedule a demo to see the possibilities for your team.