We all know how important data processing and management are, but with the average person generating 1.7 MB of data every second, imagine the scale of data businesses have to deal with. Multiply that by the number of customers, transactions, and interactions a business has in a day and you'll quickly realize the challenge they face. This calls for an efficient stream processing framework that can handle the continuous flow of data.

A stream processing framework is the secret sauce that helps you process and analyze data in real time. These frameworks play a crucial role in turning raw data into actionable insights, which helps drive informed decision-making and gives your business a competitive edge.

Now, this brings us to the matter at hand: how to find the best stream processing framework. It's pretty simple. Read this guide on the 9 best stream processing engines; we promise you’ll discover the right solution for your needs.

9 Best Stream Processing Frameworks For Better Data Processing Workflows

Here are our top 3 picks for the most popular stream-processing frameworks:

- Estuary - Top Pick

- Apache Spark - Best for accelerating big data & stream processing

- Apache Kafka - Trusted by Fortune 500 companies

Let's review each of these 9 stream processing systems in greater detail and see which one of these meets your requirements.

Estuary - Top Pick

Estuary is a comprehensive DataOps platform that offers managed Change Data Capture (CDC) and Extract, Transform, Load (ETL) pipelines along with streaming SQL transforms. It effortlessly handles real-time data movements and transformations, making it ideal for quick data processing tasks.

It is designed to handle the growth of your business while staying reliable. Estuary Flow offers a complete solution for businesses looking to use real-time data in their analytics and daily operations.

Estuary Features

- Advanced analytics: Offers real-time analytics capabilities and integrated machine learning models for swift and efficient insight generation.

- Fully managed platform: Cost-effective and user-friendly solution that supports multiple data sources and destinations through its robust set of pre-built connectors.

- Real-time data capture & transformation: Instantly grabs data from databases and SaaS applications and transforms it swiftly using streaming SQL and JavaScript.

- Schema inference: Effectively converts unstructured data into a structured format which is helpful in stream processing scenarios where data comes in various formats.

- Extensibility & real-time analytics: Allows for an effortless addition of connectors via its open protocol and delivers real-time analytics for responding to events promptly.

- Scalability and reliability: As a distributed system, Estuary scales with data volumes up to 7 GB/s, maintaining transactional consistency via exactly-once semantics.

Estuary Pricing

Estuary offers three flexible pricing tiers designed for teams of all sizes—from hobby projects to enterprise-grade deployments:

- Free: Includes 10 GB/month, 2 connector instances, millisecond latency, and real-time syncing.

- Cloud: $0.50/GB + up to $100/connector instance. Includes 12 connector instances, your own cloud storage, 99.9% SLA, and Slack/email support. 30-day free trial available.

- Enterprise: Custom pricing. Adds private deployments, SOC2 & HIPAA, SSO, 24/7 support, and dedicated customer success.
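To get a feel for how the Cloud tier scales with volume, the list prices above can be turned into a rough estimator. This is only a sketch: the function name and the sample workload are hypothetical, and the per-connector figure is treated as a ceiling because the tier is quoted as "up to $100", so a real bill may be lower.

```python
# Rough monthly cost ceiling for the Cloud tier, using the list prices quoted above.
# estimate_cloud_cost_ceiling is a hypothetical helper, not part of any Estuary tool.

PRICE_PER_GB = 0.50              # USD per GB moved
MAX_PRICE_PER_CONNECTOR = 100.0  # USD per connector instance (quoted as "up to")


def estimate_cloud_cost_ceiling(gb_per_month: float, connector_instances: int) -> float:
    """Return an upper bound on the monthly bill in USD."""
    if gb_per_month < 0 or connector_instances < 0:
        raise ValueError("inputs must be non-negative")
    return gb_per_month * PRICE_PER_GB + connector_instances * MAX_PRICE_PER_CONNECTOR


# Example workload (made up): 200 GB/month through 3 connector instances.
print(estimate_cloud_cost_ceiling(200, 3))  # 400.0
```

In this example the data charge is $100 and the connector charge is at most $300, so the connector count dominates the bill at low volumes.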

Apache Spark - Best For Accelerating Big Data & Stream Data Processing

Apache Spark is an open-source stream processing engine capable of processing massive amounts of data for real-time streaming operations. It handles complex event processing at high speeds, outpacing most platforms by a significant margin. Spark supports different programming languages and provides APIs for developers. This makes big data processing and distributed computing simpler and more manageable.

Apache Spark Features

- Flexible cluster integration: Runs on different cluster managers like Hadoop YARN, Kubernetes, and Apache Mesos, adding to its adaptability and versatility.

- Versatile language support: Offers APIs for Python, R, Java, Scala, and SQL.

- Integrated Machine Learning: Ships with MLlib, a machine learning library that runs on the same engine, so you can train and apply models on the data you are already processing.

- Stream processing: With the Spark Streaming module, data from various sources like Kafka, Flume, and Twitter can be processed in real-time. This functionality makes Spark ideal for building streaming applications.

- Fault-Tolerance & data parallelism: Provides excellent fault tolerance – a crucial feature for maintaining data integrity in distributed systems. It also uses implicit data parallelism to enhance performance in cluster computing.

Apache Spark Pricing

Apache Spark is free to use.

Apache Kafka - Trusted By Fortune 500 Companies

Apache Kafka is a robust open-source stream processing platform that receives, stores, and delivers data in real time. Envisioned initially as a messaging queue, it's now built on a distributed commit log abstraction which enables seamless data stream handling for trillions of events daily.

Apache Kafka Features

- Broad adoption: Trusted by more than 80% of Fortune 100 companies indicating its reliability and efficiency.

- Advanced data integration: Its stream processing layer, Kafka Streams, is a plain Java library, so it embeds in existing services and improves their scalability and fault tolerance.

- Distributed stream processing: Handles high-volume data streams across various end-users and downstream applications for effective data organization and delivery.

- Dual utility: An absolute must-have for building real-time stream processing pipelines that efficiently handle data processing and transportation, and for powering applications that consume data streams.

- Kafka Streams API: Offers a Java-based API for stream processing operations such as filtering, joining, and aggregating, without requiring a separate processing cluster.
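To make filtering, joining, and aggregating concrete, here is a minimal sketch of the three operations chained over an in-memory stream. It is plain Python, not the Kafka Streams API, and every name and record in it (orders, regions, the helper functions) is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple

Order = Tuple[str, float]  # (customer_id, amount)


def filter_large(orders: Iterable[Order], min_amount: float) -> Iterator[Order]:
    """Filter: keep only orders at or above min_amount."""
    return (o for o in orders if o[1] >= min_amount)


def join_region(orders: Iterable[Order], regions: Dict[str, str]) -> Iterator[Tuple[str, float]]:
    """Join: enrich each order with the customer's region from a lookup table."""
    for customer_id, amount in orders:
        if customer_id in regions:  # inner join: unmatched customers are dropped
            yield regions[customer_id], amount


def total_by_region(enriched: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Aggregate: sum of amounts per region."""
    totals: Dict[str, float] = defaultdict(float)
    for region, amount in enriched:
        totals[region] += amount
    return dict(totals)


orders = [("alice", 120.0), ("bob", 15.0), ("carol", 80.0), ("alice", 60.0)]
regions = {"alice": "EU", "carol": "US"}

result = total_by_region(join_region(filter_large(orders, 50.0), regions))
print(result)  # {'EU': 180.0, 'US': 80.0}
```

Because each stage is a generator, records flow through one at a time, which is the same shape a real streaming topology has even though the transport here is just a Python list.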

Apache Kafka Pricing

Kafka is free to use.

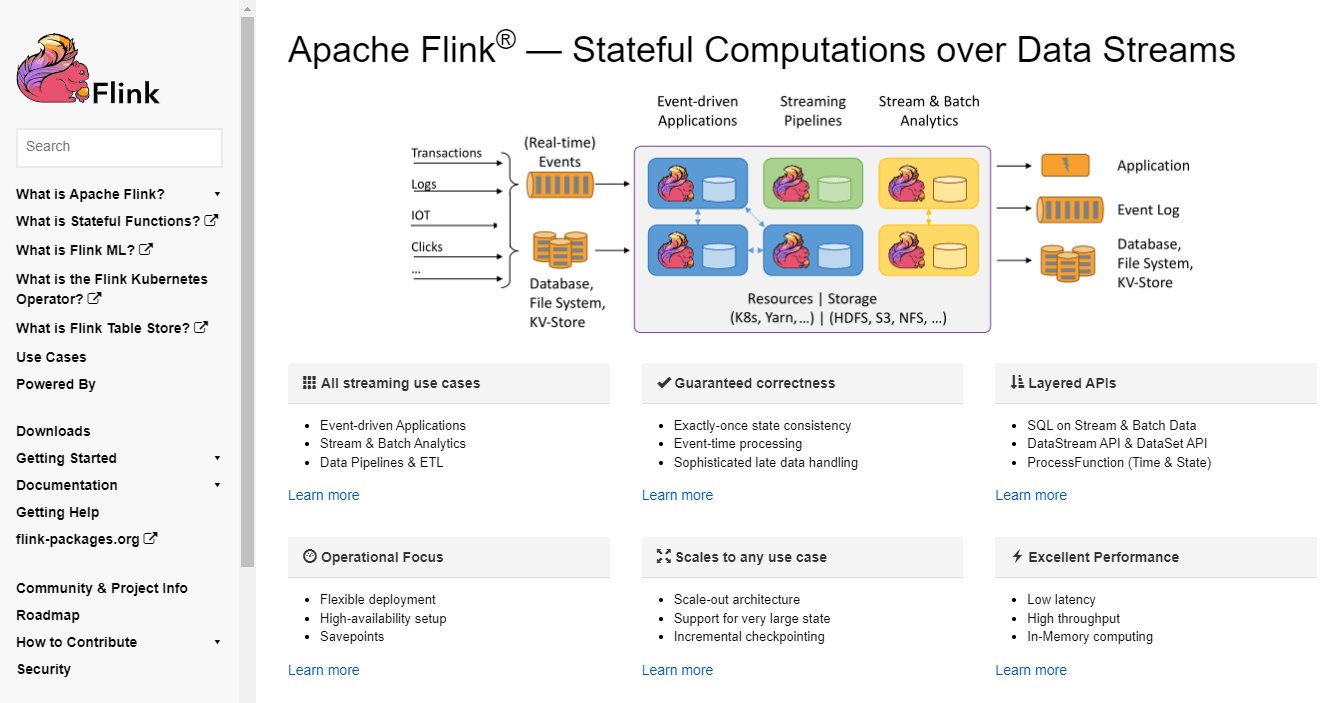

Apache Flink - Ideal for Both Bounded & Unbounded Stream Processing

Apache Flink is an open-source streaming data analytics platform that is specially designed to process unbounded and bounded data streams. It fetches, analyzes, and distributes streaming data across numerous nodes while facilitating stateful stream processing applications at any scale.

Apache Flink Features

- User-Friendly interface: Offers an easy-to-use UI that requires minimal technical knowledge.

- Infinite resource utilization: Permits almost infinite utilization of CPUs, main memory, disk, and network IO.

- Application parallelization: Builds applications that are parallelized into thousands of distributed and concurrently executed jobs in a cluster.

- Wide-Ranging integration: Integrates with popular cluster resource managers like Hadoop YARN, Apache Mesos, and Kubernetes. It can also run as a standalone cluster.

- Large application states: Handles massive application states because of its asynchronous and incremental checkpointing method. This ensures low processing latencies and maintains exactly-once state consistency.
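The idea behind incremental checkpointing can be sketched in a few lines: rather than writing the full state on every checkpoint, only the keys that changed since the last one are persisted, and recovery replays those deltas in order. This is a simplified illustration, not Flink's implementation; the class and method names are hypothetical, the asynchronous part is omitted, and key deletions are not handled.

```python
from typing import Dict, List, Set


class IncrementalState:
    """Keyed state that checkpoints only the keys changed since the last checkpoint."""

    def __init__(self) -> None:
        self.state: Dict[str, int] = {}
        self._dirty: Set[str] = set()
        self.checkpoints: List[Dict[str, int]] = []  # stand-in for durable storage

    def update(self, key: str, value: int) -> None:
        self.state[key] = value
        self._dirty.add(key)

    def checkpoint(self) -> Dict[str, int]:
        """Persist a delta holding only the dirty keys, then clear the dirty set."""
        delta = {k: self.state[k] for k in self._dirty}
        self.checkpoints.append(delta)
        self._dirty.clear()
        return delta

    @staticmethod
    def restore(checkpoints: List[Dict[str, int]]) -> Dict[str, int]:
        """Rebuild the full state by applying the deltas in order."""
        state: Dict[str, int] = {}
        for delta in checkpoints:
            state.update(delta)
        return state


s = IncrementalState()
s.update("a", 1)
s.update("b", 2)
first = s.checkpoint()   # contains both keys
s.update("a", 5)
second = s.checkpoint()  # contains only the changed key "a"
recovered = IncrementalState.restore(s.checkpoints)
print(second, recovered)
```

The second checkpoint is much smaller than the full state, which is why this approach keeps checkpoint cost roughly proportional to the rate of change rather than to total state size.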

Apache Flink Pricing

Apache Flink is freely available for download and use.

Google Cloud Dataflow - Most Streamlined Serverless Data Processing

Google Cloud Dataflow is a cloud-based service that simplifies both real-time and batch data processing. As a fully managed serverless solution within the Google Cloud Platform environment, it lets you focus on programming tasks instead of managing server clusters.

With its distributed processing capabilities, it can process data at scale. It integrates with other Google Cloud services, making it easy to ingest and process streaming data from different sources. It also effortlessly integrates real-time analytics results with downstream services like BigQuery or Data Studio.

Google Cloud Dataflow Features

- Dynamic execution: Creates a cluster of Virtual Machines (VMs), distributing tasks across them and expanding based on job performance.

- Autoscaling: Minimizes pipeline delay, optimizes resource utilization, and reduces processing costs per data record.

- Serverless solution: Offers a serverless environment that lets you concentrate more on programming rather than managing server clusters.

- Real-time rebalancing: Dynamically partitions and rebalances data inputs in real time to equalize worker resource use and minimize the impact of "hot keys" on pipeline performance.

Google Cloud Dataflow Pricing

The total resources used to implement stream processing tasks, including vCPUs, memory, and storage, determine pricing. Get in touch with their team for custom plans.

Microsoft Azure Stream Analytics - Modernizing Real-time Data Processing

Microsoft's Azure Stream Analytics is a robust real-time stream processing system designed to analyze and process large volumes of live data. From stock trading to credit card fraud detection, it's an ideal tool for diverse continuous data streams. As a fully managed Platform-as-a-Service (PaaS) solution, it simplifies the analytics process and frees you from maintaining hardware or resources.

Azure Stream Analytics Features

- Real-time analytics: Helps identify real-time patterns and relationships that can trigger workflows, issue alerts, or alter data for later use.

- Diverse data sources: Gathers data from multiple sources, including devices, clickstreams, sensors, social media feeds, and applications.

- User-friendly query language: Uses Stream Analytics Query Language, a T-SQL variation, facilitating quick adaptation for users with SQL backgrounds.

- Versatility: Capable of routing task output to various storage systems like Azure SQL Database, Azure Blob storage, Azure CosmosDB, and Azure Data Lake Store.
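Spotting real-time patterns usually starts with grouping events into time windows. The sketch below shows a fixed, non-overlapping (tumbling) window count in plain Python. It is not Stream Analytics Query Language, and the event shape, device names, and function name are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

Event = Tuple[int, str]  # (timestamp in seconds, device_id)


def tumbling_window_counts(events: Iterable[Event], window_seconds: int) -> Dict[Tuple[int, str], int]:
    """Count events per (window_start, device_id) over fixed, non-overlapping windows."""
    counts: Counter = Counter()
    for ts, device_id in events:
        # Every timestamp falls into exactly one window.
        window_start = (ts // window_seconds) * window_seconds
        counts[(window_start, device_id)] += 1
    return dict(counts)


events = [(1, "sensor-a"), (4, "sensor-a"), (9, "sensor-b"), (12, "sensor-a"), (19, "sensor-b")]
window_counts = tumbling_window_counts(events, 10)
print(window_counts)
# {(0, 'sensor-a'): 2, (0, 'sensor-b'): 1, (10, 'sensor-a'): 1, (10, 'sensor-b'): 1}
```

An alerting rule could then be as simple as checking whether any count in a window exceeds a threshold.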

Azure Stream Analytics Pricing

Pay-as-you-go pricing based on the number of streaming units used for stream processing tasks.

Spring Cloud Data Flow - Ideal For Microservice-Based Data Processing

Spring Cloud Data Flow is a microservice-based platform that offers both streaming and batch processing. Ideal for creating intricate data pipeline topologies, it uses Spring Boot apps based on the Spring Cloud Stream or Spring Cloud Task microservice frameworks. This platform is highly versatile and supports ETL, event streaming, and predictive analytics.

Spring Cloud Data Flow Features

- Pre-Built starter apps: Offers a variety of pre-built stream and task/batch starter apps for various data integration and processing scenarios.

- Custom application support: Lets you create custom stream and task applications using the familiar Spring Boot-style programming model.

- Intuitive pipeline DSL: Provides a simple stream pipeline DSL for specifying apps to deploy and managing connections between outputs and inputs.

- Interactive dashboard: Includes a graphical editor for interactive stream processing pipeline building and monitoring apps with metrics using Wavefront, Prometheus, Influx DB, or other systems.

- REST API & command line support: Exposes a REST API for composing and deploying data pipelines, along with a separate shell for easy API usage from the command line.

Spring Cloud Data Flow Pricing

Spring Cloud Data Flow is an open-source platform with charged support. Contact their sales team for more details.

Apache Samza - Best For Data Reliability

Apache Samza is a distributed stream processing framework that creates stateful applications. These applications can perform real-time data processing from diverse sources, with Apache Kafka being one of them. Samza offers flexible deployment options, running on YARN or as a standalone library. It is designed to provide high throughput and extremely low latencies for instant data analysis.

Apache Samza Features

- Write once, run anywhere: The same code can process both batch and streaming data.

- High performance: Designed to provide extremely low latencies and high throughput.

- Pipeline drain: Offers the ability to drain pipelines and allow incompatible intermediate schema changes.

- Horizontal scalability: It can scale to handle several terabytes of state and is supported by features like incremental checkpoints and host affinity.

- Pluggable architecture: Integrates with several sources including Kafka, HDFS, AWS Kinesis, Azure Eventhubs, K-V stores, and ElasticSearch.

- Powerful APIs: Provides rich APIs for building applications. You can choose from low-level, Streams DSL, Samza SQL, and Apache BEAM APIs.

Apache Samza Pricing

Samza is free to use.

Apache Storm - Accelerating Real-Time Computation In Open-Source Environment

Apache Storm is an open-source distributed computation system that offers a straightforward solution to reliably process unbounded data streams in real time. It is versatile and can be used in real-time analytics, continuous computation, distributed RPC, ETL, and more.

When it comes to data reliability, Apache Storm provides strong processing guarantees. It uses message acknowledgment and tuple anchoring to track each tuple through the topology, so that data is fully processed and failed tuples can be replayed rather than lost.
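Acknowledgment and anchoring can be tracked very cheaply. Storm's documentation describes an acker that keeps a single XOR of tuple ids per source message, which returns to zero once every tuple in the tree has been acknowledged. The sketch below illustrates that idea in plain Python. The class is hypothetical and is not Storm's API, and it uses small fixed ids for readability where Storm uses random 64-bit ids.

```python
from typing import Dict, Iterable


class Acker:
    """Keeps one XOR 'ack value' per root message; zero means the whole tuple tree is done."""

    def __init__(self) -> None:
        self.pending: Dict[str, int] = {}

    def track(self, root: str, tuple_id: int) -> None:
        """Called when the source emits a root tuple."""
        self.pending[root] = tuple_id

    def ack(self, root: str, acked_id: int, anchored_ids: Iterable[int] = ()) -> bool:
        """XOR in the acked tuple and any new tuples anchored to it.

        Each id is XORed in twice over its lifetime (once when anchored,
        once when acked), so it cancels out. Returns True once the tree completes.
        """
        value = self.pending[root] ^ acked_id
        for child in anchored_ids:
            value ^= child
        if value == 0:
            del self.pending[root]
            return True
        self.pending[root] = value
        return False


acker = Acker()
root_id, child_a, child_b = 0b0101, 0b0011, 0b1000

acker.track("msg-1", root_id)
step1 = acker.ack("msg-1", root_id, [child_a, child_b])  # root done, two children outstanding
step2 = acker.ack("msg-1", child_a)                       # one child outstanding
step3 = acker.ack("msg-1", child_b)                       # tree complete
print(step1, step2, step3)  # False False True
```

The appeal of this design is constant memory per source message regardless of how many downstream tuples it fans out into. If the value never reaches zero before a timeout, the message can be treated as failed and replayed.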

Apache Storm Features

- Fault-tolerant: Ensures that data processing continues even in the event of a node failure.

- Integration friendly: Integrates with existing queueing and stream processing technologies.

- High volume and velocity data processing: Ingests large volumes of streaming data at high velocity.

- Rapid and dependable: Offers quick and reliable processing, capable of processing up to 1 million tuples per second per node.

- Highly scalable: Apache Storm is horizontally scalable and can add more nodes to your Storm cluster for increased processing capacity.

Apache Storm Pricing

Storm is a free and open-source platform.

The 3 Best Streaming Solutions For Effective Data Transport

So far, we've been talking about the 9 best stream processing frameworks. But there's another important piece of the puzzle we should talk about, and that's streaming systems. While they are closely related, they refer to different aspects of the data processing landscape. Let’s take a look at the 3 best streaming solutions to get a better idea of how these pieces fit together.

Amazon Kinesis Data Streams

Amazon Kinesis Data Streams is a fully managed, highly scalable, and durable real-time data streaming service. It can capture gigabytes of data per second from diverse sources. This makes it ideal for businesses that need near-instant analytics such as anomaly detection, dynamic pricing, and real-time dashboards.

Amazon Kinesis Data Streams Features

- AWS Integration: Seamlessly integrates with other AWS services for building complete applications.

- Low latency: Processes streaming data to real-time analytics applications within 70 milliseconds of collection.

- Dedicated throughput: Supports up to 20 consumers per data stream, each with dedicated read throughput.

- Secure & compliant: Provides data encryption for compliance needs and secure access via Amazon Virtual Private Cloud (VPC).

- Flexible capacity mode: Offers a choice between on-demand mode for automated capacity management and provisioned mode for granular control over scaling.

- Serverless architecture: With Kinesis, there are no servers to manage and the on-demand mode automatically scales capacity with workload traffic changes.

- High availability & durability: Data is synchronously replicated across 3 Availability Zones (AZs) in an AWS Region and stored for up to 365 days for data loss protection.

Amazon Kinesis Data Streams Pricing

Kinesis Data Streams provides a pay-as-you-go pricing model.

Apache Pulsar

Apache Pulsar is a cloud-native, distributed messaging and streaming engine that has proven its mettle in large-scale deployments at companies like Yahoo. With the ability to scale to over a million topics, it is a high-performance solution for server-to-server messaging and geo-replication of messages across clusters.

Apache Pulsar Features

- Scalability: Scales to over a million topics, accommodating growing data needs.

- Simple client API: Provides a simple client API with bindings for Java, Go, Python, and C++.

- High performance: Ensures very low publish and end-to-end latency which makes it ideal for real-time data processing.

- Tiered Storage: Offloads data from hot/warm storage to cold/long-term storage (like S3 and GCS) when the data is aging out.

- Guaranteed Message Delivery: With persistent message storage provided by Apache BookKeeper, Pulsar guarantees message delivery.

- Multi-Cluster support: Natively supports multiple clusters in an instance, enabling seamless geo-replication of messages across clusters.

Apache Pulsar Pricing

Free to use.

Apache Flume

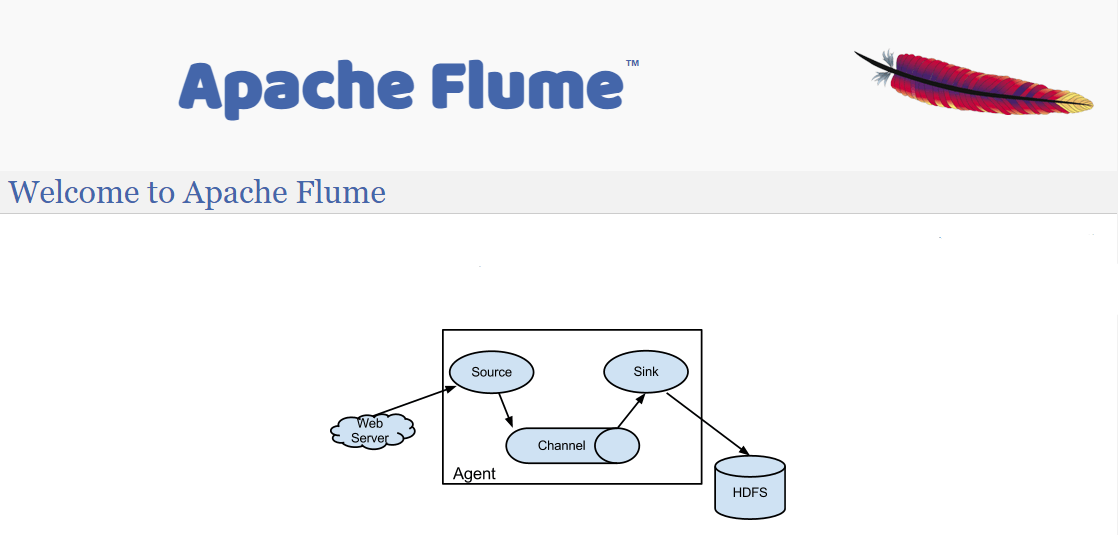

Apache Flume is a distributed system specifically designed for collecting, aggregating, and transferring massive amounts of log data from various sources to a centralized data repository like Hadoop HDFS. This open-source, extensible, and fault-tolerant system provides a scalable solution to maintain a steady data flow.

Apache Flume Features

- End-to-End reliability: Guarantees the reliable delivery of events from source to repository with its transactional approach.

- Steady data flow: Mediates between data producers and centralized stores to ensure a steady data flow when the incoming data rate exceeds the writing rate.

- Multi-hop data flow: Apache Flume creates multi-hop, fan-in, and fan-out flows, enabling contextual routing and fail-over for unsuccessful hops.

- Event recovery: Data events are staged in a Flume channel on each agent to facilitate failure recovery. It also supports a durable File channel backed by the local file system.
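The staging and recovery behavior described above can be sketched as a buffer with transactional delivery: events leave the channel only when the downstream write succeeds, and are put back otherwise. This is a plain-Python illustration, not Flume's API. The class, the sinks, and the event names are hypothetical, and the buffer is in memory, whereas a durable channel would write to disk.

```python
from collections import deque
from typing import Callable, Deque, List


class Channel:
    """In-memory staging buffer between a source and a sink."""

    def __init__(self) -> None:
        self._events: Deque[str] = deque()

    def put(self, event: str) -> None:
        self._events.append(event)

    def __len__(self) -> int:
        return len(self._events)

    def drain(self, deliver: Callable[[List[str]], None], batch_size: int) -> bool:
        """Take a batch, try to deliver it, and roll back if delivery fails."""
        take = min(batch_size, len(self._events))
        batch = [self._events.popleft() for _ in range(take)]
        try:
            deliver(batch)
        except Exception:
            # Rollback: return the batch to the front of the queue in its original order.
            self._events.extendleft(reversed(batch))
            return False
        return True  # Commit: the delivered events are gone from the channel.


def failing_sink(batch: List[str]) -> None:
    raise IOError("store unavailable")


channel = Channel()
for e in ["e1", "e2", "e3"]:
    channel.put(e)

delivered: List[str] = []
first_try = channel.drain(failing_sink, 2)       # fails, nothing is lost
second_try = channel.drain(delivered.extend, 2)  # succeeds
print(first_try, second_try, delivered, len(channel))  # False True ['e1', 'e2'] 1
```

The same buffer also absorbs bursts: when producers write faster than the sink can drain, events simply queue up in the channel instead of being dropped.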

Apache Flume Pricing

Apache Flume is a cost-free distributed system solution.

Conclusion

From their robust fault tolerance mechanisms to their seamless integration with popular data sources, these stream processing frameworks have proven their worth in handling the complexities of modern data processing.

The constant evolution and innovation within the stream processing space indicate a promising future for these frameworks. This will ensure that organizations can continue to leverage cutting-edge technologies and stay ahead in an increasingly competitive landscape.

A standout stream processing framework in this space is Estuary. It's designed for real-time data movement and transformation, which makes it an ideal choice for stream data processing.

Estuary Flow supports a wide range of data sources and integrates with popular tools and technologies. Whether you're working with different data storage systems, messaging platforms, or data formats, Estuary ensures smooth connectivity to make data ingestion and transformation workflows a breeze.

Ready to experience the versatility and power of Estuary for yourself? Sign up for free today and unlock the full potential of your data processing workflows.

FAQs

How do I choose the best stream processing engine?

Is Estuary better than open-source frameworks like Kafka or Flink?

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.