Data ingestion forms the foundation for data-driven decision-making, analytics, and reporting. It’s one of the most important steps in any ELT or ETL pipeline, especially when loading data into Snowflake, a leading cloud-based data warehousing platform. Snowflake’s real-time ingestion capabilities make it possible to capture, process, and store data from diverse sources in a centralized location for analysis.

Still, organizations often face challenges with ingestion — from handling multiple source formats, to meeting schema requirements, to ensuring low-latency updates at scale. This guide will show you practical solutions to overcome those challenges.

Most importantly, we’ll compare three proven methods for loading real-time data into Snowflake:

- Estuary, a modern, low-code alternative that gives you the benefits of Snowpipe and Snowpipe Streaming without the complexity.

- Snowpipe, Snowflake’s managed micro-batch ingestion tool.

- Snowpipe Streaming, Snowflake’s fastest ingestion method for millisecond-level latency, but one that typically requires custom SDKs or Kafka connectors.

By the end of this guide, you’ll not only understand Snowflake’s ingestion options but also see how platforms like Estuary help businesses unlock real-time analytics without the overhead of building everything in-house.

Exploring The Snowflake AI Data Cloud For Maximizing Business Insights & Efficiency

As data analysis becomes more central to business, organizations are turning to cloud data platforms to handle the scale and speed of the data they generate. One such platform is the Snowflake AI Data Cloud, which supports efficient data loading and runs across multi-cloud infrastructure environments.

Let’s take a closer look at Snowflake’s data platform and what makes it such a popular destination for data.

What Is Snowflake?

The Snowflake AI Data Cloud is a fully managed data warehousing solution designed to store and process massive amounts of data. It provides near real-time data ingestion, data integration, and querying at scale.

One of Snowflake's key features is its unique architecture that separates the compute and storage layers. This enables users to scale resources independently and pay only for what they use.

Snowflake supports various data types and sources, including structured, semi-structured, and unstructured data. It also integrates well with SaaS applications, APIs, and data lakes, which makes it extremely versatile.

3 Main Components Of The Snowflake AI Data Cloud

The Snowflake AI Data Cloud is built upon three foundational components:

- Cloud Services: Snowflake's cloud services layer coordinates activities across the platform, handling tasks like authentication, infrastructure management, metadata management, and access control. The platform takes care of data security and encryption and holds certifications like PCI DSS and HIPAA.

- Query Processing: Snowflake uses independent "virtual warehouses" for query execution. Each virtual warehouse is a separate compute cluster, which prevents competition for computing resources and ensures that warehouses don’t impact each other's performance.

- Database Storage: Snowflake databases can store structured and semi-structured data for processing and analysis. The platform takes care of managing every aspect of data storage such as organization, structure, file size, compression, metadata, and statistics. Access to this stored data is exclusively available through SQL query operations within Snowflake, ensuring security and control.

6 Key Features & Benefits Of Snowflake

What makes Snowflake popular are its unique features and the many benefits it provides. Some important ones are:

- Unique Architecture: Snowflake separates compute from storage, so users can scale each independently and pay only for what they use. Its multi-cloud approach and highly parallel design support efficient data processing and increase the reliability of the system.

- Data Type and Source Support: Snowflake can handle a variety of data types, including:

  - Structured data (e.g., SQL databases)

  - Semi-structured data (e.g., JSON, AVRO, or XML)

  - Unstructured data (e.g., images, text files)

  It integrates with various data sources, including SaaS applications, APIs, and data lakes.

- Scalability: Snowflake's architecture enables easy scaling for handling large datasets and sudden spikes in data volume.

- Performance: The platform's design allows for fast and efficient query execution.

- Ease of Use: Snowflake offers a user-friendly interface for creating, managing, and querying data.

- Security: Advanced security features like multi-factor authentication, encryption, and role-based access control are provided.

4 Popular Use Cases Of Snowflake

Snowflake is a versatile platform offering a range of services, which allows organizations to leverage its power in a variety of ways. Below are the 4 most important ones:

- Data warehousing: Snowflake is ideal for handling large amounts of structured and semi-structured data.

- Analytics: The platform's architecture and data support make it suitable for data visualization and machine learning applications.

- Data sharing: It offers built-in secure and efficient data sharing between departments or organizations.

- ETL processes: Users can easily extract data from different sources, transform it into the desired format, and load it into Snowflake for analysis.

Now that we understand Snowflake, its components, and its features, let’s take a deeper look into data ingestion and more specifically, real-time data ingestion to understand how Snowflake leverages it.

Understanding Real-Time Data Ingestion For Unlocking Actionable Insights

Data ingestion refers to the process of collecting large volumes of data from various types of sources and transferring them to a destination where they can be stored and analyzed. These target destinations may include databases, data warehouses, document stores, or data marts. Data ingestion often consolidates data from multiple sources at once, such as web scraping, spreadsheets, SaaS platforms, and in-house applications.

Now let’s talk about what real-time data ingestion is and why you need it.

Real-Time Data Ingestion

Real-time data ingestion is the process of collecting and processing data in real time or near real time. It is crucial for time-sensitive use cases where up-to-date information is essential for decision-making.

It captures data as it is generated and produces a continuous output stream, which makes it valuable for businesses across many industries.

Let’s look at a couple of use cases:

- In eCommerce and retail, real-time transactional data ingestion allows companies to accurately forecast demand, maintain just-in-time inventory, or adjust pricing more rapidly.

- In manufacturing, real-time analytical data ingestion can provide IoT sensor alerts and maintenance data, reducing factory floor downtime and optimizing production output.

Comparing Data Ingestion Methods: Batch Processing Vs. Real-time Ingestion

There are 2 primary types of data ingestion methods: real-time and batch-based.

Batch processing collects data over time and processes it all at once. This method is suitable for situations where data types and volumes are predictable long-term.

In contrast, real-time ingestion is vital for teams that need to handle and analyze data as it is produced, especially in time-sensitive scenarios.
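To make the trade-off concrete, here is a minimal sketch of the delay each approach adds. It assumes a deliberately simplified model that does not come from any particular tool: a batch job flushes at fixed intervals, so an event waits until the end of the window it falls in, while a streaming pipeline forwards each event after a small fixed delay. The function names and sample numbers are illustrative only.

```python
def batch_latencies(event_times, interval):
    """Seconds each event waits when data is flushed every `interval` seconds.

    An event arriving at time t is delivered at the end of its window,
    i.e. at the next multiple of `interval` strictly after t.
    """
    return [((t // interval) + 1) * interval - t for t in event_times]


def streaming_latencies(event_times, per_event_delay):
    """Seconds each event waits when it is forwarded as soon as it arrives."""
    return [per_event_delay for _ in event_times]


if __name__ == "__main__":
    events = [1, 12, 47, 59]  # arrival times in seconds (made-up sample)
    batch = batch_latencies(events, interval=60)
    stream = streaming_latencies(events, per_event_delay=0.5)
    print("batch:", batch, "average:", sum(batch) / len(batch))
    print("stream:", stream, "average:", sum(stream) / len(stream))
```

In this toy model the batch delay depends on where in the window an event lands, whereas the streaming delay stays constant regardless of arrival time.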

With a solid understanding of real-time data ingestion, it's time to see how you can ingest real-time data into Snowflake.

How Can You Load Real-Time Data into Snowflake? 3 Proven Methods

Several methods for real-time data ingestion into Snowflake cater to diverse use cases and requirements. Let’s look at 3 different approaches for ingesting real-time data into Snowflake.

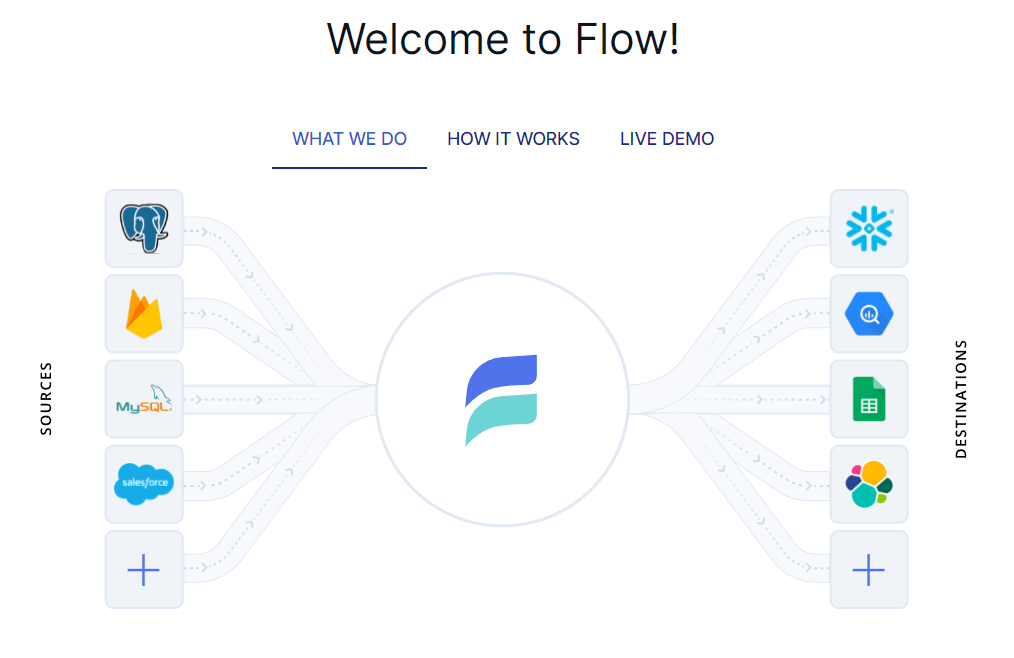

Estuary: A Powerful Tool for Loading Data into Snowflake

Estuary is a real-time data integration and streaming ETL platform that unifies data capture, transformation, and delivery in one place. Unlike traditional ETL or ELT tools that rely heavily on batch processing, Estuary is built on a streaming-first architecture designed to handle both continuous real-time ingestion and large backfills of historical data.

At its core, Estuary helps businesses eliminate the trade-off between real-time speed and cost efficiency. It provides:

- High throughput – handles pipelines ranging from hundreds of MB/s to multiple GB/s.

- Low latency – sub-second end-to-end delays using Snowpipe Streaming with Delta Updates.

- Scalability – elastic architecture that adjusts automatically as your workloads grow.

- Flexibility – supports SQL and NoSQL databases, SaaS APIs, event streams like Kafka, and cloud storage buckets as sources.

- Durability – all ingested data is persisted in cloud object storage, giving you replayability and time-travel.

For teams moving data into Snowflake, Estuary’s Snowflake materialization connector gives you the best of both worlds: an easy-to-use UI that hides Snowflake’s complexity while still letting you choose between ingestion methods — Bulk Copy, Snowpipe, or Snowpipe Streaming.

Why Use Estuary with Snowflake?

Snowflake’s native ingestion options come with trade-offs:

- Bulk Copy is reliable but only supports batch ingestion.

- Snowpipe automates loading but still delivers data with a lag of minutes.

- Snowpipe Streaming offers real-time speed but requires implementing a Java SDK and managing row-based writes, which can add engineering overhead.

Estuary removes these barriers. Its connector integrates seamlessly with Snowflake, allowing you to:

- Choose your ingestion method (Bulk Copy, Snowpipe, or Snowpipe Streaming) without writing code.

- Enable Delta Updates to reduce warehouse compute costs while still maintaining fast, deduplicated inserts.

- Manage both batch and real-time datasets in the same connector on a per-binding basis.

In short, Estuary gives you Snowflake’s fastest ingestion option — Snowpipe Streaming — without the complexity of implementing it yourself.

Setting Up Your Pipeline in Estuary

Once you have a Snowflake account, here’s how you can load real-time data with Estuary:

- Prepare Snowflake resources

- Use a simple SQL script (provided in Estuary docs) to create a warehouse, database, schema, and user.

- Generate a public/private RSA key pair with openssl. Add the public key to your Snowflake user, and upload the private key (rsa_key.p8) to Estuary for secure JWT authentication.

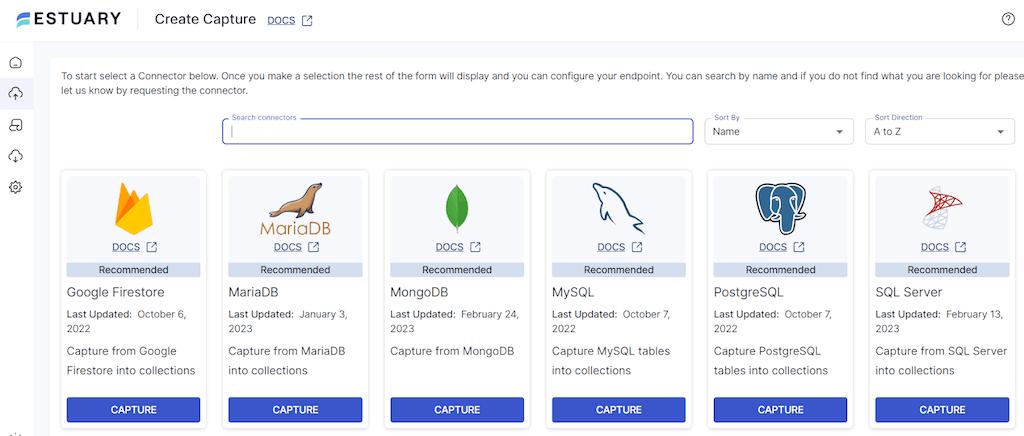

- Capture data from your source

- In the Estuary dashboard, go to Captures → New Capture.

- Connect to your data source (PostgreSQL, MongoDB, Kafka, SaaS, etc.), verify credentials, and select the collections you want to sync.

- Save and publish your capture.

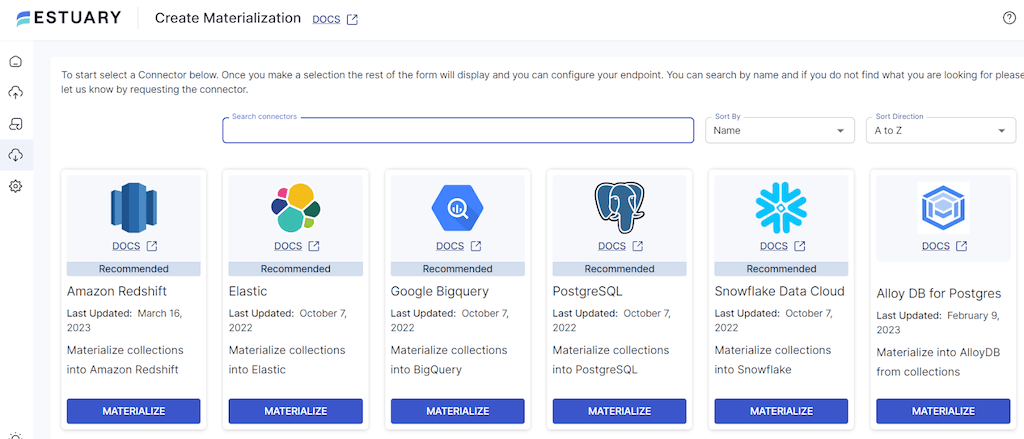

- Materialize into Snowflake

- Go to Destinations → New Materialization → Snowflake.

- Provide the Snowflake host URL, database, schema, warehouse, and role from step 1.

- Upload the private key file for authentication.

- Add the captured collections you want to sync, then save and publish.

- Customize your pipeline (optional)

- Adjust sync frequency if you prefer scheduled batch loads.

- Enable Snowpipe Streaming with Delta Updates for true real-time ingestion with lower compute usage.

- Mix batch and streaming pipelines in the same connector, depending on the dataset.

With that, Estuary will immediately start moving your data into Snowflake, optimizing for both performance and cost.

Refer to the technical documentation of the Snowflake connector and this guide to set up a basic data flow in Estuary before getting started.

Using Snowpipe to Load Data into Snowflake in Real-Time

If you’re looking to ingest data into Snowflake continuously, Snowpipe is one of the most popular options. Snowpipe enables loading data stored in files directly into Snowflake tables without having to run manual COPY INTO commands.

However, it’s important to note that Snowpipe ingests in micro-batches. This means data typically arrives with a delay of a few minutes rather than true millisecond-level streaming. For many analytics use cases this is sufficient, but if your workloads demand real-time streaming, consider Estuary with Snowpipe Streaming, which delivers lower latency without the engineering overhead.

Let’s see how Snowpipe works to get your data into Snowflake.

Step 1: Setting Up Your Stage

First, you’ll need to set up a stage for your data files. A stage is a storage location where Snowpipe can find your data. You can choose from Amazon S3, Google Cloud Storage, or Microsoft Azure as your stage location.

    -- Create an Amazon S3 stage
    CREATE STAGE my_s3_stage
      URL = 's3://my-bucket/path/'
      CREDENTIALS = (AWS_KEY_ID = 'my_key_id' AWS_SECRET_KEY = 'my_secret_key');

Step 2: Creating a Pipe

Next, you need to create a pipe. A pipe is a named object in Snowflake that holds a COPY statement. The COPY statement tells Snowpipe where to find your data (in the stage) and which target table to load it into.

    -- Create the target table
    CREATE TABLE my_target_table (data VARIANT);

    -- Create the pipe with a COPY statement
    CREATE PIPE my_pipe AS
      COPY INTO my_target_table (data)
      FROM (SELECT $1 FROM @my_s3_stage)
      FILE_FORMAT = (TYPE = 'JSON');

Step 3: Detecting Staged Files

Snowpipe needs to know when new files are available in your stage. You have two options here:

- Automate Snowpipe using cloud messaging services like Amazon S3 Event Notifications or Azure Event Grid to trigger notifications.

- Call Snowpipe REST endpoints directly from scripts or tools to notify Snowpipe of new files.
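For the second option, the sketch below builds (but does not send) a request to Snowpipe's `insertFiles` REST endpoint using only the Python standard library. The account identifier, pipe name, and file path are placeholders, and `build_insert_files_request` is a hypothetical helper name. The JWT is assumed to have been generated separately from your key pair, which is not shown here. Treat it as an illustration of the request shape and check the Snowpipe REST API reference before relying on it.

```python
import json
import uuid
from urllib.request import Request


def build_insert_files_request(account, pipe_name, file_paths, jwt_token):
    """Build (but do not send) a Snowpipe insertFiles request.

    account    -- account identifier used in the hostname (placeholder)
    pipe_name  -- fully qualified pipe name, e.g. "mydb.public.my_pipe"
    file_paths -- paths of staged files, relative to the stage location
    jwt_token  -- key-pair JWT generated elsewhere (not shown here)
    """
    url = (
        f"https://{account}.snowflakecomputing.com"
        f"/v1/data/pipes/{pipe_name}/insertFiles"
        f"?requestId={uuid.uuid4()}"
    )
    body = json.dumps({"files": [{"path": p} for p in file_paths]})
    return Request(
        url,
        data=body.encode("utf-8"),
        headers={
            "Authorization": f"Bearer {jwt_token}",
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_insert_files_request(
        "myorg-myaccount",
        "mydb.public.my_pipe",
        ["2024/01/events-001.json"],
        "<jwt>",
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # Sending it would be: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes the payload easy to inspect before any credentials are involved.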

Step 4: Continuous Data Loading

Once set up, Snowpipe will load data automatically in near real-time. As new files appear in your stage, Snowpipe ingests them into the target table according to the COPY statement.
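On the producer side, "new files appearing in your stage" usually means some process is grouping records into files. The following is a minimal sketch of that micro-batching step, assuming newline-delimited JSON files and a simple record-count threshold. `MicroBatchWriter` is a hypothetical class, files are written to a local directory, and the upload to S3, GCS, or Azure is left out. A production writer would typically also flush on a timer.

```python
import json
import os
import tempfile


class MicroBatchWriter:
    """Buffer records and write one newline-delimited JSON file per batch."""

    def __init__(self, out_dir, max_records):
        self.out_dir = out_dir
        self.max_records = max_records
        self.buffer = []
        self.files_written = []

    def add(self, record):
        """Queue a record and flush once the batch is full."""
        self.buffer.append(record)
        if len(self.buffer) >= self.max_records:
            self.flush()

    def flush(self):
        """Write buffered records to a new file; return its path or None."""
        if not self.buffer:
            return None
        name = f"batch-{len(self.files_written):05d}.json"
        path = os.path.join(self.out_dir, name)
        with open(path, "w", encoding="utf-8") as f:
            for record in self.buffer:
                f.write(json.dumps(record) + "\n")
        self.files_written.append(path)
        self.buffer = []
        return path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        writer = MicroBatchWriter(tmp, max_records=3)
        for i in range(7):
            writer.add({"event_id": i})
        writer.flush()  # write the final partial batch
        print([os.path.basename(p) for p in writer.files_written])
```

The batch size is the knob that trades latency for efficiency: smaller files reach the stage sooner, but many tiny files add per-file overhead.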

Step 5: Monitoring Your Data Ingestion

Finally, monitor your ingestion process by checking Snowpipe history and logs.

    -- Query the usage history of your pipe for the last 24 hours
    SELECT *
    FROM TABLE(INFORMATION_SCHEMA.PIPE_USAGE_HISTORY(
      DATE_RANGE_START => DATEADD('hour', -24, CURRENT_TIMESTAMP()),
      PIPE_NAME => 'my_pipe'))
    ORDER BY START_TIME DESC;

With Snowpipe, you get automated, low-maintenance ingestion that’s faster than batch copy but not truly real-time. For use cases where every millisecond counts, Estuary with Snowpipe Streaming is a stronger fit.

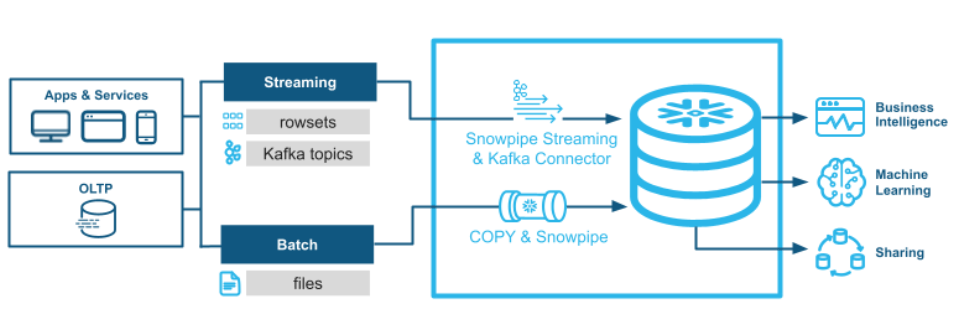

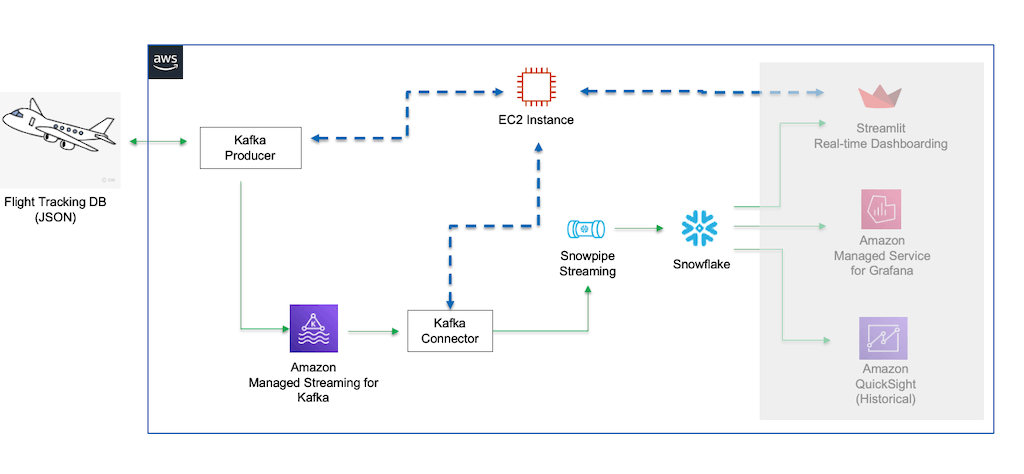

Ingesting Real-Time Data With Snowpipe Streaming API

Snowpipe Streaming is Snowflake’s newest ingestion method, designed to deliver millisecond-level latency by loading streaming data directly into Snowflake tables. Unlike Snowpipe, which ingests in micro-batches, Snowpipe Streaming writes rows as they arrive, making it ideal for high-frequency event data.

To use Snowpipe Streaming natively, you typically need to implement the Snowflake Ingest Java SDK or set up the Snowflake Kafka Connector. Here’s a simplified version of the process:

Step 1: Install Apache Kafka, Snowflake Kafka Connector & OpenJDK

- Install Apache Kafka locally as the streaming platform for ingestion.

- Download the Snowflake Kafka Connector to bridge Kafka and Snowflake.

- Ensure OpenJDK is installed to run Kafka.

Step 2: Configure Snowflake & Local Setup

- In Snowflake, create a database, schema, and a user with ingestion permissions.

- Set up key-pair authentication for that user, which the Kafka connector uses to connect to Snowflake.

- On the Kafka side, configure topics, partitions, and the Snowflake connector with account credentials and target details.
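As a rough illustration of the connector configuration, the sketch below assembles a Kafka Connect payload for the Snowflake sink connector with the ingestion method set to Snowpipe Streaming. The property keys follow the Snowflake Kafka connector's documented settings as best I know them. Every value (connector name, topic, account URL, user, role, database, schema) is a placeholder, and `build_connector_config` is a hypothetical helper. Verify the keys against the connector version you install.

```python
import json


def build_connector_config(name, topics, account_url, user, private_key,
                           database, schema, role):
    """Return a Kafka Connect payload for the Snowflake sink connector."""
    return {
        "name": name,
        "config": {
            "connector.class":
                "com.snowflake.kafka.connector.SnowflakeSinkConnector",
            "tasks.max": "1",
            "topics": ",".join(topics),
            # Selects Snowpipe Streaming instead of file-based Snowpipe.
            "snowflake.ingestion.method": "SNOWPIPE_STREAMING",
            "snowflake.url.name": account_url,
            "snowflake.user.name": user,
            "snowflake.private.key": private_key,
            "snowflake.role.name": role,
            "snowflake.database.name": database,
            "snowflake.schema.name": schema,
            "key.converter":
                "org.apache.kafka.connect.storage.StringConverter",
            "value.converter":
                "org.apache.kafka.connect.json.JsonConverter",
            "value.converter.schemas.enable": "false",
        },
    }


if __name__ == "__main__":
    payload = build_connector_config(
        name="snowflake-streaming-sink",
        topics=["events"],
        account_url="myorg-myaccount.snowflakecomputing.com:443",
        user="KAFKA_INGEST_USER",
        private_key="<private key contents>",
        database="MY_DB",
        schema="PUBLIC",
        role="KAFKA_INGEST_ROLE",
    )
    print(json.dumps(payload, indent=2))
```

When Kafka Connect runs in distributed mode, a payload like this is what you would POST to its `/connectors` REST endpoint. In standalone mode the same keys go into a properties file instead.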

Step 3: Start the Environment

- Launch Kafka and create a topic.

- Start the Snowflake Kafka Connector, which begins streaming messages from Kafka to Snowflake.

Step 4: Test the System

- Publish sample events to the Kafka topic.

- Verify that the connector ingests data into the Snowflake target table in real time.
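For the test step, you need some sample events to publish. This small sketch generates JSON lines with made-up fields (`event_id`, `user_id`, `action`). The event shape and the `sample_events` helper are assumptions for illustration, not something the connector requires.

```python
import json


def sample_events(count, start_id=1):
    """Return `count` JSON strings shaped like simple click events."""
    return [
        json.dumps({
            "event_id": start_id + i,
            "user_id": f"user-{(start_id + i) % 3}",
            "action": "click",
        })
        for i in range(count)
    ]


if __name__ == "__main__":
    # One JSON document per line, ready to pipe into a Kafka producer.
    for line in sample_events(5):
        print(line)
```

Saved as, say, `sample_events.py`, the output can be piped into Kafka's console producer, for example `python sample_events.py | bin/kafka-console-producer.sh --bootstrap-server localhost:9092 --topic events`, where the broker address and topic name are placeholders for your local setup.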

This setup provides the fastest ingestion path into Snowflake, but it comes with significant engineering overhead: maintaining Kafka, configuring Java-based connectors, and managing operational complexity.

👉 Simpler Alternative with Estuary

While Snowpipe Streaming typically requires implementing the Java SDK or Kafka connector, Estuary gives you access to Snowpipe Streaming under the hood without touching Java at all. With just a few clicks in the Estuary dashboard, you can enable Snowpipe Streaming with Delta Updates per collection binding and get true real-time ingestion into Snowflake, minus the setup burden.

Real-time Data Ingestion Examples With Snowflake

Let’s discuss in detail how various companies utilize Snowflake with real-time data.

Yamaha Corporation

Yamaha Corporation leverages Snowflake's multi-cluster shared data architecture and flexible scaling to handle real-time data. This eliminates resource bottlenecks, enables fresher data imports, and speeds up visualization rendering in Tableau.

Snowflake-powered machine learning models are helping Yamaha take data analytics to new heights. This is expected to open up exciting new avenues of data utilization for the company. For example, showing a dealer's likelihood to buy in Tableau can help the sales team spot revenue opportunities more easily.

Sainsbury's

Snowflake has streamlined Sainsbury’s data consolidation and data science workloads by providing a single source of truth (SSOT) across all its brands. Using Snowflake Streams and Tasks, Sainsbury's processes transaction and click-stream data in real-time. This has helped the company democratize data access and foster innovation.

Petco

Petco is a health and wellness company that specializes in improving the lives of pets. Snowflake’s data platform supports Petco by offering a scalable data pipeline for real-time data ingestion, processing, and utilization.

Petco's data engineering team has built an advanced real-time analytics platform based on Snowflake. This has helped simplify data warehouse administration for the company and free up resources for crucial tasks like democratizing data analytics.

AMN Healthcare

AMN Healthcare is a leading healthcare staffing solutions provider in the US. AMN Healthcare utilizes the Snowflake platform to quickly fulfill clients' ad-hoc reporting requests during critical moments in near-real-time.

Using Snowflake virtual warehouses, AMN Healthcare achieves a 99.9% pipeline success rate and a 75% reduction in data warehouse runtime. By writing over 100 GB of data and replicating 1,176 tables to Snowflake daily, AMN Healthcare consistently meets data replication service level agreements (SLAs).

Pizza Hut

Snowflake's near-real-time analytics allow Pizza Hut to make swift decisions during high-impact events like the Super Bowl. The platform’s instant elasticity supports virtually unlimited computing power for any number of users, while the separation of storage and compute offers performance stability and cost visibility.

Pizza Hut also uses the Snowflake Data Marketplace for easy access to weather and geolocation data, and Snowflake Secure Data Sharing for direct data access from partners.

Conclusion

Real-time data ingestion into Snowflake is critical for organizations that want to act on insights as soon as data is generated. Snowflake provides multiple options — but each comes with trade-offs:

- Bulk Copy / Snowpipe: Great for scheduled or micro-batch loading, but not truly real-time.

- Snowpipe Streaming: Delivers millisecond-level ingestion, but requires implementing a Java SDK or Kafka connector, which adds significant engineering overhead.

Estuary bridges the gap. It gives you the flexibility to use Bulk Copy, Snowpipe, or Snowpipe Streaming — without writing custom code or managing complex integrations. You can scale from historical backfills to high-throughput real-time pipelines, adjust sync schedules, or enable Delta Updates for faster, more cost-efficient loads.

Whether you’re handling transactional data, IoT streams, or SaaS analytics, Estuary offers a durable, scalable, and low-code way to get your data into Snowflake.

With Estuary, you don’t have to choose between speed, simplicity, and cost-efficiency — you get them all in one platform. Sign up for free at Estuary and start streaming your data into Snowflake today.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.