How Does Query Processing Happen in Snowflake

Architecture, processing, and best practices... Looking to become a better data professional by understanding Snowflake query processing? Start here.

Overview of Snowflake and query processing

If you’re asking yourself, “How does query processing happen in Snowflake?” You’ve come to the right place.

Let’s start with a summary of Snowflake: It’s a self-managed, cloud-based tool used for processing, warehousing & analyzing large amounts of data. Snowflake follows ANSI SQL standards and has a hybrid architecture (more on this later) to handle query processing. They self-identify as a “data platform as a self-managed service.” Flexibility and ease of use are their most important offerings to data teams. As of 2026, Snowflake describes itself as a full data platform, supporting not just SQL analytics but also Python, Spark, and AI/ML workloads within the same compute engine. We’ll dive further into exactly how they support those goals through their approach to query processing.

Importance of understanding query processing in Snowflake

Understanding query processing is important for a number of reasons. Fundamentally, you’ll become a better data professional for it. It offers greater visibility into the entire lifecycle of the data you’re working with. This will help you understand where to go next in the midst of troubleshooting performance issues.

If you’re comfortable with SQL fundamentals and want to upskill, this is your next step. You’ll also be able to make more informed decisions about schema and database design. These topics are the bread and butter of data, and essential to know for professional development.

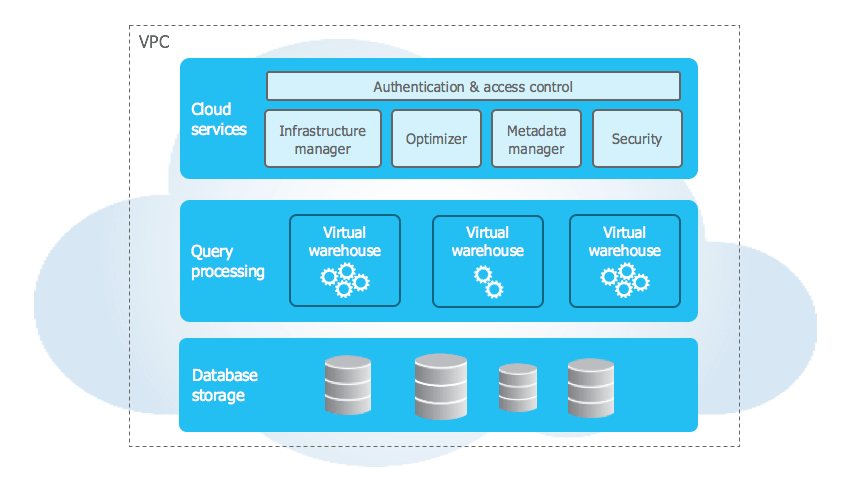

Architecture of Snowflake

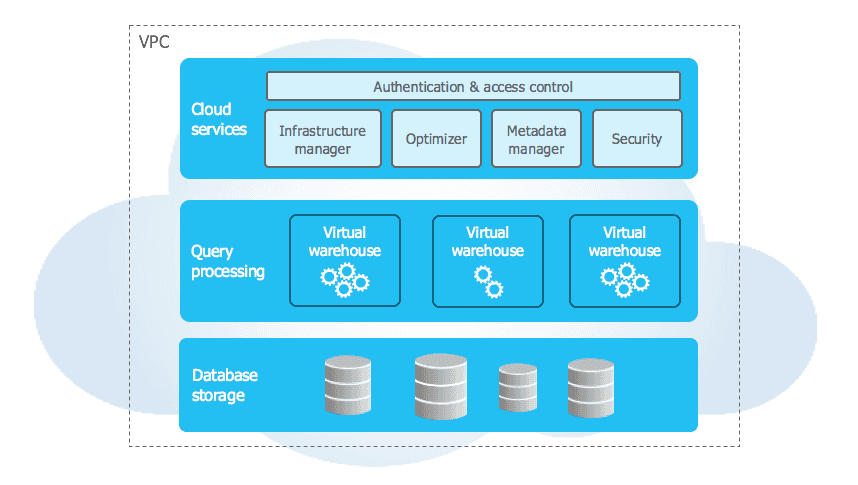

Snowflake’s architecture is a unique hybrid of traditional shared-disk and shared-nothing architecture. It resembles shared-disk because all persisted data is stored in a central repository that all compute nodes can access. It resembles shared-nothing because it processes queries in a way that each node within a particular cluster stores a portion of the data locally. Each update request is handled by a single node, which eliminates contention between them.

See the image above, courtesy of Snowflake. At a more granular level, it consists of key layers: database storage, query processing, and cloud services. When data is persisted into Snowflake, it reorganizes it at the database storage level in a way that is optimized for complex query processing. Queries are then processed in virtual warehouses (i.e compute nodes). These compute cluster sizes are defined by the user when they are initially created, but are capable of auto-scaling, and auto-resuming. The cloud services layer is responsible for handling authentication, access control, query parsing and optimization, and more.

Multi-cluster shared data architecture

A multi-cluster architecture means that Snowflake distributes compute resources across multiple clusters. This enables users to concurrently process and query data across different resources. This is especially useful during peak business hours, and handling high user volumes. For example, this would allow you to have Airflow orchestrating tables updates into Snowflake, analysts querying data, and a team of Engineers validating data in a dev environment simultaneously with no performance issues. This means you can allocate dedicated compute resources for each of these scenarios, making consistent and predictable performance achievable. The rest of this article will dive a little further into exactly how that is done.

Separation of compute and storage

Snowflake is able to offer this to users because their compute and storage are separate. In typical warehousing systems, processing power and storage capacity are tightly coupled. There are a number of benefits from having the two separate.

First, this allows you to spin up multiple virtual warehouses at a time. For example, your analytics team would be querying data using one warehouse, and your engineering team would be querying data using a separate warehouse. You can adjust these compute resources separately to best complement various workload requirements. This flexibility is also cost-effective because you’re only paying for the resources you need.

Snowflake's virtual warehouses

Virtual warehouses are an important part of Snowflake’s architecture. A virtual warehouse is a compute resource that allows you to work with the data you want.

Snowflake offers different sizes of warehouses that you can choose from based on the workload requirements. The sizes correspond to the amount of CPU and memory you need in each particular warehouse. For example, your analytics and engineering teams likely have different requirements, and therefore need different virtual warehouses to be assigned to them. You can scale each warehouse up and down as needed, and enable auto-suspend and auto-resume policies as mentioned earlier. This ensures each warehouse is paused when not in use, and resumes when required without manual intervention.

As your organization continues to scale, you can also consider enabling Snowflake’s Multi-Cluster Warehouse (MCW) feature. This allows you to group multiple virtual warehouses together under a single name, which can be easier to manage at scale.

Query processing in Snowflake

Steps in query processing

As discussed earlier, Snowflake’s query processing layer is separate from the disk storage layer. At a high level, here are the steps of query processing in Snowflake:

- A SQL query is submitted by a user either in the Snowflake UI (via Worksheets), a third-party tool with appropriate user access permissions (dbt, Azure, PowerBI, etc), or with SnowSQL.

- Snowflake then validates the query’s syntax. If it passes without error, it’s converted into a different representation that is further processed by Snowflake downstream.

- The query is then optimized, taking into account the table size, available system resources, data distribution and complexity, among other considerations.

- Snowflake retrieves metadata about the table schema, and is then used to further optimize the given query.

- Snowflake then considers what compute resources are necessary (and what is available) to execute the query. These virtual warehouses are autoscaled for this reason.

- The query is then executed against the data in question, and made available to the requesting user.

- Snowflake then collects data on the query performance and metrics. This information helps to optimize following queries, and identify potential bottleneck performance issues.

- The query & its output are stored (i.e. cached), which is accessible to users if they need to rerun it.

Dynamic Tables: Incremental Query Processing

Dynamic Tables are a declarative, SQL-based approach to building incrementally refreshed materialized results, and they have become one of the most important query-processing concepts in Snowflake since going generally available in 2024.

Unlike a regular view (which reruns its query every time it is called) or a standard table (which requires you to manually schedule updates), a Dynamic Table automatically refreshes its results when upstream data changes. You define the query once; Snowflake handles the incremental refresh logic behind the scenes.

This makes Dynamic Tables a native alternative to orchestrating incremental loads with Airflow DAGs or dbt scheduled runs. Snowflake computes only the changed rows, not the full dataset, which significantly reduces compute cost on large tables. Dynamic Tables now support filtering by current time and date for incremental refresh, making time-windowed pipelines easier to build without external tooling.

When to use Dynamic Tables vs. Snowpipe Streaming: if your use case requires sub-minute data freshness (e.g., live dashboards ingesting from Kafka), Snowpipe Streaming is the right tool. If you need refreshed aggregations or transformed datasets and can tolerate refresh intervals of a minute or more, Dynamic Tables are simpler to maintain and cheaper to operate.

Parsing and validating queries

Parsing and validating queries is an important step of how Snowflake handles data processing.

Snowflake’s query parser verifies the given query adheres to ANSI SQL standard, checks availability of the database objects and resources in question, searches for any defined constraints, and more.

If no errors occur, it turns that given SQL query into a data structure called an abstract syntax tree (AST). Essentially, it becomes a version that’s functionally equivalent to what the user inputted, but is better optimized for Snowflake to use. If errors are found, the parser outputs a detailed error code for the user to resolve the issue manually before trying again.

Query optimization

One of the main goals of the Snowflake parser is to generate cost- and performance-efficient execution plans for queries. Once the query is parsed and validated, the parser generates a number of possible execution plans and chooses the most cost-efficient one.

Snowflake offers a query-optimizing tool for enterprise accounts, which continuously monitors query performance, and adjusts execution plans based on their runtime results. It also offers tools for enterprise-level clients like search optimization and acceleration services that can aid in this process.

Execution and caching

Snowflake caches queries, which allows previously-run queries to execute without needing to re-computing the data. If you’re running a particular query often, this is helpful for performance and cost.

When a query is executed, the resulting cache is in-memory, meaning that it lives in the virtual warehouse it was executed in. Before running any given query, Snowflake checks to see if a fingerprint (i.e. a hash value) already exists for said query. If a match exists, Snowflake will return the results from cache. If it does not exist, a new fingerprint is created and stored. When the cache limit is met, the oldest fingerprints are dropped. This feature assists users with cost-management because it avoids reusing resources where not required.

Beyond caching, Snowflake's execution engine is built on a columnar, vectorized processing model. Rather than reading data row by row, Snowflake stores and processes data in columnar format, which means it reads only the columns a query actually needs. This provides faster reads, better compression, and more efficient scanning. The engine processes data in batches of thousands of column values at a time, known as vectorized execution, which makes CPU cache usage more efficient and enables modern CPU SIMD instructions. The result is a massively parallel, low-latency execution model that handles large datasets without proportionally scaling compute costs.

Optimizing query performance in Snowflake

Best practices for designing tables and schemas

Following best practices around tables and schemas helps support data integrity, and performance. Here are some tips to consider while designing your own resources:

Using appropriate data types and file formats

When you create a table or view in Snowflake, you must assign a particular data type for each column. Snowflake supports a wide variety of types including boolean, integer, timestamp, varchar, etc. Varchar is known as the “catch-all” type because it can hold numbers, letters, and special characters. Although it may be tempting to assign each column to this type because it’s easier, it may require more storage, impact your performance, or be inappropriate in the context of stakeholder needs or downstream application dependencies.

Optimizing query syntax

Beyond using correct data and file types, there are a couple of different ways to further optimize queries. Arguably, designing your Snowflake resources in a way that expects increased data and complexity is the most important.

You should consider how often you expect the table to be queried against, how the table is updated and at what interval, retention policies (i.e. Time Travel and Fail Safe featuring), and more. If you don’t consider the future while designing these resources, problems, and needs are often unacknowledged until an issue appears in production.

There are a number of ways to load data into Snowflake, and each of them depends on your use case. For example, if you have a complex, high-volume table that needs to be accessible for near real-time analytics, batch loading this data may not be the most suitable option. Instead, it may be wise to consider streaming the data with Snowpipe, a managed service, or a combination of open-source tooling.

You can also improve query processing time with complex or large data by implementing compression on the data where appropriate. For example, columnar compression on a high-cardinality column like a shopping cart ID or user ID can significantly improve query performance.

Using appropriate virtual warehouses

Choosing the correct virtual warehouse size for your use case depends on a number of factors we’ve discussed such as volume of data, performance requirements, and overall complexity. Warehouse sizes are abstracted as t-shirt sizes, but just mean how many compute nodes are available. The most important part of choosing a warehouse size comes down to understanding your requirements, and continuously monitoring how it performs at a given scale.

Automatic Indexing and Query Acceleration

Scaling your warehouse up is no longer the only or even the first lever to pull when queries slow down. Snowflake now automatically builds and uses indexes behind the scenes to speed up point lookups for specific columns or keys, at no additional charge. Snowflake covers all build and maintenance costs, and there is nothing for you to configure or manage.

The way it works: Snowflake's warehouse analyzes your query workload, identifies queries that are doing highly selective lookups (e.g., fetching a single customer record by ID), and automatically creates and maintains the appropriate index. You do not need to declare indexes at table creation time or manage them as your data grows.

You can monitor the impact of automatic indexing via the Performance Explorer Dashboard in Snowsight, or programmatically via the snowflake.account_usage.query_insight view. If you're troubleshooting a slow point-lookup query, check this view before reaching for a larger warehouse — the index may already be in place, or building.

AI-Powered Query Processing with Cortex

One of the most significant shifts in Snowflake is the native integration of AI directly into the query processing layer. Rather than requiring external ML infrastructure or data movement, Snowflake now allows you to run AI-powered operations inside standard SQL queries through a suite of tools collectively called Snowflake Cortex.

Cortex Analyst: Natural Language to SQL

Cortex Analyst is a fully managed, LLM-powered feature that allows business users to ask questions about structured data in plain English, which are then translated into SQL and executed against your Snowflake tables. For data engineers, this matters because it shifts the query interface for non-technical stakeholders — reducing the volume of ad hoc SQL requests routed to the data team.

Cortex AI Functions in SQL

Cortex AI Functions bring AI capabilities directly into Snowflake's SQL engine, allowing teams to build AI pipelines over structured and unstructured data using standard SQL commands. These functions run entirely inside Snowflake — no external APIs, no data movement:

- AI_FILTER: evaluates a plain-language yes/no question against text or image input, usable in WHERE and JOIN clauses

- AI_CLASSIFY: assigns text or images into user-defined categories based on plain-language definitions

- AI_AGG: aggregates a text column and returns insights across multiple rows based on a custom prompt, without context window limitations

- AI_EXTRACT: extracts structured information from text, documents, and images

- AI_TRANSCRIBE: transcribes audio and video files stored in a Snowflake stage

These functions reached general availability in November 2025 and are now production-grade for enterprise workloads.

Cortex AI Functions Inside Dynamic Tables

The most powerful application of Cortex AI Functions for data engineers is their integration with Dynamic Tables. You can now use Cortex AI Functions in the SELECT clause of a Dynamic Table in incremental refresh mode. This means AI-powered transformations, like classifying incoming support tickets, scoring customer sentiment from reviews, or extracting entities from documents, run automatically as new rows arrive, without any external orchestration. The AI logic is defined once in the table definition, and Snowflake handles the rest incrementally.

Monitoring and troubleshooting queries

Query history and profile

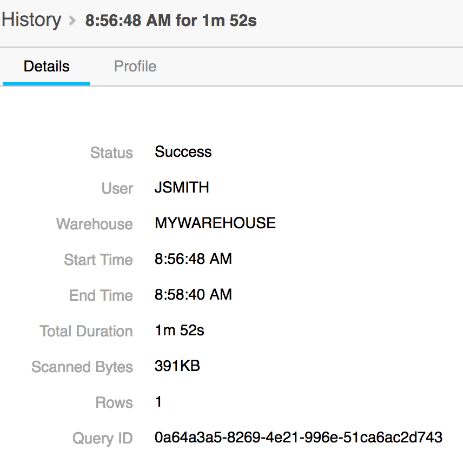

Snowflake stores information about the queries you run, such as start and end times, and how much compute resource was required to execute them. This data is accessible to users and is important for performance monitoring schemas and warehouses.

Snowflake offers a tool called the Query Profile, which helps you understand what’s happening “underneath the hood.” It helps you identify mistakes in your SQL queries, but also any compute bottlenecks that may be affecting performance downstream. You can easily access this tool on the Snowflake UI by clicking on any given query ID. In the photo below courtesy of Snowflake, you’ll see the details tab as mentioned.

Query performance metrics

Query compilation time, CPU and memory usage, queue time, concurrency, and volume of data processed are some examples of query performance metrics that Snowflake tracks.

Identifying and Resolving Query Issues

Identifying and resolving performance bottlenecks can be simplified with continued observation over time. For example, let's say you have a SQL query with multiple sub-joins. You're querying against multiple tables that are small right now, but are expected to grow over time. You can observe the query compilation time over time to help you identify what, if anything, needs to be changed.

If over six months you observe performance metrics for a table have significantly worsened, the quickest approach is to increase the size of your warehouse. The long-term approach is to refactor your SQL query and look into other performance tools Snowflake already offers.

Where Snowflake Query Processing Is Heading

Rather than speculating, it is more useful to look at what Snowflake has already shipped in 2025 and 2026 to understand the direction of the platform.

Task scheduling now supports intervals as short as every 10 seconds, down from the previous 1-minute minimum. This opens the door to streaming-like behavior—refreshing small, targeted datasets at near-real-time frequency—without needing to deploy Kafka, Spark, or external orchestrators. For teams that need just enough real-time without the overhead of true stream processing, this is a meaningful option.

Snowflake Scripting now supports asynchronous job execution, meaning stored procedures or SQL blocks can be run in parallel within a single session using the ASYNC keyword. This eliminates the need for an external orchestrator like Airflow just to parallelize steps in a data pipeline.

Apache Iceberg table support has matured significantly, with bi-directional data access, external query engine support, and write capabilities now generally available. This positions Snowflake as a viable hub for open lakehouse architectures where compute is decoupled from the table format itself.

Taken together, the trajectory is clear: Snowflake is moving from a warehouse you query into a platform where pipelines, AI transformations, and analytics all run within the same governed compute layer.

Conclusion

Snowflake is a self-managed data warehousing tool comprised of a hybrid, three-tier architecture. It has a multi-clustered infrastructure that offers customization and cost-effectiveness for users. They also offer a number of resources to help data professionals understand query performance to better resolve performance bottlenecks.

Importance of query processing in Snowflake for data professionals

Understanding how query processing happens in Snowflake is important to understanding the end-to-end lifecycle of the data you’re working with, developing yourself professionally, and best supporting stakeholders.

Want to learn more about Snowflake?

Author