Organizations often rely on multiple data storage and processing solutions to meet their diverse business needs. Amazon S3 and PostgreSQL are two popular choices that are frequently used for storing data. While S3 provides scalable and cost-effective object storage, PostgreSQL offers powerful querying capabilities. So, when you copy data from S3 to Postgres, it ensures seamless data integration, enabling advanced analytics and reporting. It also serves as a backup mechanism in the event of data loss or disaster impacting S3.

What is S3?

Amazon S3 (Simple Storage Service) is a cloud storage service provided by Amazon Web Services (AWS). You can store an unlimited amount of data in Amazon S3, and you pay only for what you use.

S3 uses an object storage model, where data is stored as objects rather than blocks. An object has three main components—the object’s content or the data, the unique object ID, and metadata. Objects are stored in buckets in Amazon S3 storage, and you can store an unlimited number of objects in a bucket. There is a 5 TB limitation on the size of the object that can be stored in the bucket.

Here are some key features of Amazon S3 that make it a highly versatile and reliable cloud storage solution:

- Versioning: S3 supports versioning, which you can use to preserve, restore, and retrieve previous versions of objects. Versioning is especially useful for maintaining data integrity, auditing, and compliance. It helps recover the data in case of any accidental deletions or overwrites.

- Storage Classes: S3 storage classes define the purpose or the class of storage. You can set the storage class at the object level or set the default storage class for objects created at the bucket level. Some examples of storage classes are S3 Standard, S3 Glacier, S3 Standard-IA, and S3 Intelligent Tiering. The classes are mostly defined based on the performance requirements for read and write operations.

- Security and Access Controls: Amazon S3 offers multiple security features for data protection. You can specify permissions for different AWS accounts or users to control access at the bucket and object levels. With AWS IAM, you can control who is authenticated and authorized to use resources.

What is Postgres?

PostgreSQL, also known as Postgres, is an open-source, relational database management system (RDBMS). It supports both SQL (relational) and JSON (non-relational) querying, making it among the most stable, compliant, and mature relational databases available.

Apart from being free and open-source, Postgres is highly extensible. You can build custom functions, define your own data types, and even write code with different programming languages.

Here are some of the key features of PostgreSQL that make it a popular choice for many applications:

- ACID Compliance: Postgres is ACID (Atomicity, Consistency, Isolation, Durability) compliant and ensures reliable processing of database transactions. It maintains data integrity by ensuring transactions are either fully completed or rolled back in case of failures.

- Multi-Version Concurrency Control (MVCC): PostgreSQL employs MVCC to manage concurrent access to data. With MVCC, multiple transactions can read and write data simultaneously without blocking each other. Each transaction will see a consistent snapshot of the data and avoid locking issues.

- Advanced Data Types and Features: Postgres offers a range of built-in and extended data types beyond the traditional numeric and string types. It supports arrays, JSON/JSONB data, XML, geometric types, and more. Postgres also provides specialized indexing options like B-tree, GiST, and GIN to optimize performance.

- Security and Authentication: PostgreSQL provides robust security features for data protection and ensures authorized access. It includes various authentication mechanisms like SSPI, SCRAM-SHA-256, and GSSAPI for secure connections. Postgres also offers features like row- and column-level security for additional data protection.

Methods to Replicate Data From S3 to Postgres

To copy from S3 to Postgres, you can use one of the following methods:

- Method #1: Using Python

- Method #2: Using AWS DMS

- Method #3: Using SaaS Alternatives like Estuary

Method #1: Use Python to Load Data from S3 to Postgres

You can develop custom scripts using Python to load data from S3 to Postgres. While this method offers a customizable solution, it isn’t among the easiest to implement.

You can utilize the boto3 library, the official AWS SDK for Python, to interact with S3. After importing the package, use the client function as shown below:

plaintextimport boto3

s3 = boto3.client(“s3”)This code will create an S3 class.

Next, you can use the download_file() function to download a file from an S3 bucket and immediately save it.

plaintexts3.dowload_file(

Bucket=”sample-bucket-1504”, Key=”data_copy.csv”, Filename=”data/downloaded_from_s3.csv”

)The different parameters of the download_file() function include:

- Bucket: The name of the S3 bucket from where you want to replicate data.

- Key: The name of the S3 object or file that you want to download.

- Filename: The name of the file that will save the data from the downloaded file.

Once the data is obtained, you can use Python’s data manipulation libraries, like pandas or NumPy, for transformation. You can perform data preprocessing, filtering, or formatting through such libraries before moving the data to PostgreSQL.

You can use Python libraries like psycopg2 or SQLAlchemy for connecting with Postgres. These libraries provide functions and methods to establish a connection, execute SQL queries, and insert the transformed data into PostgreSQL tables.

If you’d like to schedule the execution of the replication process for continuous synchronization, you can incorporate the replication code into a script.

Method #2: Using AWS DMS to Replicate S3 Data to Postgres

AWS Database Migration Service (AWS DMS) is a fully-managed service that simplifies the process of migrating data between different databases, including from S3 to Postgres.

You can replicate S3 data with AWS DMS using two different methods—AWS DMS Management Console or AWS CLI/AWS DMS API.

When using AWS DMS to migrate data from an Amazon S3 bucket, first provide access to the bucket containing the data files you want to transfer. The S3 bucket must also include an external table definition as a JSON file to describe how AWS DMS must interpret the data from S3. To use S3 as an AWS DMS source, you must ensure your S3 bucket is in the same AWS region as the DMS replication instance. And the AWS account you use for the migration must have read access to the source bucket.

While you can use a PostgreSQL database as an AWS DMS target to replicate your S3 data, there are some things to keep in mind. AWS DMS only supports PostgreSQL versions 9.4 and higher, 10.x, 11.x, 12.x, 13.x, and 14.x. And for security purposes, the user account used for data migration must be a registered user in the PostgreSQL database.

Method #3: Use SaaS Alternatives like Estuary to Copy from S3 to Postgres

Estuary offers real-time integration capabilities between S3 and PostgreSQL. The data replication process is simplified with visual interfaces, automation features, and pre-built connectors. Flow offers a simple and efficient way to set up and manage the data replication process in real time.

Here are the steps involved in using Flow for replicating your S3 data to Postgres.

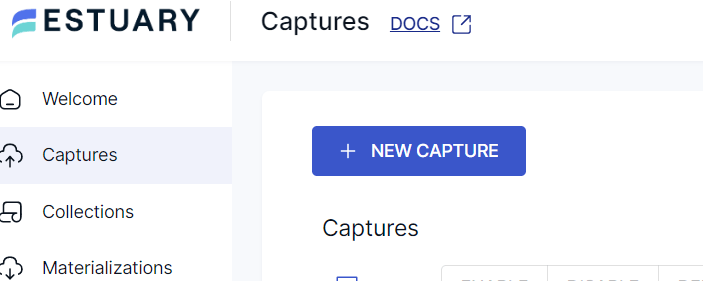

Step 1: Capture S3 Data

To use Flow for replicating data from S3 to Postgres, you’ll need an Estuary account. If you don’t already have an account, register for a free account. And if you have one, log in to your account.

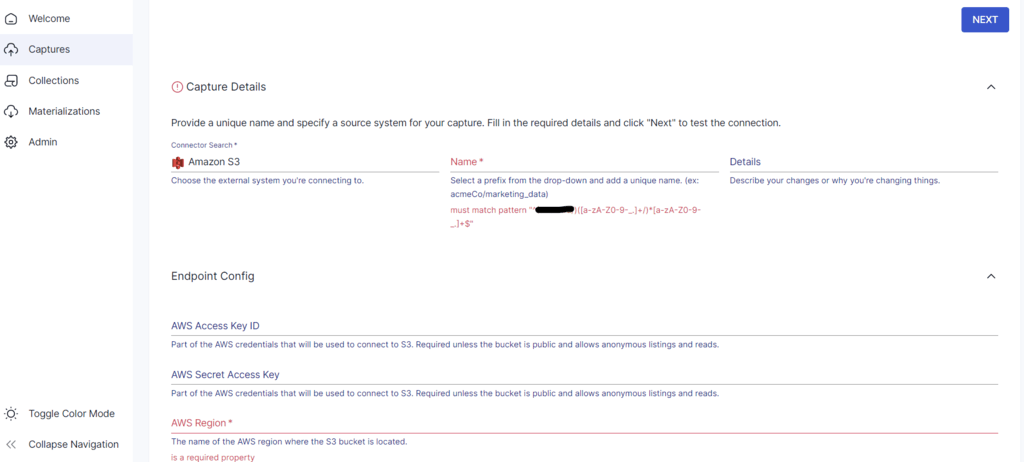

After you’ve logged in, you’ll be redirected to the Estuary dashboard. Click on Captures and then click on the New Capture button.

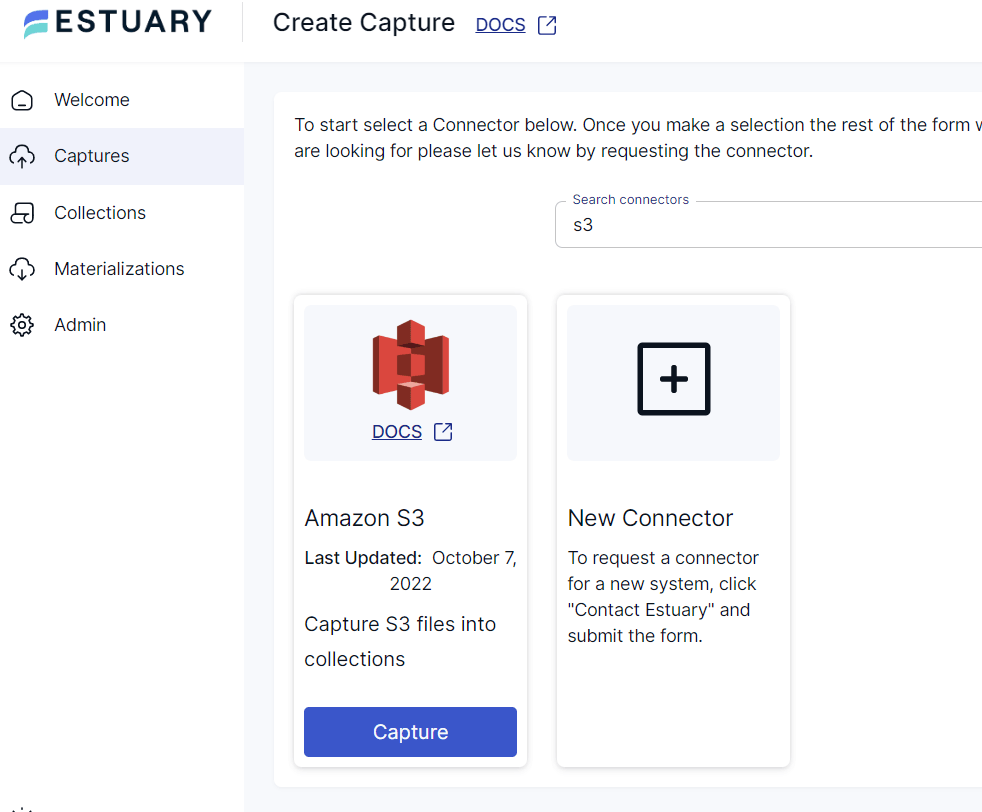

In the Search Connectors box, search for S3.

Click on the Capture button of the Amazon S3 connector option. This will redirect you to the Create Capture page of the S3 connector. You can use the connector to capture data from an S3 bucket, provided it is publicly accessible and allows anonymous reads or the S3 bucket must be accessible via a root or IAM user. Fill in the required fields like a Name for the connector, the AWS Region location of the S3 bucket, and the name of the S3 bucket.

After providing the details, click on the Next button. Flow connects to your S3 bucket and detects all the data sources. Then, click on Save and Publish.

Step 2: Set up Postgres as the Destination

Before you continue, make sure your Postgres instance is ready to receive data from Flow. See the prerequisites.

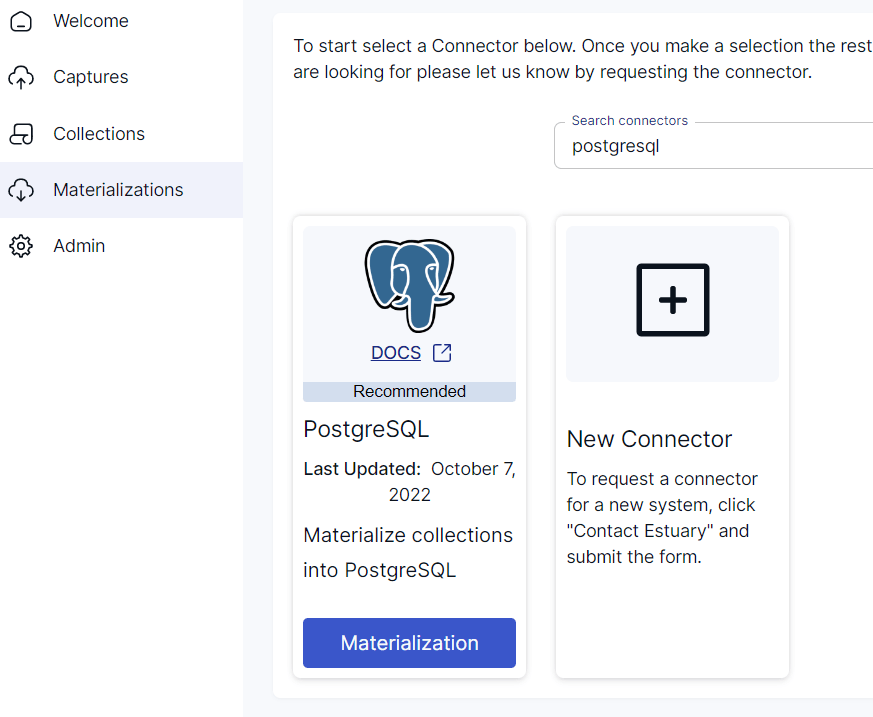

You can set up Postgres as your data destination by clicking on Materialize Connections in the pop-up that follows after a successful capture. Alternatively, you can navigate to the Estuary dashboard and click on Materializations. Then, click on the New Materialization button.

Search for PostgreSQL in the Search Connectors box. You’ll see the PostgreSQL connector option in the search results. Click on the Materialization button to proceed with setting up your data destination.

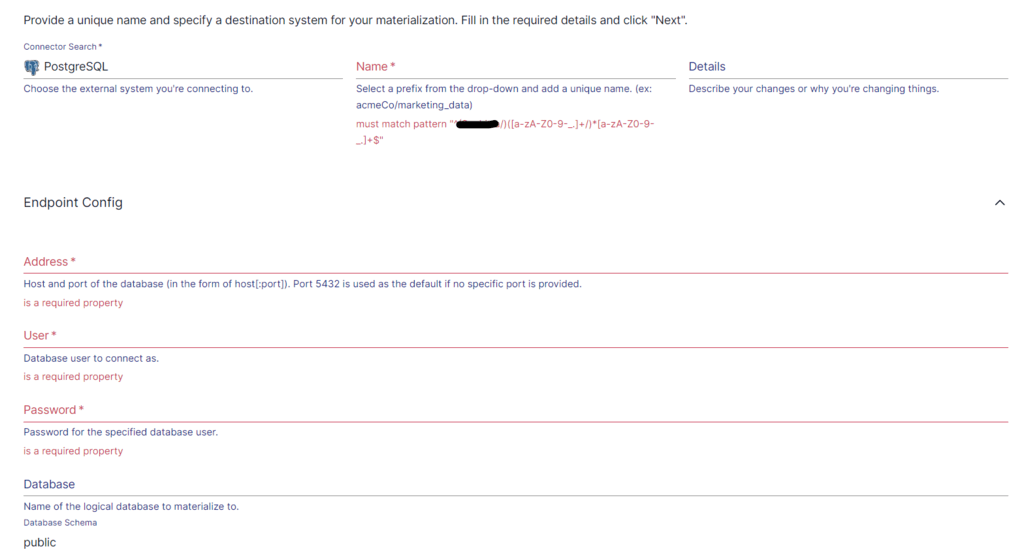

On the Postgres materialization connector page, you must fill in the required details; apart from a Name for the connector, Endpoint Config details like Username, Password, and Address of the database must be provided.

Now, click on Next.

Use the Collection Selector to choose the data collections captured from S3, if not automatically selected.

Then, click on Save and Publish. The connector will create new tables in your database based on your specifications.

If you’d like more information on the process, here’s the Estuary documentation to help out:

Unlock the power of real-time data replication from S3 to Postgres with Estuary. Register for free and start building your first pipeline today, or contact us for support with enterprise solutions.

Conclusion

Copying from Amazon S3 to PostgreSQL offers several benefits, including enhanced querying capabilities, data consistency, and data backup. When you combine the durability and scalability of S3 with the relational database features of PostgreSQL, you can unlock the full potential of your data.

While you can use Python to load data from S3 to Postgres, it has certain drawbacks, including development complexity, maintenance, scalability, and monitoring. On the other hand, using AWS DMS involves the complexity of initial setup, limited transformation capabilities, and network bandwidth. Estuary overcomes these challenges by automating the replication of S3 to Postgres in real time. With readily available connectors and an easy-to-use interface, it will only take a few minutes to set up and start loading data from S3 to Postgres.

Estuary supports multiple sources and destinations for data transfers. Register for an account and try your first pipeline for free!

About the author

With over 15 years in data engineering, a seasoned expert in driving growth for early-stage data companies, focusing on strategies that attract customers and users. Extensive writing provides insights to help companies scale efficiently and effectively in an evolving data landscape.