The amount of data being generated and stored has grown exponentially over the last decade; it has even been estimated that 90% of all data ever created was generated in the last 2 years.

Not only does more data exist than ever before, smart companies are aggressively spending to store and capitalize on it. As an interesting proxy for this, consider that AWS (the most popular cloud platform, and home to data warehouses such as Redshift) has roughly tripled its revenue in 3 years, from ~$12 billion in 2016 to ~$35 billion in 2019.

Recent developments in cloud computing give every company access to data science that is orders of magnitude more scalable and accessible. For the first time, we have the tools to sift through the exploding scale of data.

How we got here

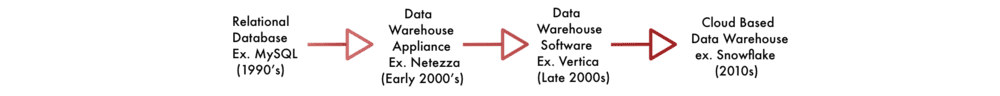

Technology has adapted to the ever-increasing onslaught of data and, more recently, has begun to meet the challenge of big data.

The trend started with companies buying bigger machines to scale vertically. Eventually they hit limits, and growing data volumes favored a fully horizontal model, in which a larger number of machines is preferred over larger machines. This has since been abstracted one step further: companies now use “virtual machines” instead of physical machines that need maintenance, so they pay only for the time and machines used to answer their questions when they’re asked.

Today, we have access to true on-demand, horizontally scaling computing resources that can divide our most complex queries into their smallest parts and return answers quickly. It works so well that product misses by Amazon’s Redshift and Google’s usual difficulty commercializing its products (BigQuery) opened the door for Snowflake to become a $12 billion company in just 8 years.
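
As a rough illustration of what "dividing a query into its smallest parts" means, the sketch below splits a sum into slices, computes a partial result for each slice on a pool of workers, and then combines the partials. The function names, the worker count, and the use of local threads are all assumptions made for illustration; a real warehouse spreads this work across many machines rather than threads in one process.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(rows, n_parts):
    """Split rows into n_parts roughly equal slices (hypothetical helper)."""
    return [rows[i::n_parts] for i in range(n_parts)]


def partial_sum(rows):
    """Work done independently by one worker on its own slice."""
    return sum(rows)


def distributed_sum(rows, n_workers=4):
    """Scatter the slices to workers, then combine the partial results."""
    parts = partition(rows, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(partial_sum, parts))
    return sum(partials)


order_totals = list(range(1, 1001))  # made-up data
total = distributed_sum(order_totals)
```

Because each slice is summed independently, adding workers (or machines) shortens the wait without changing the answer, which is the property horizontal scaling relies on.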

The data stack everyone should use

Cloud data warehouses (CDWs) have made it (relatively) affordable to answer any business or even engineering question you could have, provided your data is in one place and in a format that can be queried. But CDWs don’t provide any value unless your team has the tools to build queries on them collaboratively. For the first time, all of this has come together and data scientists are truly empowered to dig in, but how?

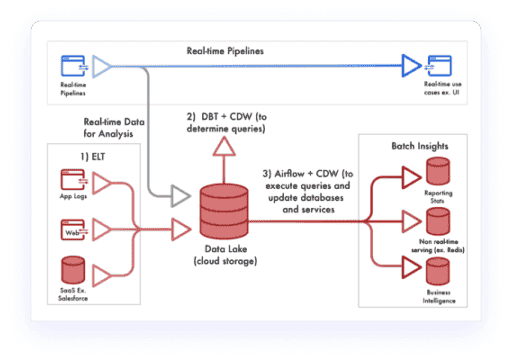

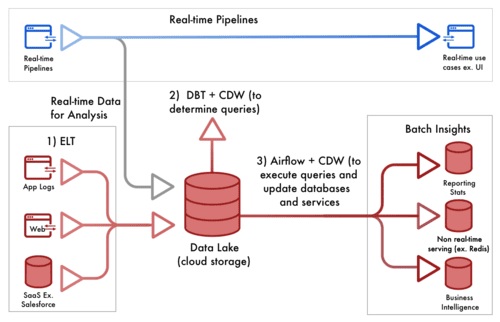

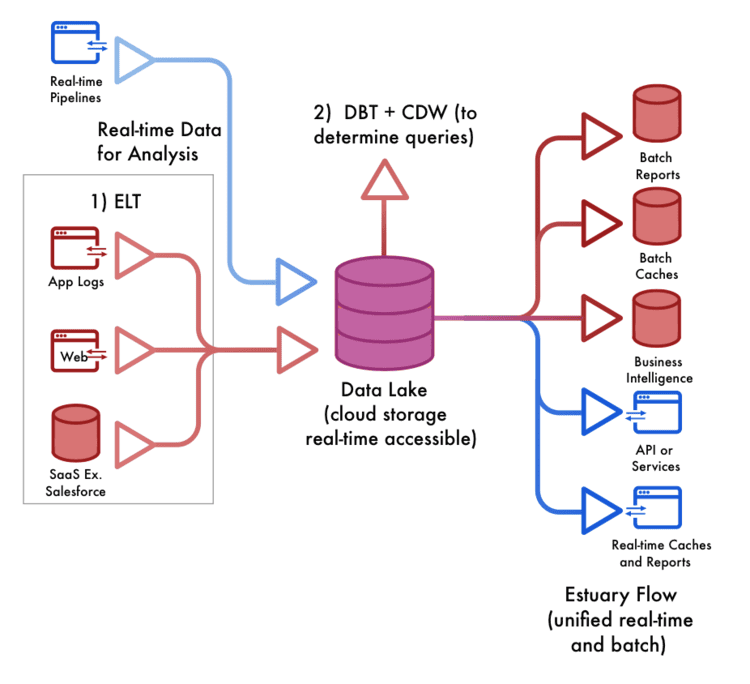

The first step is getting data into a queryable location, and all the rage these days is ELT (Extract, Load, Transform) tools like Alooma, Stitch and Fivetran. They’ve made it effortless to keep up-to-date copies of your data from any SaaS product in a place that your CDW can query. They have their downsides versus standard ETL (Extract, Transform, Load): because the data is loaded raw, it has to be transformed every time someone queries it. That risks getting the transformation wrong more often than doing it once up front, but these tools are simple and they work.
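
To make the query-time transform concrete, here is a minimal sketch that uses an in-memory SQLite database as a stand-in for a warehouse. The table, columns, and rows are made up for illustration, and none of this reflects how Alooma, Stitch or Fivetran work internally.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: copy source rows as-is, with no cleanup (made-up data).
conn.execute(
    "CREATE TABLE raw_orders (id INTEGER, amount_cents TEXT, status TEXT)"
)
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "1250", "PAID"), (2, "980", "paid"), (3, "4000", "refunded")],
)

# Transform: applied inside the query, so it runs every time someone asks.
PAID_REVENUE_SQL = """
    SELECT SUM(CAST(amount_cents AS INTEGER)) / 100.0
    FROM raw_orders
    WHERE LOWER(status) = 'paid'
"""
paid_revenue = conn.execute(PAID_REVENUE_SQL).fetchone()[0]
```

A query that forgets `LOWER(status)` would silently miss order 1, which is the kind of query-time mistake that an up-front transform would have ruled out once for everyone.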

Once data is in a queryable environment, you need to be able to dig in and get insights. A product called dbt (data build tool) has emerged as the true hero in this space. It has seen exponential growth, with thousands of companies adopting it in just a couple of years, and with good reason: dbt has completely changed the way companies collaborate to get insights. It’s a slightly more complex concept for non-engineers, but dbt effectively provides a platform that data scientists can use to author and share queries collaboratively alongside engineers. Each query (which creates a pipeline) is published within the organization, so there’s an easily accessible record of exactly how every pipeline is constructed. Queries go through standard engineering processes like version control, which brings some specific benefits:

- Collaboratively designing new pipelines is easy

- Metrics can be verified to be correct

- Queries can be built, referenced and re-used

Once these high-quality pipelines are created, anyone can query them without needing to know how they were built.
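
The idea of queries that are built, referenced and re-used can be sketched in a few lines. The example below is not dbt's API or implementation; it is a hypothetical stand-in in which each "model" is a named SELECT plus a list of the models it depends on, built as SQLite views in dependency order. The model names, the `build_models` helper, and the data are all assumptions.

```python
import sqlite3
from graphlib import TopologicalSorter

# Each hypothetical "model" is a named SELECT plus the models it depends on.
MODELS = {
    "stg_orders": {
        "depends_on": [],
        "sql": "SELECT id, customer, amount FROM raw_orders WHERE amount > 0",
    },
    "customer_revenue": {
        "depends_on": ["stg_orders"],
        "sql": (
            "SELECT customer, SUM(amount) AS revenue "
            "FROM stg_orders GROUP BY customer"
        ),
    },
}


def build_models(conn, models):
    """Create one view per model, upstream models first."""
    graph = {name: set(spec["depends_on"]) for name, spec in models.items()}
    order = list(TopologicalSorter(graph).static_order())
    for name in order:
        conn.execute(f"CREATE VIEW {name} AS {models[name]['sql']}")
    return order


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_orders (id INTEGER, customer TEXT, amount INTEGER)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "acme", 100), (2, "acme", 50), (3, "globex", 70), (4, "globex", -70)],
)
build_order = build_models(conn, MODELS)
revenue = dict(conn.execute("SELECT customer, revenue FROM customer_revenue"))
```

Someone querying `customer_revenue` never needs to know that negative amounts were filtered out upstream, yet the definition of `stg_orders` is there to read, review and version whenever they want to check.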

Finally, once queries are created, they just need to be scheduled. This step is easy, and the usual go-to is Apache Airflow.

Business Value

The incredible part of this new setup is that data scientists are now empowered to access all data (internal or external) and collaboratively massage it to discover and deliver business value. They can work at an unprecedented scale to get insights that weren’t possible in the past. And since everything is in SQL, orders of magnitude more people have the right skills to ask and answer questions.

This truly is a huge turning point: a step change in the amount of data that can be queried, the number of sources that can be pulled in, and the group of people who can do it. We can analyze data thousands of times better than we could in the past. Imagine a world in which analysis could, for the first time, catch up with or even surpass data growth rates.

Where should we go from here?

The setup described is arguably the best possible one given today’s technology options, but it’s not without limitations, some of which can be solved through new frameworks. It was originally created to answer one-off questions: every time a question is asked, the technology needs to crunch all of your data again to answer it. That is obviously not the most efficient method, and it leads to higher costs and slower queries than necessary. Furthermore, since data has to be loaded on a schedule, latency is built into the pipelines, so whenever you ask a question, the answer you get describes the past. Unfortunately, an application that truly requires real-time user flows can’t be built like this; it requires fully separate pipelines.
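
The cost difference between re-crunching everything and keeping an answer up to date can be shown with a small, generic sketch. It is only an illustration of the two styles, not a description of how any particular product is implemented; the class name, function name, and events are made up.

```python
from collections import defaultdict

events = [("acme", 100), ("globex", 70), ("acme", 50)]  # made-up data


def revenue_full_scan(all_events):
    """Batch style: every question re-reads every event ever loaded."""
    totals = defaultdict(int)
    for customer, amount in all_events:
        totals[customer] += amount
    return dict(totals)


class RunningRevenue:
    """Incremental style: fold each event into stored state as it arrives."""

    def __init__(self):
        self.totals = defaultdict(int)

    def apply(self, customer, amount):
        self.totals[customer] += amount

    def query(self):
        # No rescan of past events: the work was already done on arrival.
        return dict(self.totals)


running = RunningRevenue()
for customer, amount in events:
    running.apply(customer, amount)

batch_answer = revenue_full_scan(events)
incremental_answer = running.query()
```

Both approaches return the same totals, but the full scan does work proportional to all history on every question, while the running version does a small amount of work per event and can answer as soon as the latest event has been applied.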

You should be able to find the answer to complex questions with what we’ve covered above, but when you uncover a meaningful new insight you want it to be readily available.

We’re building Estuary Flow to make that a reality with efficient and real-time pipelines, which are designed to work well alongside existing architecture. As a result, companies can service all applications for the cheapest price tag, the least latency, and the added benefit of not having to build different pipelines.

About the author

David Yaffe is a co-founder and the CEO of Estuary. He previously served as the COO of LiveRamp and as the co-founder and CEO of Arbor, which was sold to LiveRamp in 2016. He has an extensive background in product management, having served as head of product for DoubleClick Bid Manager and Invite Media.