It’s graduation season. High schoolers are off to college, college students are off to the working world, and many data teams are ready to graduate from their current ELT tool.

If you’ve been in the tech or startup scene these past few years, you’re surely familiar with the explosion of ELT tools that have captured the conversation, for better or for worse.

The wave started with Fivetran. Many direct-to-consumer and tech companies in the late 2010s adopted this ELT platform, hooked up a data warehouse and a visualization tool, and were off to the races. Fivetran, and the many platforms that grew in its wake, have given businesses across sectors an easy way to get up and running with BI.

If that sounds familiar to you, maybe this will as well:

Several years later, you’re experiencing the pain of scaling and growing with your ELT tool.

If you use Fivetran specifically, you’ve been hit with multiple pricing changes. You’ve also been roadblocked in your attempts to build low-latency pipelines.

In general, you’ve realized being locked into ELT is problematic. You have to perform all transformations in your destination system: often Snowflake, BigQuery, or similar. That’s not the most cost-effective way to use a data warehouse.

You know there are cheaper ways to transform your data, but your tool doesn’t let you.

If that all sounds right, it’s time for your team to switch to a data integration framework that fits more intelligently — and efficiently — into the business you actually want to run today.

I’ve been in the tech and data space for more than 15 years. Like you, I’ve seen a lot of trends come and go, but without a doubt, we are moving away from predatory SaaS pricing and toward a model where we pay for what we use: resources like compute and storage.

As you’ve already guessed, I want to offer you Estuary for consideration as a replacement for your old ELT tool.

Since I’m a co-founder at Estuary, you’re right to be skeptical. I’ll break down my reasoning, but ultimately it’s pretty straightforward:

We didn’t design Estuary Flow to ride the same wave as most ELT tools, even though doing so would have let us turn a high profit in the short term.

We designed Flow to deliver the kind of value you actually need from an ELT/ETL tool, and we designed the pricing structure to be proportionate to the value you actually derive.

In other words, we’re staking our long-term bets on the savviness of data teams. Price-value mismatches can only exist so long as there’s no better alternative.

Pricing ELT: The Fivetran Case Study

I can’t illustrate this point without a specific example, so let’s look at Fivetran, one of the highest-grossing companies in data integration.

Fivetran owes some of its financial success to a pricing structure that appears very fair, but doesn’t scale well and comes with surprise upsells down the line.

Fivetran charges based on what it calls Monthly Active Rows (MAR): the number of new or changed rows in the destination each month.

While the per-row price does decrease as volume grows, this model is not ideal for several reasons.

First, Monthly Active Rows depend on Fivetran’s definition of a “row.” Because Fivetran owns the destination schema for its SaaS application connectors, it may generate multiple rows for each real-world event that occurs.

For example, say you run a Shopify store and a customer places an order with one item in it. That single real-world activity can immediately fill ten or more rows, because Fivetran has highly normalized its schema to its own specifications. You’re charged for 10+ rows off one order.

This ties Fivetran’s revenue collection less to your data infrastructure and more to the cycles and whims of your business.
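To make the row fan-out concrete, here is a minimal Python sketch. The table names and fields are hypothetical, chosen only to show how a highly normalized schema can turn one single-item order into ten rows; they are not Fivetran’s actual Shopify schema.

```python
# Hypothetical normalized schema, for illustration only. These table names
# are NOT Fivetran's actual Shopify schema.


def normalize_order(order: dict) -> dict[str, list[dict]]:
    """Fan one order document out into rows across several normalized tables."""
    oid = order["id"]
    cid = order["customer"]["id"]
    tables: dict[str, list[dict]] = {
        "orders": [{"order_id": oid, "total": order["total"]}],
        "customers": [{"customer_id": cid, "email": order["customer"]["email"]}],
        "customer_addresses": [{"customer_id": cid, **order["customer"]["address"]}],
        "order_shipping_addresses": [{"order_id": oid, **order["shipping_address"]}],
        "order_billing_addresses": [{"order_id": oid, **order["billing_address"]}],
        "order_shipping_lines": [{"order_id": oid, **order["shipping_line"]}],
        "transactions": [{"order_id": oid, **t} for t in order["transactions"]],
        "fulfillments": [{"order_id": oid, **f} for f in order["fulfillments"]],
        "order_lines": [],
        "order_line_tax_lines": [],
    }
    # Each line item gets its own row, and each tax line on it gets another.
    for item in order["line_items"]:
        tables["order_lines"].append(
            {"order_id": oid, "sku": item["sku"], "qty": item["qty"]}
        )
        for tax in item["tax_lines"]:
            tables["order_line_tax_lines"].append(
                {"order_id": oid, "sku": item["sku"], **tax}
            )
    return tables


def rows_written(tables: dict[str, list[dict]]) -> int:
    """Total rows landed in the destination, i.e. what a per-row meter counts."""
    return sum(len(rows) for rows in tables.values())


# One customer, one order, one item (all values made up).
address = {"city": "Columbus", "country": "US"}
order = {
    "id": 1001,
    "total": 26.15,
    "customer": {"id": 42, "email": "shopper@example.com", "address": address},
    "shipping_address": address,
    "billing_address": address,
    "shipping_line": {"method": "standard", "price": 5.00},
    "transactions": [{"kind": "sale", "amount": 26.15}],
    "fulfillments": [{"status": "pending"}],
    "line_items": [
        {"sku": "TSHIRT-M", "qty": 1, "tax_lines": [{"rate": 0.0575, "amount": 1.15}]}
    ],
}

tables = normalize_order(order)
print(rows_written(tables))  # prints 10
```

In this sketch, adding a second line item with one tax line adds two more rows, so the billable count tracks the shape of each order rather than just the number of orders.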

Second, Fivetran treats low latency as an upsell, even after asking you to spend time configuring low-latency-specific features like write-ahead logging (WAL) for a Postgres source.

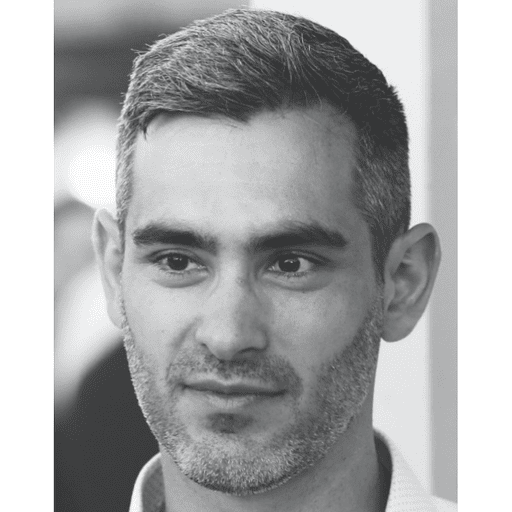

Let’s say you have about 60 million rows a month coming from Postgres that you want to land in Snowflake.

On the Fivetran Starter Plan, you can get one-hour latency from Fivetran for $4,580 a month, or about $55,000 a year.

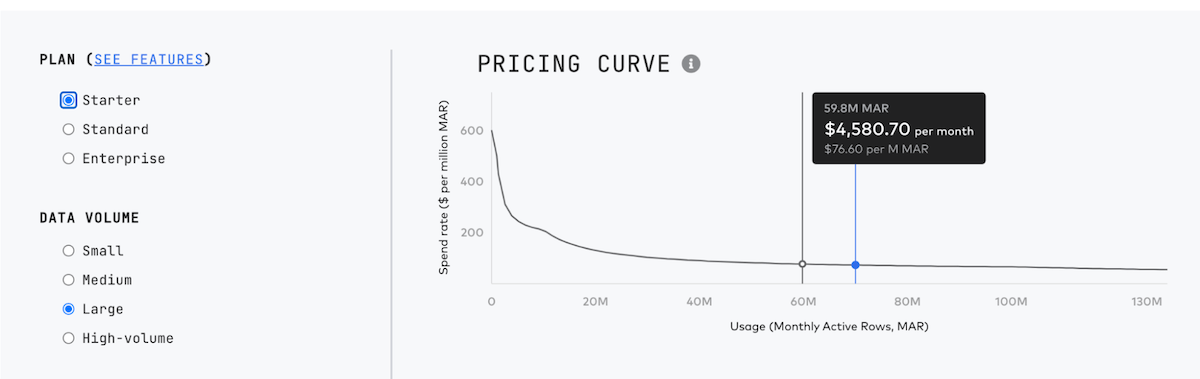

Now, if you want to shorten this to 15-minute latency for the same volume, you’ll pay $6,871 a month, or about $82,500 a year.

You’re being charged roughly $27,500 more a year to get past an arbitrary pricing-plan gate. If you’ve enabled WAL in Postgres, there is absolutely no reason you should be paying extra, not to mention that even 15 minutes of latency is too high. (If you want real-time replication from Postgres, Estuary offers this right out of the box for far less.)

This raises the question: what exactly is Fivetran doing with your data for the other 59 minutes and 59 seconds before they land it in your destination?

If you’re using WAL anyway (as they encourage), there’s no cost on Fivetran’s end to simply pass on the benefits of CDC to you within seconds or milliseconds.

It’s strictly a business choice: they hold the data and gate the better performance behind the Standard tier.

Even if you make that upgrade, you still have to wait 15 minutes or pay for yet another upgrade. And your overall MAR price has gone up across the board.
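The yearly figures in this section follow directly from the two monthly list prices quoted above for about 60 million rows a month from Postgres to Snowflake. The short Python check below annualizes them and computes the gap; the constant names are illustrative, and the prices are only the ones quoted in this post.

```python
# Monthly list prices quoted in this post for ~60 million rows/month,
# Postgres -> Snowflake: one-hour latency (Starter) vs. 15-minute latency.
ONE_HOUR_MONTHLY = 4_580
FIFTEEN_MIN_MONTHLY = 6_871


def annual(monthly_usd: int) -> int:
    """Annualize a flat monthly price."""
    return monthly_usd * 12


one_hour_yearly = annual(ONE_HOUR_MONTHLY)        # 54,960
fifteen_min_yearly = annual(FIFTEEN_MIN_MONTHLY)  # 82,452
premium = fifteen_min_yearly - one_hour_yearly    # 27,492
premium_pct = 100 * premium / one_hour_yearly     # about 50%

print(f"1-hour tier:     ${one_hour_yearly:,}/yr")
print(f"15-minute tier:  ${fifteen_min_yearly:,}/yr")
print(f"Latency premium: ${premium:,}/yr ({premium_pct:.0f}% more)")
```

Put another way, shaving 45 minutes off the latency raises the bill for the same rows by about half.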

ELT Price as a Function of Your Marketing Spend

For most companies, the largest share of Fivetran MAR spend comes from marketing systems like Braze and Google Ads. Marketing actions generate many unique events, like website visits or in-app clicks, which you typically store as events through something like Snowplow or in a replica of your production Postgres database.

This means Fivetran’s pricing is decoupled from your actual data infrastructure. It’s more a function of month-to-month changes in marketing campaigns or product releases that have nothing to do with the data team.

Of course, it’s important for the data team to have strong relationships with partners throughout the business, but that’s not enough to solve this problem. Ultimately, marketing or product activity puts the data team that owns the Fivetran line item at a disadvantage, because it makes Fivetran spend nearly impossible to predict.

For seasonal businesses like retail, run-of-the-mill events can impact Fivetran spend dramatically. For example, you might hire a new agency to try out a new campaign strategy right before the winter holiday season. This will almost certainly cause a huge increase in Monthly Active Rows used.

And those credits you’d planned out for a year? They’re burned up, and you’re on the phone again in the middle of December trying to negotiate extra credits with a Fivetran rep.

Data Integration Priced on Volume

Estuary is priced strictly on monthly data volume. This does a couple of things:

- It makes it easier for you to predict how much you’ll spend all year.

- It relieves us of any temptation to manipulate your schemas to stealthily raise your bill.

Flow is also real-time by default, and we always pass that performance on to you. If you ever want to slow things down (say, to reduce active time in your warehouse), you control those knobs.

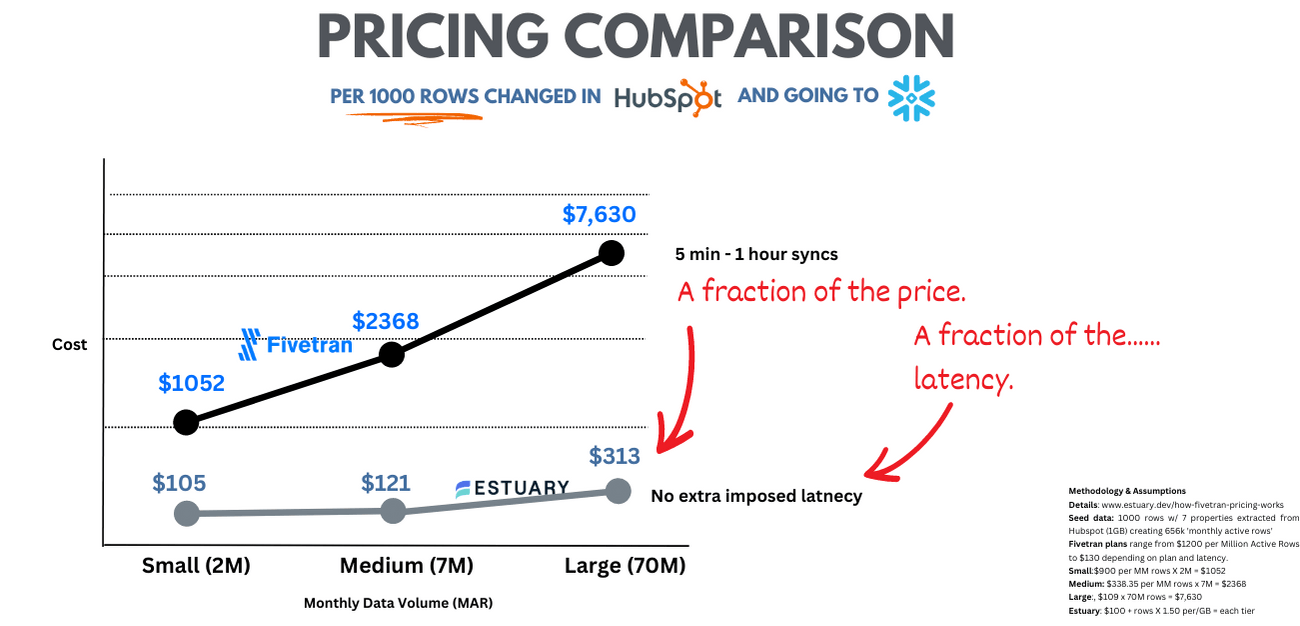

It’s a pricing structure that starts lower and also scales better. Let’s assume you’ve got a pipeline from HubSpot to Snowflake that’s what we’d call a “medium” deployment: 7 million Monthly Active Rows. That’d cost you about $2,368 in Fivetran and $121 with Flow.

The graph below covers some variations and methodology:

ETLTLTLT Alphabet Soup

Recently, we’ve seen a lot of pushback against the strict application of the ELT design pattern promoted by many of today’s data integration vendors.

If you’re in year two, three, or four of a “modern” data investment, chances are you’ve started to feel pain around latency, cost, or both.

The source of the pain is often a growing stack of SQL transformations running inside the data warehouse, sitting between the raw data your sources deliver and the tables your team actually uses. Some organizations are simply finding that they have layered too much intermediary SQL.

When you use Estuary, you have the power to decide where and how transformations occur.

Flow comes with in-flight transformations out of the box, so you can design and define data schemas before the data lands in the destination. Later, you can add more transformations downstream in the warehouse with SQL, Airflow, or dbt as you desire.
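As a generic illustration of transforming in flight, the sketch below filters and reshapes a stream of records before they reach a destination, so the warehouse only ever stores the rows it needs. This is plain Python written for this example, not Flow’s own transformation API; the event types, field names, and the `in_flight` function are all hypothetical.

```python
from typing import Iterable, Iterator


def in_flight(events: Iterable[dict]) -> Iterator[dict]:
    """Hypothetical in-flight step: filter and reshape records before they land."""
    for event in events:
        # Drop events the destination never needs, so the warehouse
        # never stores or rescans them.
        if event.get("type") != "purchase":
            continue
        # Reshape to the destination schema: keep two fields and
        # convert cents to dollars.
        yield {
            "order_id": event["order_id"],
            "amount_usd": event["amount_cents"] / 100,
        }


source = [
    {"type": "page_view", "url": "/home"},
    {"type": "purchase", "order_id": 1, "amount_cents": 2500},
    {"type": "click", "target": "buy"},
    {"type": "purchase", "order_id": 2, "amount_cents": 1299},
]

destination = list(in_flight(source))
print(destination)
# [{'order_id': 1, 'amount_usd': 25.0}, {'order_id': 2, 'amount_usd': 12.99}]
```

Heavier modeling, such as joins and aggregations, can still run downstream in SQL or dbt. The point is only that cheap, per-record work like filtering and type conversion does not have to wait for warehouse compute.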

Strategically placing different types of transformations at different stages of the data lifecycle can yield significant performance gains and cost savings, and it can reduce the need to recompute data on the data warehouse’s CPU cycles.

We don’t care if you call this ETL or ELT or ETLT or anything else as long as we can help you strategically navigate this complexity.

All Common Destinations, All Common Sources

Over the last few years, we’ve entered a landscape of “connector shopping.” It’s become commonplace for teams to cobble together the connectors they need from several places; for example, you might get most of your connectors from Fivetran, a couple from Stitch, and use a Google Cloud Function for one very niche long-tail system that no one else supports.

Of course, it’s hard for one solution to provide all the integrations you need, so this flexibility is important. Still, it’s any good data integration platform’s duty to consistently deliver the integrations its customers actually need.

We designed Estuary to meet you where you’re at. Our development team prioritizes building connectors based on request, and because we don’t charge on rows, we have no reason to prioritize destination systems based on the number of rows they create (as other companies might). Our business model is simply delivering the integrations you need today so you can build what you need.

Better Data Integration for 2023 and Beyond

If you’re facing high or erratic ELT bills no matter how much optimization you try to perform, I’m willing to bet that your infrastructure is not the problem. More likely, you’re using a vendor that’s no longer optimized for your needs.

You’ve realized that data transfer within your organization could be more efficient while still granting you more control. And if you’ve long suspected it could also be more affordable, you’re spot on.

There’s no need for overly complex billing; no need to gatekeep features that are cheap for vendors to provide. Just like moving on from high school or college, maturing means your day-to-day data tasks should get easier, not harder. We agree.

About the author

David Yaffe is a co-founder and the CEO of Estuary. He previously served as the COO of LiveRamp and was the co-founder and CEO of Arbor, which was sold to LiveRamp in 2016. He has an extensive background in product management, having served as head of product for DoubleClick Bid Manager and Invite Media.