Organizations use data-driven strategies with Braze to improve customer engagement through customized marketing campaigns. Although Braze is a powerful marketing tool for businesses to deliver targeted messaging, it is not ideal for handling large amounts of data or performing complex analytics.

However, by moving data from Braze to BigQuery, you can turn your marketing data into a strategic asset and stay ahead of the competition. BigQuery is a cloud-based data warehouse that can store large amounts of data and analyze it through advanced querying. Migrating data from Braze to BigQuery therefore helps you centralize your customer data and optimize your marketing strategies using BigQuery’s data analytics capabilities.

Let’s jump right in to explore the different methods for integrating Braze to BigQuery.

Braze: An Overview

Braze is a user-friendly customer engagement platform that helps businesses understand customers' needs and optimize the customer experience by driving engagement across marketing channels such as email, WhatsApp, and SMS. It’s a suitable platform for integrating customer data and dividing your users into segments to deliver a more personalized experience.

Through its promotional activities and performance management functions, Braze helps market your products better and boosts customer retention.

Let’s look at some of the key features of Braze:

- Personalized Campaigns: Braze lets you build customized campaigns based on user preferences and behavioral history, such as how much time users spend on your website and how often they purchase your product.

- Cross-channel Messaging: With Braze, you can reach out to your potential customers through various mediums, such as emails, SMS, push notifications on web applications, in-app messages, etc.

- Experimentation: You can experiment with your campaign strategies by testing different content formats, calls to action, and message variations to find what suits your audience.

What Is BigQuery?

Developed by Google, BigQuery is a serverless, cloud-based data warehouse that can handle multiple datasets for integration and analysis. It has several built-in capabilities, including support for machine learning and AI, that help you run predictive analytics on real-time data and gain meaningful business insights.

Here are some of the impressive features of BigQuery:

- Scalability: BigQuery’s cloud-based architecture allows you to store, manage, and analyze large datasets ranging from terabytes to petabytes.

- SQL Querying: Developers and analysts familiar with SQL statements such as SELECT, INSERT, and UPDATE can query data without learning a new language.

- Advanced Data Analysis: BigQuery offers advanced analytics with built-in machine learning and AI features. These techniques include linear regression, k-means clustering, and others. You can use them to predict customer behavior patterns, segment groups, or forecast sales.

Why Migrate Data From Braze to BigQuery?

- Data Centralization: BigQuery helps centralize customer data from various sources, including Braze, providing a 360-degree customer behavior view for in-depth analysis.

- In-Depth Analysis: BigQuery’s advanced analytic functions give you clear insight into customer engagement, trends, and preferences.

- Generating Customized Reports: BigQuery integrates with data visualization tools, helping you turn the results of your SQL queries into customized reports. These tools support in-depth analysis of the customer data that originated in Braze.

How to Integrate Braze to BigQuery

The two best methods to transfer Braze data to BigQuery are:

- The No-Code Solution: Using Estuary Flow to Connect Braze to BigQuery

- Manual Configuration: Using CSV Export/Import to Move Data From Braze to BigQuery

The No-Code Solution: Using Estuary Flow to Connect Braze to BigQuery

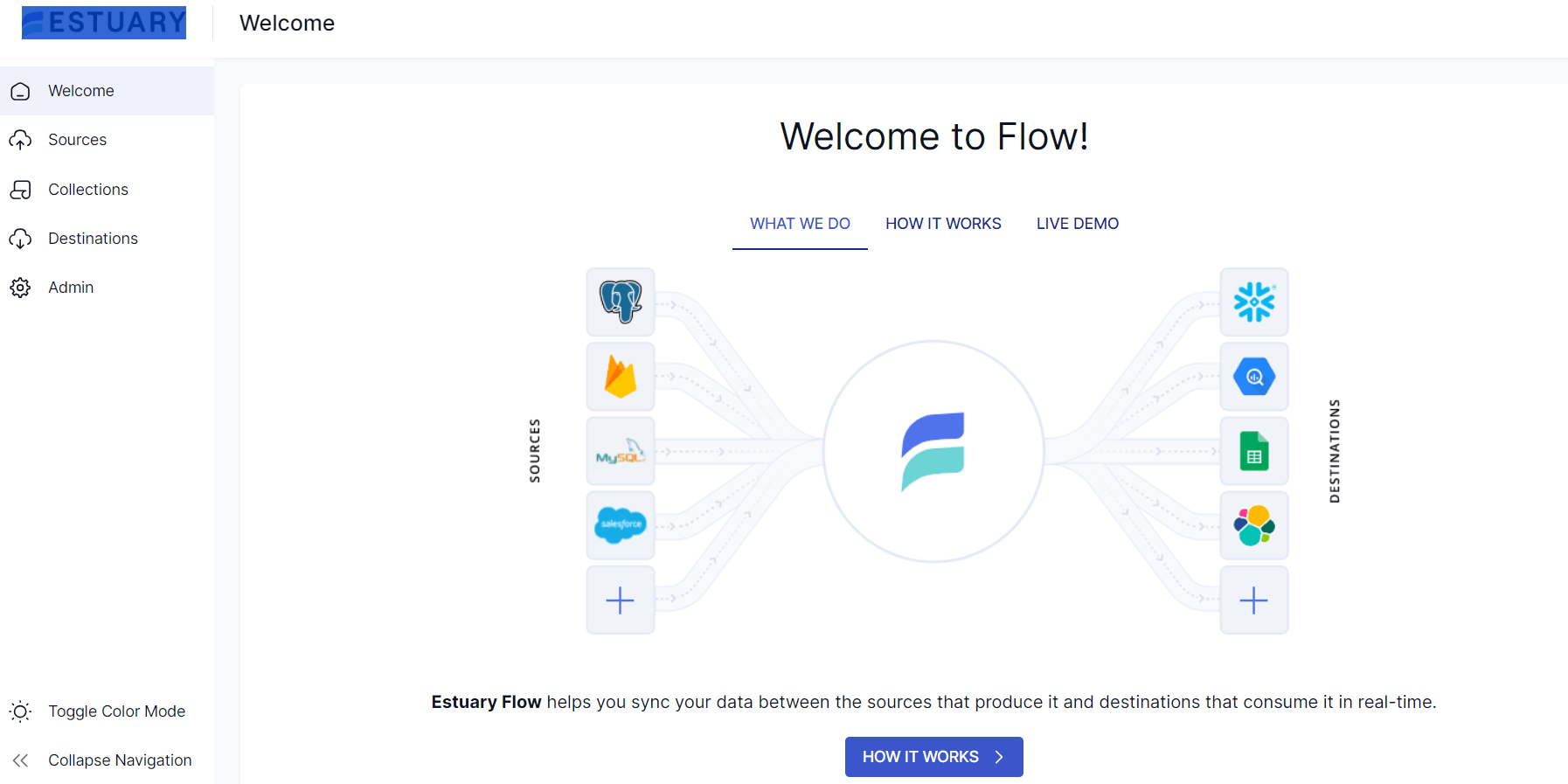

Estuary Flow is a user-friendly Extract, Transform, Load (ETL) platform that helps you integrate data between various sources and destinations in near real time. Whether dealing with cloud storage, databases, or APIs, Flow allows you to transfer your data to your desired destination with automated data pipelines.

Here’s a detailed rundown on using Estuary Flow to integrate Braze to BigQuery.

Prerequisites

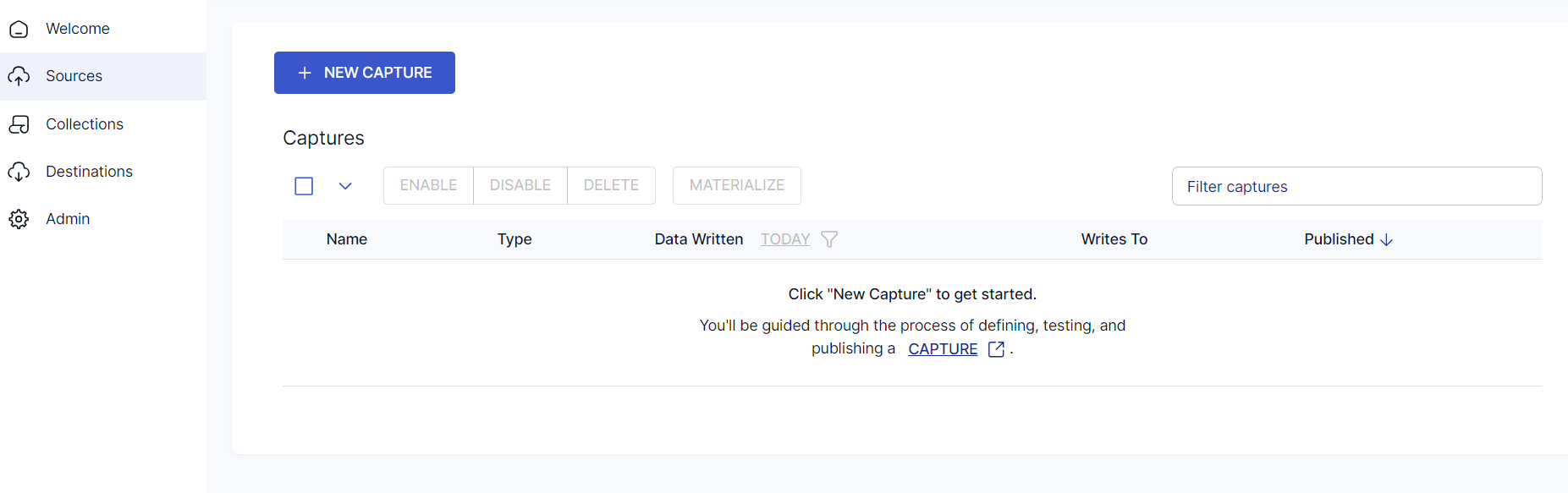

Step 1: Configure Braze as your Source

- Sign in to your Estuary account.

- Select Sources from the sidebar and click on the + NEW CAPTURE button.

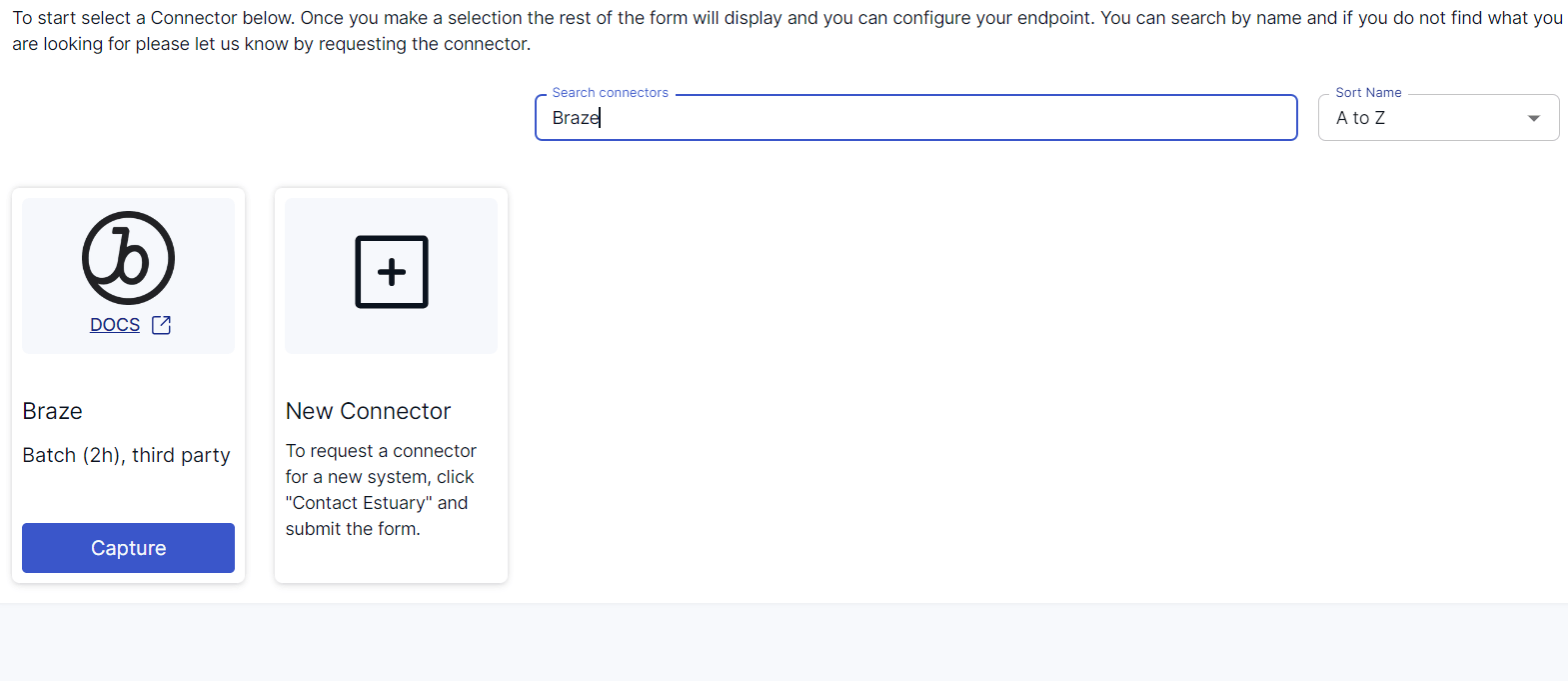

- Use the Search connectors field to search for Braze. When you see the connector in the search results, click its Capture button.

- On the Braze connector configuration page, specify the following:

  - Name: Enter a unique name for your capture.

  - REST API Key and URL: Enter the REST API key and URL from your Braze dashboard.

  - Start date: The date from which rows will be synced.

- Click on NEXT. Then click on SAVE AND PUBLISH. The connector will capture the data from Braze into Flow collections.
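To make the REST API Key and URL fields more concrete, here is a minimal Python sketch of how a client might build an authenticated request against a Braze REST endpoint. It is not how the Estuary connector is implemented. The host `rest.iad-01.braze.com`, the `/campaigns/list` path, the placeholder key, and the helper name `build_braze_request` are assumptions for illustration, so substitute the REST endpoint and key shown in your own Braze dashboard. The request is only constructed here, not sent.

```python
import urllib.request


def build_braze_request(rest_url: str, api_key: str, path: str = "/campaigns/list") -> urllib.request.Request:
    """Build (but do not send) a GET request for a Braze REST endpoint."""
    # Strip any trailing slash so the joined URL never contains "//".
    url = rest_url.rstrip("/") + path
    return urllib.request.Request(
        url,
        # The REST API key is passed as a bearer token.
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


# Hypothetical values; use the REST endpoint and API key from your Braze dashboard.
request = build_braze_request("https://rest.iad-01.braze.com/", "YOUR-REST-API-KEY")
print(request.full_url)
```

Keeping the key in a header rather than in the URL means it is less likely to end up in logs or browser history.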

Step 2: Configure BigQuery as your Destination

- Select Destinations from the sidebar on the dashboard. Then, click + NEW MATERIALIZATION.

- Search for BigQuery using the Search connectors bar and select Google BigQuery as your destination by clicking its Materialization button.

- You will be redirected to the BigQuery connector configuration page, where you must specify all the mandatory fields:

  - Specify a unique name for your materialization.

  - Specify the following fields in the Endpoint Config section:

    - Project ID: The Google Cloud Project ID that contains the BigQuery dataset.

    - Service Account JSON: The JSON credentials for your service account, which will be used for authorization.

    - Region: The region of the bucket and the BigQuery dataset (they should be in the same region).

    - Dataset: The BigQuery dataset where the materialized tables and associated materialization metadata tables will be stored.

    - Bucket: The Google Cloud Storage (GCS) bucket where the data will be temporarily stored.

- Even though your capture Flow collection is automatically linked to the materialization, you can manually add a capture to your materialization through the Source Collections section.

- Click on NEXT > SAVE AND PUBLISH to complete the configuration process. The connector will materialize Flow collections of your Braze data into BigQuery tables.
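Before pasting credentials into the Service Account JSON field, it can help to sanity-check the key file locally. The sketch below assumes the usual layout of a Google Cloud service account key file (fields such as `type`, `project_id`, `private_key`, and `client_email`). The function name and the sample values are hypothetical, and this check is a convenience, not something Estuary Flow requires.

```python
import json

# Fields normally present in a Google Cloud service account key file (assumed layout).
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_json(raw: str) -> list[str]:
    """Return a list of problems found in the key file text (empty if none)."""
    try:
        key = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value must be an object"]

    # Report every required field that is absent or empty.
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if not key.get(name)]
    # A user or OAuth client credential file would carry a different "type".
    if key.get("type") and key["type"] != "service_account":
        problems.append(f"unexpected type: {key['type']}")
    return problems


# Hypothetical, truncated key used only to exercise the check.
sample = json.dumps({
    "type": "service_account",
    "project_id": "my-project",
    "private_key": "-----BEGIN PRIVATE KEY-----...",
    "client_email": "flow@my-project.iam.gserviceaccount.com",
})
print(check_service_account_json(sample))
```

An empty list means the file has the expected shape. It does not confirm that the service account has the permissions BigQuery and the GCS bucket need.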

Benefits of Using Estuary Flow

- Wide Variety of Connectors: Estuary Flow has a built-in library of more than 300 connectors, including databases, data lakes, SaaS platforms, and data warehouses. These connectors allow you to easily migrate your data from a source to a destination through an efficient data pipeline.

- Change Data Capture (CDC) Technology: Estuary Flow supports CDC, which allows you to integrate your data in real time. This is especially beneficial if you seek real-time analytics for improved operational efficiency.

- Efficient Data Transformation: Estuary Flow supports SQL and TypeScript transformations. TypeScript helps enhance data pipeline reliability by enabling type checking during data transformation. Meanwhile, SQL queries help filter data to ensure data integrity and consistency.

Manual Configuration: Using CSV File Export/Import to Load Data From Braze Into BigQuery

This method involves exporting Braze data as CSV files and then loading those files into BigQuery. Let’s look into the details of this method.

Step 1: Export Braze Data to CSV Files

Braze allows you to export different kinds of data and store them in CSV format. This tutorial exports campaign data from Braze to a CSV file. Here are the steps:

- Log in to your Braze account.

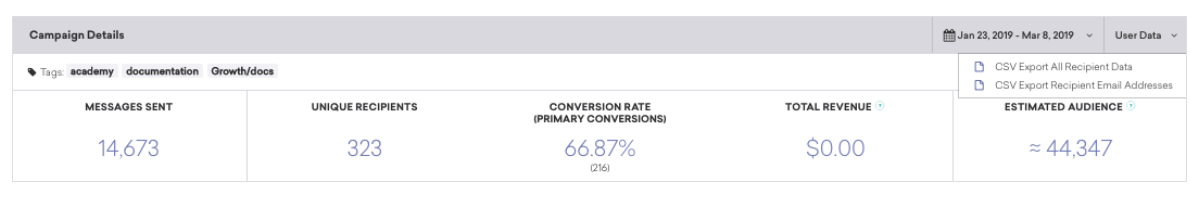

- Navigate to the Campaign Details section.

- Click the User Data button. To export your data, you need User Data permissions in your Braze workspace.

- Click on CSV Export Recipient Email Addresses.

Braze will generate the report in the background and email it to the address linked to your user account. This report contains the user profile details associated with the campaign. If your Amazon S3 credentials are linked to the Braze account, the exported CSV file will be uploaded to S3.

Step 2: Load CSV Files to BigQuery

To load the CSV files containing your exported Braze data into BigQuery, first make sure you meet the following prerequisites.

Prerequisites

- Grant the IAM (Identity and Access Management) roles that give the user permission to load data into tables in your BigQuery dataset.

- If you are loading data from a Cloud Storage bucket, grant permission to access the bucket that contains your data.

- Create a BigQuery dataset in Google Cloud to store your data.

To load your CSV file from Cloud Storage with an explicit schema, you can use C# code like the sample given below, which relies on the Google.Cloud.BigQuery.V2 client library:

```csharp
using Google.Cloud.BigQuery.V2;
using System;

public class BigQueryLoadTableGcsCsv
{
    public void LoadTableGcsCsv(
        string projectId = "your-project-id",
        string datasetId = "your_dataset_id"
    )
    {
        BigQueryClient client = BigQueryClient.Create(projectId);
        var gcsURI = "gs://cloud-samples-data/bigquery/us-states/us-states.csv";
        var dataset = client.GetDataset(datasetId);
        var schema = new TableSchemaBuilder {
            { "name", BigQueryDbType.String },
            { "post_abbr", BigQueryDbType.String }
        }.Build();
        var destinationTableRef = dataset.GetTableReference(
            tableId: "us_states");

        // Create the job configuration
        var jobOptions = new CreateLoadJobOptions()
        {
            // The source format defaults to CSV; the line below is optional.
            SourceFormat = FileFormat.Csv,
            SkipLeadingRows = 1
        };

        // Create and run the job
        var loadJob = client.CreateLoadJob(
            sourceUri: gcsURI, destination: destinationTableRef,
            schema: schema, options: jobOptions);
        loadJob = loadJob.PollUntilCompleted().ThrowOnAnyError(); // Wait for the job to complete

        // Display the number of rows uploaded
        BigQueryTable table = client.GetTable(destinationTableRef);
        Console.WriteLine(
            $"Loaded {table.Resource.NumRows} rows to {table.FullyQualifiedId}");
    }
}
```

Limitations of CSV Export/Import for Integrating Braze to BigQuery

- Exporting data from Braze to BigQuery using CSV files can be time-consuming, especially when you update your data sets frequently.

- Understanding the structure of data that is exported into a CSV file is important to ensure it matches the structure or format that BigQuery requires, or else it can cause inconsistencies or errors while generating the output.

- The technique lacks real-time integration capabilities due to delays in exporting Braze data and loading it into BigQuery. This hinders real-time analytics and decision-making.
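The schema-mismatch risk noted in the list above can be reduced with a quick check before loading. The following Python sketch compares a CSV export's header row with the column names you plan to declare in BigQuery. The column names, the in-memory sample file, and the helper name `compare_header` are hypothetical stand-ins for a real Braze export and your own schema.

```python
import csv
import io


def compare_header(csv_file, expected_columns):
    """Compare the CSV header row with the expected column names.

    Returns (missing, unexpected): columns absent from the file, and
    columns in the file that the target schema does not declare.
    """
    reader = csv.reader(csv_file)
    # An empty file yields an empty header instead of raising StopIteration.
    header = [name.strip() for name in next(reader, [])]
    missing = [col for col in expected_columns if col not in header]
    unexpected = [col for col in header if col not in expected_columns]
    return missing, unexpected


# Hypothetical export contents and target schema, for illustration only.
export = io.StringIO("user_id,email,campaign_name\n42,a@example.com,Spring Sale\n")
missing, unexpected = compare_header(export, ["user_id", "email", "sent_at"])
print("missing:", missing)
print("unexpected:", unexpected)
```

Running a check like this on each export catches renamed or dropped columns before a load job fails or, worse, loads values into the wrong fields.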

The Takeaway

While Braze helps you collect and analyze your customer data, BigQuery assists you in centralizing the data for further data analytics. To integrate Braze to BigQuery, you can opt for the manual CSV export/import method. However, the associated limitations include a lack of real-time integration capabilities and the need for separate data transformation workflows.

An alternative to the manual method is using a user-friendly data integration platform like Estuary Flow. It automatically detects the file type, loads your data into BigQuery in parallel, and provides near real-time data synchronization. This allows you to simplify your workflows and leverage the most up-to-date data to make data-driven decisions.

Estuary Flow provides a wide range of connectors and an intuitive interface that supports smooth integration operations. Sign up for free today for quick and easy Braze to BigQuery integration.

FAQs (Frequently Asked Questions)

Q. What is the difference between the CSV export email address and the CSV export user data options?

If you select the CSV export user data option, all user data will be downloaded to your CSV file. The CSV export email address option, on the other hand, downloads data only for users who have email addresses.

Q. What kind of data can you transfer to BigQuery?

BigQuery supports structured and semi-structured data such as CSV, JSON, log files, etc.

About the author

The author is a seasoned expert with over 15 years in data engineering, focused on driving growth for early-stage data companies through strategies that attract customers and users. Their extensive writing offers insights that help companies scale efficiently and effectively in an evolving data landscape.