Migrating from Oracle to PostgreSQL is one of the most technically demanding database transitions an engineering team can undertake. Oracle and PostgreSQL share relational foundations but diverge significantly in proprietary features, procedural language syntax, data type behavior, and licensing architecture.

This guide covers the full technical path: pre-migration assessment, data type mapping, handling Oracle-specific features that have no direct PostgreSQL equivalent, and three migration methods suited to different database sizes and downtime tolerances.

⚡ Quick Summary: Oracle to PostgreSQL Migration

The PL/SQL conflict: Oracle uses PL/SQL; PostgreSQL uses PL/pgSQL. While syntactically similar, Oracle features like Packages, Autonomous Transactions, and Global Temporary Tables have no direct equivalents and require code rewriting before migration.

The schema strategy: Always separate schema conversion from data migration. Apply your full target DDL in PostgreSQL and validate it before moving a single row of data.

The NUMBER type trap: Mapping Oracle's flexible NUMBER type globally to PostgreSQL NUMERIC introduces significant performance penalties. Identify true integers and map them to INTEGER or BIGINT to protect query performance.

Downtime minimization: Large enterprise databases (1TB+) cannot tolerate big-bang weekend cutovers. A CDC pipeline reading Oracle Redo Logs keeps PostgreSQL continuously synced during testing and validation, reducing cutover to a short application switchover window rather than a long write freeze.

Which Migration Method Fits Your Situation

Choose your approach based on three factors: downtime tolerance, database size, and whether you need ongoing sync during the cutover period.

| Scenario | Best method | Why |

|---|---|---|

| Live production app, no downtime allowed | CDC with Estuary | Streams inserts, updates, and deletes from Oracle Redo Logs; Oracle and PostgreSQL stay in sync while you validate |

| Small database (<100GB), scheduled maintenance window | CSV export and import | Simplest path, no tooling overhead, predictable |

| Querying Oracle from PostgreSQL during phased rewrite | Foreign Data Wrappers (FDW) | Not a full migration; lets PostgreSQL read Oracle tables directly during incremental rewrites |

| Large schema with thousands of PL/SQL objects | Ora2Pg for DDL + Estuary CDC for data | Ora2Pg generates the PostgreSQL DDL and conversion report; Estuary handles continuous data sync |

| Multi-terabyte database, enterprise-critical, minimal downtime needed | Estuary CDC from Oracle Redo Logs | Initialize schema with Ora2Pg, stream live changes with Estuary, validate in parallel, reduce cutover to a short switchover window |

Pre-Migration Assessment: What to Inventory First

Oracle to PostgreSQL migrations fail most often because teams underestimate hidden dependencies. Complete this assessment before writing a single line of DDL.

Database object inventory

Run this query in Oracle to count every object type you need to migrate:

sqlSELECT object_type, COUNT(*) AS object_count

FROM all_objects

WHERE owner = 'YOUR_SCHEMA'

GROUP BY object_type

ORDER BY object_count DESC;

Pay close attention to the counts for: TABLE, VIEW, PROCEDURE, FUNCTION, PACKAGE, PACKAGE BODY, TRIGGER, SEQUENCE, TYPE, and SYNONYM. Each object type has a different migration path and effort level.

Identify Oracle-specific blockers

These are the items that cannot be automatically converted and require manual engineering effort:

- Packages: Oracle groups related procedures and functions into Packages. PostgreSQL has no Package concept. Each package must be decomposed into individual functions and procedures within a schema.

- Autonomous Transactions: Oracle allows a transaction to commit independently of its parent transaction using

PRAGMA AUTONOMOUS_TRANSACTION. PostgreSQL has no native equivalent; the workaround requiresdblinkor thepg_backgroundextension. - Global Temporary Tables: Oracle GTTs persist across sessions. PostgreSQL temporary tables are session-scoped. Behavior differences must be tested with the application.

- Hierarchical queries (

CONNECT BY): Must be rewritten as recursive CTEs in PostgreSQL. - Oracle-specific SQL functions:

NVL,DECODE,SYSDATE,ROWNUM,DUALtable references, andTO_DATEwith Oracle-specific format masks all require rewriting. - Deferred constraints: Oracle and PostgreSQL handle these differently; audit all

DEFERRABLEconstraints carefully.

Cutover strategy decision

Decide before you start:

- Big bang: migrate schema and data once, switch everything at the same time. Higher risk, simpler process. Works for databases under 100GB with a maintenance window.

- Phased with CDC: keep Oracle and PostgreSQL synchronized during a validation period, then cut over by switching the application connection string. Required for production systems that cannot go offline.

Rollback plan

Define explicitly: what triggers a rollback, how long Oracle stays live as a fallback after cutover, and who owns the decision.

Oracle to PostgreSQL Data Type Mapping

This is the most common source of silent data corruption and failed imports. Map every column type before migrating.

| Oracle Type | PostgreSQL Equivalent | Critical Notes |

|---|---|---|

NUMBER(p,s) | NUMERIC(p,s) | Direct equivalent when precision and scale are defined |

NUMBER (no p/s) | INTEGER, BIGINT, or NUMERIC | Do not globally map to NUMERIC. Inspect actual values: if the column only contains integers, map to INTEGER or BIGINT. NUMERIC forces arbitrary-precision arithmetic on every row operation, which degrades query performance significantly on large tables. |

NUMBER(p,0) | INTEGER or BIGINT | No decimal component; use integer types for best performance |

VARCHAR2(n) | VARCHAR(n) or TEXT | If strict length enforcement is not needed, TEXT is simpler and equally performant |

CHAR(n) | CHAR(n) | Oracle pads with spaces to fixed length; PostgreSQL behavior is identical but confirm application-layer string comparisons |

NVARCHAR2(n) | VARCHAR(n) | PostgreSQL stores all text as UTF-8 natively; no separate N-prefixed types needed |

DATE | TIMESTAMP | Oracle DATE stores both date and time. PostgreSQL DATE stores date only. Always map Oracle DATE to TIMESTAMP unless you have confirmed no time component exists in the data. |

TIMESTAMP | TIMESTAMP | Direct equivalent |

TIMESTAMP WITH TIME ZONE | TIMESTAMPTZ | Direct equivalent |

TIMESTAMP WITH LOCAL TIME ZONE | TIMESTAMPTZ | PostgreSQL normalizes to UTC; confirm application timezone handling |

CLOB | TEXT | PostgreSQL TEXT has no size limit; test indexing strategy for very large values |

BLOB | BYTEA | Confirm encoding and transfer method; large objects impact replication |

RAW(n) | BYTEA | Binary data |

LONG RAW | BYTEA | Deprecated in Oracle; migrate away from this type |

XMLTYPE | XML | PostgreSQL has native XML support |

SDO_GEOMETRY | PostGIS geometry | Requires PostGIS extension |

ROWID | No equivalent | Drop or store as TEXT if used in application logic |

INTERVAL YEAR TO MONTH | INTERVAL | PostgreSQL supports interval types natively |

INTERVAL DAY TO SECOND | INTERVAL | Direct equivalent |

FLOAT(p) | DOUBLE PRECISION | Minor precision differences possible |

BINARY_FLOAT | REAL | Direct equivalent |

BINARY_DOUBLE | DOUBLE PRECISION | Direct equivalent |

The NUMBER performance trap in detail:

Oracle's NUMBER without precision or scale is used loosely across many schemas, sometimes for columns that only ever hold small integers. If you run a global NUMBER to NUMERIC mapping, every arithmetic operation on those columns forces PostgreSQL's arbitrary-precision engine, bypassing the faster native integer arithmetic. For tables with millions of rows and frequent aggregation queries, this is measurable. Run this before mapping:

sql-- Oracle: check actual max values in NUMBER columns to determine correct Postgres type

SELECT column_name,

MAX(LENGTH(TO_CHAR(ABS(column_value)))) AS max_digits,

MAX(column_value) AS max_value,

MIN(column_value) AS min_value,

SUM(CASE WHEN column_value != TRUNC(column_value) THEN 1 ELSE 0 END) AS decimal_count

FROM your_table

UNPIVOT (column_value FOR column_name IN (col1, col2, col3))

GROUP BY column_name;Handling Oracle-Specific Features

These Oracle features require manual rewriting; no automated tool reliably handles them.

Oracle feature compatibility matrix

| Oracle Feature | PostgreSQL Equivalent | Implementation Complexity |

|---|---|---|

| Packages | Group functions and procedures inside a PostgreSQL schema | Medium |

| Global Temporary Tables | CREATE TEMPORARY TABLE ... ON COMMIT PRESERVE ROWS | Low |

| Autonomous Transactions | Isolate logic and invoke via dblink or pg_background extension | High (requires architecture change) |

DUAL table | Remove entirely. PostgreSQL supports SELECT NOW(); without a FROM clause | Low |

CONNECT BY hierarchical queries | Rewrite using recursive CTEs (WITH RECURSIVE) | Medium |

SEQUENCE.NEXTVAL | Replace with PostgreSQL nextval('sequence_name') | Low |

ROWNUM | Replace with ROW_NUMBER() OVER () or LIMIT | Low |

NVL(a, b) | Replace with COALESCE(a, b) | Low |

DECODE(col, v1, r1, v2, r2) | Replace with CASE WHEN col = v1 THEN r1 WHEN col = v2 THEN r2 END | Low |

SYSDATE | Replace with NOW() or CURRENT_TIMESTAMP | Low |

ADD_MONTHS(date, n) | Replace with date + INTERVAL 'n months' | Low |

Oracle MERGE | PostgreSQL INSERT ... ON CONFLICT DO UPDATE | Medium |

PRAGMA SERIALLY_REUSABLE | No equivalent; refactor package state management | High |

Autonomous Transaction workaround

Oracle's Autonomous Transaction lets a procedure commit independently of its parent transaction. The most common use case is audit logging, where the log entry should persist even if the parent transaction rolls back.

Oracle syntax:

sqlCREATE OR REPLACE PROCEDURE log_action (msg VARCHAR2) AS

PRAGMA AUTONOMOUS_TRANSACTION;

BEGIN

INSERT INTO audit_logs VALUES (msg, SYSDATE);

COMMIT;

END;PostgreSQL workaround using dblink:

sql-- First install the extension

CREATE EXTENSION IF NOT EXISTS dblink;

CREATE OR REPLACE PROCEDURE log_action(msg TEXT) AS $$

BEGIN

PERFORM dblink_connect('log_conn', 'dbname=target_db user=migration_user');

PERFORM dblink_exec('log_conn',

format('INSERT INTO audit_logs VALUES (%L, NOW())', msg));

PERFORM dblink_disconnect('log_conn');

END;

$$ LANGUAGE plpgsql;The dblink call opens a separate database connection, inserts and commits within that connection, then closes it. The insert commits independently of the calling transaction, replicating the Autonomous Transaction behavior.

Rewriting CONNECT BY hierarchical queries

Oracle's CONNECT BY syntax is commonly used for organizational hierarchies, bill-of-materials, and tree structures.

Oracle syntax:

sqlSELECT employee_id, manager_id, name, LEVEL

FROM employees

START WITH manager_id IS NULL

CONNECT BY PRIOR employee_id = manager_id;

PostgreSQL equivalent using recursive CTE:

sqlWITH RECURSIVE org_hierarchy AS (

-- Anchor: top-level rows

SELECT employee_id, manager_id, name, 1 AS level

FROM employees

WHERE manager_id IS NULL

UNION ALL

-- Recursive: join children to parents

SELECT e.employee_id, e.manager_id, e.name, oh.level + 1

FROM employees e

INNER JOIN org_hierarchy oh ON e.manager_id = oh.employee_id

)

SELECT employee_id, manager_id, name, level

FROM org_hierarchy

ORDER BY level, employee_id;

Oracle to PostgreSQL Migration Tools Compared

No single tool handles the full Oracle to PostgreSQL migration end-to-end. Most teams combine two or three tools: one for schema conversion, one for data transfer, and optionally one for ongoing sync during validation. Here is how the major options compare.

| Tool | Best for | Key limitations |

|---|---|---|

| Ora2Pg | Schema DDL conversion, migration assessment reports, data export for one-time moves | Does not solve live CDC cutover; PL/SQL conversion output requires manual review and rewriting |

| AWS Schema Conversion Tool (SCT) | Schema conversion when migrating into AWS-hosted PostgreSQL (RDS, Aurora) | AWS-centric; still requires manual review of converted PL/SQL; not useful outside AWS target environments |

| AWS Database Migration Service (DMS) | Data migration and basic CDC into AWS targets (RDS PostgreSQL, Aurora) | Requires task tuning and careful LOB handling; validation is your responsibility; AWS infrastructure setup overhead |

| Estuary | CDC-based continuous sync from Oracle using LogMiner and redo logs; helps minimize cutover downtime by keeping PostgreSQL updated during the validation period | Does not convert Oracle PL/SQL, packages, stored procedures, or application logic; schema and type mapping still need to be planned separately, often with Ora2Pg or manual review |

| Debezium | Open-source CDC from Oracle LogMiner; Kafka-based streaming | Requires Kafka infrastructure; significant operational complexity to run and maintain in production |

| pgLoader | Automated data loading from Oracle to PostgreSQL; handles some type coercion | Limited Oracle support compared to Ora2Pg; no CDC capability; best for small to medium one-time migrations |

| Foreign Data Wrappers (oracle_fdw) | Querying Oracle tables directly from PostgreSQL during phased application rewrites | Not a migration tool; no data is copied; performance depends on Oracle query latency; requires oracle_fdw extension |

| CSV export and COPY | Small one-time migrations with a maintenance window | Requires full downtime during import; manual validation required; not suitable for large databases |

Recommended combinations by scenario

- Small database, one-time move: Ora2Pg for schema and data export, PostgreSQL

COPYfor import. - Medium database, AWS target: AWS SCT for schema conversion review, AWS DMS for data migration and initial CDC.

- Enterprise database, any target, minimal downtime: Ora2Pg to generate and review the target DDL, Estuary CDC to stream live changes during the validation period and reduce cutover to a short switchover window.

- Phased application rewrite:

oracle_fdwto bridge Oracle and PostgreSQL while services are rewritten incrementally, then Estuary CDC when ready for full cutover.

Method 1: Near-Zero Downtime Migration with Estuary CDC

Estuary reads Oracle's Redo Logs via LogMiner and streams every insert, update, and delete into PostgreSQL continuously. Oracle and PostgreSQL stay synchronized during the validation period, allowing the cutover to be reduced to a short application switchover rather than a long write freeze.

Note on "zero downtime": Even with CDC, production cutover involves application connection draining, final validation, rollback readiness, and sometimes a brief write pause depending on application architecture. CDC minimizes this window significantly but does not eliminate coordination overhead entirely.

When to use: live production databases, enterprise systems, large databases where extended downtime is not acceptable.

Prerequisites

- Oracle 11g or later

- An Estuary account at dashboard.estuary.dev

- PostgreSQL target database with a user that has

CREATE TABLEprivileges - Network access from Estuary to Oracle (IP allowlist or SSH tunnel)

Step 1: Create the Estuary user in Oracle

Run these commands against your Oracle database. The exact SQL depends on whether you are using a standard database or a Container Database (CDB/PDB).

For standard (non-container) databases:

sql-- Create the user

CREATE USER estuary_flow_user IDENTIFIED BY <strong_password>;

GRANT CREATE SESSION TO estuary_flow_user;

-- Grant read access to source tables

GRANT SELECT ANY TABLE TO estuary_flow_user;

-- Create the watermarks table (required by the connector)

CREATE TABLE estuary_flow_user.FLOW_WATERMARKS(

SLOT VARCHAR(1000) PRIMARY KEY,

WATERMARK VARCHAR(4000)

);

-- Grant LogMiner and metadata permissions

GRANT SELECT_CATALOG_ROLE TO estuary_flow_user;

GRANT EXECUTE_CATALOG_ROLE TO estuary_flow_user;

GRANT SELECT ON V$DATABASE TO estuary_flow_user;

GRANT SELECT ON V$LOG TO estuary_flow_user;

GRANT LOGMINING TO estuary_flow_user;

GRANT INSERT, UPDATE ON estuary_flow_user.FLOW_WATERMARKS TO estuary_flow_user;

-- Ensure quota on USERS tablespace

ALTER USER estuary_flow_user QUOTA UNLIMITED ON USERS;

-- Enable supplemental logging (required for LogMiner CDC)

ALTER DATABASE ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS;

For Amazon RDS Oracle instances, replace the supplemental logging command with:

sqlBEGIN

rdsadmin.rdsadmin_util.alter_supplemental_logging(

p_action => 'ADD',

p_type => 'ALL'

);

END;For Container Databases (CDB/PDB): the user must have the c## prefix and CONTAINER=ALL on all grants. Note that Amazon RDS Oracle does not support access to the root container and therefore does not work with CDB multi-tenant architecture for CDC.

sqlCREATE USER c##estuary_flow_user IDENTIFIED BY <strong_password> CONTAINER=ALL;

GRANT CREATE SESSION TO c##estuary_flow_user CONTAINER=ALL;

GRANT SELECT ANY TABLE TO c##estuary_flow_user CONTAINER=ALL;

CREATE TABLE c##estuary_flow_user.FLOW_WATERMARKS(

SLOT VARCHAR(1000) PRIMARY KEY,

WATERMARK VARCHAR(4000)

);

GRANT INSERT, UPDATE ON c##estuary_flow_user.FLOW_WATERMARKS

TO c##estuary_flow_user CONTAINER=ALL;

GRANT SELECT_CATALOG_ROLE TO c##estuary_flow_user CONTAINER=ALL;

GRANT EXECUTE_CATALOG_ROLE TO c##estuary_flow_user CONTAINER=ALL;

GRANT LOGMINING TO c##estuary_flow_user CONTAINER=ALL;

GRANT ALTER SESSION TO c##estuary_flow_user CONTAINER=ALL;

GRANT SET CONTAINER TO c##estuary_flow_user CONTAINER=ALL;

ALTER DATABASE ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS;Common failure point: If the capture connects but CDC fails immediately after, the cause is almost always one of three things: supplemental logging not enabled, missing watermarks table, or missing LOGMINING grant. Check all three before investigating further.

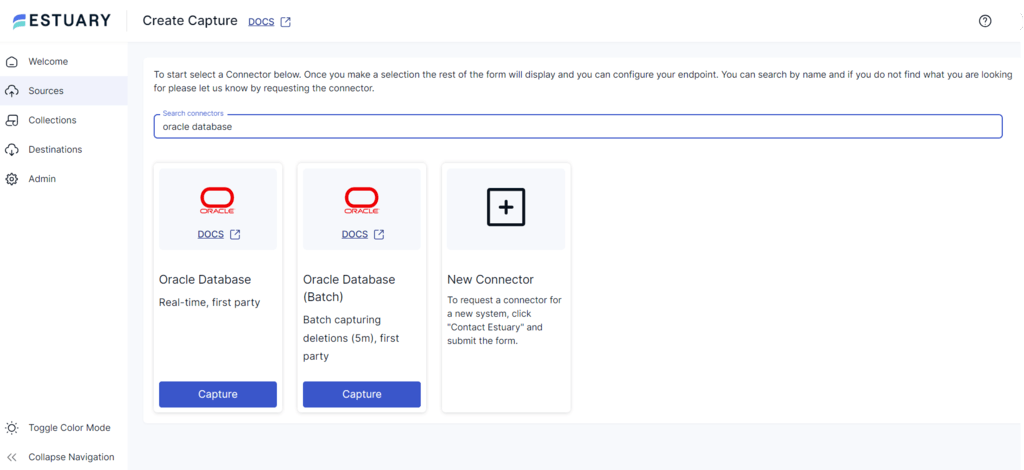

Step 2: Create the Oracle capture in Estuary

- Log in to dashboard.estuary.dev

- Navigate to Sources and click + New Capture

- Search for Oracle and select the Oracle Database (Real-time) connector

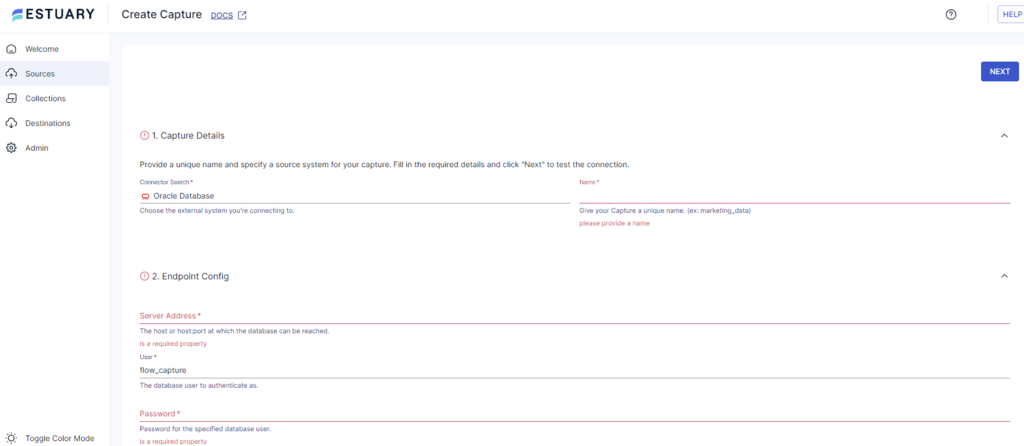

- Fill in the endpoint configuration:

- Address:

your-oracle-host:1521 - Username:

estuary_flow_user(orc##estuary_flow_userfor CDB) - Password: the password set above

- Database: your Oracle database name (defaults to

ORCL; for CDB use the PDB name)

- Address:

- Click Next. Estuary tests the connection and discovers available schemas and tables.

- Select the tables you want to replicate

- Click Save and Publish. The initial backfill begins immediately.

Dictionary Mode note: The connector defaults to extract mode, which handles schema changes gracefully by extracting the dictionary from the redo logs. Online mode uses less disk but may break if schema changes occur during capture. For production migrations, keep the default extract mode.

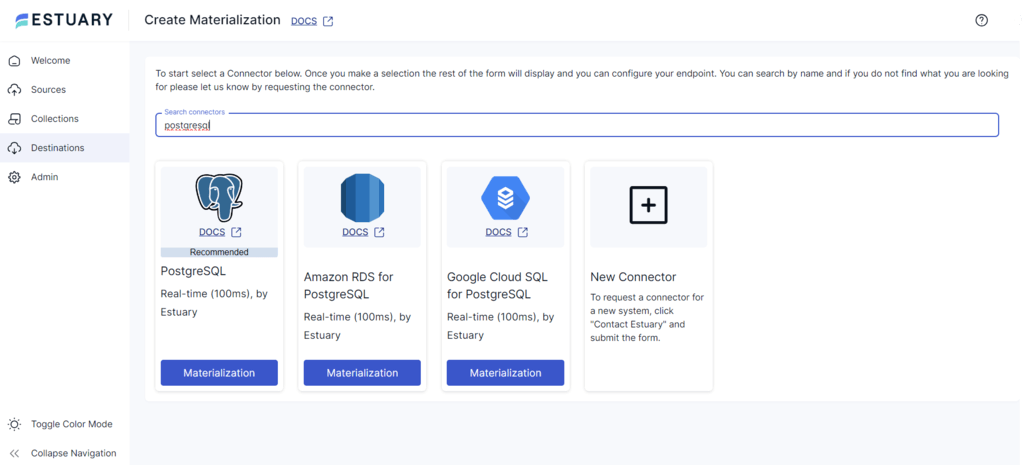

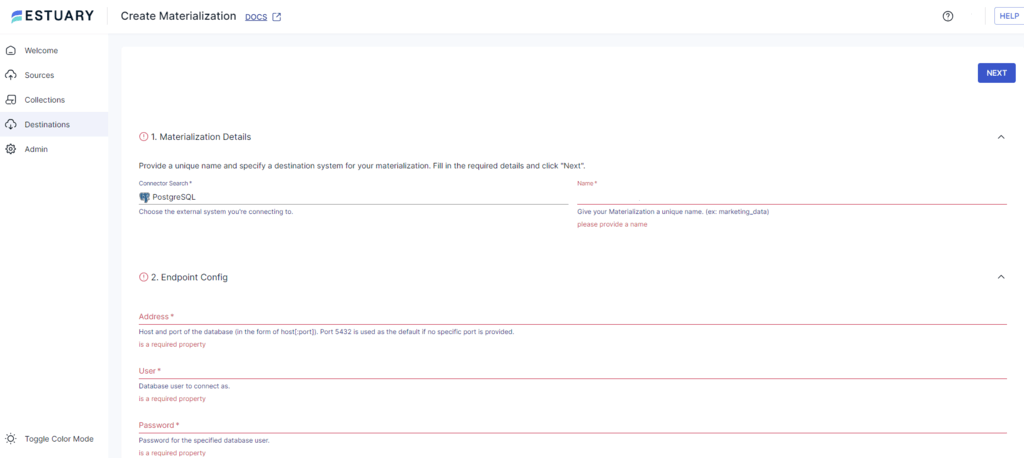

Step 3: Create the PostgreSQL materialization

- After the capture publishes, navigate to Destinations and click + New Materialization

- Select the PostgreSQL connector

- Fill in the endpoint configuration:

- Address:

your-postgres-host:5432 - User: a PostgreSQL user with

CREATE TABLEandINSERTprivileges - Password

- Database: target database name

- Database Schema: target schema (default:

public)

- Address:

- Click Next. Estuary maps Oracle collections to PostgreSQL tables.

- Click Save and Publish. Estuary performs the initial full load, then switches to streaming CDC.

From this point, every change in Oracle appears in PostgreSQL within milliseconds. Run your validation queries while both databases are live, then cut over by updating the application connection string.

Testing the Oracle Connector with a Local Docker Instance

If you want to validate the Estuary Oracle connector setup before connecting to a production database, Oracle's free container image lets you spin up a local Oracle instance for testing.

For a full video walkthrough of this Docker setup, see the Estuary Oracle CDC tutorial on YouTube.

The key steps specific to a Docker-based Oracle setup that differ from production:

1. Enable the archive log in Docker compose

The archive log must be explicitly enabled in your compose environment. Add ORACLE_ENABLE_ARCHIVELOG=true (or equivalent for your chosen image) to the environment block before running docker compose up.

2. Set the redo log retention policy using RMAN

By default, Oracle's retention policy is based on backup redundancy count, not time. For CDC, you need time-based retention to ensure archived logs are available if the capture is interrupted and needs to resume.

After the container is running, open a bash session inside it:

bashdocker exec -it <container_name> bashStart Oracle Recovery Manager and log in as a database administrator:

bashrman target /Set a time-based retention policy and create the first archived log backup:

sql-- Set 7-day retention window for archived logs

CONFIGURE RETENTION POLICY TO RECOVERY WINDOW OF 7 DAYS;

-- Create the first archived log backup to activate the policy

BACKUP DATABASE PLUS ARCHIVELOG;A longer retention window provides more recovery leeway if the CDC capture is interrupted. For production databases, align this value with your RTO requirements.

3. Oracle FREE database name for container databases

When using Oracle's free edition image (version 21c+), the default PDB name is FREE, not ORCL. In the Estuary connector configuration, set the Database field to FREE rather than the default ORCL.

4. Username format for container databases

As covered in the prerequisites above, CDB users require the c## prefix. In the Estuary dashboard, enter c##estuary_flow_user as the username, not estuary_flow_user.

Once the container is running with supplemental logging enabled, the watermarks table created, and RMAN retention configured, the Estuary capture setup steps are identical to a production Oracle instance.

Method 2: One-Time Migration Using CSV Export

For databases under 100GB where a maintenance window is acceptable, CSV export from Oracle and import into PostgreSQL is the simplest and most predictable path.

Step 1: Export from Oracle using SQL*Plus or Data Pump

Using SQL*Plus spool for individual tables:

sql-- Connect to Oracle and spool output

SET COLSEP ','

SET PAGESIZE 0

SET TRIMSPOOL ON

SET HEADSEP OFF

SET FEEDBACK OFF

SPOOL /export/your_table.csv

SELECT * FROM your_schema.your_table;

SPOOL OFF

Using Oracle Data Pump (expdp) for full schema exports is faster for large tables but produces Oracle-proprietary format. Use impdp to re-export as CSV if needed, or use a third-party tool like Ora2Pg for format-aware export.

Step 2: Create the target schema in PostgreSQL

Apply your DDL, with all Oracle-to-PostgreSQL type mappings applied (see the type mapping table above). Drop indexes before loading data and recreate them after.

Step 3: Import using PostgreSQL COPY

sqlCOPY your_table (col1, col2, col3)

FROM '/export/your_table.csv'

WITH (FORMAT CSV, HEADER TRUE, ENCODING 'UTF8', NULL '');

Step 4: Validate and reset sequences

sql-- Row count check

SELECT COUNT(*) FROM your_table;

-- Reset sequence to current max ID after data load

SELECT setval('your_table_id_seq', (SELECT MAX(id) FROM your_table));

Method 3: Phased Migration Using Foreign Data Wrappers

Foreign Data Wrappers let PostgreSQL query Oracle tables directly without copying data. This is not a migration tool but a bridge for teams rewriting their application one service at a time.

sql-- Install the oracle_fdw extension

CREATE EXTENSION oracle_fdw;

-- Create the foreign server

CREATE SERVER oracle_server

FOREIGN DATA WRAPPER oracle_fdw

OPTIONS (dbserver '//oracle-host:1521/ORCL');

-- Create user mapping

CREATE USER MAPPING FOR current_user

SERVER oracle_server

OPTIONS (user 'read_only_user', password 'password');

-- Import a table from Oracle

IMPORT FOREIGN SCHEMA "ORACLE_SCHEMA"

LIMIT TO (your_table)

FROM SERVER oracle_server

INTO pg_schema;

PostgreSQL can now query pg_schema.your_table and Oracle serves the data in real time. As you rewrite each service to use native PostgreSQL tables, drop the corresponding foreign table.

Post-Migration Validation

Never close the migration until data integrity is confirmed. Run these checks in PostgreSQL after every method.

Row count comparison

sql-- Run this in Oracle

SELECT COUNT(*) FROM your_schema.your_table;

-- Run this in PostgreSQL

SELECT COUNT(*) FROM your_table;

MD5 checksum for data integrity

sql-- PostgreSQL MD5 aggregate validation

-- Compare the output against an equivalent hash from Oracle

SELECT md5(string_agg(row_hash, '' ORDER BY id)) AS table_checksum

FROM (

SELECT id,

md5(concat_ws('|', id::text, col1::text, col2::text, col3::text)) AS row_hash

FROM your_table

) subquery;

Run the equivalent string concatenation and hash in Oracle using STANDARD_HASH or DBMS_CRYPTO, then compare the outputs. A mismatch pinpoints which row window contains a discrepancy.

Sequence validation

sql-- Check that all sequences are set correctly after import

SELECT sequence_name, last_value

FROM information_schema.sequences

WHERE sequence_schema = 'public';

-- Compare last_value against the actual table maximum

SELECT MAX(id) FROM your_table;

Application query smoke tests

After cutover, run your 10 most critical application queries against PostgreSQL and compare execution plans and row counts against Oracle. Pay special attention to queries that used Oracle-specific functions, any query using ROWNUM, and any query over tables that had NUMBER columns remapped to INTEGER.

Common Oracle to PostgreSQL Migration Errors

| Error | Root Cause | Fix |

|---|---|---|

ERROR: operator does not exist: integer = text | Oracle implicit type coercion; PostgreSQL requires explicit casting | Add explicit cast: WHERE id = '123'::integer |

ERROR: column "rownum" does not exist | ROWNUM is Oracle-specific | Replace with ROW_NUMBER() OVER () or LIMIT n |

| Sequences generating duplicate key errors | Sequences not reset after bulk import | Run setval() for every identity column after load |

| Date columns showing wrong time | Oracle DATE includes time; mapped to PostgreSQL date (date only) | Change column type to TIMESTAMP |

| Performance regression on aggregation queries | NUMBER columns mapped to NUMERIC | Identify integer-only columns and alter to INTEGER or BIGINT |

ERROR: function nvl() does not exist | NVL is Oracle-specific | Replace with COALESCE() |

| Trigger logic producing wrong results | PL/SQL exception handling differs from PL/pgSQL | Rewrite EXCEPTION WHEN OTHERS blocks using PostgreSQL error codes |

ERROR: syntax error at or near "CONNECT" | CONNECT BY is Oracle-specific | Rewrite as recursive CTE |

Reference Documentation

The following official documentation sources were used in preparing this guide and are recommended reading for anyone implementing an Oracle-to-PostgreSQL migration.

Oracle:

- Oracle LogMiner documentation — official reference for LogMiner setup, supplemental logging, and redo log mining

- Oracle Data Pump (expdp/impdp) — for full schema and data exports

- Oracle supplemental logging — required for CDC-based capture

PostgreSQL:

- PostgreSQL COPY command — official reference for bulk data import

- PostgreSQL recursive CTEs (WITH RECURSIVE) — for rewriting Oracle

CONNECT BYqueries - PostgreSQL dblink extension — for implementing Autonomous Transaction workarounds

- PostgreSQL Foreign Data Wrappers — for phased migration bridging

Estuary:

- Estuary Oracle CDC connector documentation — prerequisites, setup, and configuration reference

- Estuary PostgreSQL materialization connector — destination configuration reference

Conclusion

Oracle to PostgreSQL migration has three distinct technical challenges: data type mapping (especially NUMBER and DATE), PL/SQL object conversion (especially Packages and Autonomous Transactions), and choosing a cutover strategy that matches your downtime tolerance.

For enterprise production databases where extended downtime is not acceptable, Estuary's LogMiner-based CDC can keep Oracle and PostgreSQL synchronized during the validation period, reducing cutover to a short application switchover window. For smaller databases with a maintenance window, CSV export and import via PostgreSQL COPY is the fastest and most predictable path.

The data type mapping table, Oracle feature compatibility matrix, and troubleshooting table above cover the issues responsible for the majority of migration failures.

Start your free Estuary migration or explore related guides:

FAQs

Can Ora2Pg handle the full migration automatically?

Does Estuary support Oracle RAC?

What Oracle version does Estuary support?

How do I handle Oracle Packages during migration?

About the author

Dani is a data professional with a rich background in data engineering and real-time data platforms. At Estuary, Daniel focuses on promoting cutting-edge streaming solutions, helping to bridge the gap between technical innovation and developer adoption. With deep expertise in cloud-native and streaming technologies, Dani has successfully supported startups and enterprises in building robust data solutions.