Nowadays, data pipeline failures are often subtle. Pipelines may slow down quietly, miss events, or drift in ways that are difficult to detect. These errors usually surface much later, in dashboards or when AI systems make unexpected decisions. That’s where real-time Snowflake pipeline monitoring comes in. It doesn’t just verify that tasks are actually running; it also tracks how the pipeline behaves over time.

In architectures where Snowflake's analytics, machine learning, and AI workloads are central, these silent problems are far more costly than overt errors. By the time the problem has been noticed, it has already spread downstream. So, in order to actually trust real-time analytics and AI systems, we need pipeline observability. Of course, data freshness and reliability cannot be guaranteed without monitoring key metrics such as latency, error signals, and throughput.

Let’s take the Estuary platform as an example. It offers basic observability capabilities for data flows, such as viewing the overall status of connectors, tracking defined alerts for pipelines, and reviewing generated logs directly from the dashboard. These built-in features facilitate daily operational monitoring of pipelines.

If you’re looking for something that’s more advanced, Estuary also offers the OpenMetrics API, which can integrate with existing observability tools. By exposing pipeline health signals as metrics, teams can observe how data behaves while it’s in motion. This way, you can shift the focus from “Is the pipeline working?” to “Is the pipeline working correctly?”

Why “Running” Pipelines Aren’t Always Healthy

Traditional pipeline monitoring approaches generally focus only on whether jobs are running or not. In batch-based ETL systems, this was often considered sufficient: when a job failed, an alarm was triggered, and the team would intervene.

However, this model isn't enough in more robust batch pipelines or real-time systems, where data is continuously fed into Snowflake. While a pipeline may appear to be running, several problems may be occurring in the background at the same time: source system changes reaching Snowflake late, silent data loss because retry mechanisms hide errors, and throughput that declines over time without triggering any alarm.

These are usually noticed when dashboards become inconsistent, features grow outdated, or AI models start producing unexpected outputs. By this time, the erroneous data has already been used in business decisions. Therefore, what needs to be monitored in modern architectures isn't just the operation of the pipeline but also how its behavior changes over time. A healthy pipeline should be able to continue transporting data at the right speed, without errors and in a predictable manner.

| | Traditional pipelines (batch ETL) | Modern pipelines (real-time) |
|---|---|---|
| Focus | Operational - Is the job running? | Operational + data health |
| Issues | Processes and components that make the job successful | More downstream, like silent data loss, throughput degradation |
| Detection | Binary alarms (on/off) | Behavioral analysis and trends monitoring |
| Business risk | Delays in reporting and decision making until job is fixed | Dashboards and decisions based on poor-quality data |
| Success metric | Uptime and completion | Predictability, speed, and integrity over time |

What Really Matters When Monitoring Snowflake Pipelines

There are numerous metrics that can be checked when monitoring Snowflake pipelines. However, trying to watch all of them at once tends to bury the signals that matter. CPU usage, worker count, or job statuses can be useful, but viewed purely from an operational perspective they mostly generate noise.

Effective Snowflake pipeline monitoring focuses on a few signals that accurately reflect business impact. Below, you’ll find three key metrics that stand out:

- Throughput: Throughput is one of the earliest warning signals for Snowflake pipelines. It indicates whether data is flowing into Snowflake and at what speed. A decrease in throughput is often the first sign of upstream slowdowns, downstream pressure, or write problems on the target side. This decrease may lead to delays in the downstream analysis and a loss of data freshness, even if the pipeline is still working.

- Error counts: Errors don't always cause the pipeline to stop. Systems can actually remain operational thanks to retry mechanisms. That’s why we often miss these errors and warnings. In practice, they can be related to exceeding rate limits or quotas on API-based sources, outdated or expired credentials, or configuration issues that do not immediately halt the pipeline. Even if your pipeline seems to be working properly, you need to monitor the number of errors that occur at specific time intervals in order to see if there’s anything going wrong. Intervention is possible when these issues start to accumulate, even if Snowflake hasn’t identified any just yet.

- Latency (behaviorally observed): End-to-end latency is best observed not as a single metric, but through pipeline behavior. When throughput starts to decrease, the number of errors or warnings spikes, or changes to the source system appear in Snowflake with a delay, you can safely assume the pipeline has started to lag.

| Metric | What it shows | Warning signs | Impact |
|---|---|---|---|
| Throughput | Whether data flows into Snowflake and how fast | A slowdown, which usually points to upstream slowdowns, downstream pressure, or write problems | Delays in downstream analysis, loss of data freshness |
| Error counts | Stability of the configuration | Spikes in retries, exceeding rate limits | Pipeline stop, data lag, increased compute costs |
| Latency | Entire pipeline behavior | Throughput decrease, error spike, changes appear with a delay | Outdated dashboards and data for real-time decisions |
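One way to turn the throughput signal into an automated check is to compare recent readings against a longer baseline. The sketch below is illustrative only: the function name `throughput_dropped`, the window size, and the 50% threshold are assumptions chosen for the example, not values recommended by Estuary or Snowflake.

```python
from statistics import mean


def throughput_dropped(samples, recent_n=5, drop_ratio=0.5):
    """Return True when the recent average falls below drop_ratio * baseline.

    samples: documents-per-minute readings, oldest first (hypothetical input).
    The baseline is the mean of everything before the last `recent_n` readings.
    """
    if len(samples) <= recent_n:
        return False  # not enough history to form a baseline
    baseline = mean(samples[:-recent_n])
    recent = mean(samples[-recent_n:])
    if baseline == 0:
        return False  # an idle pipeline has nothing to drop from
    return recent < drop_ratio * baseline


# Made-up readings: one steady series and one that degrades halfway through.
steady = [120, 118, 125, 122, 119, 121, 117, 123, 120, 124]
degraded = [120, 118, 125, 122, 119, 40, 35, 30, 28, 25]

print(throughput_dropped(steady))    # False
print(throughput_dropped(degraded))  # True
```

In both series the pipeline would still report as "running"; only the comparison against its own recent history reveals the slowdown.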

Hands-On Pipeline Observability

Up to this point, we've examined how Snowflake pipelines should be monitored in theory. Now, we'll put these into practice by implementing pipeline observability using Estuary’s OpenMetrics API. We'll establish a pipeline flowing from a simple source system to Snowflake, use Estuary’s OpenMetrics API to expose pipeline health signals, and visualize those metrics in Grafana in order to observe pipeline behavior in real time.

Our goal isn't to build a complex architecture but to clearly demonstrate how metrics like latency, error, and throughput are generated and interpreted in practice using the OpenMetrics API.

To follow along, you will need:

- Estuary account

- Credentials for your pipeline systems (in this demo, Neon and Snowflake)

- Local Docker installation to test Prometheus and Grafana integrations

Ready? Let's begin.

1. Setting Up a Minimal Snowflake Pipeline

In this section, our goal is to create a real data pipeline that produces observable behavior.

As pipeline complexity increases, so does the need for observability. But fundamental problems often arise even in the simplest flows. Therefore, we'll start with a source system that is easy to track and has clear behavior.

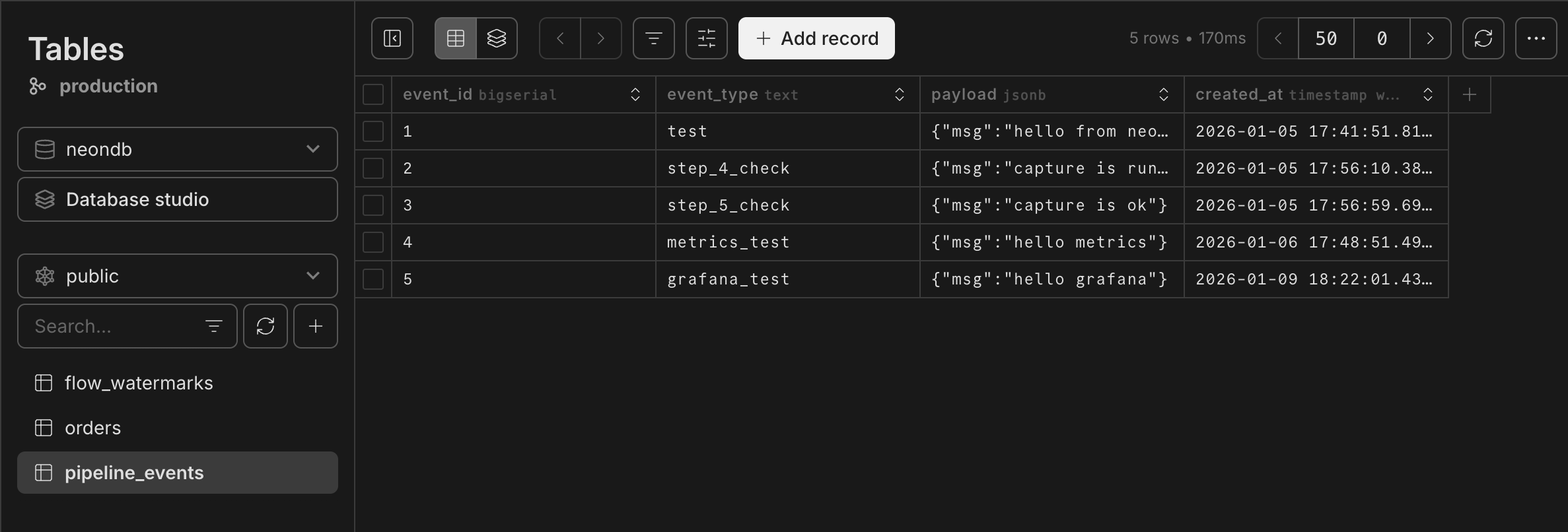

First, we'll create a simple PostgreSQL table on Neon to build the source system. Our table consists of a few basic columns representing the events to be carried along the pipeline. Here, our aim is to observe the flow and behavior of the data rather than the logic behind its processing.

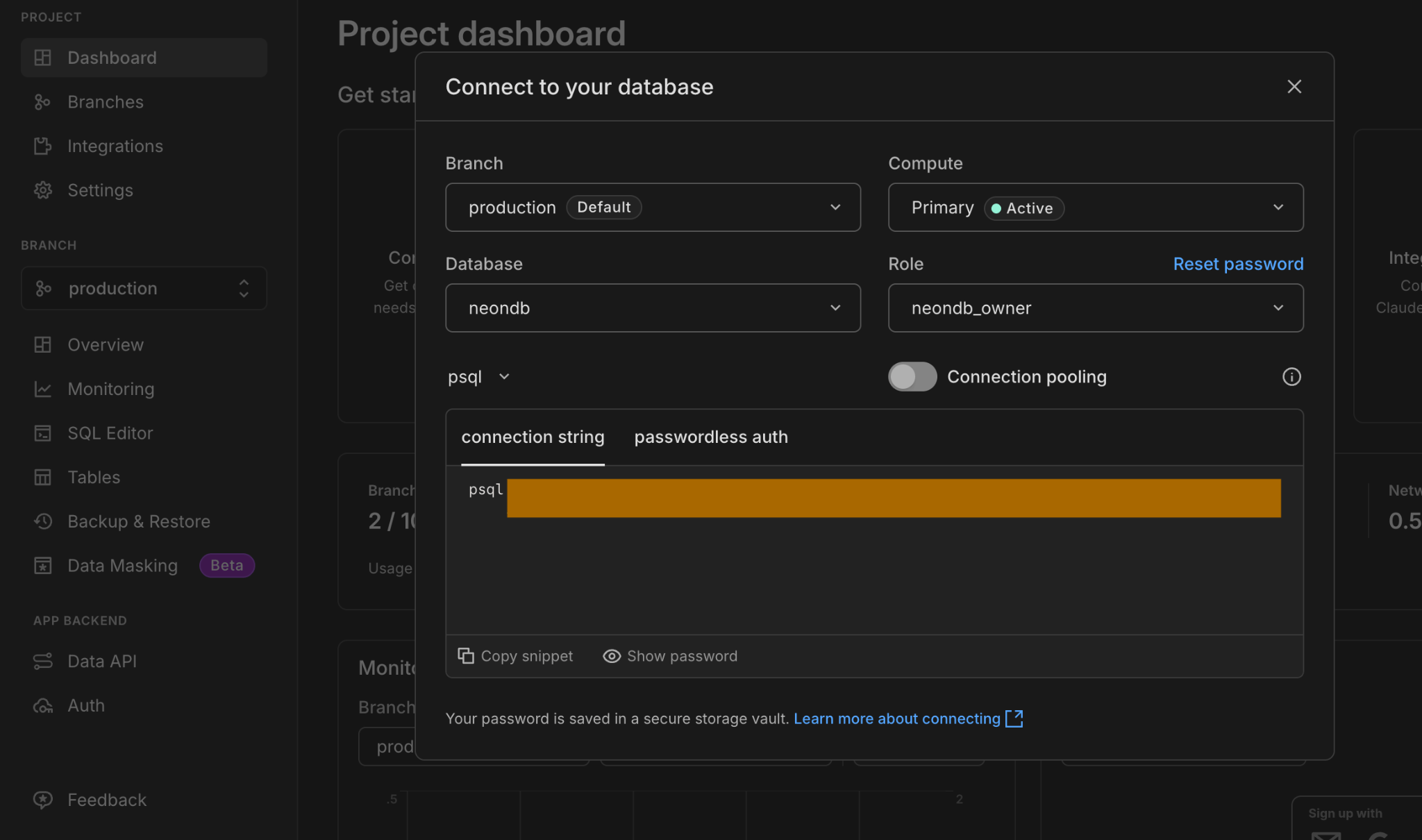

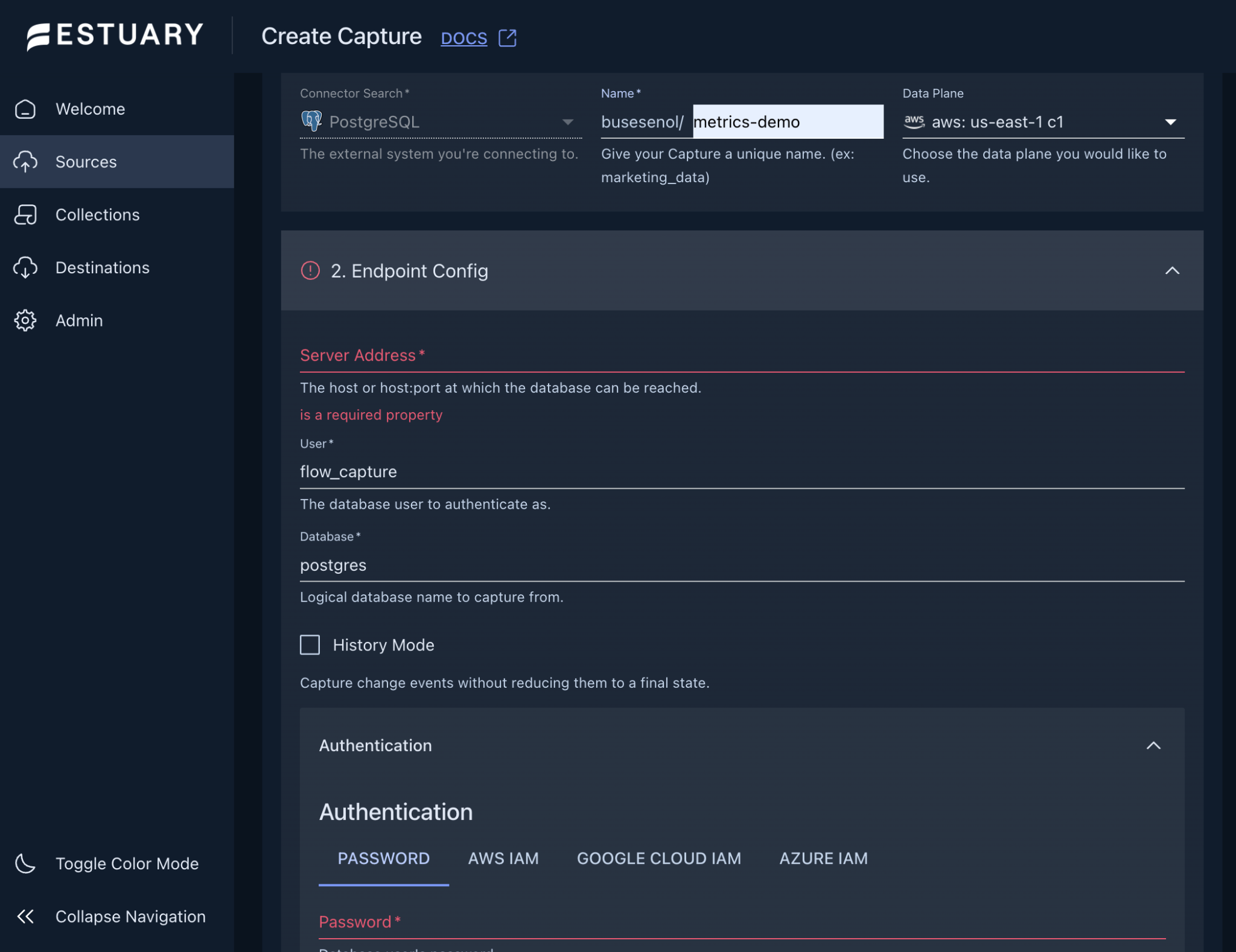

Next, we will create a capture so that the changes in this table can be monitored by Estuary using the CDC (Change Data Capture) method. Navigate to Estuary UI -> Sources -> New Capture and then select PostgreSQL. This will prompt you for connection information. You can obtain this info from the Neon Dashboard by selecting "Connect to your database."

Then, use this information to fill in the required details for the Estuary Capture. Create a data flow, and save it.

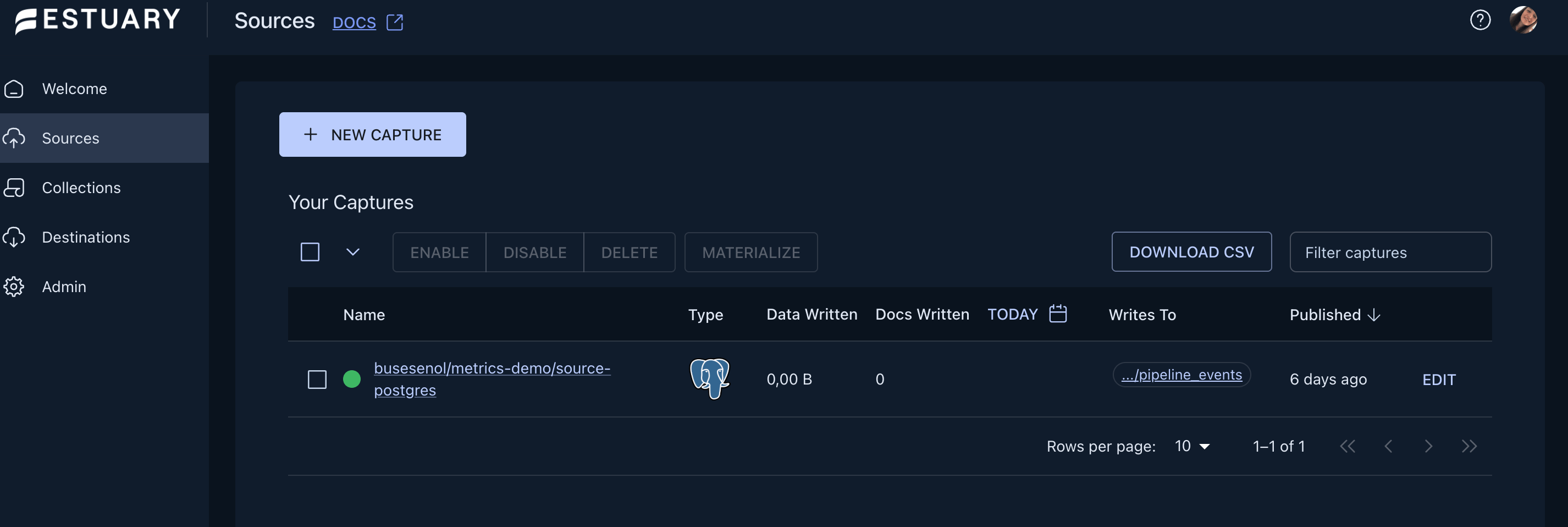

Once the process is complete, you should see the capture you’ve created in the Captures list with a green dot next to it.

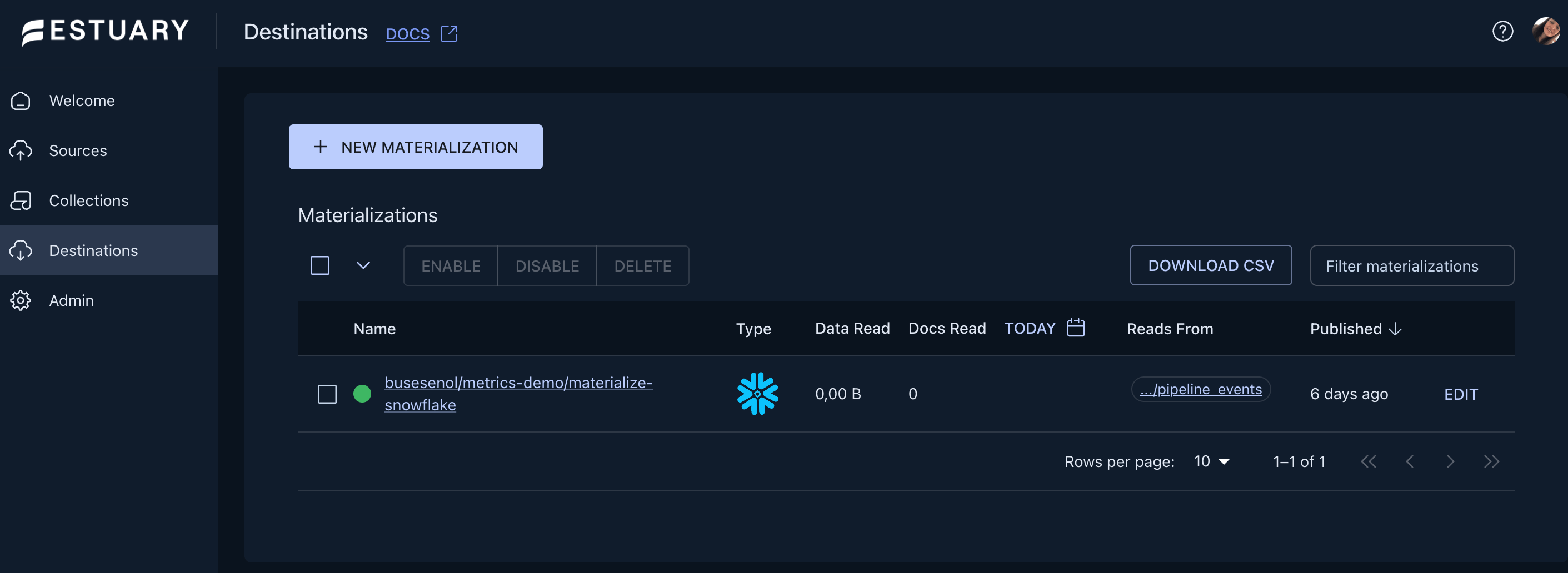

Our next step is to create the materialization. To do this, open the capture we’ve created and click the "Materialize" button. Select Snowflake on the page that loads. While configuring the materialization, fill in the write settings for the target table using the connection information from your Snowflake account.

In this step, the Snowflake database and schema that'll receive the data are specified. After the configuration is complete, verify that the capture and materialization components appear in a green (healthy) status in the Estuary interface. This indicates that the data has been transferred from Postgres to Snowflake without any problems.

We've completed our core pipeline from Postgres to Snowflake. Now our data flows end-to-end, and we have a real system where we can observe the behavior of this flow.

2. Exposing Pipeline Metrics via the OpenMetrics API with Prometheus

Once the pipeline setup has been completed, the next step is to measure the health of the flow. Estuary exports pipeline health metrics via the OpenMetrics API. Prometheus then collects and converts them into meaningful signals, which allows us to assess how the pipeline is working.

OpenMetrics standardizes how metrics are exported. Estuary generates health signals along the pipeline in this format and provides direct access to metrics such as throughput, error counts, and task statuses. As a result, we can observe pipeline behavior without the need for custom integrations or vendor-specific solutions.

On their own, these metrics are just raw data. Collected consistently over time, though, they become meaningful and reveal trends. Prometheus complements the OpenMetrics API in this regard, as it scrapes the metrics provided by Estuary at specific intervals, stores them as time series, and allows us to monitor changes in the pipeline's behavior over longer periods.

In fact, the biggest advantage of using Prometheus is that we can evaluate metrics not only in real time but also over specific time windows. This makes it possible to differentiate between short-term fluctuations and long-term problems.

The OpenMetrics API uses one main endpoint to retrieve metrics.

```plaintext
https://agent-api-1084703453822.us-central1.run.app/api/v1/metrics/{prefix}/
```

In order to enable Prometheus to collect metrics from the Estuary OpenMetrics endpoint, we first need a simple configuration file. Below, the prometheus.yml file that has been created locally defines which endpoint Prometheus will collect Estuary metrics from and at what intervals.

There are two places that need to be updated in this YAML file:

- REFRESH TOKEN: Token obtained from the authentication page via the Estuary UI.

- PREFIX: Your entire tenant or a subset of it. In this demo, I used "metrics_path: /api/v1/metrics/busesenol/metrics-demo/".

```yaml
# prometheus.yml
global:
  scrape_interval: 1m

scrape_configs:
  - job_name: estuary
    scheme: https
    bearer_token: REFRESH TOKEN
    metrics_path: /api/v1/metrics/PREFIX/
    static_configs:
      - targets: [agent-api-1084703453822.us-central1.run.app]
```

After editing this file, open a terminal in the same directory. Then, start Prometheus using Docker with the following command:

```bash
docker run --rm -it -p "9090:9090" -v "$(pwd)/prometheus.yml:/etc/prometheus/prometheus.yml" prom/prometheus:latest
```

Prometheus can then be accessed via http://localhost:9090/. If the target health state on the screen is shown as up, it means it's successfully scraping metrics from the Estuary OpenMetrics API.
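If the target does not come up, it can help to look at the raw endpoint output outside of Prometheus. The following is a minimal sketch under stated assumptions: it assumes the endpoint accepts the same token as an `Authorization: Bearer` header (mirroring the `bearer_token` setting above), the helper names `fetch_metrics` and `parse_metrics` are hypothetical, and the `task` label in the sample text is made up for illustration. The parser is a deliberately simple reading of the text format, not a complete OpenMetrics implementation.

```python
import re
import urllib.request

# Placeholders: substitute your own refresh token and prefix.
BASE_URL = "https://agent-api-1084703453822.us-central1.run.app"
PREFIX = "PREFIX"
TOKEN = "REFRESH TOKEN"

# metric name, optional {labels}, then the sample value
SAMPLE_LINE = re.compile(r'^([A-Za-z_:][A-Za-z0-9_:]*)(\{.*\})?\s+(\S+)')


def parse_metrics(text):
    """Parse metrics text into (name, labels, value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines, HELP/TYPE comments, and "# EOF"
        match = SAMPLE_LINE.match(line)
        if match:
            name, labels, value = match.groups()
            samples.append((name, labels or "", float(value)))
    return samples


def fetch_metrics(prefix=PREFIX, token=TOKEN):
    """Fetch the raw metrics text (assumes Bearer-token authentication)."""
    request = urllib.request.Request(
        f"{BASE_URL}/api/v1/metrics/{prefix}/",
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.read().decode("utf-8")


# Offline demonstration with made-up sample text.
# Replace `example` with fetch_metrics() to read live data.
example = (
    "# TYPE materialized_out_docs counter\n"
    'materialized_out_docs_total{task="demo/materialize"} 1250\n'
    "# EOF\n"
)
for sample in parse_metrics(example):
    print(sample)
```

Seeing the metric names and labels in plain text also makes it easier to decide which series to chart later.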

3. Connecting Metrics to Grafana

The next step is to make these metrics readable and interpretable. This is where visualization tools like Grafana or Datadog come into play.

They allow us to monitor pipeline behavior in real time using the metrics collected by Prometheus. Throughput changes, error increases, or delays can be easily tracked via dashboards. Therefore, pipeline health can be monitored not only with numbers but also with visual signals.

In this demo, we'll use Grafana to visualize the metrics (though the same approach can be applied to other observability tools such as Datadog).

First, we'll run Grafana locally with the following command. We can access it using this link: http://localhost:3000.

plaintext language-bashdocker run -d \\

--name=grafana \\

-p 3000:3000 \\

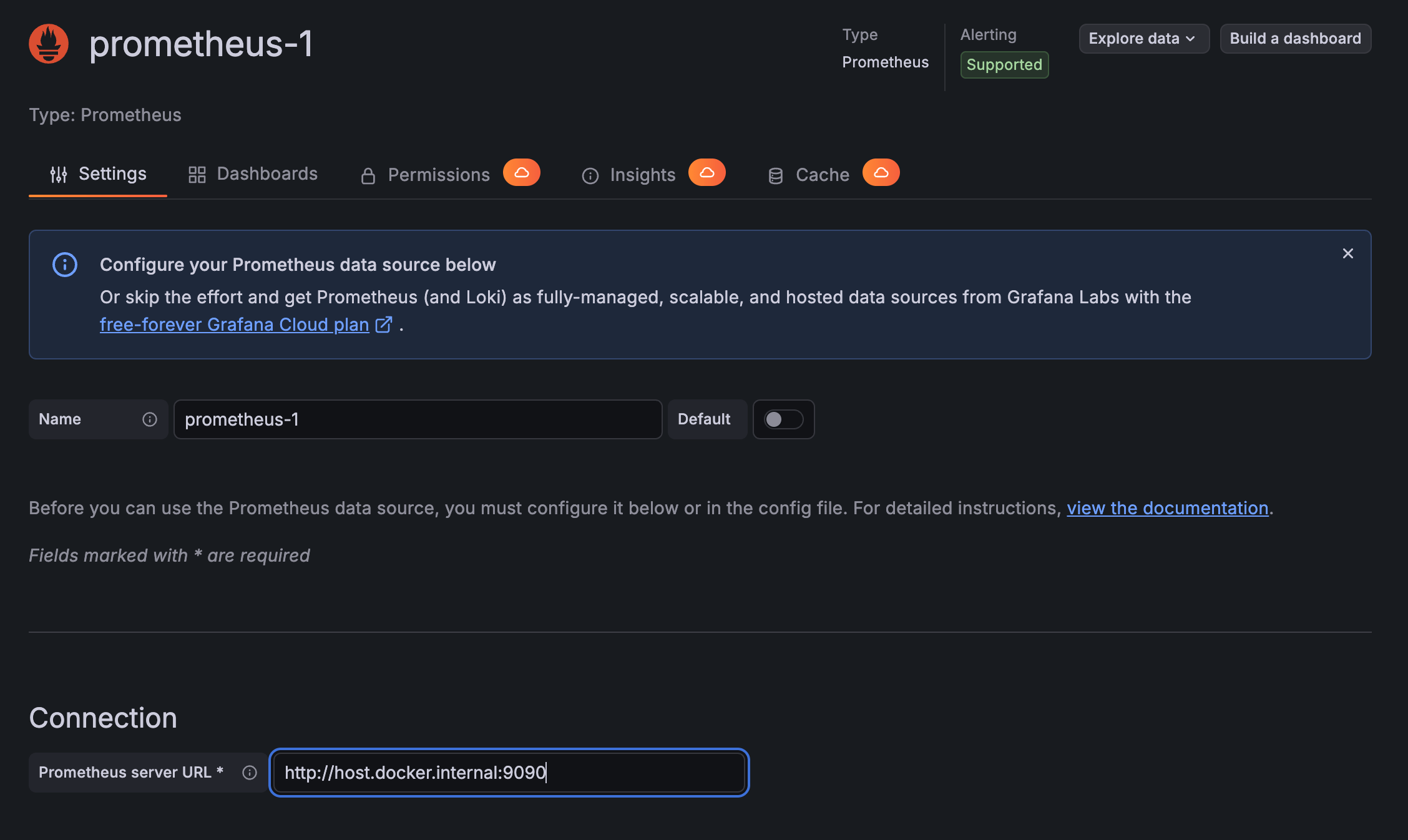

grafana/grafanaNext, we'll add Prometheus as a data source via Connections -> Data Sources in the Grafana interface. Since Prometheus runs locally through Docker, we should provide the connection URL as http://host.docker.internal:9090.

After connecting Grafana and Prometheus, we can create a simple dashboard to visualize pipeline metrics. This dashboard will allow us to observe the amount of data written to Snowflake over time.

In the Grafana interface, click Dashboards → New dashboard from the left menu and open a blank dashboard through “Add new panel.”

In the opened dashboard editing screen with Prometheus selected as the data source, add the following metric to the query field:

```plaintext
rate(materialized_out_docs_total[5m])
```

This query shows the average per-second rate at which documents were written to Snowflake over the last 5 minutes. It lets us clearly monitor changes in pipeline throughput over time. Once the graph renders successfully, we can save the panel with the “Save dashboard” option.

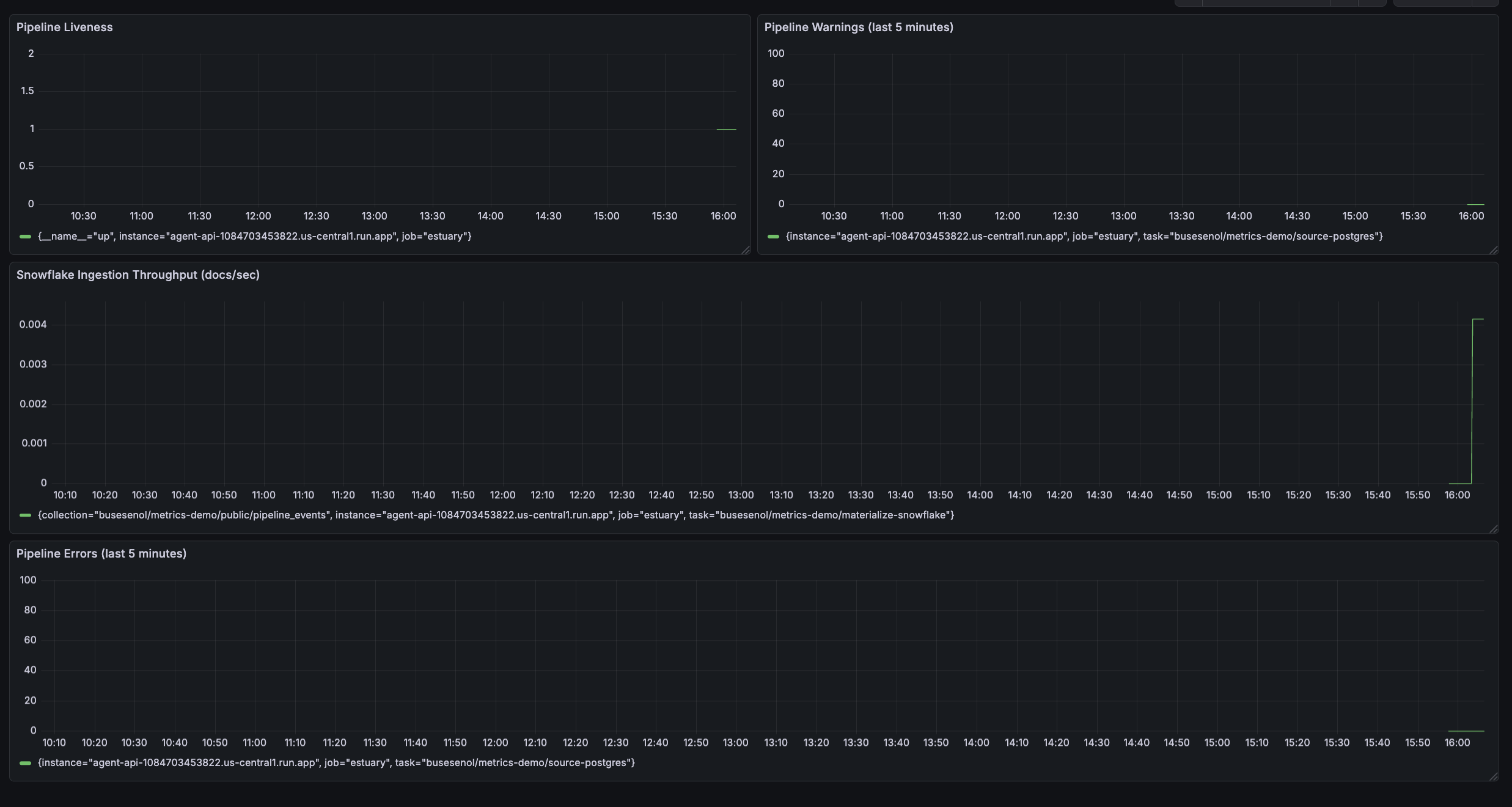
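To make the rate() query above more concrete, here is a simplified sketch of the idea behind it: take the counter samples that fall inside the window, add up the increases (treating any decrease as a counter reset), and divide by the elapsed time. This is an approximation for illustration; Prometheus additionally extrapolates to the window boundaries, which this sketch ignores. The function name `simple_rate` and the sample values are assumptions.

```python
def simple_rate(samples):
    """Approximate per-second increase of a counter over a window.

    samples: list of (unix_timestamp_seconds, counter_value), oldest first.
    A decrease between consecutive samples is treated as a counter reset,
    so the value after the reset counts as new increase.
    """
    if len(samples) < 2:
        return None  # at least two samples are needed
    increase = 0.0
    for (_, prev), (_, curr) in zip(samples, samples[1:]):
        increase += (curr - prev) if curr >= prev else curr
    elapsed = samples[-1][0] - samples[0][0]
    return increase / elapsed if elapsed > 0 else None


# Five one-minute scrapes of a hypothetical materialized_out_docs_total counter.
window = [(0, 1000), (60, 1300), (120, 1600), (180, 1900), (240, 2200)]
print(simple_rate(window))  # 5.0 documents per second
```

Because the result is averaged over the whole window, a single slow scrape barely moves the line, while a sustained slowdown shows up as a clear downward trend.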

When we apply the same steps for other critical metrics, we obtain a dashboard like the one below, where we can monitor the overall health of the pipeline from a single screen.

This dashboard allows us to simultaneously monitor the pipeline's live status, the data flowing into Snowflake, and any potential errors or warnings. Throughput metrics, in particular, enable us to detect performance drops early, even if the pipeline is still running. That way, we can take action before downstream analytics are affected.
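The same values Grafana charts can also be read programmatically, for example to feed a custom check. The sketch below uses Prometheus' instant-query endpoint (`/api/v1/query`) on the local instance started earlier. The helper names are hypothetical, and the sample payload is made-up data shaped like an instant-vector response, so the example runs without a live server.

```python
import json
import urllib.parse
import urllib.request

PROMETHEUS_URL = "http://localhost:9090"  # the local instance from this demo


def build_query_url(expr, base_url=PROMETHEUS_URL):
    """Build an instant-query URL for Prometheus' /api/v1/query endpoint."""
    return f"{base_url}/api/v1/query?" + urllib.parse.urlencode({"query": expr})


def extract_values(payload):
    """Return (labels, value) pairs from an instant-vector response."""
    if payload.get("status") != "success":
        raise ValueError(f"query failed: {payload.get('error', 'unknown error')}")
    return [
        (series["metric"], float(series["value"][1]))
        for series in payload["data"]["result"]
    ]


def query(expr):
    """Run the query against a live Prometheus (requires it to be running)."""
    with urllib.request.urlopen(build_query_url(expr), timeout=10) as response:
        return extract_values(json.load(response))


# Offline example with a made-up response payload.
sample_payload = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [{"metric": {"job": "estuary"}, "value": [1700000000, "4.2"]}],
    },
}
print(build_query_url("rate(materialized_out_docs_total[5m])"))
print(extract_values(sample_payload))
```

With Prometheus running, `query("rate(materialized_out_docs_total[5m])")` would return the current throughput per series, which could then be compared against a threshold of your choosing.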

Conclusion

Pipelines can quietly slow down or lose data freshness, and this is often noticed too late. That’s why the reliability of real-time data pipelines depends not only on whether the flow is running, but also on its overall health.

In this article, we’ve demonstrated how you can export health signals of a Snowflake pipeline using Estuary’s OpenMetrics API and make these metrics observable with Prometheus and Grafana. Teams can also monitor pipeline behavior in real time by tracking throughput, error, and liveness metrics.

If you’re looking for more advanced observability scenarios, Estuary’s OpenMetrics documentation is a great place to start.

FAQs

Can I use Grafana or Datadog with Estuary?

Why isn't a "running" status enough to confirm a pipeline is healthy?

About the author

AI Engineer and Data Scientist with a passion for building scalable machine learning systems and robust data architectures. I combine technical expertise with a strategic mindset to solve complex data challenges, focusing on real-time integration and predictive analytics.

![Grafana dashboard editor showing a time series visualization panel. The query editor displays a Prometheus query "rate(materialized_out_docs_total[5m])" with the data source set to Prometheus.](/static/aca1ac480636d19429a084ed69535ba0/259f0/Grafana_Dashboad_Prometheus_ed9b4e46b3.png)