Product analytics platforms like Amplitude are crucial for understanding how users interact with your digital products. Such platforms capture a wealth of user behavioral data, revealing how they engage with your products. But to truly capitalize on these insights, you need the analytical power to dig deeper and ask complex questions.

By integrating Amplitude with BigQuery, you can utilize Google’s specialized data warehousing service, which is designed to store massive datasets and perform complex analyses. You can conduct deeper investigations, combining behavioral insights with other business data for a more comprehensive analysis.

This holistic view allows you to reveal insights that would be difficult to discover without this integration. This article covers two methods to sync Amplitude to BigQuery.

What is Amplitude?

Amplitude Analytics is a powerful digital analytics platform that enables you to gain insights into customer behavior and optimize your digital products for growth. The platform stands out for its ability to provide real-time access to customer insights at every step of your journey, allowing your business to rapidly respond to customer needs and preferences.

One of Amplitude’s core capabilities is its Event Segmentation analysis. This feature allows you to see what different segments of customers are doing with your product by tracking specific events. The platform’s dashboards also provide a quick visual overview of user engagement and conversion paths. With its robust user analytics and host of supported integrations, Amplitude is an indispensable tool for organizations looking to leverage their marketing.

Key Features of Amplitude Analytics:

- A/B Testing: Amplitude’s A/B testing features, also known as Amplitude Experiment, provide a framework for conducting controlled experiments within digital products. It allows teams to test variations of their product experiences to determine which changes lead to better user outcomes, such as increased engagement or higher conversion rates.

- Cohort Analysis: Amplitude's Cohort Analysis enables you to group your customers based on shared characteristics or behavior over time. This feature is crucial for understanding how specific user segments engage with a product, their retention patterns, and how these behaviors impact the business's key metrics.

- Custom Event Tracking: Amplitude allows you to define and track custom events relevant to your specific goals. This feature enables detailed monitoring of user actions such as clicks, conversions, and feature usage, which is crucial for accurately measuring success.

What is BigQuery?

BigQuery is Google Cloud’s fully managed, serverless data warehouse. It is designed to provide fast analytics, allowing you to store and analyze petabytes of data with incredible speed. The platform uses standard SQL language for querying data, making it easily accessible and allowing for complex analysis to uncover valuable insights.

One of the standout features of BigQuery is its separate storage and compute resources.

This feature enables an extremely flexible pricing model in which you are charged only for queries rather than reserved hardware resources.

The platform’s serverless nature also automates infrastructure management and maintenance, allowing teams to focus on analyzing data rather than setting up and maintaining servers. All these features make BigQuery a compelling choice for businesses seeking a scalable and flexible data warehousing solution.

Key features of Google BigQuery include:

- Columnar Storage: BigQuery stores data in a columnar format, unlike traditional row-based storage. As a result, querying involves BigQuery only reading the relevant data from a few columns, dramatically improving speed and reducing costs.

- Automated Data Optimization: The platform’s internal architecture continuously analyzes data usage patterns and automatically optimizes storage and query performance. This relieves you of manually tuning tasks, allowing you to focus on analysis.

- Advanced Caching: BigQuery uses sophisticated caching mechanisms to store frequently accessed results. These cached results significantly speed up recurring queries and reduce the amount of data to be scanned.

2 Easy Amplitude to BigQuery Integration Methods

You can connect Amplitude to BigQuery in two main ways:

- Method 1: Amplitude Export Function – Use Amplitude’s native export feature to send data into BigQuery. This works for scheduled exports but has latency and setup limitations.

- Method 2: Estuary (No-Code, Real-Time) – Use Estuary’s pre-built Amplitude and BigQuery connectors to create a streaming pipeline. This delivers real-time sync with no manual coding or ongoing maintenance.

👉 The best choice depends on your needs: use Amplitude Export for simple batch transfers, or Estuary for automated, real-time analytics at scale.

Method 1: Using Amplitude’s Export Function to Integrate Data From Amplitude to BigQuery

Exporting data from Amplitude to BigQuery is an excellent solution for leveraging advanced analytics and making more informed data-driven decisions. Let’s look into the detailed steps that use Amplitude’s built-in tool to export data to BigQuery. However, before setting up the data pipeline, it is necessary to ensure the following prerequisites are in place.

- A BigQuery project that has the BigQuery Transfer service enabled.

- An Amplitude service account that allows data transfer to your Google Cloud project. To facilitate this transfer, the service account must have the BigQuery User and Data Editor roles enabled. Additionally, a custom role with the following permissions is also necessary.

- bigquery.transfers.get

- bigquery.transfers.update

- bigquery.datasets.update

- Once the service account has been created, you need to generate and download its key file in JSON format and upload it to Amplitude.

Step 1: Selecting BigQuery as the Destination

In Amplitude Data, go to the Catalog tab and select Destinations. Next, locate BigQuery in the Warehouse Destination section and click on it. This will take you to the configuration for setting up BigQuery Integration.

Step 2: Choosing the Data to Export

On the Getting Started tab, select the Amplitude data you want to export. Available options include Export events ingested today and moving forward, Export all merged Amplitude IDs, or both.

Step 3: Starting the Export Process

Select the BigQuery dataset where you want the exported Amplitude data to be stored. Then, upload the JSON service account key file you downloaded earlier and click on Next to start the test upload procedure.

Amplitude performs this test to ensure that the provided credentials are accurate. Once the test is complete without errors, click Finish to complete the procedure.

By following the above steps, you can easily transfer data from Amplitude to BigQuery using Amplitude’s Export Tool. However, this method has several limitations, including:

- Latency: It takes 2 hours for new Amplitude event data to become available for export to BigQuery via the API. This means you cannot access real-time data in BigQuery for analysis.

- Cross-Project View Limitation: The Export API does not support cross-project views as the view does not own any data. To export data from a cross-project view, you need to call the Export API for each project that originally collected the data. This means you cannot export the data from different projects together in one go; instead, you need to do it separately for each project, which can be time-consuming.

- Technical Expertise: Manually migrating data from Amplitude to BigQuery requires an in-depth technical understanding of the two platforms and the nuances associated with transferring data, such as unsupported data formats and incorrect mapping.

- Performance Overhead: The Manual method of loading data Amplitude to BigQuery requires consistent monitoring. Any updates to any platform may stop the entire export process, leading to data loss or inconsistency.

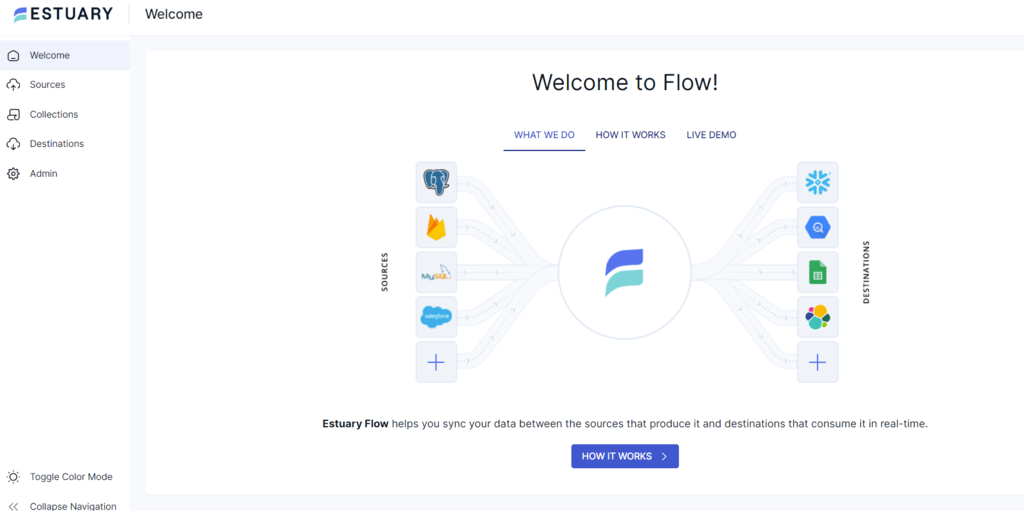

Method 2: Using an ETL Tool like Estuary for Amplitude to BigQuery Integration

No-code ETL (Extract, Transform, Load) tools are a great option for effortlessly integrating data from Amplitude to BigQuery without needing much technical expertise. Estuary is one such data integration tool that automates the data transfer process. However, before setting up the streaming pipeline, there are a few prerequisites that need to be in place.

Prerequisites

- An Amplitude project with an API Key and Secret Key

- BigQuery

- An Estuary account

Steps to Integrate Amplitude with BigQuery Using Estuary:

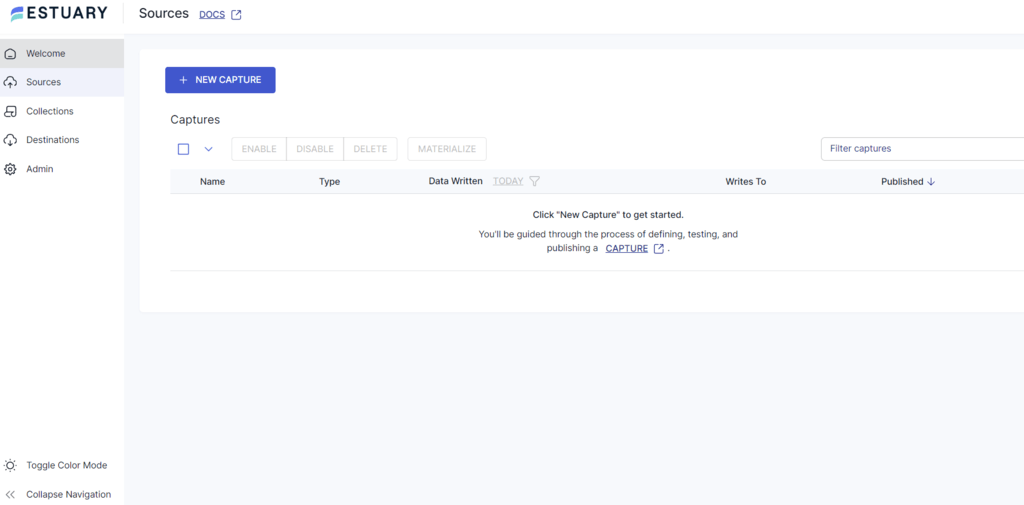

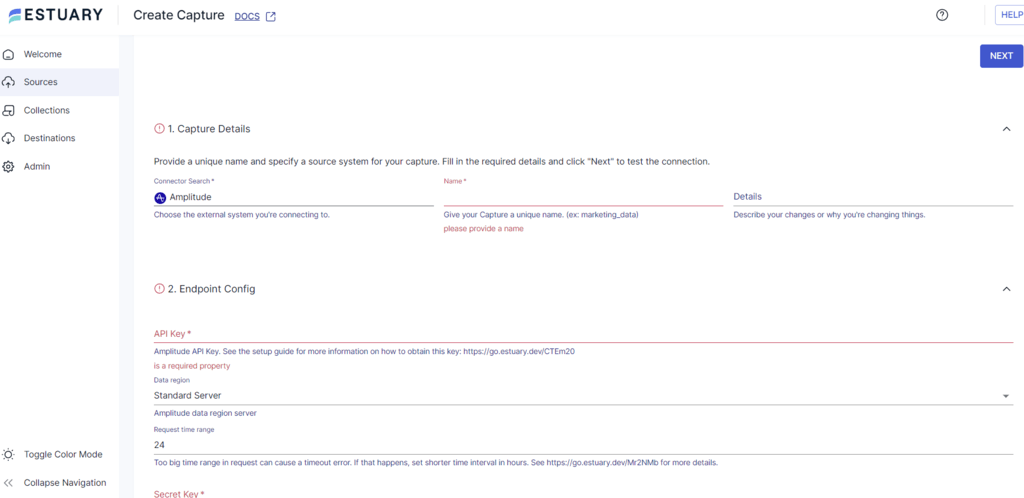

Step 1: Configure Amplitude as the Source

- Login to your Estuary account.

- Click on the Sources tab on the left navigation pane.

- Click the + NEW CAPTURE button.

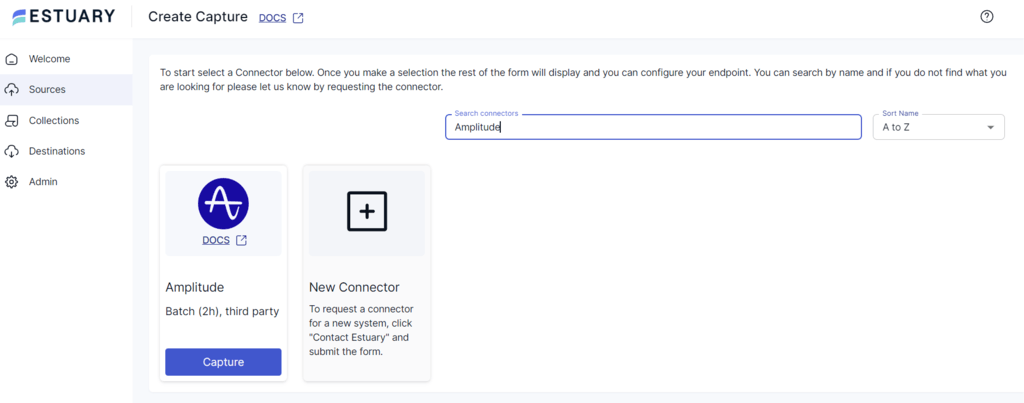

- Use the Search connectors field to find Amplitude connector and click its Capture button to configure it as a data source.

- On the Create Capture page, enter the mandatory details, such as Name, API Key, Secret Key, and Replication Start Date.

- Fill in the required fields and click NEXT > SAVE AND PUBLISH to transfer data from Amplitude to Flow collections.

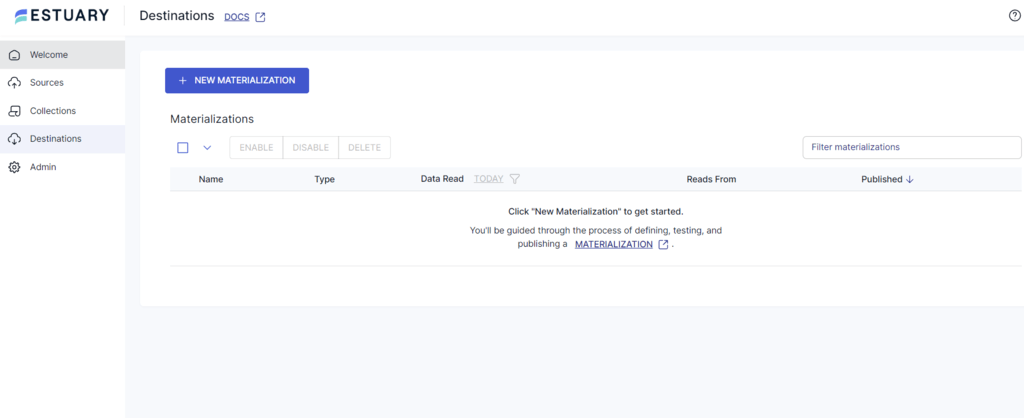

Step 2: Configure BigQuery as the Destination

- Once the source is set, click MATERIALIZE COLLECTIONS in the resulting pop-up window or the Destinations option on the dashboard.

- Click on the + NEW MATERIALIZATION button on the Destinations page.

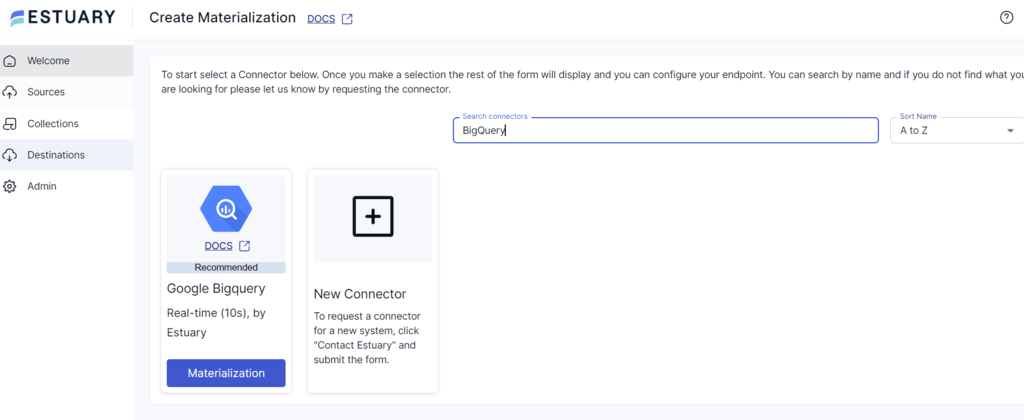

- Type BigQuery in the Search connectors box and click on the Materialization button of the connector when you see it in the search results.

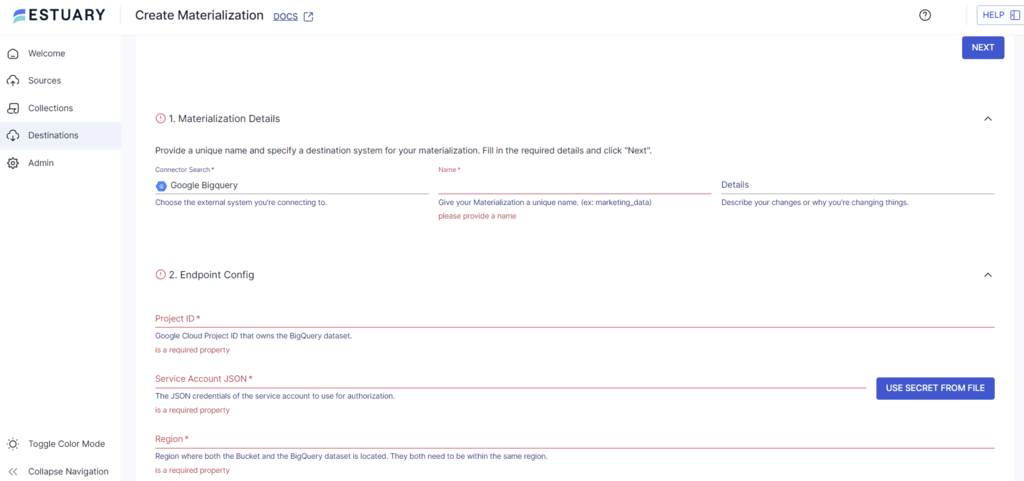

- On the Create Materialization page, enter the details like Name, Project ID, Service Account JSON, and Region.

- If your Flow collection of data from Amplitude isn’t filled automatically, you can add it manually using the Link Capture button in the Source Collections section.

- Click NEXT > SAVE AND PUBLISH. Estuary will start loading data from Flow collections into BigQuery tables.

Why Use Estuary for Amplitude to BigQuery?

- Multiple Data Sources and Destinations: Estuary provides 300+ ready-to-use connectors to extract data from various sources and load it into multiple destinations through a single data pipeline. This enhances productivity and simplifies the data transfer process.

- Real-time Data Processing with CDC: Flow leverages Change Data Capture (CDC) for real-time data processing. This helps maintain data integrity and reduces latency.

- Scalability: It can handle substantial data flows and support up to 7 GB/s. This attribute makes it highly scalable, which is especially useful when the data usage in Amplitude to BigQuery increases.

Use Cases: Amplitude and BigQuery Integration

The integration of Amplitude and BigQuery opens up a world of possibilities for data-driven decision-making. Here are some examples:

- Customer segmentation and personalization: Identify distinct user groups based on behavior and tailor experiences accordingly.

- Product performance analysis: Measure product usage, identify bottlenecks, and prioritize feature development.

- Marketing campaign optimization: Correlate marketing activities with user behavior to measure ROI and optimize campaigns.

- Churn prediction: Analyze user behavior patterns to identify at-risk customers and implement retention strategies.

By combining Amplitude's rich user behavior data with BigQuery's powerful analytics capabilities, businesses can uncover valuable insights, optimize operations, and drive growth. Depending on your stack, you may also want to explore syncing Amplitude with Snowflake or Redshift to support similar use cases with those warehouses.

Conclusion

Integrating data from Amplitude to BigQuery transforms how you leverage your product analytics for better conversions and sales. BigQuery's built-in analytical capabilities allow you to efficiently analyze petabytes of data to uncover insights that would otherwise be overlooked.

While Amplitude’s built-in export tool for transferring data is one migration approach, it comes with several limitations, such as the inability to perform real-time analytics and export limits.

A streamlined approach is to leverage no-code, real-time ETL tools like Estuary, which automate the migration process without the need for extensive technical knowledge. In the end, the method you opt for depends on your requirements and your level of expertise.

Estuary provides an extensive and growing list of connectors, robust functionalities, and a user-friendly interface. Sign up today to simplify and automate data migration from Amplitude to Bigquery.

FAQs

- Why should I integrate Amplitude with BigQuery?

Integrating data to BigQuery allows you to perform more complex analysis on your Amplitude data, combining it with other sources for deeper insights. BigQuery's scalability and SQL-based querying make it ideal for handling large datasets and analysis.

- Do I need coding experience to integrate data from Amplitude to BigQuery?

While some technical understanding is beneficial, you don't necessarily need to be a coder. There are several options available:

- Amplitude's export function: Requires basic technical knowledge to set up and manage.

- ETL tools like Estuary: Offer user-friendly interfaces and pre-built connectors, minimizing the need for coding.

- How long does the integration process usually take?

The time required for time depends on the amount of data and the chosen method. Using no-code ETL tools like Estuary can shorten the time required for migration compared to manual methods.

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.