Amazon S3 to Snowflake integration lets teams load files from S3 buckets into Snowflake tables for analytics, reporting, AI workflows, and data warehousing. You can do this with a managed data pipeline using Estuary or manually with Snowpipe and external stages.

For production workloads, Estuary is often the better fit when you need automated schema handling, repeatable file ingestion, support for multiple file formats, and low-latency movement into Snowflake. Snowpipe can work well when you already manage Snowflake ingestion directly and have the engineering resources to configure stages, pipes, permissions, file formats, monitoring, and error handling.

In this guide, we’ll compare both methods, explain when to use each, and show how to build an Amazon S3 to Snowflake pipeline with Estuary and Snowpipe.

Key Takeaways

You can load Amazon S3 data into Snowflake using a managed pipeline such as Estuary or a manual Snowpipe setup.

Estuary is better suited for teams that want a no-code or low-code pipeline with automated capture from S3 and materialization into Snowflake.

Snowpipe is useful when teams want to manage Snowflake-native ingestion directly, but it requires more configuration around stages, pipes, permissions, file formats, and monitoring.

Before production use, validate file format parsing, schema mapping, timestamp behavior, duplicate handling, delete behavior, and Snowflake warehouse cost settings.

Amazon S3 to Snowflake: What Changes During Ingestion?

Amazon S3 stores files as objects inside buckets, while Snowflake stores structured data in databases, schemas, and tables. Moving data from S3 to Snowflake is not just a file copy. You need to decide how files are discovered, parsed, loaded, deduplicated, and mapped into Snowflake tables.

Important ingestion decisions include:

| Area | What to decide |
|---|---|
| Bucket scope | Capture the full bucket or only a specific prefix |
| File formats | CSV, JSON, Avro, Parquet, Protobuf, or another supported format |
| Compression | gzip, zip, zstd, none, or automatic detection |
| Schema mapping | How file fields map to Snowflake columns |
| File ordering | Whether object keys are written in a predictable order |
| Duplicate handling | How repeated files or duplicate records should be handled |
| Updates/deletes | Whether the destination should use standard updates, delta updates, or soft-delete behavior |
| Cost controls | Snowflake warehouse sizing, auto-suspend, and sync schedule |

Amazon S3 to Snowflake Methods Compared

| Method | Best for | Setup effort | Latency | Operational burden | Notes |
|---|---|---|---|---|---|
| Estuary S3 to Snowflake pipeline | Teams that want managed ingestion, schema handling, repeatable syncs, and low-code setup | Low to medium | Low-latency or scheduled, depending on configuration | Lower | Uses S3 capture and Snowflake materialization connectors |
| Snowpipe | Teams that want Snowflake-native file ingestion and can manage stages, pipes, permissions, and monitoring | Medium to high | Near real time | Medium | Good Snowflake-native option, but requires more manual setup |
| COPY INTO | One-time or occasional bulk loads | Medium | Batch | Medium | Useful for manual backfills, not ideal for continuous ingestion |
| Custom scripts | Highly customized workflows | High | Depends on implementation | High | Requires ongoing maintenance, retries, logging, and monitoring |
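For the COPY INTO row in the table above, a one-time backfill can be a single statement. The sketch below assumes an external stage named external_stage (like the one created in the Snowpipe section of this guide) and a target table named target_table; the PATTERN regex and the gzip-compressed CSV files with one header row are illustrative assumptions, not requirements.

```sql
-- One-time backfill sketch; external_stage, target_table, and the file layout are assumptions.
COPY INTO target_table
  FROM @external_stage
  -- Only load gzip-compressed CSV files under the stage path
  PATTERN = '.*[.]csv[.]gz'
  FILE_FORMAT = (TYPE = CSV SKIP_HEADER = 1 COMPRESSION = GZIP)
  -- Skip rows that fail to parse instead of aborting the file
  ON_ERROR = 'CONTINUE';
```

COPY INTO keeps load metadata for 64 days and skips files it has already loaded, so re-running the same statement does not normally reload the same files.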

How to Load Data From Amazon S3 to Snowflake

There are several ways to load data from Amazon S3 to Snowflake. This guide walks through the two most popular:

- Automated Method: Using Estuary to Load Data from Amazon S3 to Snowflake

- Manual Method: Using Snowpipe for Amazon S3 to Snowflake Data Transfer

Automated Method: Using Estuary to Load Data from Amazon S3 to Snowflake

To reduce the operational work required by manual ingestion, you can use a managed data pipeline platform like Estuary. Estuary can capture files from Amazon S3, parse them into collections, and materialize those collections into Snowflake tables. It provides automated schema management, data deduplication, and real-time data transfer, and its wide range of built-in connectors lets you connect to data sources without writing code.

Here are some key features and benefits of Estuary:

- Simplified User Interface: Estuary's intuitive interface automates data capture, extraction, and updates, eliminating the need to write complex manual scripts and saving time and effort.

- Real-Time Data Integration: Estuary supports real-time data movement, CDC, and batch pipelines, helping teams keep Snowflake tables updated from sources such as Amazon S3.

- Schema Management: It continuously monitors the source data for schema changes and adapts the pipeline to accommodate them.

- Flexibility and Scalability: Estuary is designed to adapt to varying data integration tasks while scaling efficiently to handle increasing data volumes. Its low-latency performance can help teams keep Snowflake tables updated quickly, even as data volumes grow.

Let's explore the step-by-step process to set up an Amazon S3 to Snowflake data pipeline.

Estuary Amazon S3 Connector Requirements

Before creating the S3 capture, decide whether Estuary should read from the full bucket or from a specific prefix. Estuary’s Amazon S3 connector can capture from an entire S3 bucket or from a prefix within a bucket. The bucket or prefix must be either publicly accessible for anonymous reads or accessible through an AWS root user, IAM user, or IAM role.

You’ll need:

- AWS region.

- S3 bucket name.

- Optional prefix to limit the capture scope.

- Optional match keys regex to filter object keys.

- Authentication method: AWS access keys, IAM role, or anonymous access.

- Parser configuration if automatic format detection is not enough.

- File format and compression settings, if needed.

Estuary can automatically detect file type and shape in many cases, but you can explicitly configure parser settings for formats such as CSV, JSON, Avro, Protobuf, and W3C Extended Log.

Important: If your S3 bucket contains multiple file types, use prefixes to separate datasets before capture. Estuary currently supports S3 captures with data of a single file type, so a prefix helps ensure each capture reads a consistent format.

Step 1: Log in to your Estuary account. If you don't have one, register to try it for free.

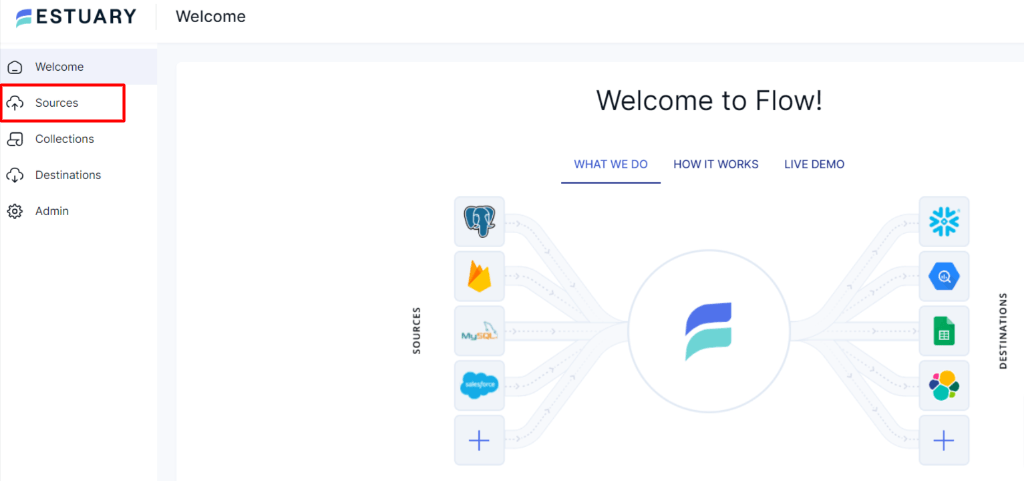

Step 2: After a successful login, you’ll be directed to Estuary’s dashboard. To set up the source end of the pipeline, simply navigate to the left-side pane of the dashboard and click on Sources.

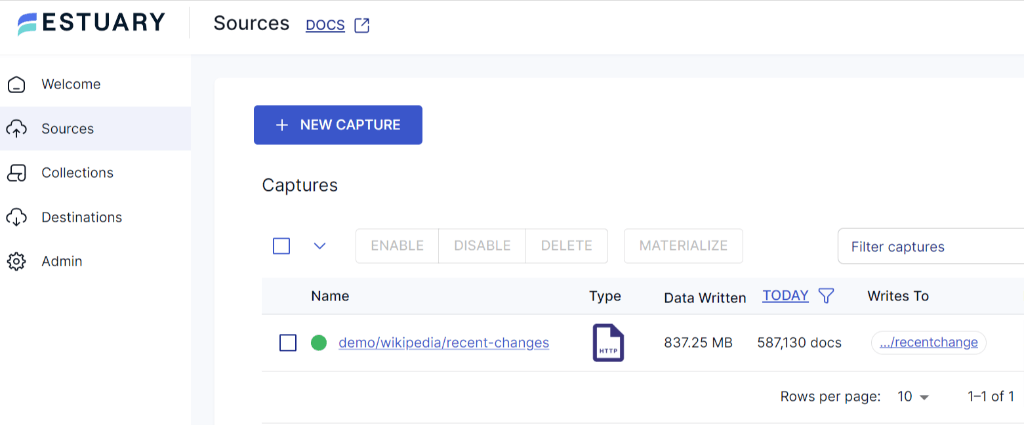

Step 3: On the Sources page, click on the + New Capture button.

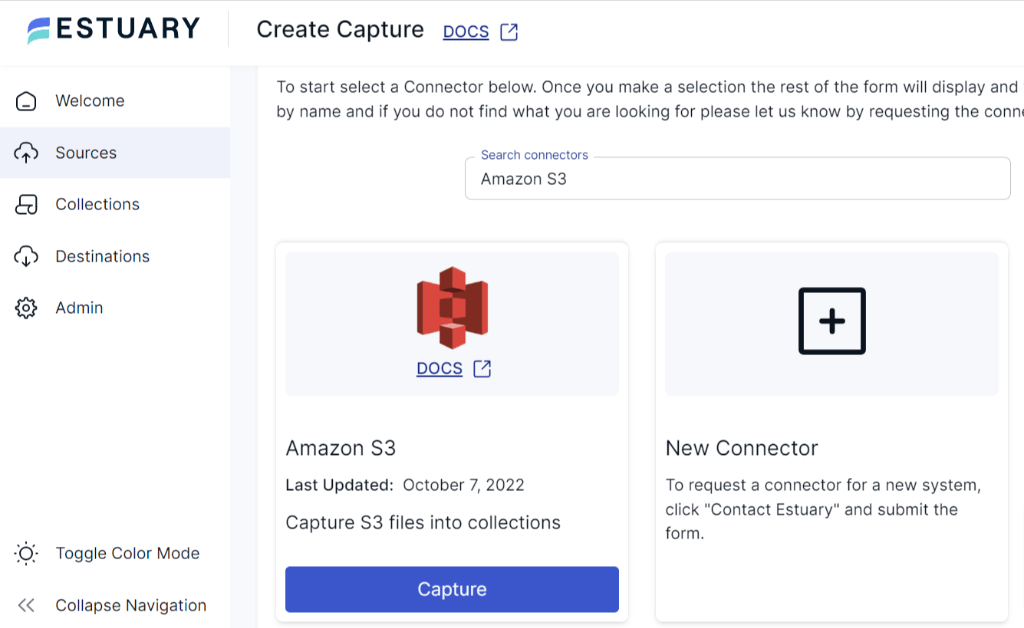

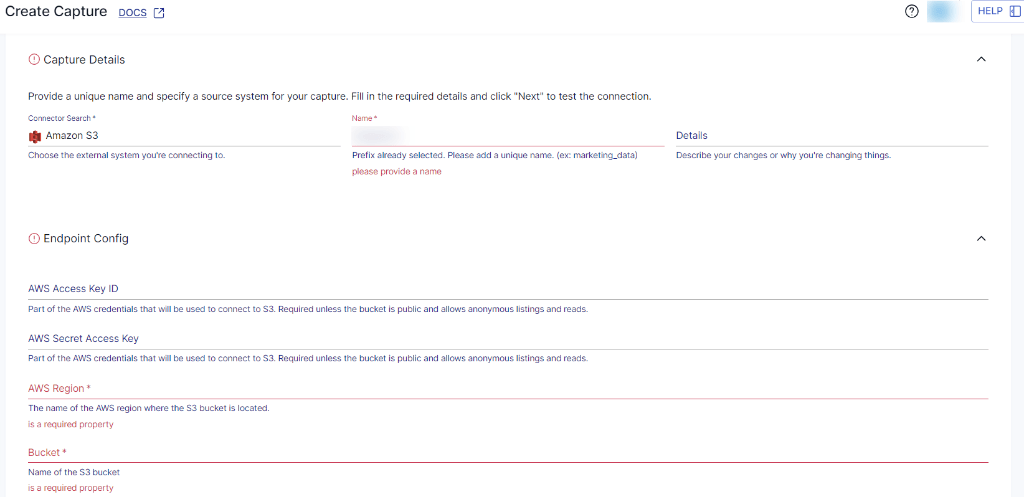

Step 4: You'll be redirected to the Create Capture page. Search for the Amazon S3 connector in the Search Connector box, then click its Capture button.

Step 5: On the Amazon S3 connector page, enter a Name for the connector and the endpoint config details like AWS access key ID, AWS secret access key, AWS region, and bucket name. Once you have filled in the details, click on Next > Save and Publish.

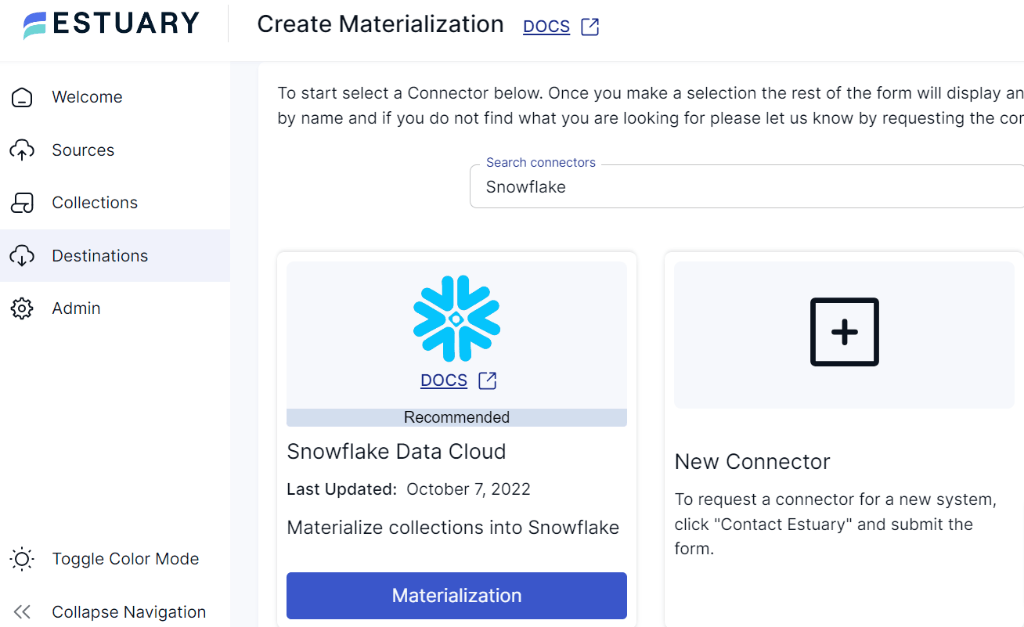

Step 6: Now, you need to configure Snowflake as the destination. On the left-side pane on the Estuary dashboard, click on Destinations. Then, click on the + New Materialization button.

Step 7: Search for Snowflake in the Search Connector box and click its Materialization button. This redirects you to the Snowflake materialization page.

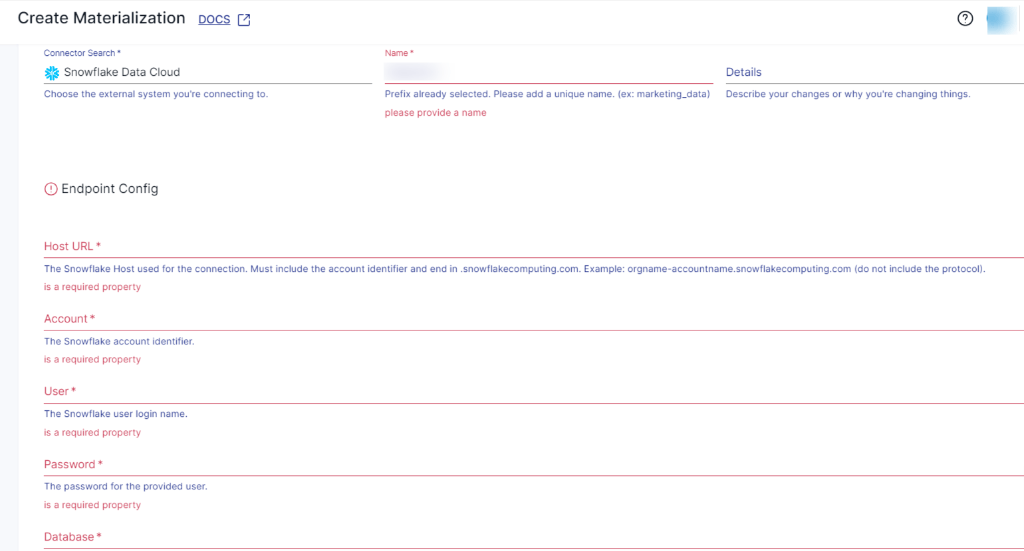

Step 8: On the Snowflake materialization connector page, enter a unique name for your materialization and provide the required Snowflake endpoint configuration.

You’ll need:

- Snowflake host URL, such as orgname-accountname.snowflakecomputing.com

- Database name

- Schema name

- Warehouse name

- Role, if required

- Timestamp type

- Snowflake user

- Private key for JWT/key-pair authentication

Estuary’s Snowflake connector currently uses JWT/key-pair authentication. Username and password authentication was deprecated in April 2025.

The Snowflake user must have QUOTED_IDENTIFIERS_IGNORE_CASE set to FALSE because Estuary requires case-sensitive quoted identifiers.
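A minimal sketch of the Snowflake-side setup for this materialization might look like the following. The role, user, warehouse, database, and schema names are hypothetical, and the RSA_PUBLIC_KEY value is a placeholder for the public key from a key pair you generate separately (for example with OpenSSL). The matching private key is what goes into the Estuary connector. Check Estuary's Snowflake connector documentation for the exact privileges it expects.

```sql
-- Hypothetical object names; run as a role that can create users and manage grants (e.g. SECURITYADMIN)
CREATE ROLE IF NOT EXISTS estuary_role;

CREATE USER IF NOT EXISTS estuary_user
  DEFAULT_ROLE = estuary_role
  DEFAULT_WAREHOUSE = estuary_wh
  -- Placeholder: the public key body, without the BEGIN/END lines
  RSA_PUBLIC_KEY = '<public_key_body>';

-- Estuary requires case-sensitive quoted identifiers for this user
ALTER USER estuary_user SET QUOTED_IDENTIFIERS_IGNORE_CASE = FALSE;

GRANT ROLE estuary_role TO USER estuary_user;
GRANT USAGE ON WAREHOUSE estuary_wh TO ROLE estuary_role;
GRANT USAGE ON DATABASE estuary_db TO ROLE estuary_role;
GRANT ALL ON SCHEMA estuary_db.estuary_schema TO ROLE estuary_role;

-- Confirm the key was registered: RSA_PUBLIC_KEY_FP should be populated
DESC USER estuary_user;
```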

Step 9: Once the required information is filled in, click Next, followed by Save and Publish. Estuary then begins capturing data from Amazon S3 and materializing it into Snowflake based on your configuration.

With the source capture and Snowflake materialization configured, new matching S3 files can be parsed into Estuary collections and loaded into Snowflake tables.

Snowflake Cost and Performance Tips

When loading S3 data into Snowflake, consider both freshness and warehouse cost. Snowflake compute is billed per second with a minimum of 60 seconds, and inactive warehouses do not incur compute charges. Estuary recommends setting the warehouse to auto-suspend after 60 seconds and configuring the materialization sync schedule based on your freshness requirements.
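Auto-suspend is a warehouse setting in Snowflake, while the sync schedule is configured on the Estuary materialization. A minimal sketch, assuming a hypothetical warehouse named ESTUARY_WH: the first statement applies the 60-second auto-suspend recommendation, and the second sums the credits that warehouse used per day over the last week. ACCOUNT_USAGE views can lag behind real time by a few hours, and querying them requires access to the shared SNOWFLAKE database.

```sql
-- Suspend after 60 seconds of inactivity and resume automatically when a load arrives
ALTER WAREHOUSE estuary_wh SET
  AUTO_SUSPEND = 60
  AUTO_RESUME = TRUE;

-- Daily credits used by the warehouse over the last 7 days
SELECT DATE_TRUNC('day', start_time) AS usage_day,
       SUM(credits_used) AS credits
FROM snowflake.account_usage.warehouse_metering_history
WHERE warehouse_name = 'ESTUARY_WH'
  AND start_time >= DATEADD('day', -7, CURRENT_TIMESTAMP())
GROUP BY usage_day
ORDER BY usage_day;
```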

For large datasets, choose collection keys carefully. Standard Snowflake updates can perform better when keys help Snowflake prune micro-partitions efficiently. Chronological keys, such as a date plus user ID, may perform better than using only a high-cardinality user ID.
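To compare candidate key columns on an existing large table, Snowflake's SYSTEM$CLUSTERING_INFORMATION function reports clustering depth and overlap for a given column list. The table analytics.events and the columns event_date and user_id below are hypothetical; a lower average_depth in the returned JSON generally means Snowflake can prune more micro-partitions when filtering on those columns.

```sql
-- Hypothetical table and columns: a chronological key versus a user-only key
SELECT SYSTEM$CLUSTERING_INFORMATION('analytics.events', '(event_date, user_id)');
SELECT SYSTEM$CLUSTERING_INFORMATION('analytics.events', '(user_id)');
```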

Standard Updates vs Delta Updates in Snowflake

Estuary’s Snowflake materialization connector supports both standard updates and delta updates. Standard updates are the default. Delta updates can reduce latency and cost for large datasets because Estuary does not need to query existing documents in the Snowflake table. However, delta updates are best when events have unique keys and when the resulting table does not need to be fully reduced.

Snowpipe Streaming is available for delta update bindings and is the lowest-latency method for loading data into Snowflake through Estuary.
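Because a delta-update table can hold several rows for the same key, queries that need the latest state can reduce at read time instead. A minimal sketch, assuming a hypothetical table events_delta with a key column event_id and a timestamp column updated_at:

```sql
-- Keep only the most recent row per key when reading a delta-update table
CREATE OR REPLACE VIEW events_latest AS
SELECT *
FROM events_delta
QUALIFY ROW_NUMBER() OVER (PARTITION BY event_id ORDER BY updated_at DESC) = 1;
```

This moves the reduction work from load time to query time, so it tends to suit tables that are written far more often than they are read.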

For detailed information on creating an end-to-end Data Flow, see Estuary's documentation.

Manual Method: Using Snowpipe for Amazon S3 to Snowflake Data Transfer

Before you load data from Amazon S3 to Snowflake using Snowpipe, you need to ensure that the following prerequisites are met:

- Access to Snowflake account.

- Snowpipe should be enabled in your Snowflake account.

- An active AWS account with access to the S3 bucket.

- AWS Access Key ID and AWS Secret Key for the S3 bucket where the data files are located.

- To access files within a folder in an S3 bucket, you need to set the appropriate permissions on both the S3 bucket and the specific folder. Snowflake requires s3:GetBucketLocation, s3:GetObject, s3:GetObjectVersion, and s3:ListBucket permissions.

- Well-defined schema design and target table in Snowflake.

The method of moving data from Amazon S3 to Snowflake includes the following steps:

- Step 1: Create an External Stage

- Step 2: Create a Pipe

- Step 3: Grant Permissions

- Step 4: Data Ingestion

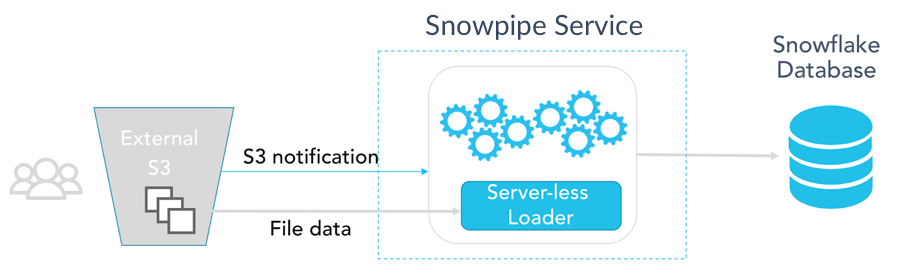

The below image represents the overall steps involved in this process.

Here's a high-level overview of the Amazon S3 to Snowflake migration process:

Step 1: Create an External Stage

- Log in to your Snowflake account using SnowSQL, Snowflake's command-line client, and create an external stage that serves as the source for Snowpipe. Snowpipe is a Snowflake feature that automatically loads files from a stage into Snowflake tables shortly after they arrive, enabling near real-time data processing and analysis.

- To create an external stage that points to the location in Amazon S3 where your data files are stored, use the following syntax:

```sql
-- External stage pointing at the S3 location that holds the data files
CREATE STAGE external_stage
  URL = 's3://s3-bucket-name/folder-path/'
  CREDENTIALS = (
    AWS_KEY_ID = 'aws_access_key_id'
    AWS_SECRET_KEY = 'aws_secret_access_key'
  )
  FILE_FORMAT = (TYPE = CSV);
```

Replace s3-bucket-name and folder-path with your bucket name and folder path, and aws_access_key_id and aws_secret_access_key with your AWS credentials.
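As an alternative to embedding AWS keys in the stage, Snowflake's documentation recommends a storage integration, which lets Snowflake assume an IAM role. The sketch below also creates a named file format, the kind of object the FILE FORMAT grant in Step 3 refers to. The integration name, role ARN (123456789012 is AWS's placeholder account ID), file format name, and the assumption that CSV files have one header row are all illustrative. Creating an integration requires ACCOUNTADMIN or the CREATE INTEGRATION privilege, and after DESC INTEGRATION you still need to add the returned IAM user ARN and external ID to the IAM role's trust policy in AWS.

```sql
-- Hypothetical names and ARN; adjust to your account
CREATE STORAGE INTEGRATION s3_integration
  TYPE = EXTERNAL_STAGE
  STORAGE_PROVIDER = 'S3'
  ENABLED = TRUE
  STORAGE_AWS_ROLE_ARN = 'arn:aws:iam::123456789012:role/snowflake-s3-role'
  STORAGE_ALLOWED_LOCATIONS = ('s3://s3-bucket-name/folder-path/');

-- Shows STORAGE_AWS_IAM_USER_ARN and STORAGE_AWS_EXTERNAL_ID for the IAM trust policy
DESC INTEGRATION s3_integration;

-- Named file format, assuming CSV files with a single header row
CREATE OR REPLACE FILE FORMAT csv_format
  TYPE = CSV
  SKIP_HEADER = 1;

-- Stage that authenticates through the integration instead of stored keys
CREATE OR REPLACE STAGE external_stage
  URL = 's3://s3-bucket-name/folder-path/'
  STORAGE_INTEGRATION = s3_integration
  FILE_FORMAT = csv_format;
```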

Step 2: Create a Pipe

- Create a pipe in Snowflake that specifies how data will be ingested into the target table from the external stage.

```sql
-- Load new files from the stage into the target table as they arrive
CREATE OR REPLACE PIPE pipe_name
  AUTO_INGEST = TRUE
AS
COPY INTO target_table
  FROM @external_stage
  FILE_FORMAT = (TYPE = CSV);
```

Replace pipe_name and target_table with the names of your pipe and target table in Snowflake.

Step 3: Grant Permissions

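- Grant the necessary privileges to the role that will use Snowpipe to load data.

```sql
GRANT USAGE ON DATABASE db_name TO ROLE snowpipe_role;
GRANT USAGE ON SCHEMA db_name.schema_name TO ROLE snowpipe_role;
GRANT USAGE ON STAGE external_stage TO ROLE snowpipe_role;
-- Only needed if you use a named file format object instead of an inline FILE_FORMAT
GRANT USAGE ON FILE FORMAT file_format TO ROLE snowpipe_role;
-- Pipe privileges include OPERATE and MONITOR (USAGE and MODIFY do not apply to pipes)
GRANT OPERATE, MONITOR ON PIPE pipe_name TO ROLE snowpipe_role;
GRANT INSERT, SELECT ON TABLE target_table TO ROLE snowpipe_role;
```

Replace db_name, schema_name, snowpipe_role, file_format, pipe_name, and target_table with the appropriate names in your Snowflake environment.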

With AUTO_INGEST = TRUE, Snowpipe can load new files from the external stage as they arrive, using cloud event notifications when configured correctly.
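AUTO_INGEST only takes effect once the S3 bucket sends event notifications to the SQS queue that Snowflake creates for the pipe. A short sketch of the Snowflake-side commands, using the pipe_name placeholder from Step 2: DESC PIPE shows the queue ARN in its notification_channel column, which you then set as the destination of an object-created event notification on the bucket in AWS. That AWS configuration is not shown here.

```sql
-- notification_channel holds the SQS queue ARN for the S3 event notification
DESC PIPE pipe_name;

-- Returns JSON including the pipe's executionState and pendingFileCount
SELECT SYSTEM$PIPE_STATUS('pipe_name');

-- Queue files that were already staged before notifications were configured
-- (Snowflake only considers files staged within the last 7 days)
ALTER PIPE pipe_name REFRESH;
```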

Step 4: Data Ingestion

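Once files start arriving, you can monitor the ingestion process and view load errors with the INFORMATION_SCHEMA.COPY_HISTORY table function, which records Snowpipe loads as well as COPY INTO loads, including each file's status and first error message.

```sql
-- Per-file load status, row counts, and first error message for the last 24 hours
SELECT file_name, status, row_count, first_error_message, last_load_time
FROM TABLE(INFORMATION_SCHEMA.COPY_HISTORY(
  TABLE_NAME => 'TARGET_TABLE',
  START_TIME => DATEADD(hours, -24, CURRENT_TIMESTAMP())));
```

Replace TARGET_TABLE with the name of your target table. That's it! You've successfully set up Amazon S3 to Snowflake data transfer using Snowpipe.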

While Snowpipe is a powerful tool for near real-time data ingestion, it does have some limitations. Snowpipe keeps load metadata so the same file is not normally loaded twice, but it does not deduplicate records: duplicate rows in the source files, or the same data arriving under a different file name, will be loaded into the target table. In addition, Snowpipe and COPY INTO require file format configuration, and some file types or nested structures may require additional setup or preprocessing before they load cleanly into Snowflake tables.
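One common way to handle duplicates with Snowpipe is to treat the pipe's target as a raw landing table and MERGE it into a deduplicated table. This is a general Snowflake SQL pattern, not a Snowpipe feature. The tables raw_orders and orders and the columns order_id, status, and updated_at are hypothetical, and you would typically run the statement on a schedule, for example from a Snowflake task.

```sql
-- Hypothetical tables: raw_orders (loaded by Snowpipe) and orders (deduplicated by order_id)
MERGE INTO orders AS t
USING (
  SELECT order_id, status, updated_at
  FROM raw_orders
  -- Keep one row per key so MERGE never sees duplicate source rows
  QUALIFY ROW_NUMBER() OVER (PARTITION BY order_id ORDER BY updated_at DESC) = 1
) AS s
ON t.order_id = s.order_id
-- Only overwrite when the incoming row is newer
WHEN MATCHED AND s.updated_at > t.updated_at THEN
  UPDATE SET status = s.status, updated_at = s.updated_at
WHEN NOT MATCHED THEN
  INSERT (order_id, status, updated_at) VALUES (s.order_id, s.status, s.updated_at);
```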

Amazon S3 to Snowflake Validation Checklist

Before relying on your S3 to Snowflake pipeline in production, validate:

- The correct S3 bucket and prefix are being captured.

- File format detection is correct, or parser settings are explicitly configured.

- Compression settings are correct.

- CSV headers, delimiters, quote characters, and line endings are parsed correctly.

- Snowflake tables are created in the expected database and schema.

- Timestamp columns use the expected Snowflake timestamp type.

- Duplicate files or duplicate records are handled according to your business rules.

- New files in S3 appear in Snowflake within the expected freshness window.

- Row counts and sample records match between S3 files and Snowflake tables.

- Snowflake warehouse auto-suspend and sync schedule are configured for cost control.

- Error handling and monitoring are in place for malformed files or failed loads.
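For a Snowpipe-based pipeline, several of these checks can be scripted. The sketch below assumes hypothetical names: a stage external_stage, a named file format csv_format, a table TARGET_TABLE, and a pipe PIPE_NAME. The row-count comparison only lines up if the table is loaded exclusively from those files and nothing has been deleted.

```sql
-- 1. Row counts per file, read directly from S3 through the stage
SELECT METADATA$FILENAME AS file_name, COUNT(*) AS rows_in_file
FROM @external_stage (FILE_FORMAT => 'csv_format')
GROUP BY METADATA$FILENAME
ORDER BY file_name;

-- 2. Total rows in the target table, to compare with the sum of the counts above
SELECT COUNT(*) AS rows_in_table FROM target_table;

-- 3. Timestamp columns and the Snowflake type each one was created as
SELECT column_name, data_type
FROM information_schema.columns
WHERE table_name = 'TARGET_TABLE'
  AND data_type LIKE 'TIMESTAMP%';

-- 4. Load errors recorded for the pipe in the last 24 hours
SELECT *
FROM TABLE(INFORMATION_SCHEMA.VALIDATE_PIPE_LOAD(
  PIPE_NAME => 'PIPE_NAME',
  START_TIME => DATEADD(hours, -24, CURRENT_TIMESTAMP())));
```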

Customer Proof: Real-Time Analytics with Estuary

Estuary is used by teams that need faster, more cost-effective data movement for analytics and operational reporting. Glossier used Estuary to reduce data costs by 50% and unlock real-time supply chain and marketing analytics, while Xometry saved 60% on data integration with a secure private deployment.

While these examples are not specific to S3-to-Snowflake ingestion, they show how teams use Estuary to reduce pipeline cost and operational burden compared with manually maintained ingestion workflows.

Conclusion

Amazon S3 to Snowflake integration can be handled with a managed pipeline, Snowpipe, COPY INTO, or custom scripts. The right method depends on your file formats, ingestion frequency, schema complexity, latency requirements, and how much operational work your team wants to manage.

Snowpipe is a strong Snowflake-native option for teams that are comfortable configuring stages, pipes, permissions, file formats, monitoring, and error handling. COPY INTO can work for one-time or occasional bulk loads, but it is not ideal for continuous ingestion.

Estuary is a better fit when you want a managed S3 to Snowflake pipeline with automated file capture, parser configuration, schema handling, repeatable syncs, and Snowflake materialization. It is especially useful when teams want to reduce manual ingestion work while keeping Snowflake tables updated from S3.

FAQs

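Can Estuary load files from S3 into Snowflake?

Yes. Estuary's Amazon S3 capture connector reads files from a bucket or prefix and parses them into collections, and the Snowflake materialization connector loads those collections into Snowflake tables.

Is Snowpipe enough for S3 to Snowflake ingestion?

It can be, if your team is comfortable configuring and maintaining stages, pipes, permissions, file formats, monitoring, and error handling. Snowpipe does not deduplicate records, so duplicate handling must be designed separately.

How do I control Snowflake costs when loading S3 data?

Set the warehouse to auto-suspend after 60 seconds, choose a sync schedule that matches your freshness requirements, pick collection keys that help Snowflake prune micro-partitions, and consider delta updates for large datasets with unique event keys.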

About the author

Jeffrey is a data engineering professional with over 15 years of experience, helping early-stage data companies scale by combining technical expertise with growth-focused strategies. His writing shares practical insights on data systems and efficient scaling.